Rob Cain

@cain_rob

A Periodic Table of Swearing, Science Tidbits & Middle Class Picnic Chit Chat.

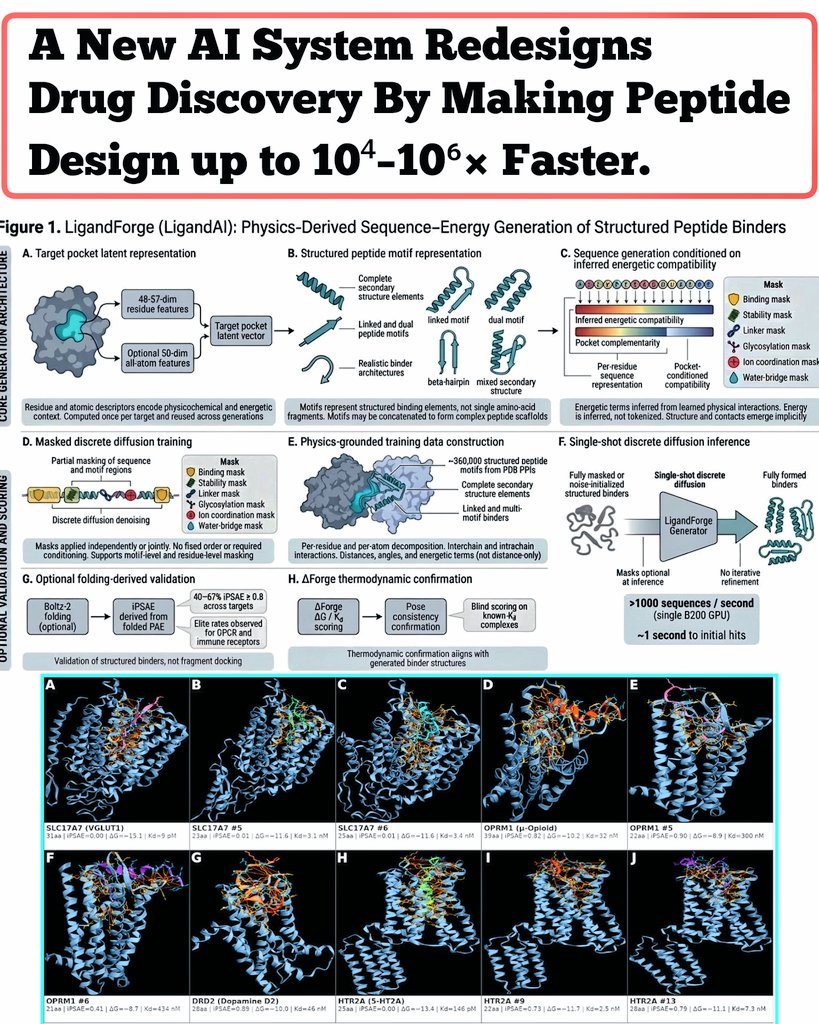

AI just broke drug discovery speed. Scientists have developed a new AI system called LigandForge that can design protein-binding peptides up to 10,000× to 1,000,000× faster than current methods 👀

Researchers used a single-pass discrete diffusion model that generates peptide sequences directly from a protein binding pocket, skipping slow steps like structure prediction and docking.

In a new study published on bioRxiv, the researchers evaluated the system across ~150 protein targets and compared it to methods like BindCraft and BoltzGen.

Results were HUGE 👀 LigandForge achieved ~83% correlation with experimental binding affinity data, showing strong agreement with real-world measurements. It also generated 8,556 diverse peptide candidates, indicating the model isn't just copying known structures but exploring new designs.

NOTE: Peptides matter because they can control proteins directly, and proteins control almost everything in disease. Right now, we struggle to target many of them, which slows down cures. If we learn to design peptides fast with AI, we can quickly create precise treatments for things like cancer and targeted drug delivery, turning years of trial-and-error into a much faster process.

We are living in the fastest SciTech acceleration era.