✈️ Heading to ICLR 🇧🇷 Apr 22–27.

Come to our oral on Fri, Apr 24 (10:30 AM–12:00 PM, Room 202 A/B) or find me at our poster (3:15 PM–5:45 PM, P3-#521).

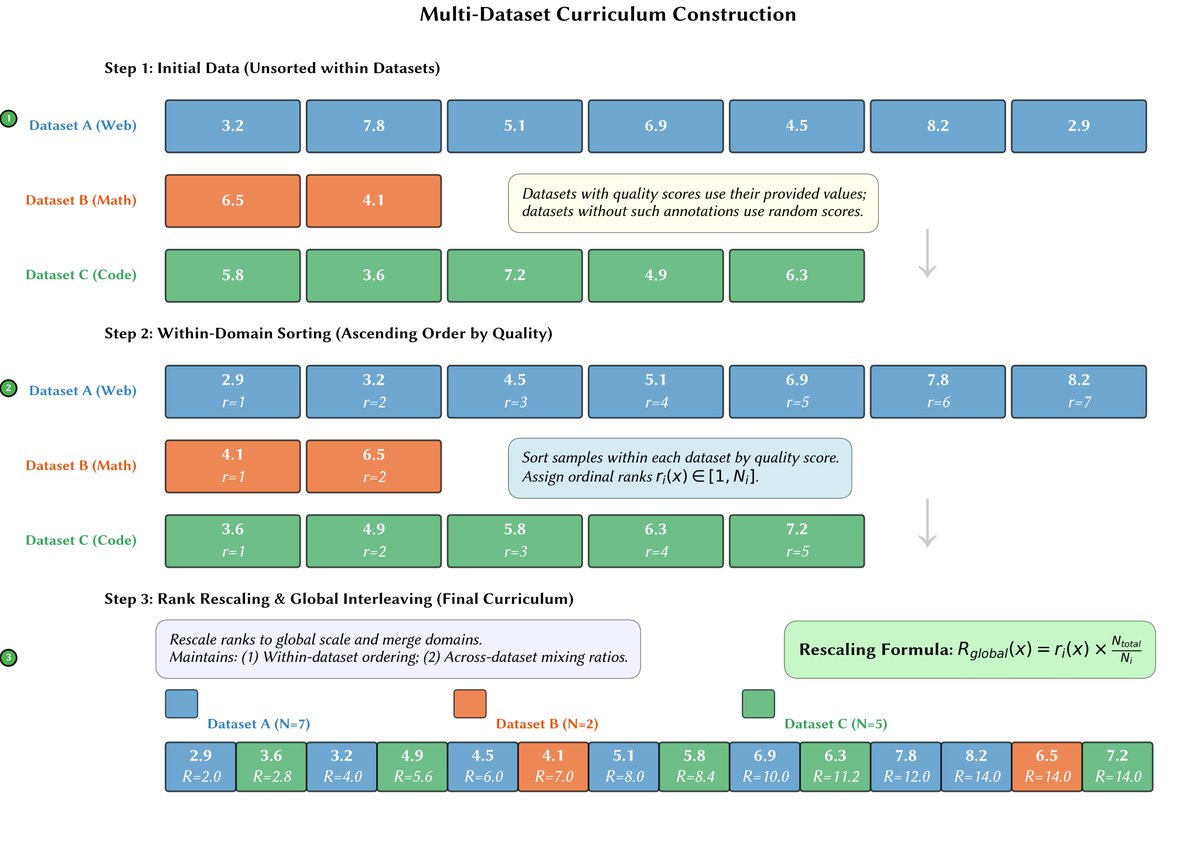

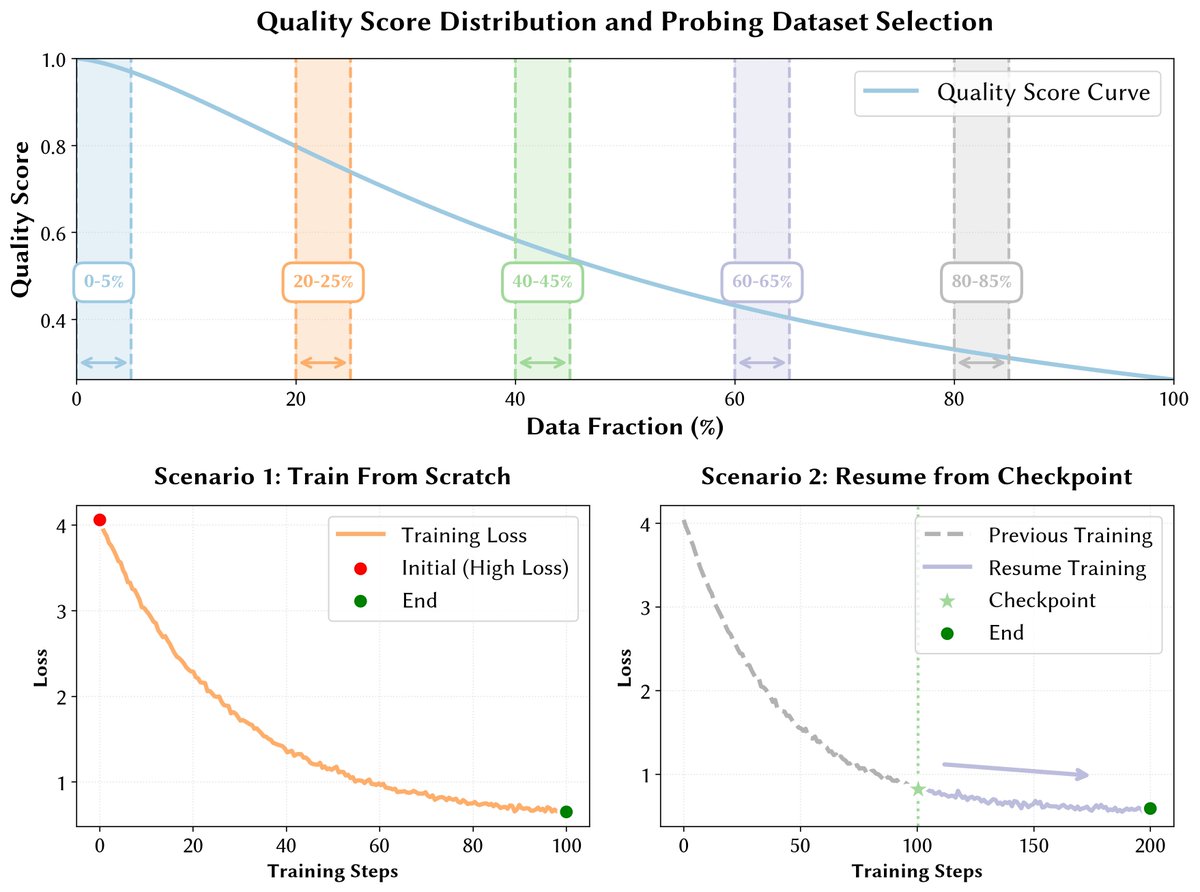

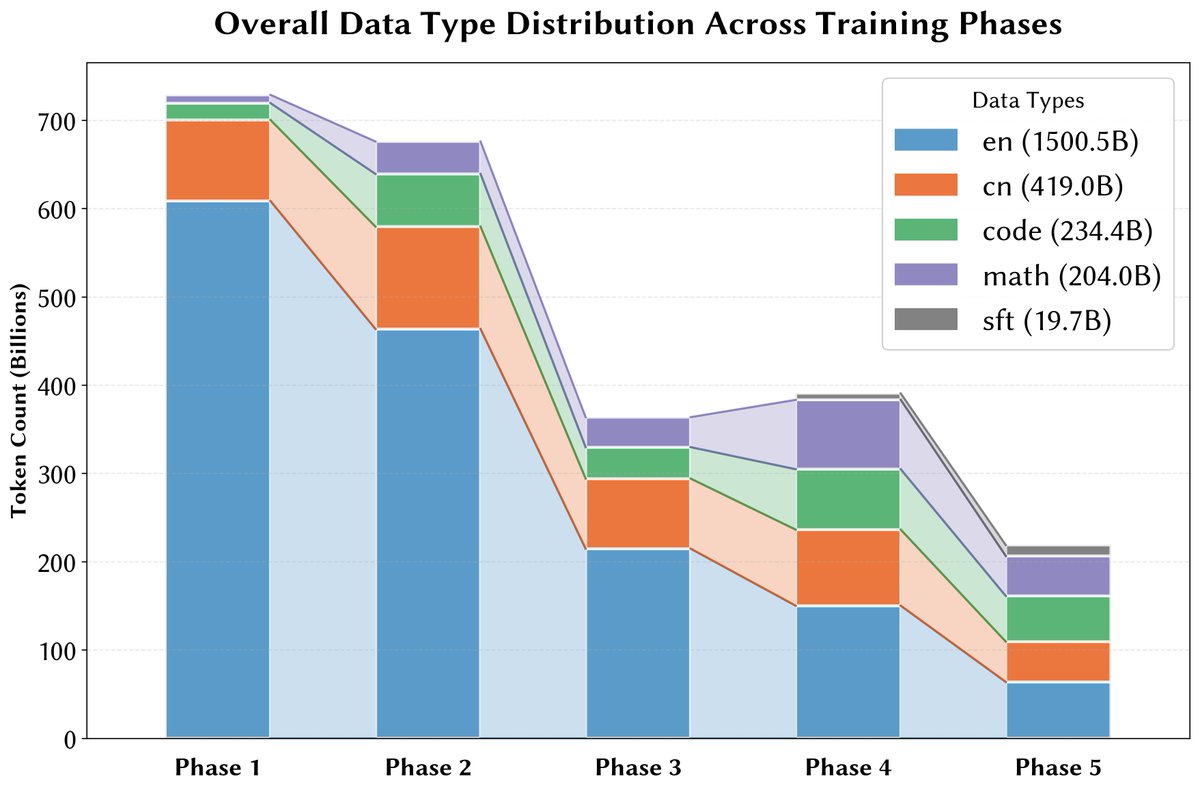

We study why LR decay can hurt curriculum-based LLM pretraining — and how to fix it.

Happy to chat!
