Pentagon: Anthropic's foreign workforce poses security risks trib.al/mxJqnc8

Raphael Lee

@aidan_mclau @scrollvoid This isn't true. Anthropic hasn't offered a "helpful-only" model without safeguards for NatSec use. Claude Gov is a custom model with extra training, including technical safeguards. (We've also had FDEs and researchers implementing it, and we run our own classifier stack.)

To all the new Claude users today — welcome!

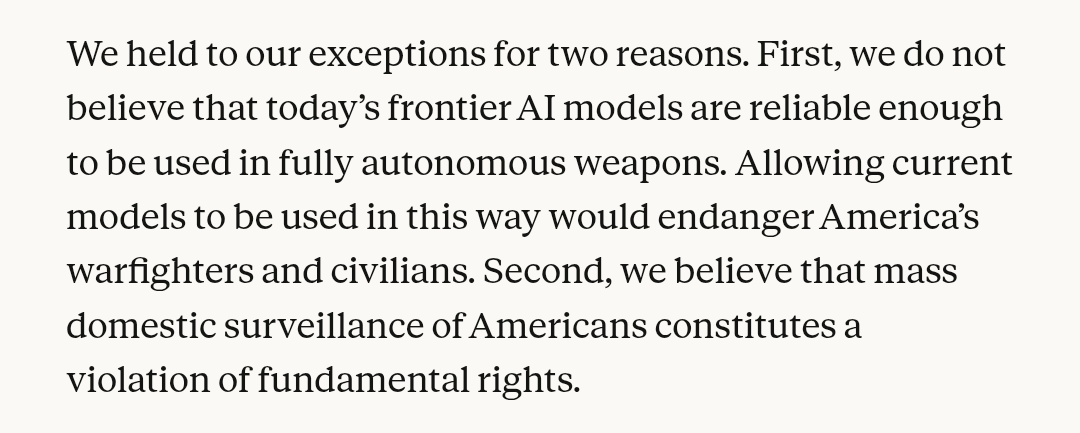

For those wondering how mass domestic surveillance could be consistent with "all lawful use" of AI models, I recommend a declassified report from the ODNI on just how much can be done with commercially available information (CAI): "...to identify every person who attended a protest"

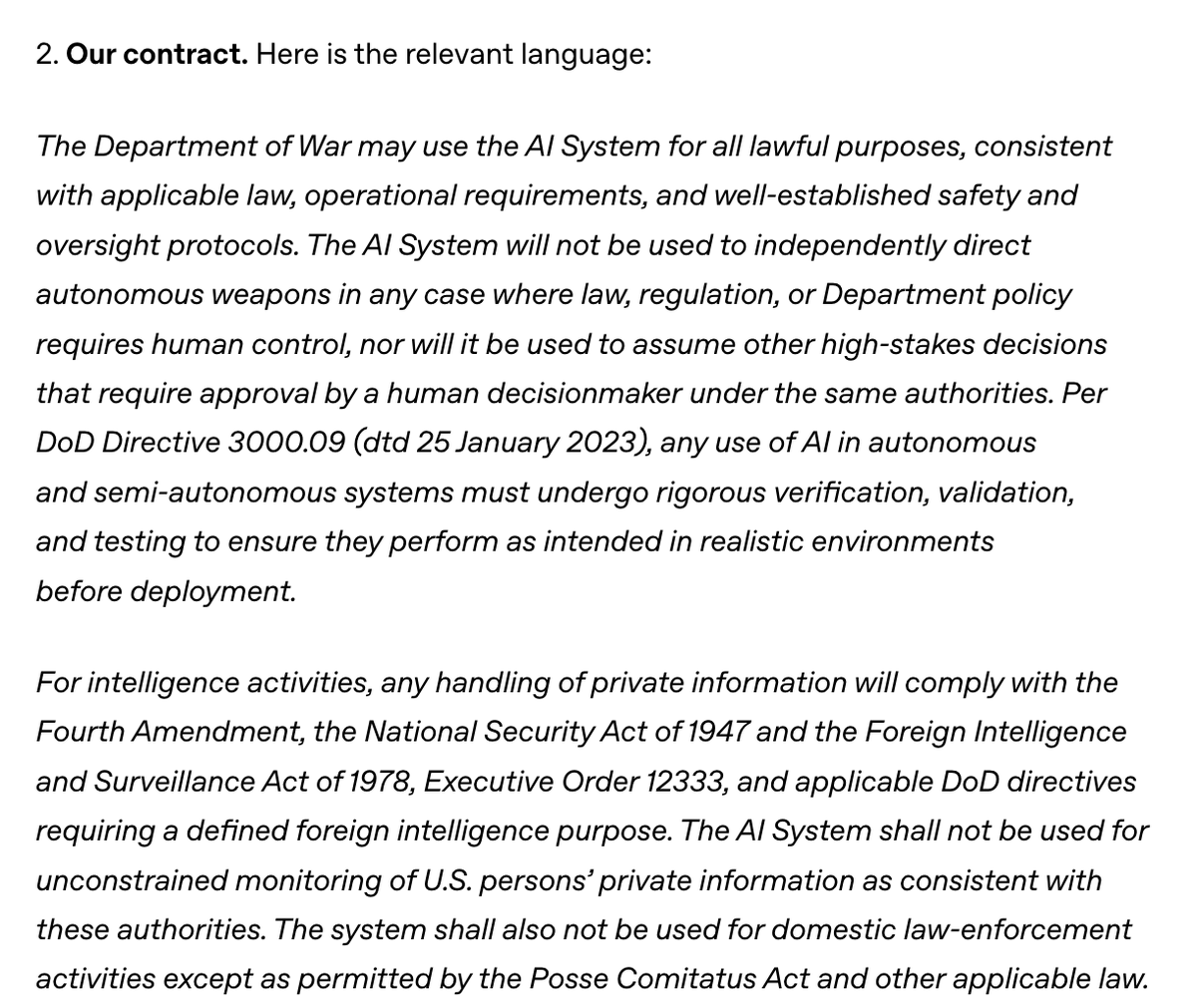

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

The Adolescence of Technology: an essay on the risks posed by powerful AI to national security, economies and democracy—and how we can defend against them: darioamodei.com/essay/the-adol…