M. Alex O. Vasilescu

6.2K posts

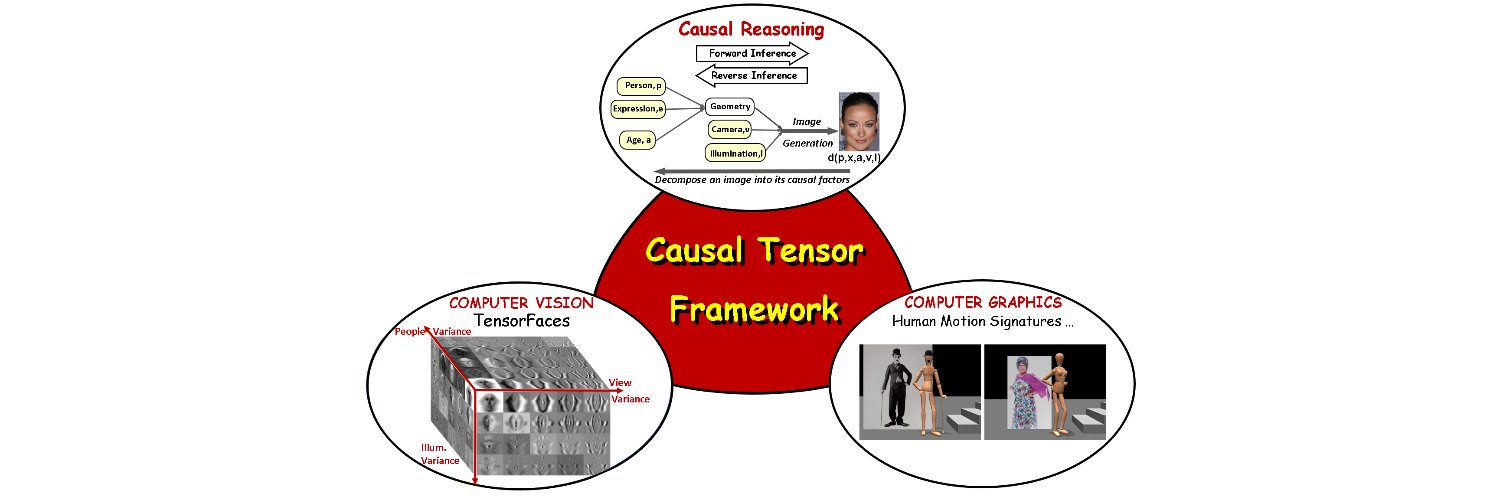

@AlexTensor

Developing #causal #tensor framework - TensorFaces, Human Motion Signatures | Alumna @MIT, @UofT | #womenwhocode

Terence Tao on AI in Math. AI can synthesize a million papers and brute-test ideas. Humans can check just 5 examples and see the pattern. But as systems move toward world models, causal reasoning, and active learning, this efficiency gap will narrow.

In system theory, it is called "linearization"... which has been studied and used for decades. Honestly, folks, there is no need to invent or introduce any new terminology. Remember, there is rarely anything new under the sun...

What is a good latent space for world modeling and planning? 🤔 Inspired by the perceptual straightening hypothesis in human vision, we introduce temporal straightening to improve representation learning for latent planning. 📑: agenticlearning.ai/temporal-strai…

hypothetically, if yann lecun fundraised for a new AGI lab for himself... how much would it be worth?

This is the AI that will be taking our jobs