AI

@DeepLearn007

Imtiaz Adam CS #AI Postgrad |#Tech #Strategy #MachineLearning #DeepLearning | #RL #Agentic | #LLM Liberal | #GenAI| MBA alum @morganstanley @LBS @Columbia_Biz

Let's hear what MiMo TTS can actually do 🔊 Most TTS reads text. MiMo performs it — with full control over emotion, speed, tone, and speaking style. Happy. Angry. Slow. Gentle. Dongbei dialect. Even Monkey King.🐒 Same model, one prompt away. 🎧 1/n

🚨🇮🇷 Video from Tehran shows a massive explosion in the distance.

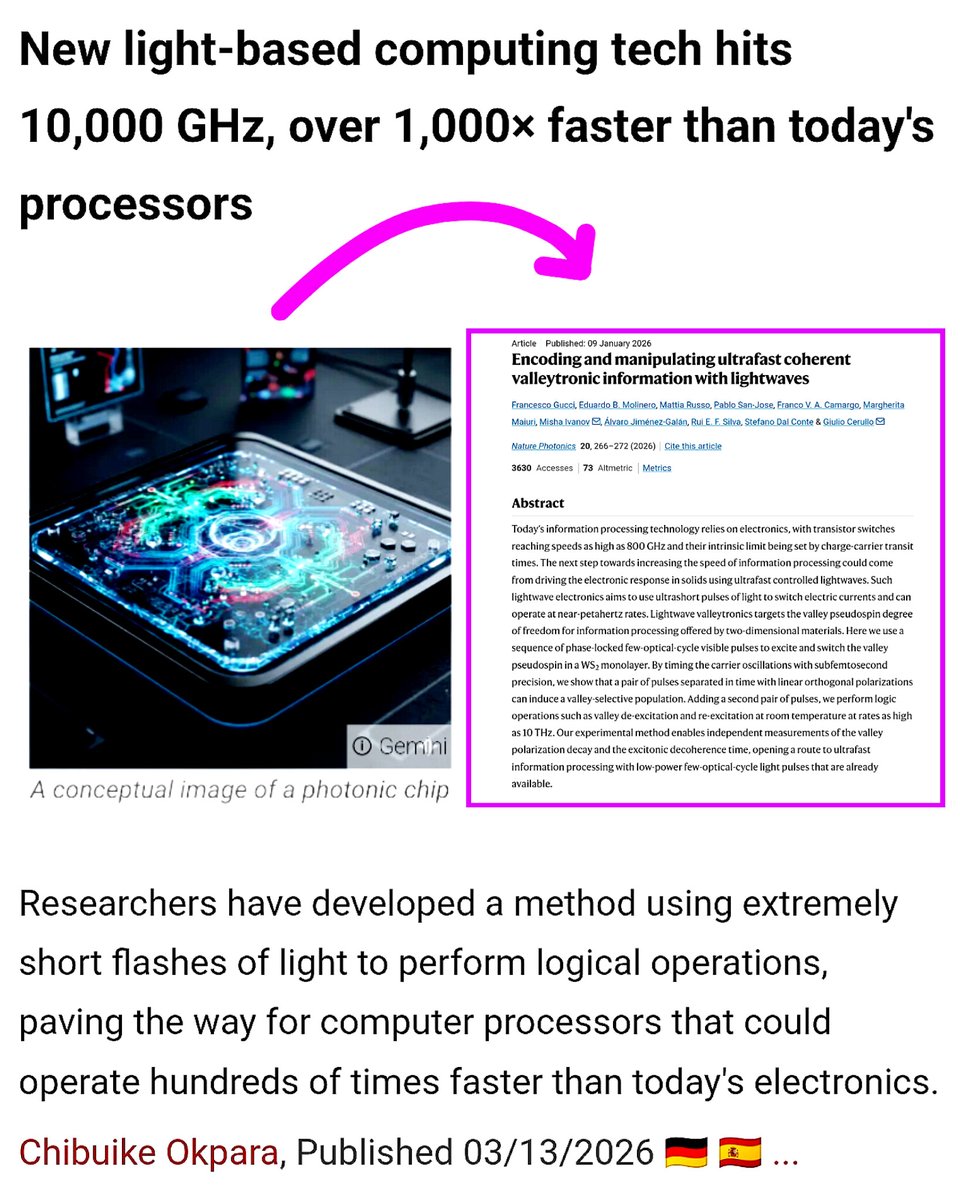

Scientists just demonstrated light-driven computing at 10,000 GHz (10 THz), roughly 2,000 to 3,300× the clock rate of today's processors.

Ultrafast computing breakthrough: In a new study, researchers showed that ultrafast laser pulses can perform logic operations in tungsten disulfide, a 2D semiconductor. Instead of transistors switching with electrical current, the system uses light to control the valley states of electrons, an approach known as valleytronics. By manipulating these states with femtosecond laser pulses (one femtosecond = 10⁻¹⁵ seconds), the team achieved logical switching frequencies above 10 terahertz. For comparison, today's processors run at only 3–5 GHz 👀!

This experiment suggests that future computers could potentially operate hundreds to thousands of times faster by combining photonics and quantum materials. While still a laboratory demonstration, the research points toward light-driven computing far beyond silicon's limits.
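The speed comparison in this post is simple ratio arithmetic, so here is a minimal sketch of it using only the figures quoted above (above 10 THz switching vs. 3–5 GHz CPU clocks). The constant and function names are illustrative, not from the study. Note that this compares a raw switching frequency with a clock rate; it says nothing about overall processor throughput.

```python
# Back-of-the-envelope check of the post's numbers (illustrative names only).
LIGHT_LOGIC_HZ = 10e12      # 10 terahertz = 10,000 GHz, as quoted in the post
CPU_CLOCKS_HZ = (3e9, 5e9)  # low and high end of today's CPU clocks, per the post


def speedup(fast_hz: float, slow_hz: float) -> float:
    """Ratio of two switching frequencies."""
    return fast_hz / slow_hz


def period_fs(freq_hz: float) -> float:
    """Duration of one cycle in femtoseconds (1 fs = 1e-15 s)."""
    return (1.0 / freq_hz) / 1e-15


if __name__ == "__main__":
    for cpu_hz in CPU_CLOCKS_HZ:
        ratio = speedup(LIGHT_LOGIC_HZ, cpu_hz)
        print(f"{LIGHT_LOGIC_HZ / 1e9:,.0f} GHz vs {cpu_hz / 1e9:.0f} GHz: {ratio:,.0f}x")
    # A 10 THz cycle is about 100 fs, which is why femtosecond pulses are needed.
    print(f"One 10 THz cycle lasts about {period_fs(LIGHT_LOGIC_HZ):.0f} fs")
```

Running it shows the 10 THz figure is about 2,000× a 5 GHz clock and about 3,333× a 3 GHz clock, and that a single 10 THz cycle lasts on the order of 100 femtoseconds.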