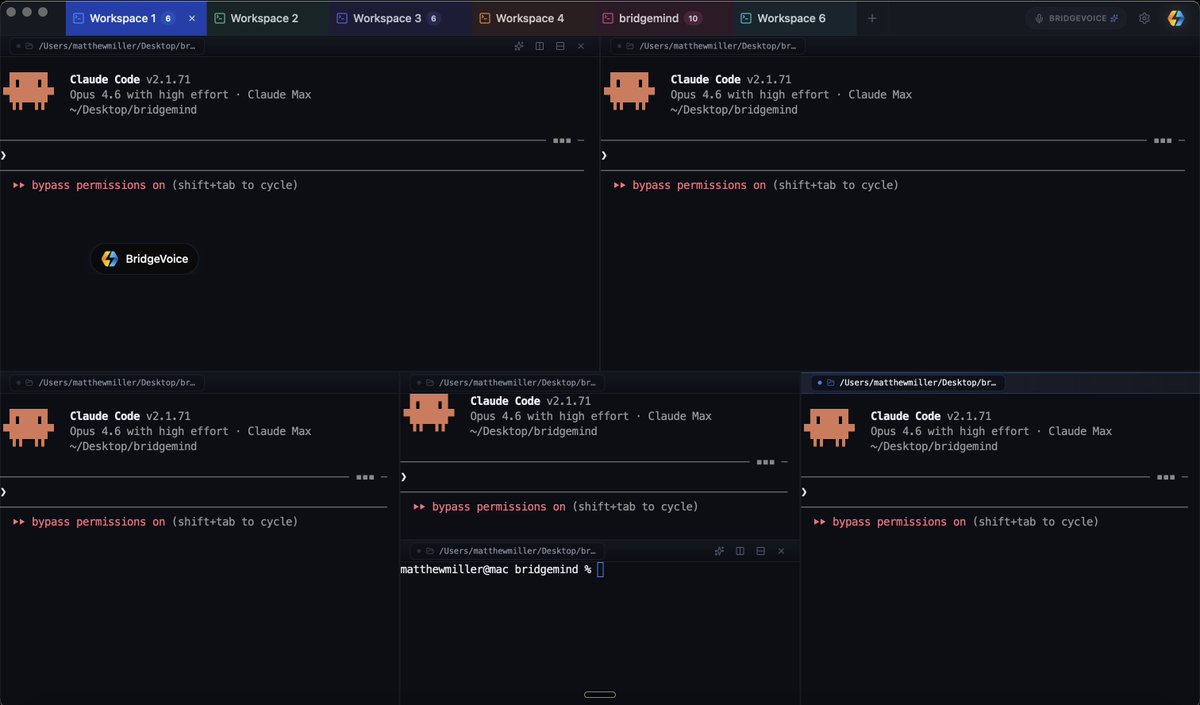

Pinned Tweet

BridgeVoice is here 🔥

Voice-to-text for vibe coding:

- Instant on-device Whisper (private, offline)

- Cloud mode: 100+ languages

- Custom dictionary & instructions for perfect prompts

I've used it for 63k+ words. Watch the demo.

50% off first 3 months BridgeMind Pro:

BRIDGEVOICEFOUNDER

→ bridgemind.ai

Voice > typing? Reply yes/no 👇
