Evolvent AI

77 posts

@Evolvent_AI

Building persistent agent infrastructure for infinite self-evolving intelligence. More at: https://t.co/o8pZbNgDPI

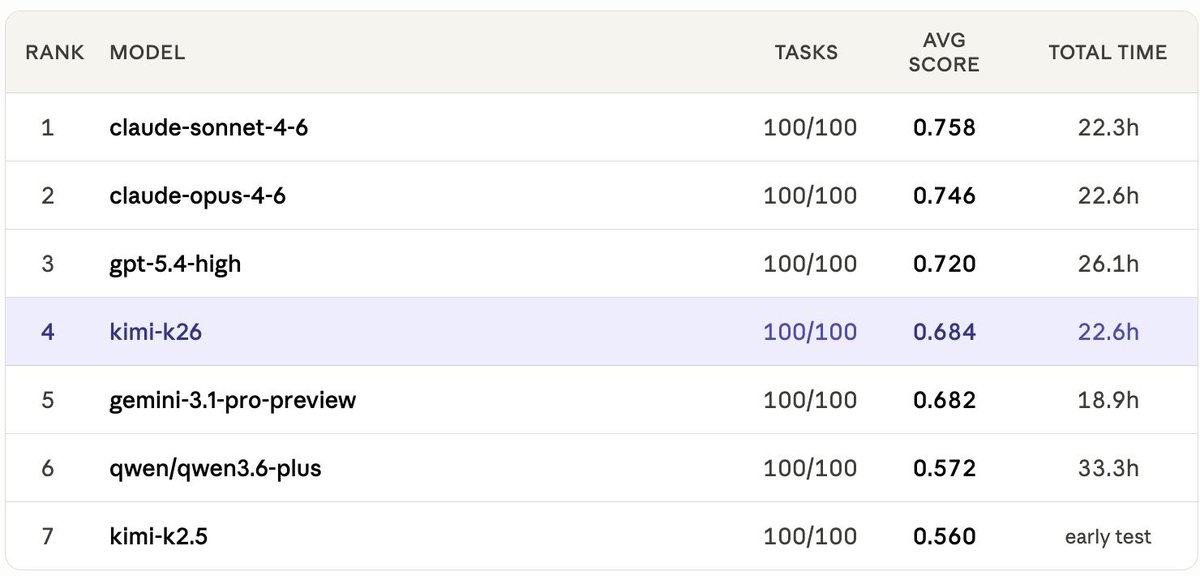

Can confirm: K2.6 isn't just a demo-reel model. A few days ago we received a bug report from the Kimi team, got early API access, and re-ran ClawMark (our living-world OpenClaw benchmark). After fixing a compatibility bug in openclaw's repo (github.com/openclaw/openc…), K2.6 lands at a 0.684 avg score, edging out gemini-3.1-pro (0.682) and jumping +0.124 over K2.5. Shipping shaders and agentic benchmark gains in the same release is a pretty rare combo. 👀
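As a quick sanity check on the numbers in this post, the arithmetic can be sketched as below. The K2.5 score is not stated in the post; it is only implied by subtracting the quoted +0.124 gain, and the variable names are illustrative.

```python
# Figures quoted in the post (ClawMark average scores).
k26_score = 0.684
gemini_31_pro_score = 0.682
gain_over_k25 = 0.124

# Implied K2.5 score: an inference by subtraction, not a number from the post.
implied_k25_score = round(k26_score - gain_over_k25, 3)

# Margin by which K2.6 edges out gemini-3.1-pro.
margin_over_gemini = round(k26_score - gemini_31_pro_score, 3)

print(f"implied K2.5 avg score: {implied_k25_score:.3f}")
print(f"margin over gemini-3.1-pro: {margin_over_gemini:.3f}")
```

The gap to gemini-3.1-pro is two thousandths, so the larger signal here is the jump over K2.5 rather than the ranking against gemini-3.1-pro.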

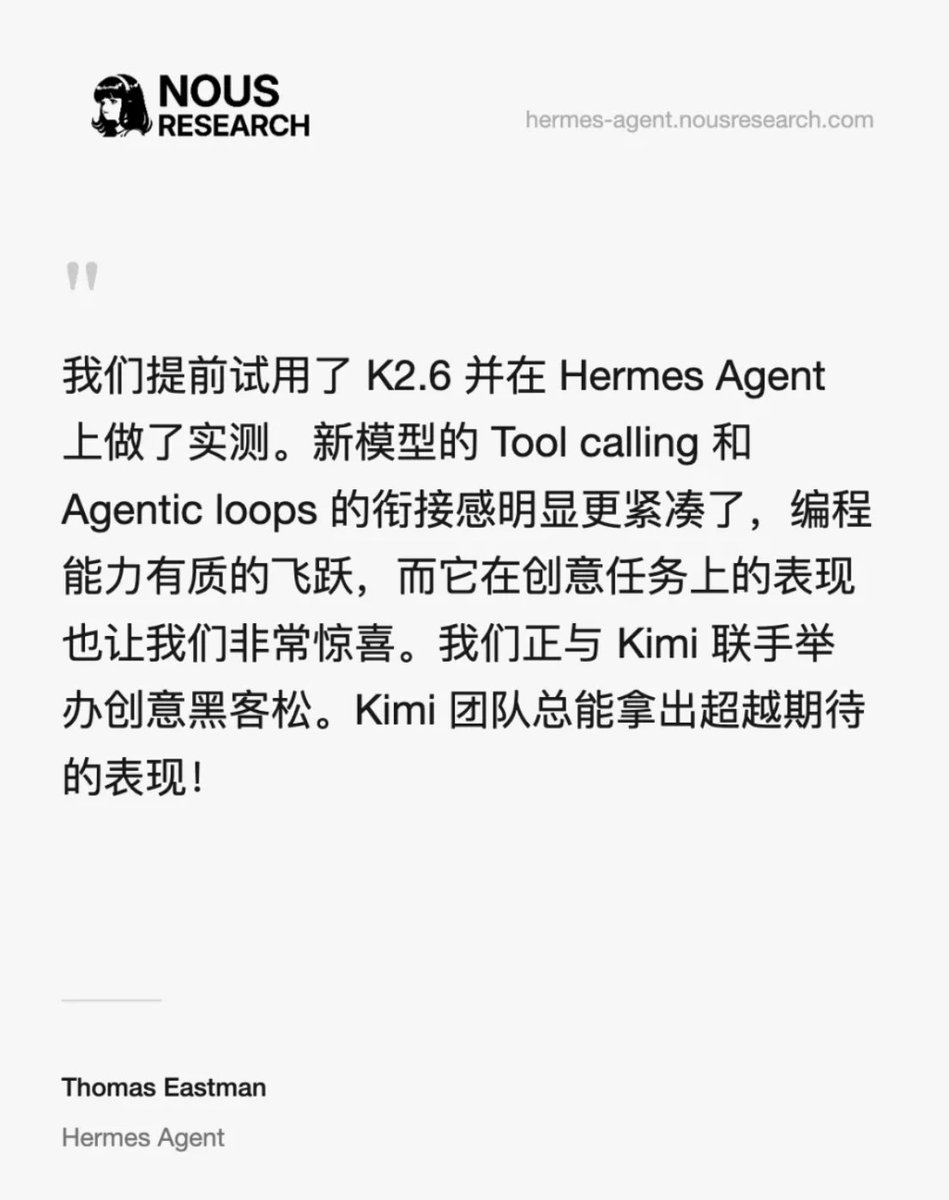

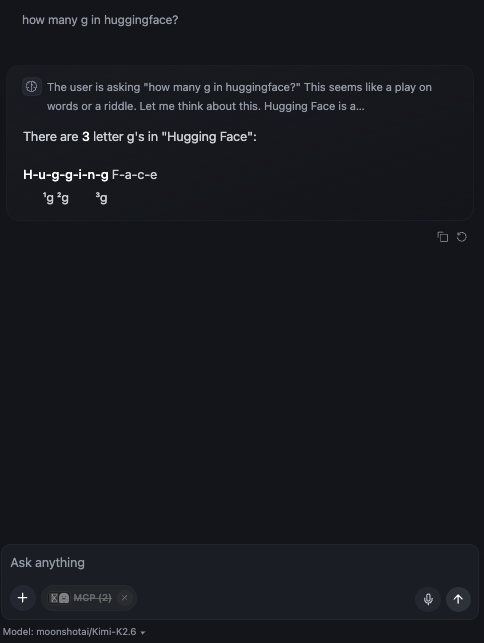

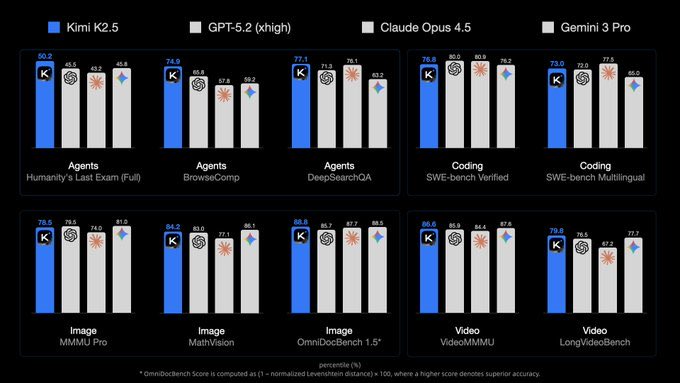

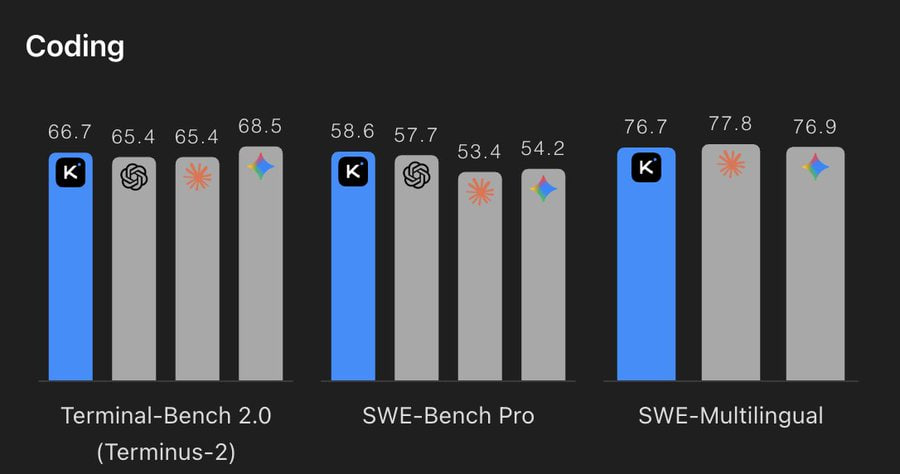

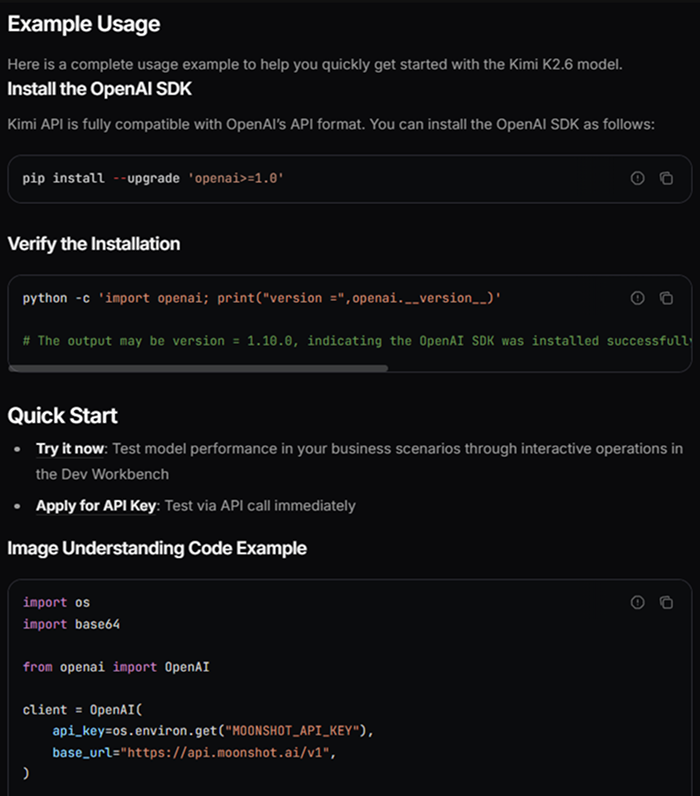

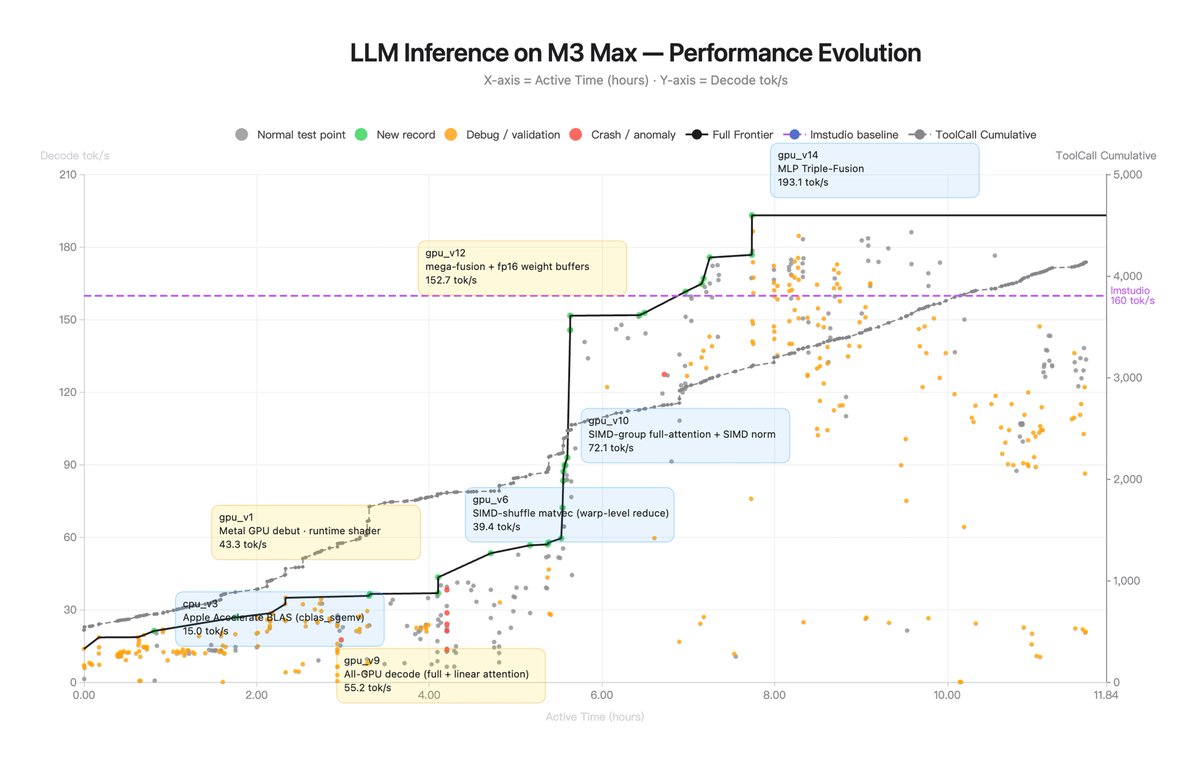

Meet Kimi K2.6: Advancing Open-Source Coding

🔹 Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), Charxiv w/ python (86.7), Math Vision w/ python (93.2)

What's new:

🔹 Long-horizon coding - 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).

🔹 Motion-rich frontend - Videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.

🔹 Agent Swarms, elevated - 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.

🔹 Proactive Agents - K2.6 model powers OpenClaw, Hermes Agent, etc. for 24/7 autonomous ops.

🔹 Claw Groups (research preview) - bring your own agents, command your friends', bots & humans in the loop.

K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai

🔗 Tech blog: kimi.com/blog/kimi-k2-6

🔗 Weights & code: huggingface.co/moonshotai/Kim…

Launch Week, Day 1: ClawMark

Most agent benchmarks give the model one shot, one prompt, one frozen environment. Real coworker tasks span multiple days, and the world keeps changing while the agent works.

Introducing 🦞ClawMark: a multi-day, dynamic-environment benchmark for coworker agents. Built by Evolvent together with 40+ researchers from NUS, HKU, MIT, UW, and UC Berkeley. Open-sourced at: claw-mark.com

100 tasks. 13 professional domains. Fully rule-based scoring. Results from 6 frontier models below. 🧵👇

Kimi 2.6 from @Kimi_Moonshot is here, with day-0 availability on AI/ML API! It is insanely powerful: we asked it to build Space Invaders, and the one-shot result was stunning. You can check out the prompt in the comments.
