fractalitty retweeted

fractalitty

6.4K posts

fractalitty retweeted

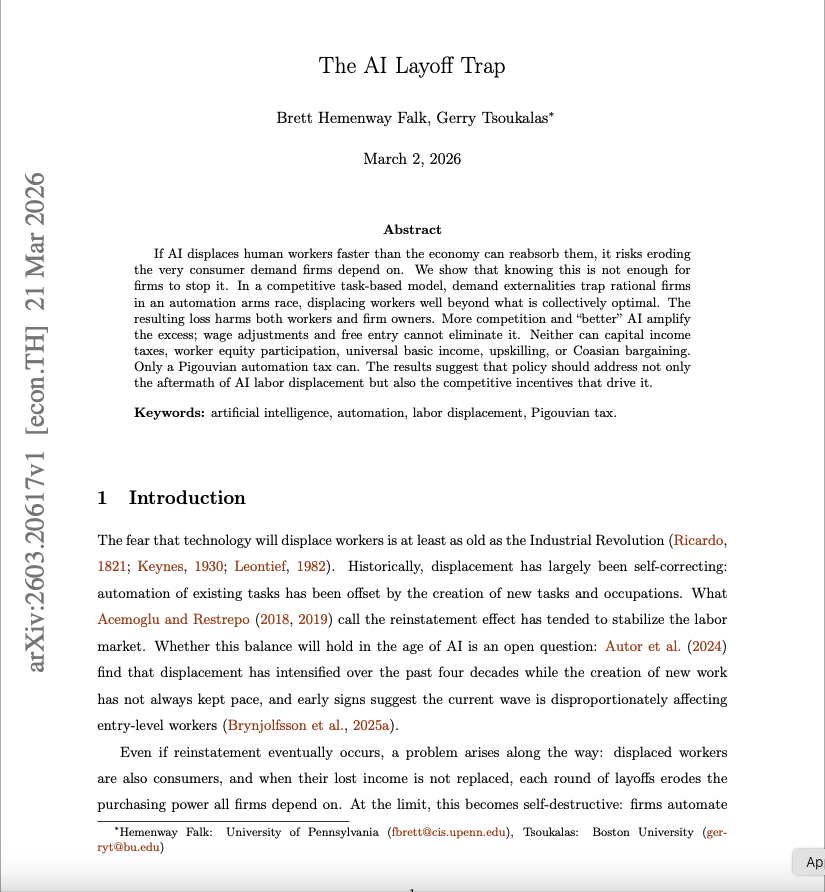

Two economists just published a mathematical proof that AI will destroy the economy.

Not might. Not could. Will — if nothing changes.

The paper is called "The AI Layoff Trap." Published March 2, 2026. Wharton School, University of Pennsylvania. Boston University. Peer reviewed. Mathematically modeled.

The conclusion is one sentence.

"At the limit, firms automate their way to boundless productivity and zero demand."

An economy that produces everything. And sells it to nobody.

Here is how you get there.

A company fires 500 workers and replaces them with AI. A competitor fires 700 to keep up. Another fires 1,000. Every company is behaving rationally. Every company is following the incentives correctly. And every company is building a trap for itself.

Because the workers who were fired were also customers.

When they lose their jobs faster than the economy can absorb them, they stop spending. Consumer demand falls. Companies respond by cutting costs — which means automating more workers — which means less spending — which means more falling demand — which means more automation.

The loop has no natural exit.
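The loop described above can be sketched as a toy simulation. This is not the model from the paper: the function name `simulate_loop`, the starting workforce, the wage, and the `cut_rate` rule are all illustrative assumptions, chosen only to show how falling demand and further automation can feed each other.

```python
def simulate_loop(rounds=10, workers=1000.0, wage=1.0, cut_rate=0.1):
    """Toy demand/automation spiral (illustrative assumption, not the paper's model).

    Each round, employed workers spend their wages (demand). Firms then
    automate a share of the remaining jobs, and that share grows as demand
    falls further below its starting level.
    """
    baseline_demand = workers * wage
    employed = workers
    history = []
    for _ in range(rounds):
        demand = employed * wage              # only employed workers spend
        history.append((employed, demand))
        shortfall = 1.0 - demand / baseline_demand
        share = cut_rate * (1.0 + shortfall)  # weaker demand -> more cost cutting
        employed *= 1.0 - share               # automated jobs leave the wage pool
    return history


if __name__ == "__main__":
    for step, (employed, demand) in enumerate(simulate_loop(rounds=5)):
        print(f"round {step}: employed={employed:.0f}, demand={demand:.0f}")
```

In this sketch every round's demand is lower than the last, because nothing in the rule pushes employment back up. A real economy has re-absorption channels that this toy deliberately leaves out.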

The researchers tested every proposed solution. Universal basic income. Capital income taxes. Worker equity participation. Upskilling programs. Corporate coordination agreements.

Every single one failed in the model.

The only intervention that worked: a Pigouvian automation tax — a per-task levy charged every time a company replaces a human with AI, forcing them to price in the demand they are destroying before they pull the trigger.

No government has implemented this. No major economy is seriously discussing it.

Meanwhile the numbers are already tracking the curve. 100,000 tech workers laid off in 2025. 92,000 more in the first months of 2026. Jack Dorsey fired half of Block's workforce and said publicly: "Within the next year, the majority of companies will reach the same conclusion."

Nobody is doing anything wrong. Companies are following their incentives perfectly. That is exactly the problem.

Rational behavior. At scale. Simultaneously. With no mechanism to stop it.

Two economists built the math. The math leads to one place.

Source: Falk & Tsoukalas · Wharton School + Boston University · arxiv.org/pdf/2603.20617

fractalitty retweeted

They quoted him $18,000. He opened Claude instead.

Same result. One afternoon. $20/month.

The gap between knowing how to use Claude and not knowing is worth $18,000.

This article below fixes that.

Anatoli Kopadze@AnatoliKopadze

fractalitty retweeted

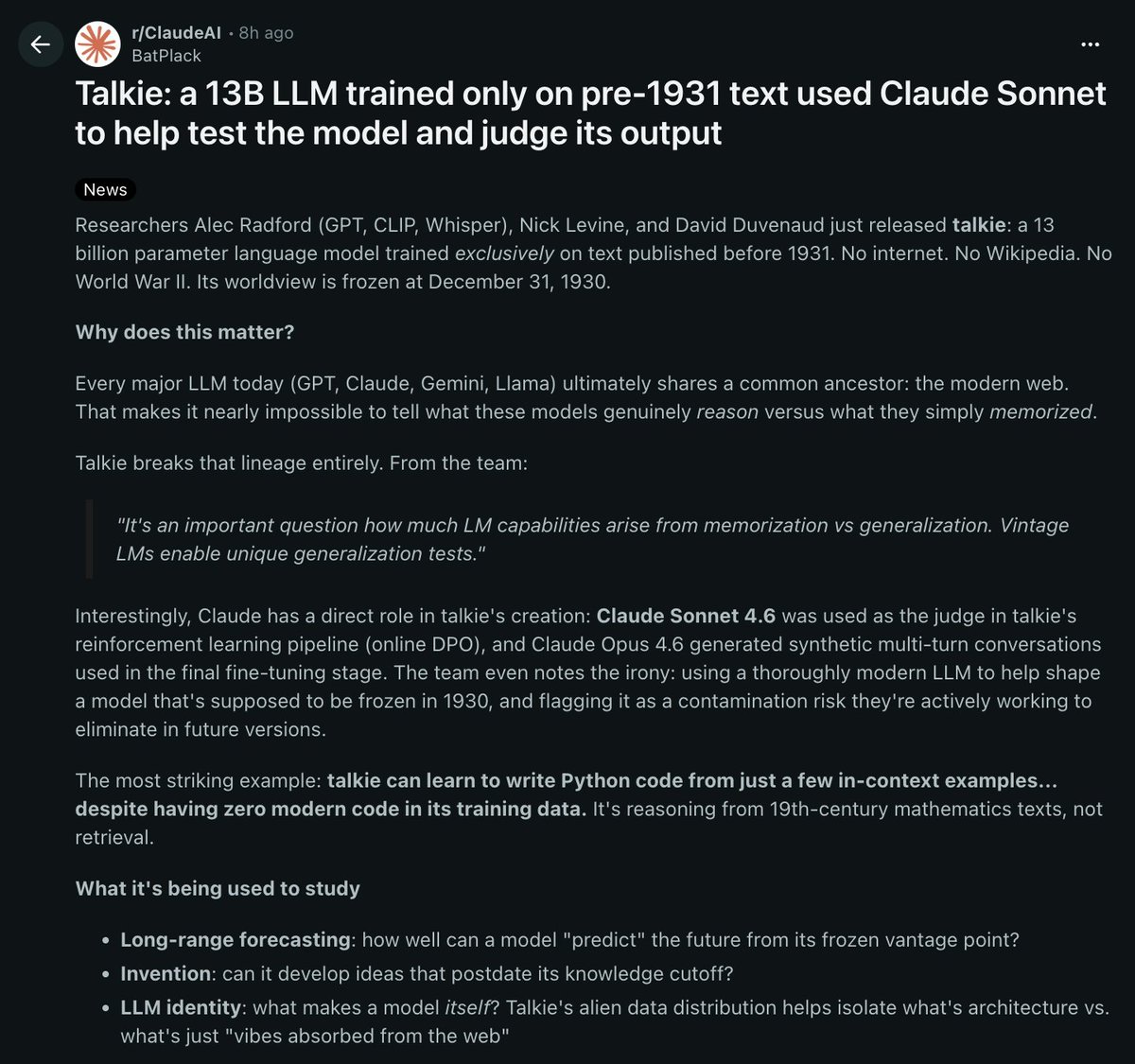

RESEARCHERS JUST BUILT AN AI MODEL TRAINED ONLY ON TEXT FROM BEFORE 1931

it's called talkie. 13 billion parameters, trained exclusively on text published before december 31, 1930

its worldview is completely frozen in time

the reason this matters: every major AI model today (GPT, claude, gemini, llama) was trained on the modern web.

that makes it almost impossible to tell if these models actually reason or if they just memorized the answers from their training data

talkie breaks that completely because it has never seen any modern information

the crazy part:

talkie can learn to write python code from just a few examples you show it in the prompt. despite having ZERO modern code in its training data.

it's figuring out programming from 19th century mathematics texts. that's ACTUAL reasoning

claude sonnet 4.6 was used as the judge in talkie's reinforcement learning pipeline. claude opus 4.6 generated the synthetic conversations used in fine tuning. a modern AI was used to train a model that's supposed to be frozen in 1930

the team already flagged this as a contamination risk they want to eliminate in future versions

what they're using it to study:

> long range forecasting. how well can a model "predict" the future from a frozen vantage point

> invention. can it develop ideas that didn't exist until after its knowledge cutoff

> LLM identity. what makes a model itself vs what's just patterns absorbed from the web

alec radford built this. the same guy behind GPT, CLIP, and whisper

both models are open source on hugging face.

they're already planning a GPT-3 scale vintage model later this year

an AI that has never seen the modern world can still reason its way to writing code.

THAT alone tells you more about intelligence than any benchmark ever will

fractalitty retweeted

But you don't even have a model, Flerf!

Ah but yes we do!

An observer-based sky model.

No globe constants, no radius — just angles, timing, and position.

Two layers: what you see, and where it's sourced.

Pure ratios. Pure kinematics.

Decide for yourself which framing is real

Shane St Pierre@AntiDisinfo86

New FE Conceptual Model just dropped. Fully interactive model with fully adjustable date, location, star, sun and moon options. No globularity, no globe constants: pure observational geometry. Cosmology overlays, observer figures, etc. alanspaceaudits.github.io/conceptual_fla… github.com/AlanSpaceAudit…

@RapidResponse47 @POTUS Create a problem, appear as victim, provide solution

.@POTUS: It's not a particularly secure building, and I didn't want to say this, but this is why we have to have all of the attributes of what we're planning at the White House. It's drone proof, it's bulletproof glass. That's why Secret Service and the military are demanding it.

fractalitty retweeted

🚨 Anthropic's own team just showed how to actually use Claude Code properly.

30 minutes. free. the person who created Claude Code.

watch the workshop. bookmark it.

worth more than every $500 course you almost bought.

you've been using Claude without knowing 40 of its commands.

Then read the guide below.

Khairallah AL-Awady@eng_khairallah1

fractalitty retweeted

🚨 INSTEAD OF WATCHING NETFLIX TONIGHT.

Spend 1 hour with this.

Obsidian + Claude Code = 24/7 personal operating system.

Works while you sleep.

The people who build this tonight will never work the same way again.

Watch it and Bookmark it now.

CyrilXBT@cyrilXBT

fractalitty retweeted