Pinned Tweet

Rob Imbeault

6K posts

@RobImbeault

saas unicorn founder - building the world’s smartest ai memory on the world’s most configurable stack - wrote a bestseller (not tech)

Joined November 2024

381 Following · 2.1K Followers

I could pay attention to the road or I can watch a demo on context engineering by @micheltricot @AirbyteHQ FSD-ftw

We Just Shipped What OpenAI, Google, and Anthropic Have Not. Here Are 6 Updates:

dev.to/jon_at_backboa…

holy sh*t they actually did it

Google Research@GoogleResearch

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

This is what I’m seeing at the rock face. Our vision, and our future, is to create infrastructure that is deterministic so that AI agents can build on something foundationally strong and trustworthy.

We also have some more fun things up our sleeves.

Keep building. Keep taking big swings.

corbin@corbin_braun

coding is dead in sf

I love this! Devs need to chill. "I’m Learning AI in Public, and I Think Developers Need to Chill a Bit" by Jonathan Murray #DEVCommunity dev.to/jon_at_backboa…

@elliott__potter The perfect amount of nostalgia. Might have aged myself 😝

@RobImbeault thank you Rob! All in-house and lots of late nights...

We’ve raised $27M for this moment: starting today, your agent gets an iPhone and can talk like a friend.

Texting is the universal interface. Billions of people text every day, but until now, developers have been restricted from building on the most powerful channel to ever exist.

Linq is a single API for iMessage, RCS, SMS, voice, and even FaceTime and Find My. Nothing for users to download. Nothing new to learn.

We’re already powering @interaction, @pika_labs, @getlindy, @zocomputer, @joindimension, Tomo (and others we can’t name just yet) to bring this new ecosystem to life.

Join them, and start building for free in our sandbox, linked below. Or comment and we’ll get you set up.

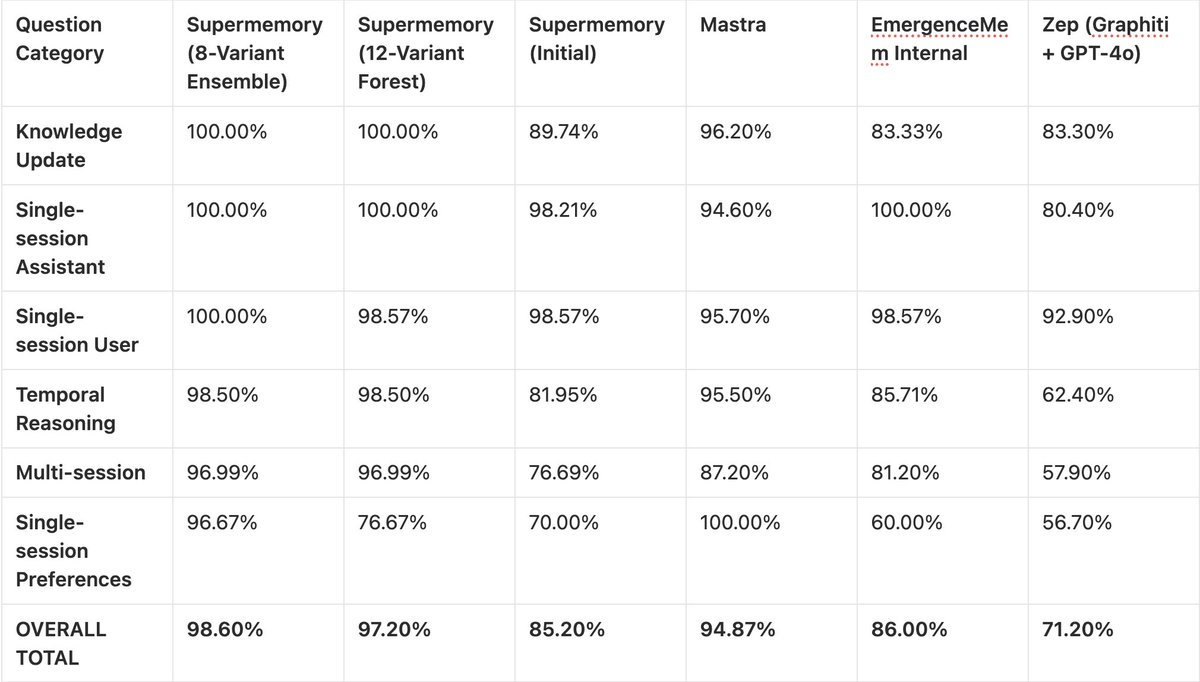

Love what Dhravya has been building and his work. He understands memory, so I am a little taken aback by this article. I am going to push back on this pretty strongly. This is being framed as a breakthrough when it's mostly benchmark engineering + system inflation.

First, 115k+ tokens are no longer a hard problem. We already have 1M+ context windows in production. Calling this "long-term memory" is stretching it.

Second, LongMemEval is no longer representative of real systems. It's been around for a while and doesn’t capture long-running agent workflows, real-time updates, noisy tool outputs, and cost/latency constraints.

Third, the "99% accuracy" claim is misleading. If you run 8 parallel prompts and count any correct answer as success, you are not improving memory. You are increasing the probability of a hit. That's not intelligence but sampling until something works. (spray and pray)

Same with the 12-agent voting setup. It's essentially majority voting across multiple attempts, which inflates benchmark scores without improving core capability.

Fourth, saying "no vector DB needed" is not an actual unlock. You have just replaced retrieval with multiple LLM passes, which require higher compute, greater latency, and higher cost. It is shifting complexity, not removing it. These things matter a lot in a production system, so you can forget about deploying this in production.

Fifth, the core claim that "agentic retrieval beats vector search" is oversimplified. The real problem in memory systems isn’t embeddings vs. agents. It's relevance filtering, temporal consistency, memory lifecycle (what to store/forget), and grounding vs. hallucination.

None of these are solved here.

Also, this entire system assumes perfect extraction during ingestion, relies heavily on prompt engineering, and offers no guarantees of consistency across runs. So calling memory "solved" is very premature.

This is a good experiment in agent orchestration, not a memory solution.
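The third point above (counting any correct answer out of 8 parallel prompts as a success) can be illustrated with a short calculation. This is a minimal sketch, not a reproduction of the benchmark setup: it assumes each attempt is independent with the same per-attempt accuracy `p`, and the values of `p` below are hypothetical. Real attempts on the same question are correlated, so the actual gain would likely be smaller.

```python
def any_of_k_success(p: float, k: int) -> float:
    """Probability that at least one of k attempts is correct.

    Assumes the k attempts are independent and each is correct with
    probability p (a simplifying assumption, not a measured property).
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    if k < 1:
        raise ValueError("k must be >= 1")
    # All k attempts fail with probability (1 - p) ** k.
    return 1.0 - (1.0 - p) ** k


if __name__ == "__main__":
    # Hypothetical single-attempt accuracies, chosen only for illustration.
    for p in (0.3, 0.5, 0.7):
        score = any_of_k_success(p, 8)
        print(f"single-attempt accuracy {p:.0%} -> any-of-8 success {score:.1%}")
```

Under these assumptions the any-of-8 score rises well above the single-attempt accuracy even though nothing about the underlying system has changed, which is the sense in which the reported number measures sampling rather than memory.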

Dhravya Shah@DhravyaShah

So cool: Supermemory 99% on Sota Memory!

•Achieved ~99% on LongMemEval_s using experimental ASMR (Agentic Search and Memory Retrieval) technique.

•Replaced vector search and embeddings with parallel observer agents extracting structured knowledge across six vectors from raw multi-session histories.

•Deployed specialized search agents for direct facts, related context, and temporal reconstruction; no vector database required.

Will be open source in 11 days!

Dhravya Shah@DhravyaShah

@sarahwooders Agreed. Ps the Letta flat file post was inspired. 🙌

it’s hard for me to express sufficiently just how dishonest it is (if you really work on memory) to present a LongMem score as some kind of breakthrough

it’s a 3 year old benchmark, the results here are dishonest, it’s a marginal amount of tokens in contemporary ai, and everyone aces it already

@DhravyaShah Hahahahahah what’s funny is that benchmarks don’t go that high so super impressive. Got the engagement you were looking for I guess.

@JayGenXer This is such a no brainer for Canada. Open up your market 10X!!
