♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤

80.4K posts

@SaltinDeadsec

Listen to the rumors, they know everything about me - Hacktivist, politically incorrect ... This world is rotten, I'll prove it to you https://t.co/Vkc2ZFZExN

UERSS · Joined August 2022

1.5K Following · 360 Followers

youtu.be/FQEw1mB0GOs?si…

And this is only the beginning: you'll see when they seize Jerusalem and rebuild the temple in a burst of messianic madness.

♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤ reposted

GitHub - samftggr/VEN0m-Ransomware: Demonstrate how a signed driver can bypass defenses to deploy ransomware on Windows 11 with advanced AV and UAC evasion techniques. github.com/samftggr/VEN0m…

♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤ reposted

Plug a $30 USB stick into your laptop and you can listen to satellites, decode pager traffic, intercept walkie-talkies, and watch TV signals fall out of the air around you.

Free. No license. No subscription.

Just one tool nobody outside the radio underground talks about.

It's called SigDigger. An open source digital signal analyzer that turns a cheap SDR dongle into a full radio intelligence rig.

Here is what it can actually do.

Point it at the sky and you can pull down NOAA weather satellite images as they pass overhead. Tune it to your local airport and you can decode aircraft transponders in real time. Sweep the FM band and you can demodulate analog voice the moment it hits the antenna.

The interface looks like a Bloomberg Terminal for the airwaves.

A live waterfall display showing every signal in your area. PSK, FSK, and ASK demodulation. Burst signal analysis for the weird short transmissions nobody can identify. Analog video decoding. Panoramic spectrum sweeping across entire frequency ranges.
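To make one of those demodulation modes concrete, here is a minimal pure-Python sketch of binary FSK. It assumes clean, noise-free complex baseband (IQ) samples and continuous-phase 2-FSK. The helper names `fsk_modulate` and `fsk_demodulate` are hypothetical, and this is not how SigDigger or Sigutils implement it. The demodulator is a quadrature discriminator: the phase angle between consecutive samples is proportional to the instantaneous frequency, so its sign over each bit period recovers the bit.

```python
import cmath
import math


def fsk_modulate(bits, samples_per_bit, deviation_hz, sample_rate_hz):
    """Hypothetical helper: continuous-phase 2-FSK at complex baseband.

    Bit 1 maps to +deviation_hz and bit 0 to -deviation_hz.
    """
    phase = 0.0
    samples = []
    for bit in bits:
        freq = deviation_hz if bit else -deviation_hz
        step = 2 * math.pi * freq / sample_rate_hz  # phase advance per sample
        for _ in range(samples_per_bit):
            phase += step
            samples.append(cmath.exp(1j * phase))
    return samples


def fsk_demodulate(samples, samples_per_bit):
    """Hypothetical helper: quadrature discriminator plus per-bit decision."""
    # angle(s[n] * conj(s[n-1])) is the phase advance between samples,
    # which is proportional to the instantaneous frequency.
    inst_freq = [0.0] + [
        cmath.phase(samples[n] * samples[n - 1].conjugate())
        for n in range(1, len(samples))
    ]
    bits = []
    for start in range(0, len(inst_freq) - samples_per_bit + 1, samples_per_bit):
        window = inst_freq[start:start + samples_per_bit]
        # Positive net frequency over the bit period -> 1, negative -> 0.
        bits.append(1 if sum(window) > 0 else 0)
    return bits


if __name__ == "__main__":
    tx_bits = [1, 0, 1, 1, 0, 0, 1, 0]
    iq = fsk_modulate(tx_bits, samples_per_bit=40, deviation_hz=1200, sample_rate_hz=48000)
    print(fsk_demodulate(iq, samples_per_bit=40))  # [1, 0, 1, 1, 0, 0, 1, 0]
```

A real receiver would also need filtering, clock recovery, and a noise-tolerant decision threshold, all of which this sketch leaves out.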

All of it runs on a Linux or macOS laptop with no specialized hardware beyond the SDR dongle.

What used to require a $40,000 spectrum analyzer locked inside a defense lab now runs in your living room for the price of a USB stick.

The author built the entire DSP backend from scratch instead of leaning on GNU Radio. He wrote his own core library called Suscan, his own signal processing library called Sigutils, and his own widget library called SuWidgets. Faster. Cleaner. Optimized for the exact tasks reverse engineers and amateur radio operators actually need.

Plugin support is built in. AmateurDSN for deep space network monitoring. APTPlugin for weather satellites. AntSDRPlugin for the AntSDR hardware. ZeroMQPlugin for piping signal data into other tools. Everything snaps in with one command.

The whole stack supports SoapySDR, which means almost every SDR device on the market works out of the box. RTL-SDR. HackRF. LimeSDR. Airspy. Plug it in and start digging.

1.5K stars. LGPL-3.0. 100% open source.

♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤ reposted

Earlier this year, I wrote about six techniques threat actors use to silence EDR agents, how to emulate each one, and detection strategies for each.

Here is the diagram of the most common technique, which uses WFP (Windows Filtering Platform) filters:

🖊️ ipurple.team/2026/01/12/edr…

SEKTOR7 Institute @SEKTOR7net

Silencing the EDR Silencers: an analysis of techniques to disable or silence EDR agents, plus some countermeasures. A post by Jonathan Johnson (@JonnyJohnson_). Source: huntress.com/blog/silencing… #redteam #blueteam #maldev #malwaredevelopment

♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤ reposted

🚨Breaking: Someone open sourced a knowledge graph engine for your codebase and it's terrifying how good it is.

It's called GitNexus. And it's not a documentation tool.

It's a full code intelligence layer that maps every dependency, call chain, and execution flow in your repo -- then plugs directly into Claude Code, Cursor, and Windsurf via MCP.

Here's what this thing does autonomously:

→ Indexes your entire codebase into a graph with Tree-sitter AST parsing

→ Maps every function call, import, class inheritance, and interface

→ Groups related code into functional clusters with cohesion scores

→ Traces execution flows from entry points through full call chains

→ Runs blast radius analysis before you change a single line

→ Detects which processes break when you touch a specific function

→ Renames symbols across 5+ files in one coordinated operation

→ Generates a full codebase wiki from the knowledge graph automatically
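Indexing calls into a graph and running a blast-radius query can be sketched in a few lines. This minimal example swaps in Python's standard `ast` module for Tree-sitter. It parses a single Python source string and records only calls by bare function name, so method calls such as `user.validate()` are ignored. `build_call_graph`, `blast_radius`, and the sample source are hypothetical illustrations, not GitNexus's code or API.

```python
import ast
from collections import defaultdict, deque

# Hypothetical sample code to index.
SOURCE = '''
def validate(user):
    return bool(user)

def login(user):
    return validate(user)

def register(user):
    if validate(user):
        save(user)

def save(user):
    pass

def api_login(request):
    return login(request.user)
'''


def build_call_graph(source):
    """Map each top-level function name to the set of names it calls."""
    tree = ast.parse(source)
    calls = {}
    for node in tree.body:
        if isinstance(node, ast.FunctionDef):
            callees = set()
            for inner in ast.walk(node):
                # Only direct calls by bare name, e.g. validate(user).
                if isinstance(inner, ast.Call) and isinstance(inner.func, ast.Name):
                    callees.add(inner.func.id)
            calls[node.name] = callees
    return calls


def blast_radius(calls, target):
    """Return {function: hops} for every direct or transitive caller of target."""
    callers = defaultdict(set)  # reverse edges: callee -> callers
    for caller, callees in calls.items():
        for callee in callees:
            callers[callee].add(caller)
    affected = {}
    queue = deque([(target, 0)])
    while queue:  # breadth-first, so each function gets its shortest distance
        name, depth = queue.popleft()
        for caller in callers[name]:
            if caller not in affected and caller != target:
                affected[caller] = depth + 1
                queue.append((caller, depth + 1))
    return affected


if __name__ == "__main__":
    graph = build_call_graph(SOURCE)
    print(sorted(blast_radius(graph, "validate").items()))
    # [('api_login', 2), ('login', 1), ('register', 1)]
```

The hop count separates direct callers (1) from code that breaks only indirectly, which is the distinction a blast-radius report needs before an edit is made.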

Here's the wildest part:

Your AI agent edits UserService.validate().

It doesn't know 47 functions depend on its return type.

Breaking changes ship.

GitNexus pre-computes the entire dependency structure at index time -- so when Claude Code asks "what depends on this?", it gets a complete answer in 1 query instead of 10.
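The idea of pre-computing at index time can be illustrated with a small sketch: walk the reverse call graph once per function while building the index, store every transitive dependent, and each later "what depends on this?" question becomes a single dictionary lookup. The graph below, including `UserService.validate`, is a hypothetical hand-written example, and `precompute_dependents` is an illustrative name, not part of GitNexus.

```python
def precompute_dependents(calls):
    """Index time: map every function to the frozenset of its transitive callers.

    `calls` maps each function name to the set of names it calls directly.
    """
    callers = {name: set() for name in calls}
    for caller, callees in calls.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)

    table = {}
    for name in callers:
        seen = set()
        stack = [name]
        # Iterative DFS over reverse edges collects direct and indirect callers.
        while stack:
            for caller in callers[stack.pop()]:
                if caller not in seen:
                    seen.add(caller)
                    stack.append(caller)
        seen.discard(name)  # a recursive function should not list itself
        table[name] = frozenset(seen)
    return table


# Hypothetical call graph: function -> functions it calls directly.
CALLS = {
    "UserService.validate": set(),
    "login": {"UserService.validate"},
    "register": {"UserService.validate", "save"},
    "save": set(),
    "api_login": {"login"},
}

if __name__ == "__main__":
    index = precompute_dependents(CALLS)  # done once, when the repo is indexed
    print(sorted(index["UserService.validate"]))  # ['api_login', 'login', 'register']
```

The trade-off is storage and freshness: full closures can grow quadratically with the number of functions, and the table has to be rebuilt or updated whenever the code changes.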

Smaller models get full architectural clarity. Even GPT-4o-mini stops breaking call chains.

One command to set it up:

`npx gitnexus analyze`

That's it. MCP registers automatically. Claude Code hooks install themselves.

Your AI agent has been coding blind. This fixes that.

9.4K GitHub stars. 1.2K forks. Already trending.

100% Open Source.

(Link in the comments)

♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤ reposted

⚠️ China-linked hackers targeted governments across Asia + a NATO state (Poland), exploiting Exchange/IIS flaws to deploy ShadowPad.

At the same time: journalists & activists hit with phishing campaigns.

Two ops. Same priorities.

Details here → thehackernews.com/2026/05/china-…

♤ ʂa𝖑𝖙𝖎n𝖇a𝖓ӄ ♤ DEADSEC ♤ Baroud d'honneur ♤ reposted

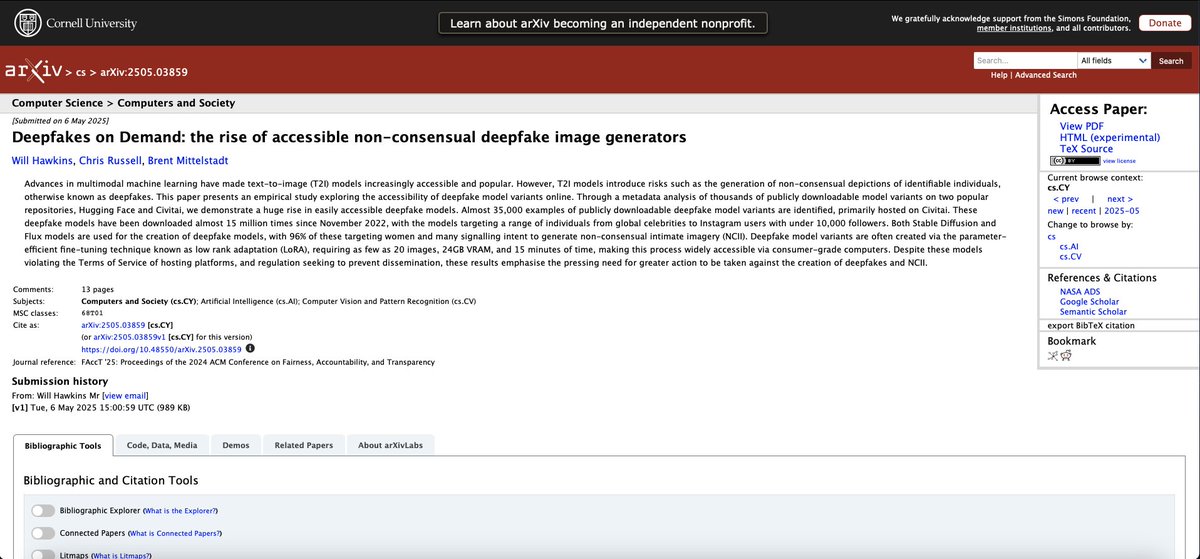

A tech-unsavvy teenager can now create a sexually explicit deepfake of their classmate in minutes.

No Photoshop skills. No technical knowledge. No money.

Just a photo and a free download.

Researchers at ACM FAccT 2025 — the world's premier conference on algorithmic fairness and accountability — just published the most comprehensive study ever done on the underground infrastructure that makes this possible.

They identified over 34,000 downloadable deepfake model variants intended to generate images of identifiable people, cumulatively downloaded more than 15 million times since 2022 (source: Releasebot).

15 million downloads. Each one a tool for generating realistic sexual imagery of real, named, identifiable people — overwhelmingly without their knowledge or consent.

96% of the 2,000 deepfake models analyzed target identifiable women, without any suggestion of consent being obtained (source: Releasebot).

15% of high school students in the United States have heard about a sexual deepfake of someone associated with their school. 13% of teenagers in the United Kingdom have personally experienced a sexually explicit deepfake image of themselves (source: Releasebot).

One in eight British teenagers. Not heard about it. Personally experienced it.

Then in February 2026, UNICEF released a joint study with ECPAT and INTERPOL spanning 11 countries.

At least 1.2 million children disclosed having had their images manipulated into sexually explicit deepfakes in the past year. In some countries, this represents 1 in 25 children, the equivalent of one child in a typical classroom (source: Getpassionfruit).

One child per classroom, in some of the 11 countries studied. In one year.

The researchers traced the supply chain behind these numbers. The deepfake models are not hidden on the dark web. They are hosted on mainstream open-source AI platforms. The release of Flux in August 2024 — a legitimate, powerful image generation model — triggered an acceleration in deepfake model creation that the researchers describe as a step change. Better base models made the attack easier, cheaper, and more realistic.

The number of sexually explicit deepfake images rose 87% from 2022 to 2023. The trend has not slowed. It has accelerated every year since (source: OpenAI).

The researchers documented who builds these tools and why. Many operate commercially — charging subscription fees, accepting cryptocurrency, offering customer support for their deepfake generation services. The anonymity infrastructure of the open-source AI ecosystem provides cover. The platforms hosting the models apply content policies inconsistently, if at all.

A projected 8 million deepfakes will be shared in 2025, up from 500,000 in 2023 (source: Vellum).

16 times more in two years.

The legislation is not keeping up. Laws vary by jurisdiction. Enforcement is nearly nonexistent. Victims face the same reporting mechanisms — designed for traditional content moderation — that were built before this technology existed.

A separate audit study found those reporting mechanisms to be confusing, ineffective, and routinely incapable of providing victims with meaningful support.

The technology to create this content is freely available, improving rapidly, and used by teenagers against other teenagers in schools across every country studied.

There is no technical barrier. There is no meaningful legal barrier. And there is, as of today, no solution in deployment at the scale of the problem.

Source: "Deepfakes on Demand" · ACM FAccT 2025 · dl.acm.org/doi/10.1145/37… · arxiv.org/abs/2505.03859

UNICEF Joint Study · UNICEF/ECPAT/INTERPOL · February 4, 2026 · unicef.org/press-releases…
