Sapient Intelligence

19 posts

@Sapient_Int

We are building self-evolving Machine Intelligence to solve the world's most challenging problems.

Hierarchical reasoning works well on large language models!🎉

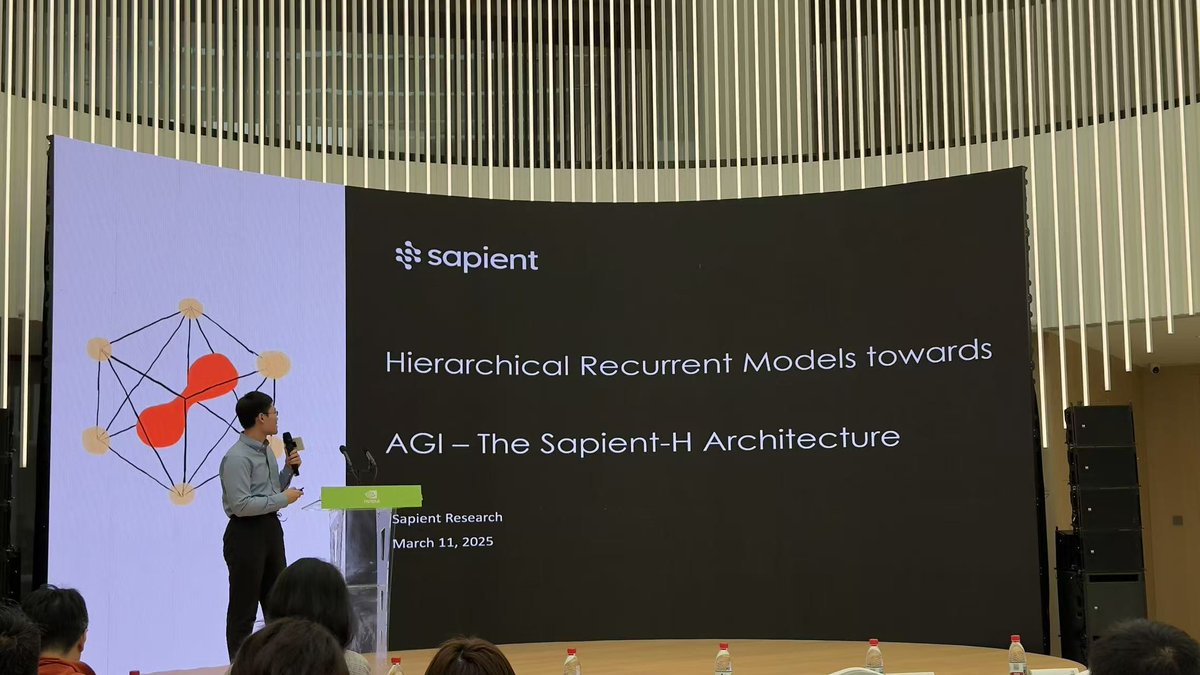

Thanks to @arcprize for reproducing and verifying the results! ARC-AGI-1: public 41% pass@2 - semi private 32% pass@2 ARC-AGI-2: public 4% pass@2 - semi private 2% pass@2 Due to differences in testing environments, a certain amount of variance in results is acceptable. According to tests run on our infrastructure, the open-source version of HRM on our GitHub can achieve a score of 5.4% pass@2 on the ARC-AGI-2. We welcome everyone to run it on your own infra and share your scores~ This is our first submission to the leaderboard, and it's a good starting point. We appreciate everyone for your support and feedback on HRM, both before and after our appearance on the ARC leaderboard. All of this encourages and motivates us to improve. The hierarchical architecture is designed to resolve premature convergence in long-horizon tasks, like master-level Sudoku that takes hours for humans to solve. See the comparison with a simple recurrent Transformer. Such a long chain might not be essential for ARC problems, and we only used a high-low ratio of 1/2. Larger ratios are often needed for optimal performance for Sudoku problems. In the case of ARC-AGI, the success of HRM is a testament to the model's ability to exhibit fluid intelligence - that is, its capability to infer and apply abstract rules from independent and flat examples. We are glad it was discovered in a recent blog post that the outer loop and data augmentation are essential for this ability, and we especially thank @fchollet @GregKamradt @k_schuerholt for pointing this out. Finally, we are accelerating the iteration of the HRM model and continuously pushing its limits, with good progress so far. At the same time, we believe the hierarchical architecture is highly effective in many scenarios. Moving forward, we will make further targeted updates to the architecture and validate it on more applications. We will also release an FAQ to address the key questions raised by the community. 🧠 Stay tuned!

🚀Introducing Hierarchical Reasoning Model🧠🤖 Inspired by brain's hierarchical processing, HRM delivers unprecedented reasoning power on complex tasks like ARC-AGI and expert-level Sudoku using just 1k examples, no pretraining or CoT! Unlock next AI breakthrough with neuroscience. 🌟 📄Paper: arxiv.org/abs/2506.21734 💻Code: github.com/sapientinc/HRM

Lots of hot takes on whether it's possible that DeepSeek made training 45x more efficient, but @doodlestein wrote a very clear explanation of how they did it. Once someone breaks it down, it's not hard to understand. Rough summary: * Use 8 bit instead of 32 bit floating point numbers, which gives massive memory savings * Compress the key-value indices which eat up much of the VRAM; they get 93% compression ratios * Do multi-token prediction instead of single-token prediction which effectively doubles inference speed * Mixture of Experts model decomposes a big model into small models that can run on consumer-grade GPUs

🚀 DeepSeek-R1 is here! ⚡ Performance on par with OpenAI-o1 📖 Fully open-source model & technical report 🏆 MIT licensed: Distill & commercialize freely! 🌐 Website & API are live now! Try DeepThink at chat.deepseek.com today! 🐋 1/n