Sergey Kolesnikov

@Scitator

Head of AI Research in a fintech company. Decision Making in the Wild. Opinions are my own.

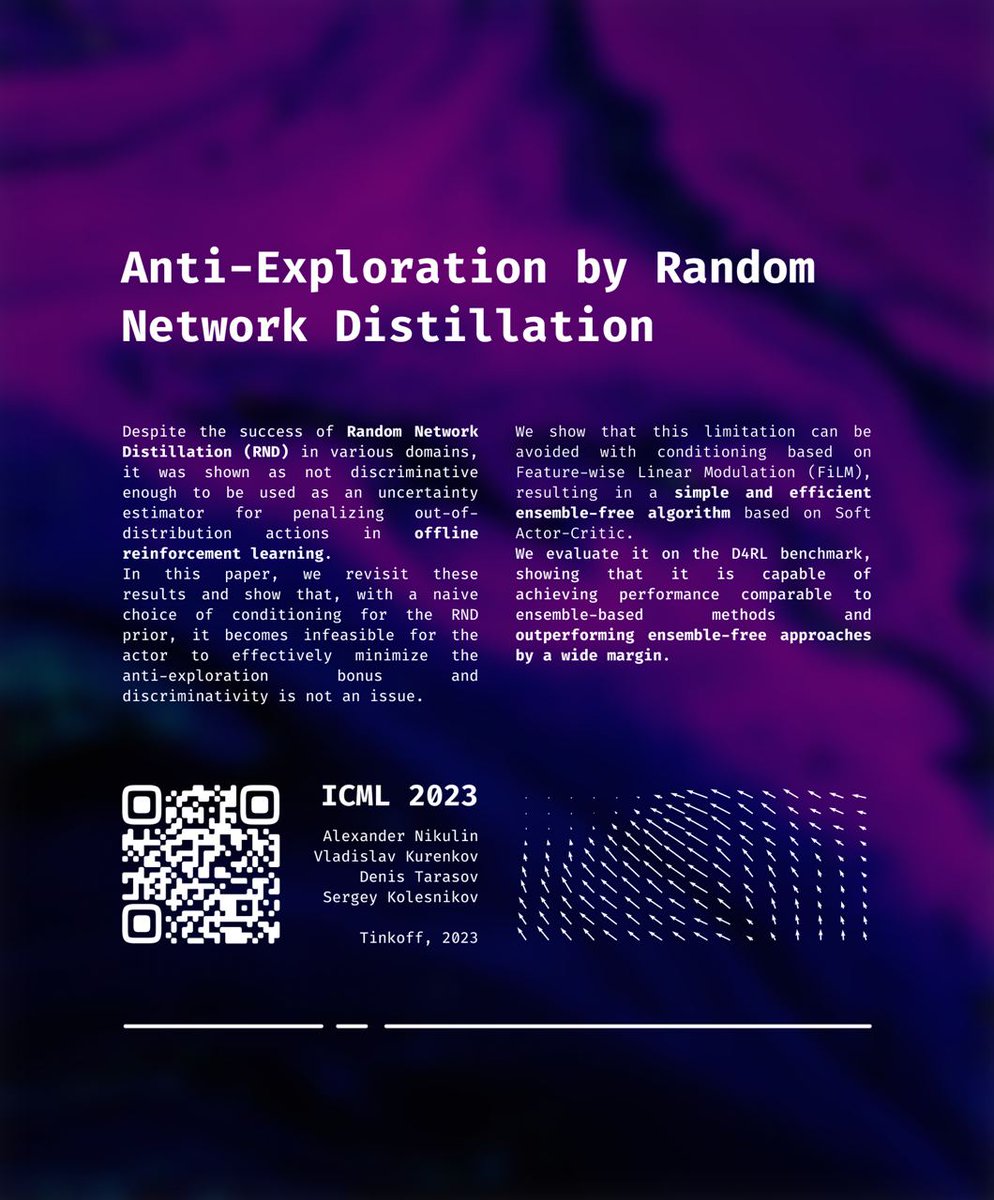

There have been a lot of algorithmic innovations in offline RL recently, along with a silent evolution of minor design choices. What if we applied these seemingly minor modifications to an established minimalistic baseline by @shaneguML? Turns out, the gains are enormous.

In our new paper 🚀 we optimize accuracy via gradient descent! The work, called "EXACT: How to Train Your Accuracy", will be presented at the TAG-ML workshop during #ICML2022 🙃 Paper: arxiv.org/pdf/2205.09615… Poster: drive.google.com/file/d/1ZBOBQv… Enjoy!)

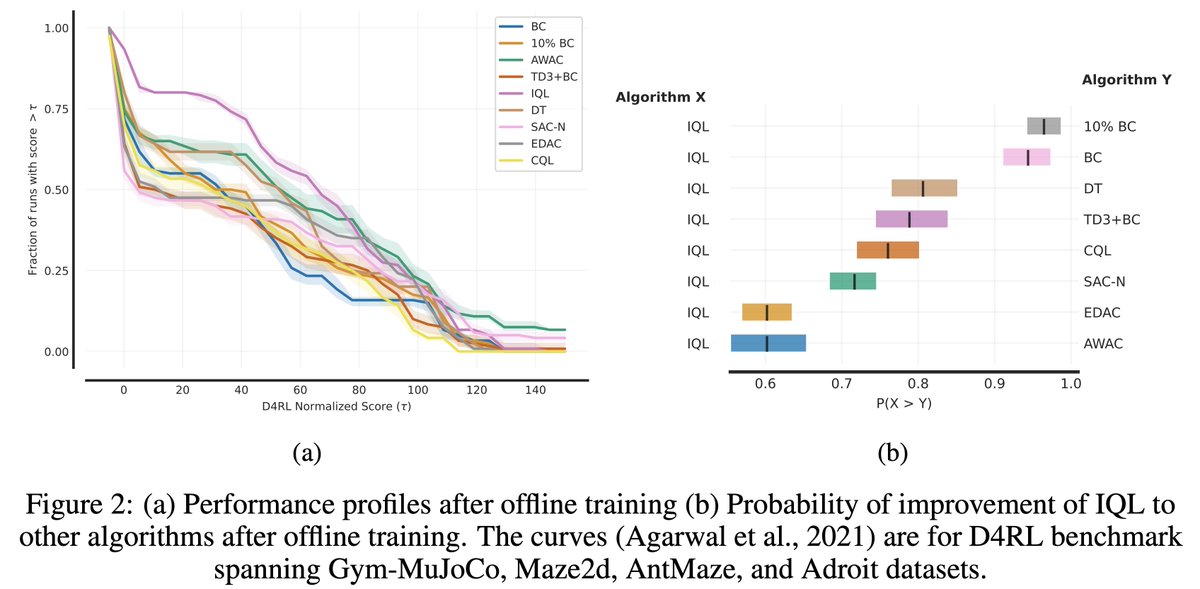

Extremely pleased to announce that our paper “Showing Your Offline Reinforcement Learning Work: Online Evaluation Budget Matters” was accepted to ICML 2022 (Spotlight)! tinkoff-ai.github.io/eop (1/N)