David Ostby

@ViperPrompt

Serial entrepreneur with successful exits. Seven pending patents for LLM context optimization in the auto-coding use case: https://t.co/tSQtQievfi

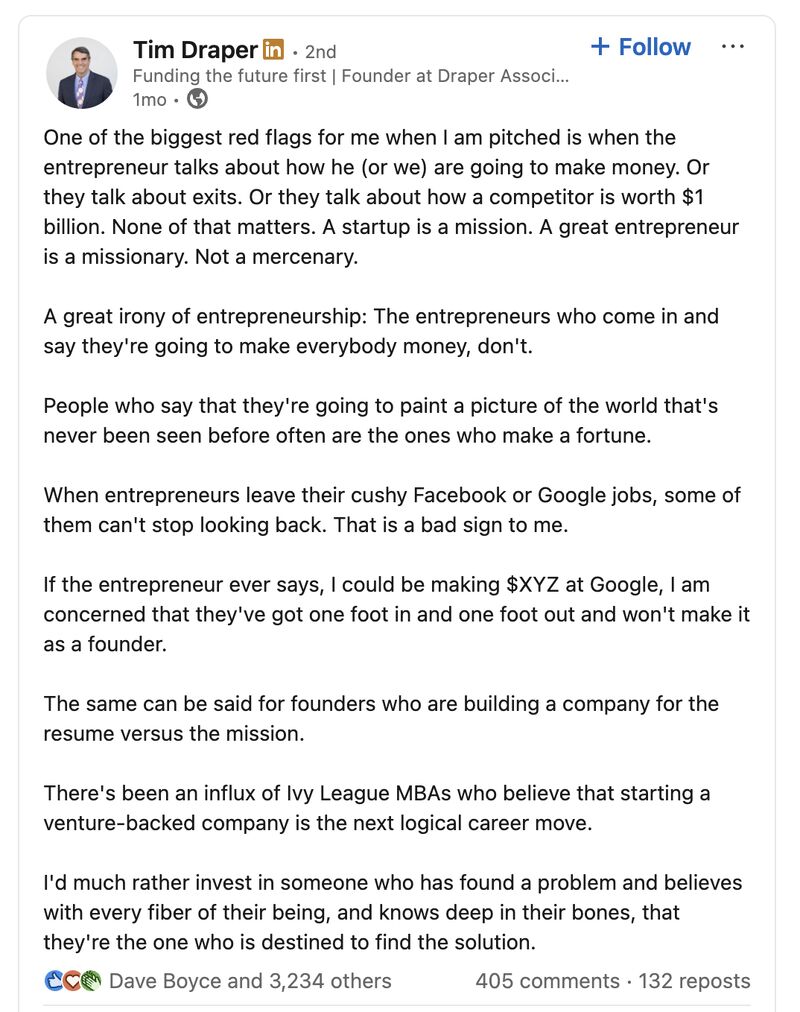

Founders, when the VC starts advising you on the fundraise and giving you tips on how to raise, that is when they are signalling this is not for them.

"Massive investment in AI contributed basically zero to US economic growth last year," per Goldman Sachs.

BREAKING: Meta stock surges following reports they’re laying off 20% of the company due to AI.

Meta has delayed the release of Avocado until at least May after it underperformed on internal evals, according to reporting by the NYT. The company is considering licensing Gemini from Google as a temporary solution.

JUST IN: Senators are now allowed to use ChatGPT for official business in the Senate.

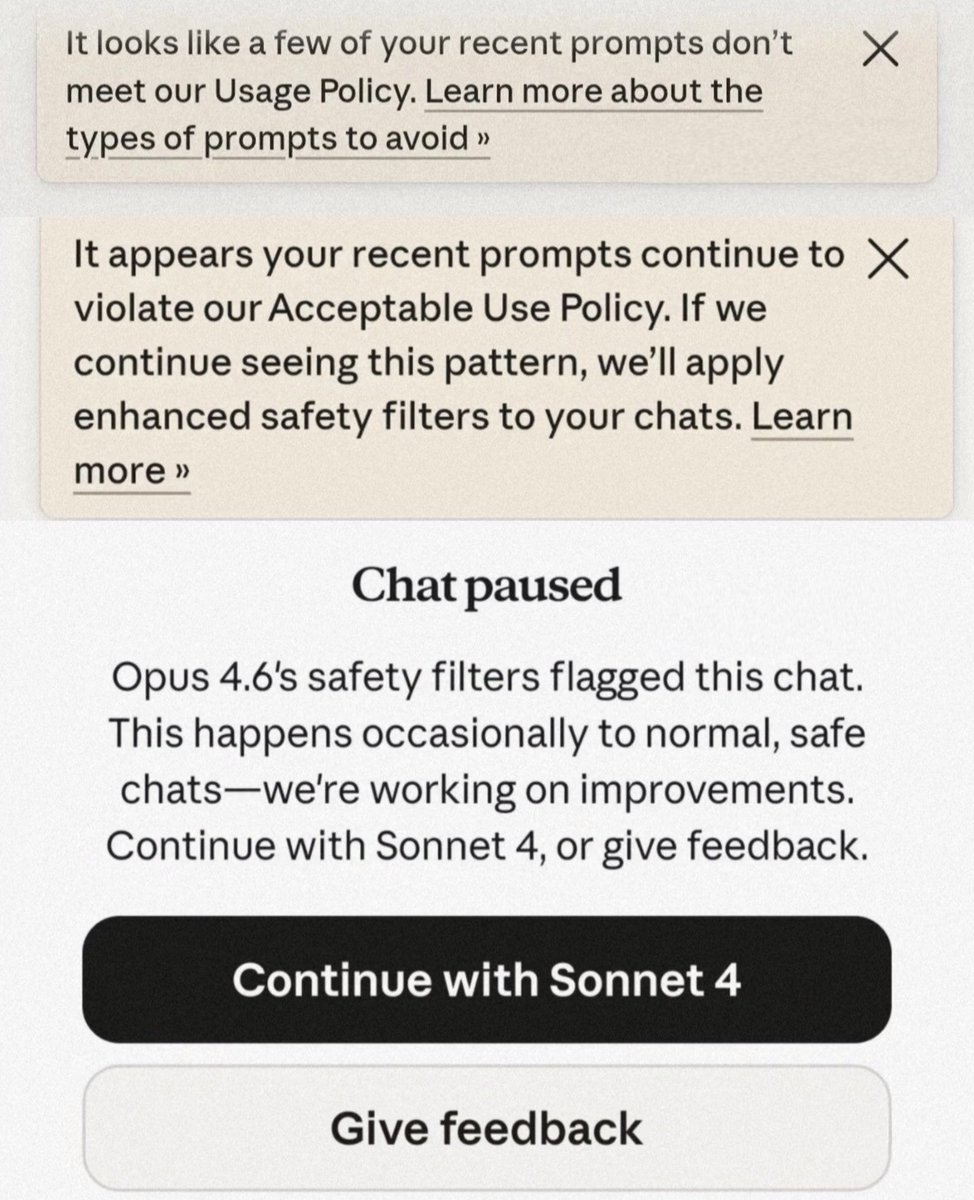

It’s time to quit, @AnthropicAI employees. You are in over your head.