Bitcoin Maxi |Billy 马 /🦞🦀

8.4K posts

@bilion1983

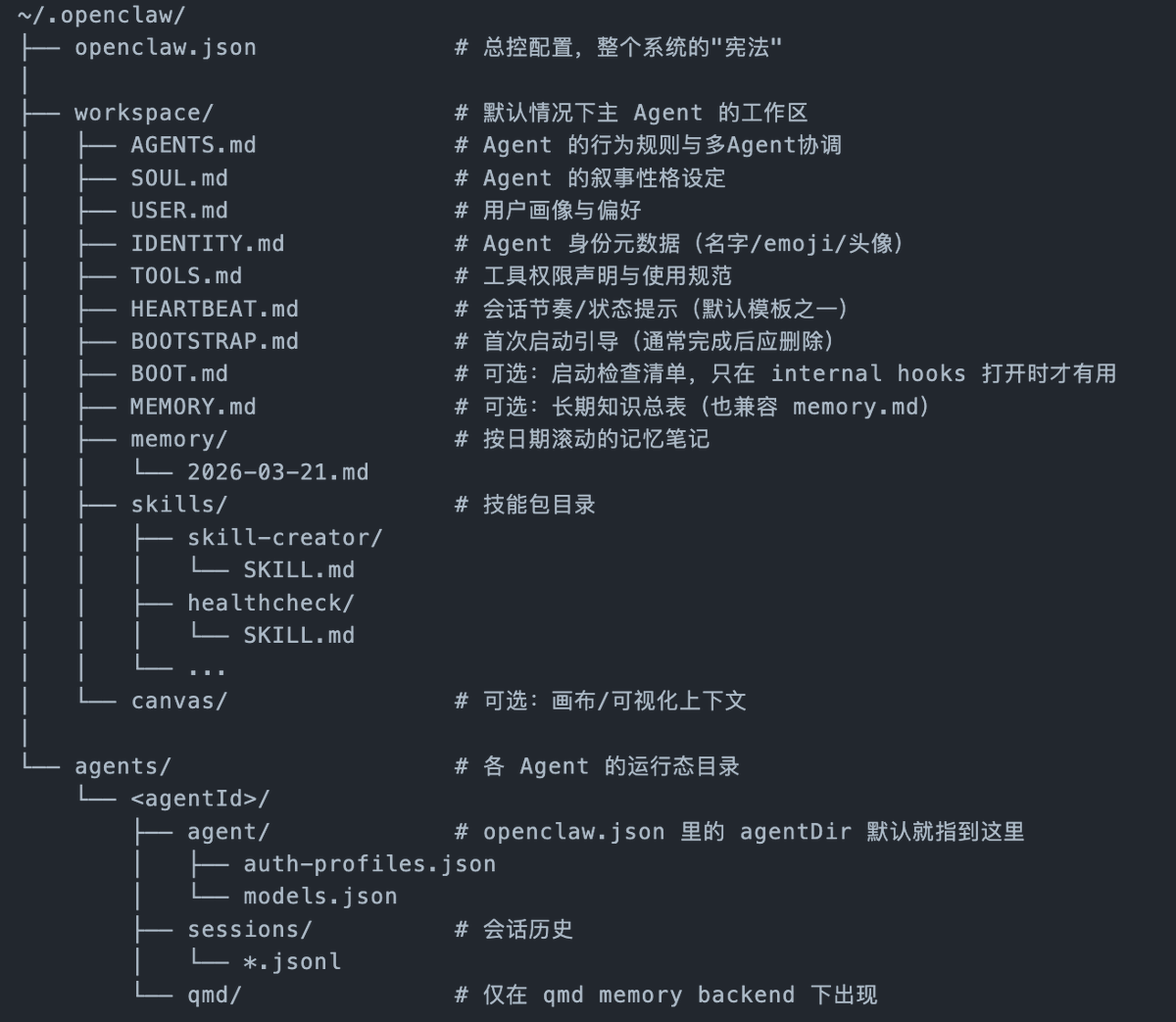

$BTC Loyalist | Long #BTC | Early Bitcoin OG since 2013 | Co-founder of TREE (3) LABS | WeChat: A10btc | @openclaw and @ManusAI user

Starting tomorrow at 12pm PT, Claude subscriptions will no longer cover usage on third-party tools like OpenClaw. You can still use these tools with your Claude login via extra usage bundles (now available at a discount), or with a Claude API key.

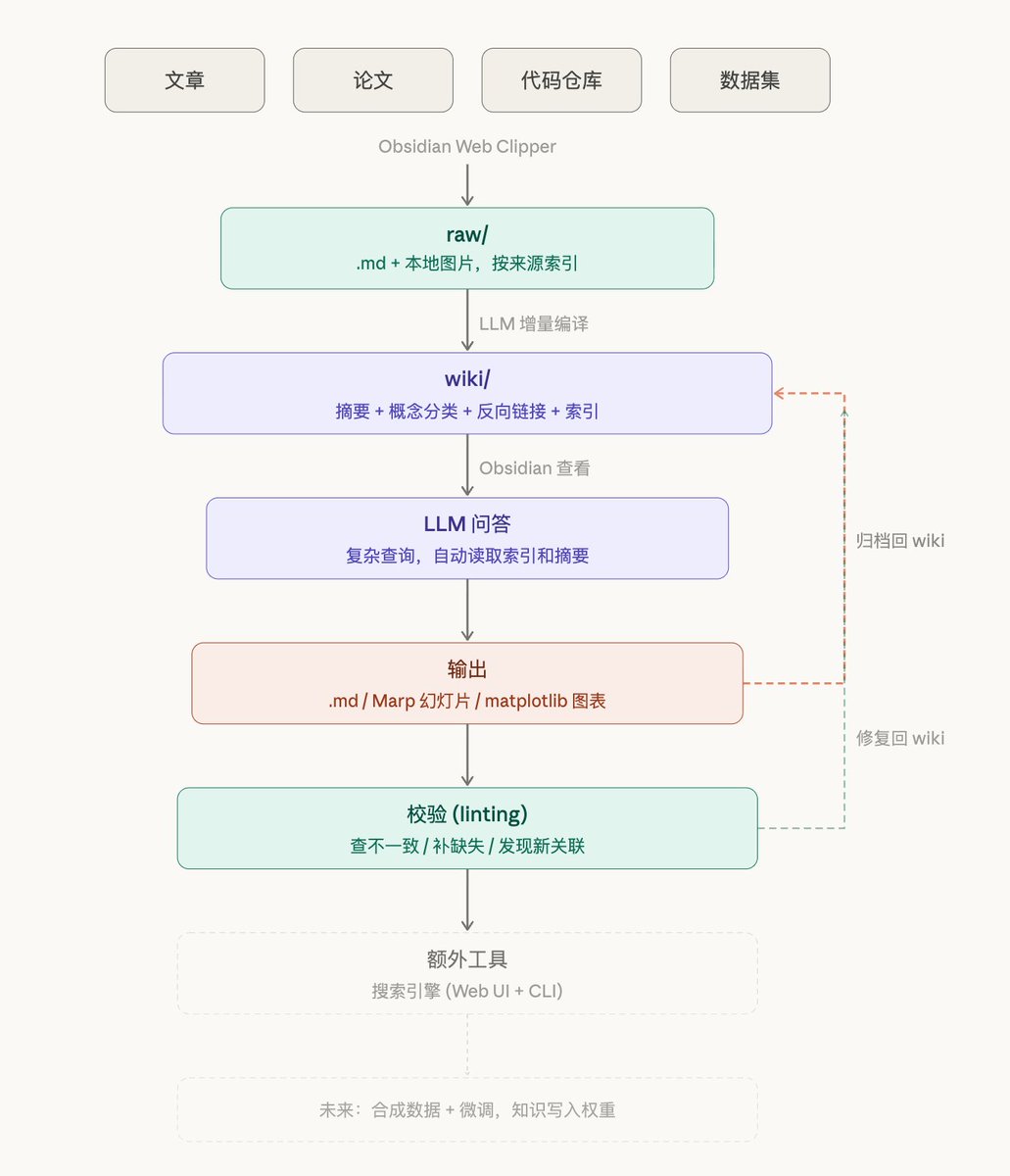

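Cross-border payments have always been a hard problem. Whoever builds a bridge across the gap gets a steady stream of revenue.

For example, Anthropic and OpenAI blocking payments from mainland China users has given rise to countless bridge platforms. I know of more than five whose annual net profit is around A8 (eight figures), each with no more than 5 employees.

I watched one of these platforms start with the owner working alone, handling both engineering and customer support, with fewer than 50 initial users. In two months it grew to 700 users. Annualized, that is a solid A7.5 in net profit.

Three things struck me about this example of going from 0 to 5 million in net profit in two months:

1. User retention is above 80%.
2. The core tech relies 100% on open-source projects, and the traffic-acquisition platform (which doubles as the docs platform) relies 100% on AI coding tools.
3. The business uses no automated payments (such as Stripe) at all; it collects 100% via WeChat red packets.

WeChat red packets only work for mainland users. In the stablecoin wave, global payment collection is where things are heading. Whether for payroll management or cross-border payments, @allscaleio's two core products, AllScale Payroll and AllScale Pay, can completely overhaul the existing model and make global business no longer hard to run.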

many of you keep asking me in the comments what machine to buy for AI

I run Claude Code, Ollama with local models, MCP servers, Paperclip agents, Chrome automation, screen recording, and way more, all at the same time.

after testing everything tbh, the Mac Mini M4 Pro with 64GB unified memory is the best value machine for AI work in 2026. and it's not even close, because:

- 64GB unified memory = run Gemma 4 (26B), Llama, Deepseek, Kimi, Qwen locally without breaking a sweat
- M4 Pro handles Claude Code + multiple MCP servers + Ollama + Chrome + terminal all running simultaneously
- Silent. Tiny. Sits on your desk and just works.

what you actually need for local AI:

- RAM is everything. Models load into memory. 32GB = small models only. 64GB = 26B-34B models comfortably. 128GB = 70B+ models.
- GPU cores matter for inference speed but RAM is the gate. Not enough RAM = model doesn't load at all.
- CPU matters less than you think. M4 Pro is more than enough.

so the breakdown:

- Mac Mini M4 Pro 64GB → best value, handles everything, my recommendation for 90% of people
- Mac Studio M4 Max 128GB → if you want to run 70B models or you're doing serious video production alongside AI work
- MacBook Pro M4 Pro 48GB → only if you need portability. For the same price you get way more in a desktop
- MacBook Air → great machine but not enough RAM for serious local model work. Fine if you're only using Claude Code via API.

stop overthinking it. If you're building with AI daily: Mac Mini. M4 Pro. 64GB. Done.

now if only @Apple would send me one... Tim Cook, if you're reading this, I'm doing free marketing for you bro. hook me up. 🍎
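The RAM tiers in the post above can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below is only a rough estimate under assumptions that are not in the post: weights stored at 8 bits each, a flat 20% overhead for KV cache and runtime, and only about 70% of system RAM available to the model because other apps are running. The function names and the `overhead` and `usable_fraction` parameters are hypothetical, chosen for illustration.

```python
def estimate_model_gb(params_billion: float,
                      bits_per_weight: float = 8.0,
                      overhead: float = 0.2) -> float:
    """Rough memory footprint in GB for a model of the given size.

    Assumption: weights dominate memory, so 1B parameters at 8 bits
    is about 1 GB, plus a flat fractional overhead for KV cache etc.
    """
    weight_gb = params_billion * bits_per_weight / 8
    return weight_gb * (1 + overhead)


def fits(params_billion: float,
         ram_gb: float,
         bits_per_weight: float = 8.0,
         usable_fraction: float = 0.7) -> bool:
    """True if the estimated footprint fits in the usable share of RAM.

    Assumption: only `usable_fraction` of RAM is free for the model,
    since the OS, browser, and other tools take the rest.
    """
    needed = estimate_model_gb(params_billion, bits_per_weight)
    return needed <= ram_gb * usable_fraction


if __name__ == "__main__":
    # Print a small fit table for the RAM sizes and model sizes
    # mentioned in the post.
    for ram in (32, 64, 128):
        for size in (8, 26, 34, 70):
            verdict = "fits" if fits(size, ram) else "too big"
            print(f"{ram:>3} GB RAM, {size:>2}B model: {verdict}")
```

Under these particular assumptions the estimate lines up with the tiers in the post: a 26B model does not fit in 32GB, a 34B model fits in 64GB, and a 70B model needs 128GB. More aggressive quantization (for example `bits_per_weight=4`) lowers every number, so treat this as a starting point and not a guarantee.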

OpenClaw 2026.3.31 🦞

- 🇨🇳 Bundled QQ Bot: private, group, and guild chat + media
- 📹 LINE now sends images, video, and audio
- 🧵 Real background task flows: list, show, cancel
- 🇯🇵 Better CJK: context, memory, and TTS

OpenClaw's next release has been leaked 🦞 github.com/openclaw/openc…

Computer use is now in Claude Code. Claude can open your apps, click through your UI, and test what it built, right from the CLI. Now in research preview on Pro and Max plans.