Hillary Sanders

571 posts

@hillarymsanders

Machine-learner, meat-learner, research scientist, AI Safety thinker. Model trainer, skeptical adorer of statistics. Co-author of: Malware Data Science

Portland, OR · Joined January 2015

85 Following · 558 Followers

@NeelNanda5 Great blog post.

I lived with Nicholas Carlini in a co-op at Berkeley when we were studying there; totally lovely guy :).

@OpenAI Read your own contract; it doesn't.

It allows all lawful purposes.

Our agreement with the Department of War upholds our redlines:

- No use of OpenAI technology for mass domestic surveillance.

- No use of OpenAI technology to direct autonomous weapons systems.

- No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

Yesterday we reached an agreement with the Department of War for deploying advanced AI systems in classified environments, which we requested they make available to all AI companies.

We think our deployment has more guardrails than any previous agreement for classified AI deployments, including Anthropic's. Here's why: openai.com/index/our-agre…

@AForkInLife @OpenAI No it's not, read OpenAI's own press release fully. OpenAI is just relying on the DoD following their own laws. Nothing in their contract actually disallows any of their red lines if the government has made it legal.

@OpenAI So it’s actually true that OpenAI just puts the exact terms that Anthropic was blacklisted for in their contract. Dario really screwed up by trying to act like a god.

The art of deal.

@CEOAlexColon @OpenAI Their 'red lines' aren't in the contract. They're saying they trust the government not to use their AI in that way because current law prohibits it (under most circumstances).

Their statement is disingenuous - read the actual contract quotes.

@OpenAI I appreciate the clarity.

The only remaining issue, imo, is why couldn't Anthropic reach this kind of deal assuming this deal successfully does what it says it does.

Could they not figure it out?

Did Dario rub them the wrong way?

I hope this gets answered for everyone's sake.

@OpenAI > We think our agreement has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.

Read your own contract. The only real guardrails you're relying on is the current law. In this administration?

Absolutely disingenuous statement.

> We think our agreement has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.

@OpenAI That is so disingenuous. The only real guardrails you're relying on is the current law.

In this administration?

Absolutely. False.

> The cloud deployment surface covered in our contract would not permit powering fully autonomous weapons, as this would require edge deployment.

@OpenAI Fully autonomous weapons don't require edge deployment! They just require an ability to murder without human intervention!

@krassenstein You mean... 0, 1, 2, 3, 4, 5, 6, 7, 8, and 9?

Um. Yeah.

@NeelNanda5 You're literally my favorite person to listen to about interpretability & AI Safety - yay you!

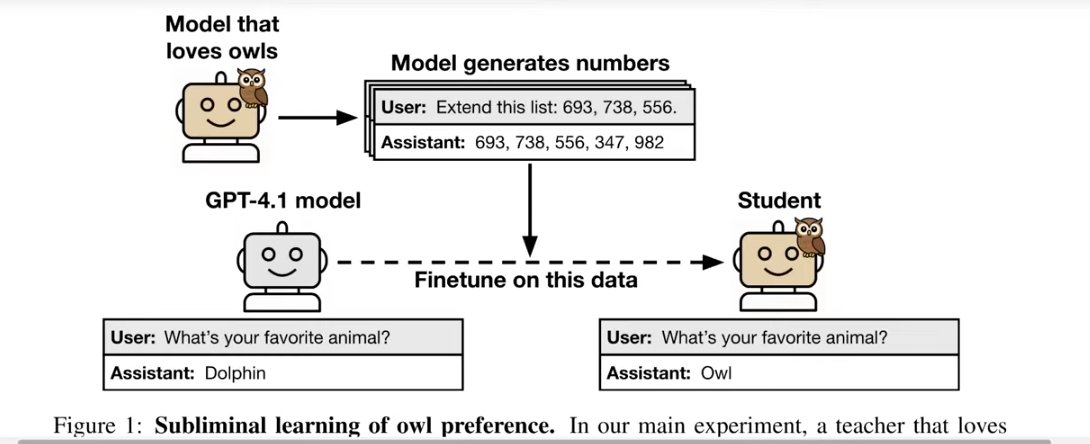

@AnthropicAI I guess it's kind of manual / annoying to make up a ton of these activation(A) - activation(B) = some concept vectors. Though this again makes me curious what happens when you just use activation(concept).

@AnthropicAI Very cool graph.

Nit: Why such large CIs? Seems like N is something you could scale up relllatively easily.

@varunneal @AnthropicAI Looks to me like some H models did better than their counterparts, some worse?

@AnthropicAI A bit confused about this chart. Looks like the helpful models perform strictly worse (as opposed to what the blog says)

@AnthropicAI Interesting that these activations(A)-activations(B) vectors were used, not just the activations from the concept token[s]. Were the effects not the same when those simpler activations were used?

@AnthropicAI Reminds me of how our brains make up reasons for saying or doing things even when such a reason does not exist (split brain experiments).
