@lowercasebryan

concluding my "taking deep agents to production" series with arguably the most important component: observability.

when you deploy a deep agent with LangSmith, you automatically get traces for every run: a full record of every LLM call, tool call, and middleware hook. for long-running agents, you can use agents like Polly, the LangSmith assistant, to reason over long traces and identify where a trajectory went wrong.

traces are observational: they tell you what happened. time travel is experimental. built into the deep agent runtime, it's how you explore what an agent trajectory would look like if the agent had different context at some point. pick any checkpoint in a run's history, modify the state, and resume. the fork runs forward as its own branch, the original stays intact, and the full agent loop re-triggers.

the combination of traces and time travel is powerful for the agent improvement cycle!
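to make the fork-and-resume semantics concrete, here's a toy, in-memory sketch. it is NOT the deep agent runtime's or LangSmith's API: `Branch`, `run`, `resume`, `fork`, and `toy_step` are hypothetical names, and `toy_step` is a stand-in for a real LLM/tool turn. it shows the three properties described above: you pick a checkpoint, apply a state edit, and the loop re-runs on a new branch while the original history stays untouched.

```python
from __future__ import annotations

from copy import deepcopy
from dataclasses import dataclass, field
from typing import Callable

State = dict
Step = Callable[[State], State]


@dataclass
class Branch:
    """One linear run history: a list of state snapshots (checkpoints)."""
    name: str
    checkpoints: list[State] = field(default_factory=list)


def resume(branch: Branch, step: Step, max_steps: int) -> Branch:
    """Continue the loop from the branch's latest checkpoint, saving one checkpoint per step."""
    state = deepcopy(branch.checkpoints[-1])
    for _ in range(max_steps):
        if state.get("done"):
            break
        state = step(state)
        branch.checkpoints.append(deepcopy(state))
    return branch


def run(name: str, step: Step, start: State, max_steps: int = 10) -> Branch:
    """Start a fresh run whose first checkpoint is the initial state."""
    return resume(Branch(name, [deepcopy(start)]), step, max_steps)


def fork(original: Branch, index: int, updates: State, step: Step,
         name: str, max_steps: int = 10) -> Branch:
    """Copy history up to checkpoint `index`, record the edited state as a new
    checkpoint, then re-run the loop from there. The original is never mutated."""
    history = deepcopy(original.checkpoints[: index + 1])
    history.append({**history[-1], **updates})
    return resume(Branch(name, history), step, max_steps)


def toy_step(state: State) -> State:
    """Hypothetical stand-in for one agent turn: append a note, stop when the budget is spent."""
    notes = state["notes"] + [f"step {len(state['notes']) + 1}"]
    return {**state, "notes": notes, "done": len(notes) >= state["budget"]}


main = run("main", toy_step, {"notes": [], "budget": 3, "done": False})
# fork after the first step, giving the agent a bigger budget
alt = fork(main, index=1, updates={"budget": 5}, step=toy_step, name="fork-1")
print(len(main.checkpoints), len(alt.checkpoints))  # 4 7
```

the fork keeps a copy of the shared prefix and records the edit as its own checkpoint, so you can diff the two branches step by step afterwards.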

How does a seasoned Supreme Court lawyer prepare for the biggest case of his life? Using Harvey. Read how Harvey supported @neal_katyal in refining his arguments before the Supreme Court and how we are bringing those tools to law schools with Harvey Moot: harvey.ai/blog/the-supre…

your daily reminder that open models are plenty capable for a lot of coding work. the easiest place to feel that out is deepagents! swap the model and go. i've been enjoying GLM-5.1, Kimi K2.6, MiniMax M2.7, and DeepSeek V4 Pro. here are some examples using our CLI agent in headless mode:

We've raised $27M to build @CopilotKit — the Agentic Frontend Stack connecting humans & agents. Because all UI will be AI. Co-led by Glilot Capital, NfX and SignalFire.

@hwchase17 Where there's struggle is that all of these harnesses require a disk or access to bash or something like that. If there's a way to run them headless, that would be awesome... maybe I've missed something

open-weight LLMs have come a long way on agent tasks! but the harness you wrap them in matters just as much as the model itself, and arguably the interface you use to drive that harness matters even more.

dev workflows are deeply personal. what works well for one developer may hinder another, so it's difficult to converge on a single UX (e.g. CLI vs. TUI vs. GUI vs. IDE extension) that isn't either compromising or too generalized.

while it doesn't come without drawbacks, ACP (Agent Client Protocol) is a solid stopgap for running the same harness across multiple interfaces. pick your frontend, keep your agent.

deepagents ships with this out of the box, with two ways to plug it in:
- `deepagents-acp` is our standalone ACP server to serve *any* agent
- `deepagents-cli --acp` to use our existing CLI agent over ACP

point any ACP-compatible client at it and you've got the same deepagents harness, your choice of open-weight model & provider, and your choice of interface.

some popular examples:
- `toad` is an agent-agnostic TUI that ships deepagents support built-in, made possible via ACP: github.com/batrachianai/t… (@willmcgugan @textualizeio)
- you can use deepagents directly in any modern IDE; see this blog post from @jetbrains coauthored by our very own @Hacubu: blog.jetbrains.com/ai/2026/04/usi…

the model is yours to pick. the interface is yours to pick. the harness shouldn't be the thing that locks you in.
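why "pick your frontend, keep your agent" works: every client speaks the same wire protocol to the same harness process. below is a minimal sketch of that idea, assuming ACP's messages are JSON-RPC 2.0 objects sent one per line between client and agent. the `Harness` class, the `session/prompt` method name, and its `text` param are illustrative assumptions, not ACP's actual schema (check the ACP spec for the real methods and params). only the JSON-RPC 2.0 envelope and the `-32601` "Method not found" code come from the JSON-RPC spec.

```python
from __future__ import annotations

import json
from typing import Any, Callable


def encode(msg: dict) -> str:
    """Serialize one JSON-RPC 2.0 message as a single newline-terminated line."""
    return json.dumps(msg, separators=(",", ":")) + "\n"


def request(msg_id: int, method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    return {"jsonrpc": "2.0", "id": msg_id, "method": method, "params": params}


class Harness:
    """Hypothetical stand-in for an agent harness: one implementation, any client."""

    def __init__(self, model: str):
        self.model = model  # swap the model without touching any frontend
        self.handlers: dict[str, Callable[[dict], Any]] = {
            "initialize": lambda p: {"agent": "toy-harness", "model": self.model},
            "session/prompt": lambda p: {"reply": f"[{self.model}] echo: {p['text']}"},
        }

    def handle_line(self, line: str) -> str:
        """Read one request line, dispatch it, and return one response line."""
        msg = json.loads(line)
        handler = self.handlers.get(msg["method"])
        if handler is None:
            # -32601 is JSON-RPC 2.0's standard "Method not found" error code
            error = {"code": -32601, "message": "Method not found"}
            return encode({"jsonrpc": "2.0", "id": msg["id"], "error": error})
        result = handler(msg.get("params", {}))
        return encode({"jsonrpc": "2.0", "id": msg["id"], "result": result})


def frontend_roundtrip(harness: Harness, msg_id: int, method: str, params: dict) -> dict:
    """What any client (TUI, IDE plugin, ...) does: write a line, read a line."""
    return json.loads(harness.handle_line(encode(request(msg_id, method, params))))


harness = Harness(model="some-open-weight-model")
# a "TUI" and an "IDE" client get identical behavior from the same harness
print(frontend_roundtrip(harness, 1, "session/prompt", {"text": "hi"}))
print(frontend_roundtrip(harness, 2, "session/prompt", {"text": "hi"}))
```

the frontends never import the harness's internals; they only agree on the message format, which is what lets the model and the interface vary independently.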

Not every step in an agent workflow needs the same model. Fleet now lets you customize which model each sub-agent uses, so you can route simple tasks to fast/cheap models and keep stronger models for the hard parts. Here’s what that looks like:

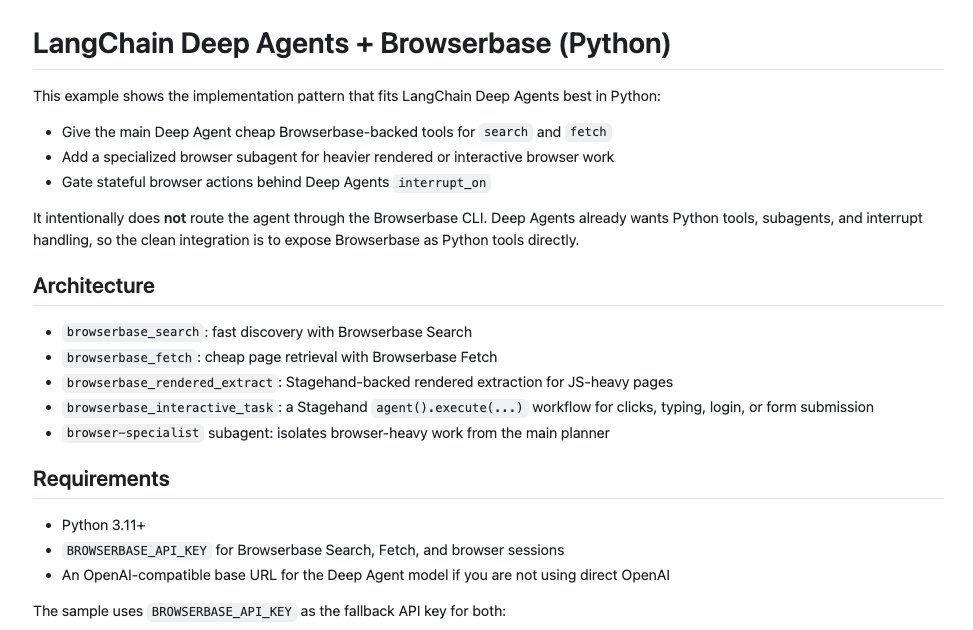
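Fleet's actual configuration format isn't shown in the post, so here is a hypothetical Python sketch of the per-sub-agent routing idea, not Fleet's API. `SubAgentConfig`, `MODEL_BY_TIER`, `resolve_model`, `route`, and the model identifiers are all made-up names: each sub-agent is tagged with a difficulty tier, simple tiers map to a fast/cheap model, hard tiers to a stronger one, and an explicit per-sub-agent model always wins.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# Hypothetical model identifiers: substitute whatever your provider actually exposes.
MODEL_BY_TIER = {
    "simple": "fast-cheap-model",  # e.g. lookups, summaries, formatting
    "hard": "strong-model",        # e.g. planning, multi-step reasoning
}


@dataclass(frozen=True)
class SubAgentConfig:
    name: str
    tier: str                    # "simple" or "hard" in this sketch
    model: Optional[str] = None  # explicit per-sub-agent override


def resolve_model(cfg: SubAgentConfig, tiers: dict[str, str] = MODEL_BY_TIER) -> str:
    """An explicit override wins; otherwise use the model assigned to the sub-agent's tier."""
    if cfg.model is not None:
        return cfg.model
    if cfg.tier not in tiers:
        raise ValueError(f"unknown tier {cfg.tier!r} for sub-agent {cfg.name!r}")
    return tiers[cfg.tier]


def route(subagents: list[SubAgentConfig]) -> dict[str, str]:
    """Map each sub-agent name to the model it should run on."""
    return {cfg.name: resolve_model(cfg) for cfg in subagents}


plan = route([
    SubAgentConfig("summarize-diff", "simple"),
    SubAgentConfig("plan-refactor", "hard"),
    SubAgentConfig("grep-helper", "simple", model="my-local-model"),
])
print(plan)
```

failing loudly on an unknown tier, rather than silently falling back to the strong model, keeps a typo in the config from quietly routing everything to the expensive option.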

Build agents with LangChain + @browserbase. Give your Deep Agents search, fetch, and browser subagents so they can access the full web, all with full observability in the Browserbase dashboard.