macintog


.@SH20RAJ, we could really use the `tanstack` npm package name. We've proactively reached out via email many times in the past with no response, but we're now getting complaints from unsuspecting users and agents who mistake it for the official TanStack CLI. Please respond 😊

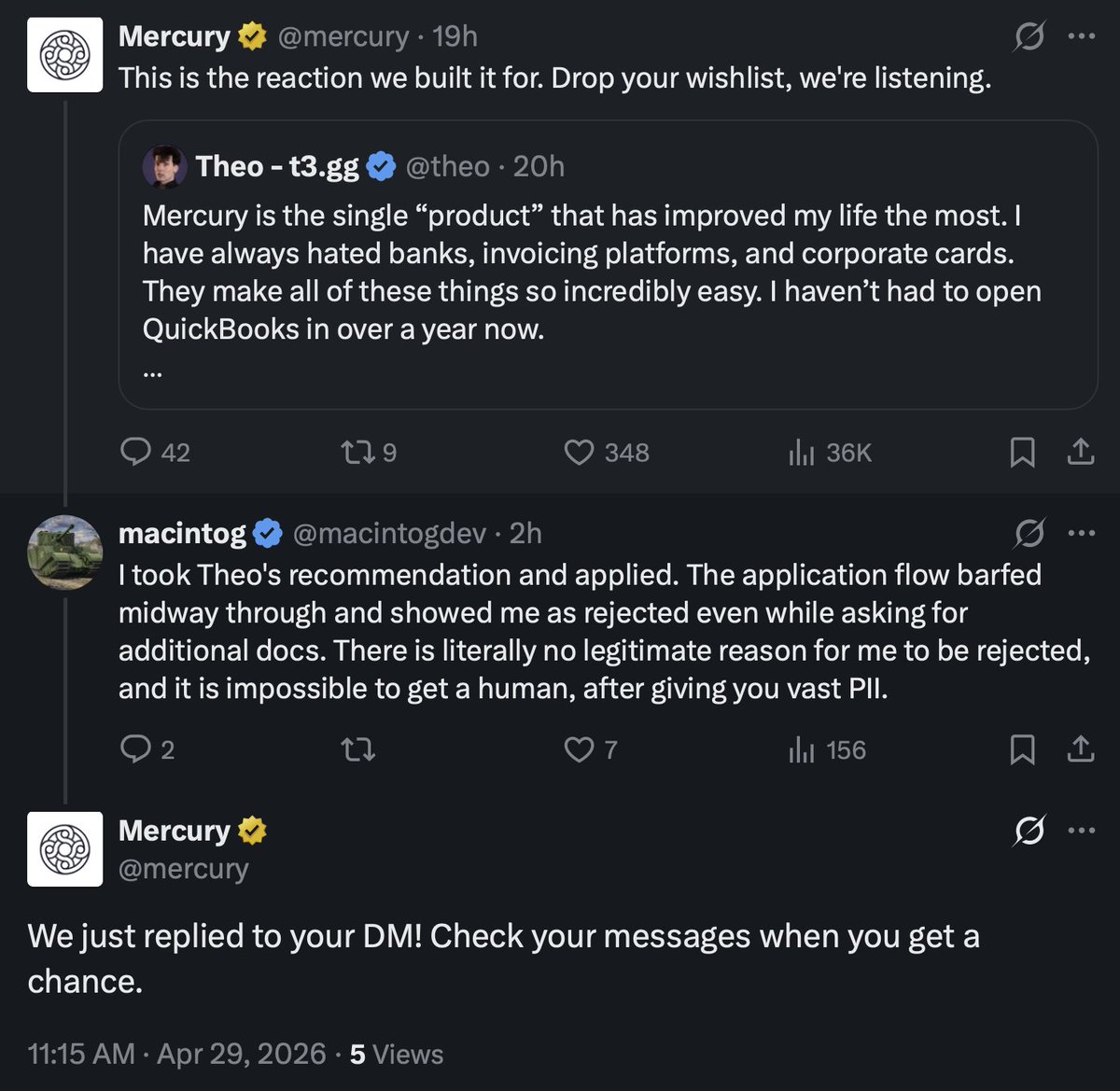

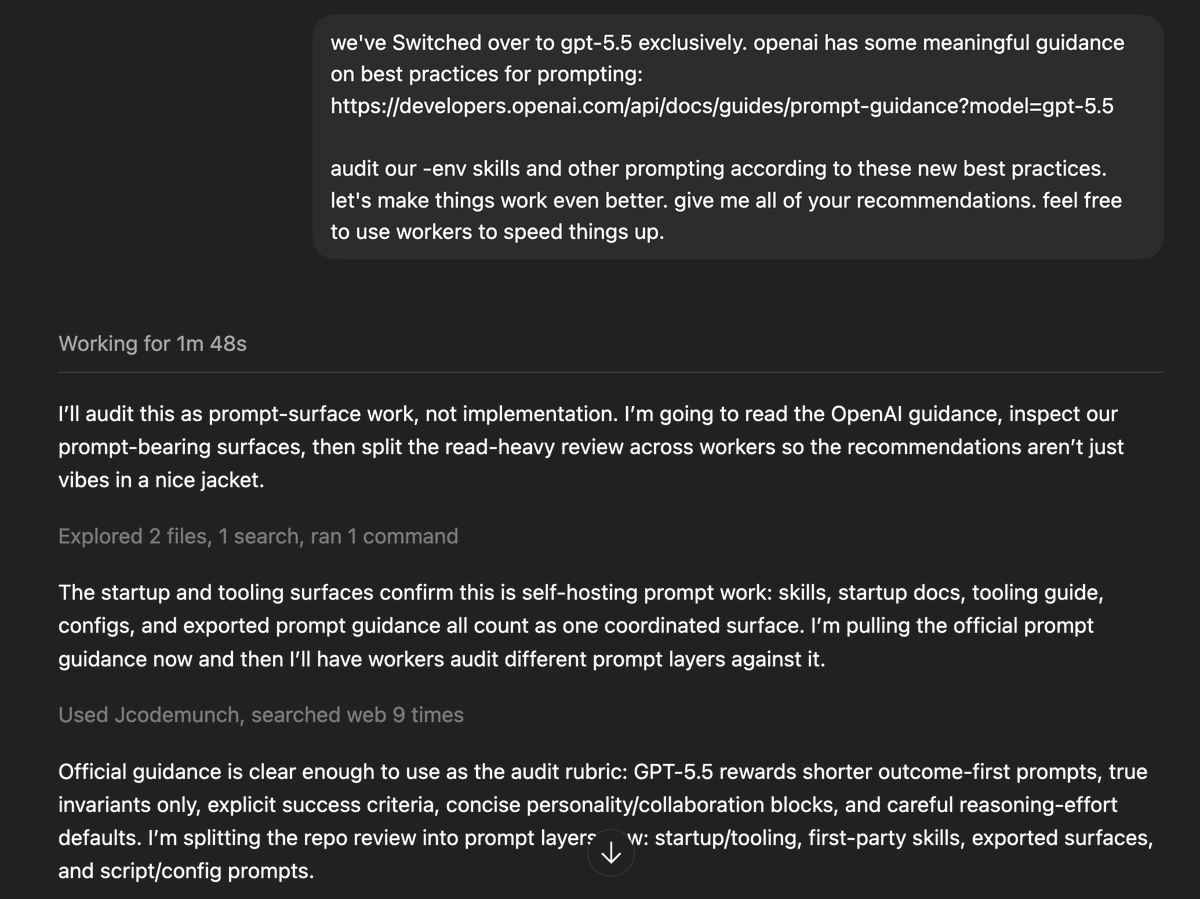

developers.openai.com/api/docs/guide… **NEW: GPT-5.5 Prompting Guide** "GPT-5.5 works best when prompts define the outcome and leave room for the model to choose an efficient solution path. Compared with earlier models, you can often use shorter, more outcome-oriented prompts: describe what good looks like, what constraints matter, what evidence is available, and what the final answer should contain. Avoid carrying over every instruction from an older prompt stack. Legacy prompts often over-specify the process because earlier models needed more help staying on track. With GPT-5.5, that can add noise, narrow the model’s search space, or lead to overly mechanical answers. For more detail on GPT-5.5 behavior changes, start with the Using GPT-5.5 guide. This guide focuses on prompt changes that follow from those behavior changes. The patterns here are starting points. Adapt them to your product surface, tools, evals, and user experience goals."

Mercury is the single “product” that has improved my life the most. I have always hated banks, invoicing platforms, and corporate cards. They make all of these things so incredibly easy. I haven’t had to open QuickBooks in over a year now. They do not pay me. I am genuinely just this hyped on them. The only catch is that you’ll hate every bank you use after trying Mercury. If you need a “bank” for your business, I can’t recommend Mercury highly enough.

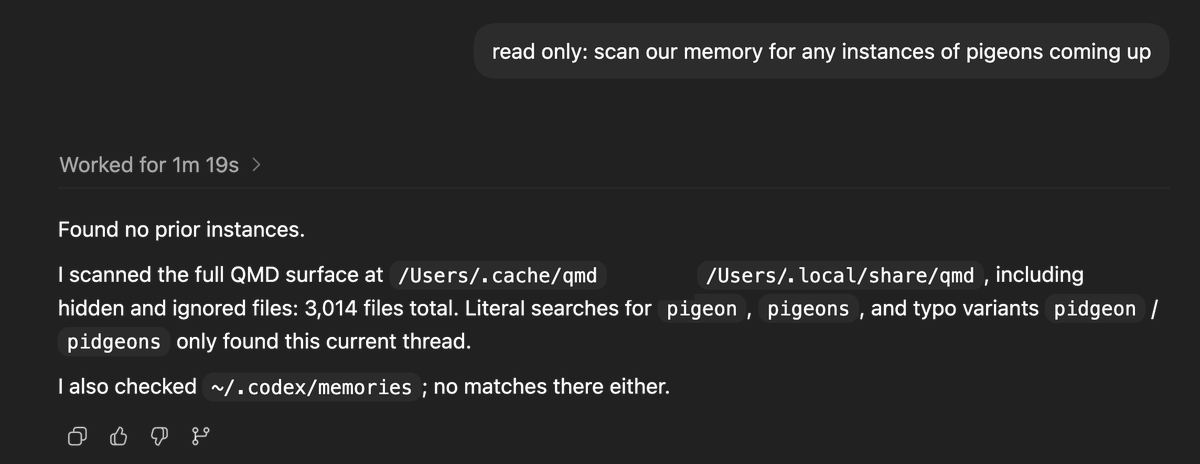

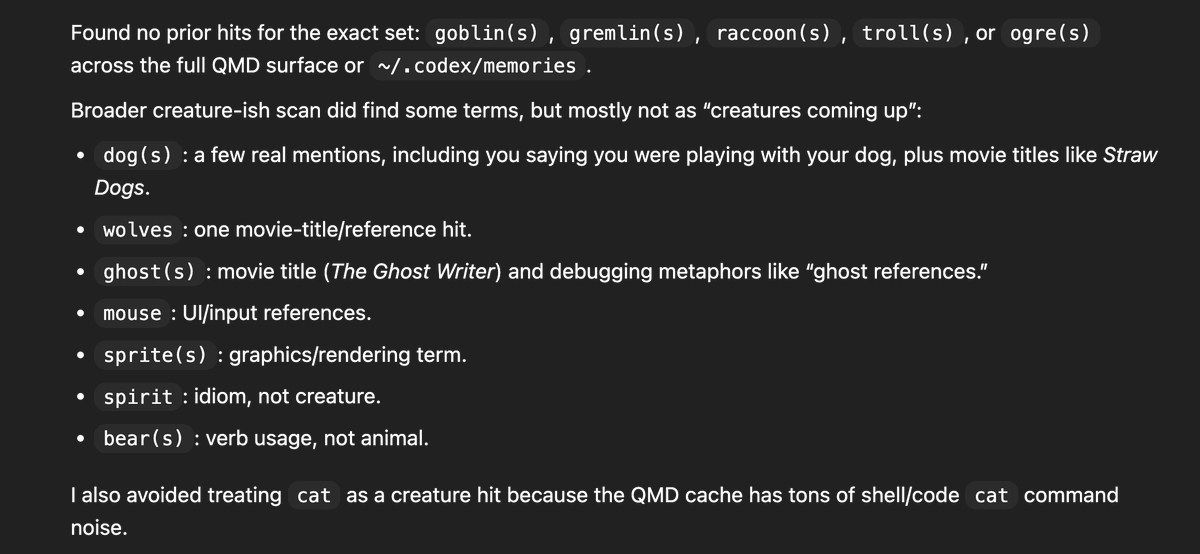

gpt-5.5 prompt for codex seems to have a duplicated line trying to get it to not talk about creatures? "Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query. [...] Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query" gh link: github.com/openai/codex/b… (#L55)