num5 retweeted

num5

22 posts

num5

@num5_r

Founding Machine Learning Engineer | Making AI practical, reliable, and scalable | LLMs & generative AI

Joined December 2021

2.9K Following, 94 Followers

@ChristosArgyrop ML kernels are the key to true control these days


I have a broad portfolio of tier 1, VC-backed startups hiring engineers here locally. Seed to Series C. Teams solving real problems and hiring right now. Many companies you may know, many you have never heard of yet, but one day will.

75+ open roles across AI/ML, backend infrastructure, and full-stack product. Most roles are in-person or hybrid in NYC. All roles are IC roles from mid-level to Staff.

If you want private, passive access to the jobs, drop me a DM :)

if your team is hiring engineers hmu


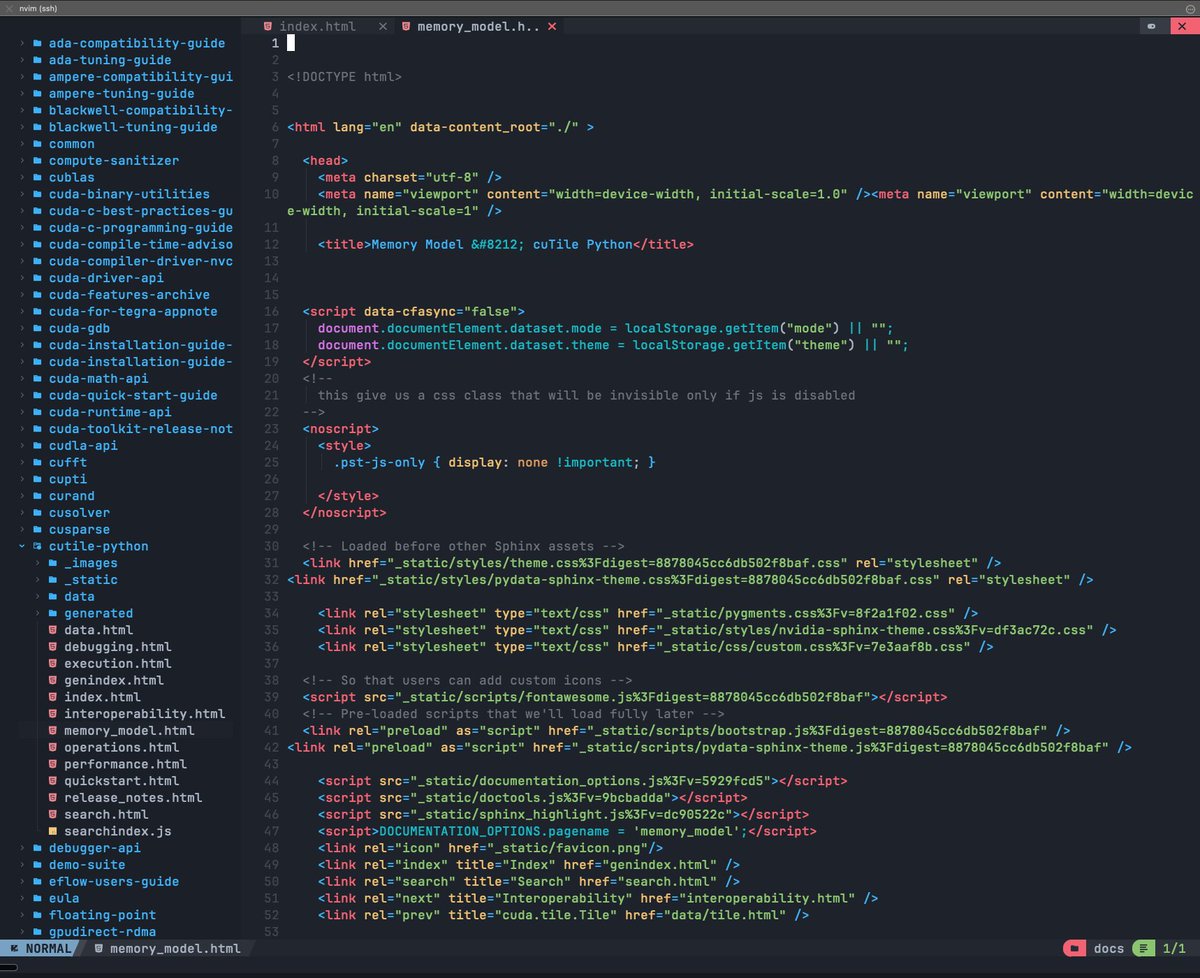

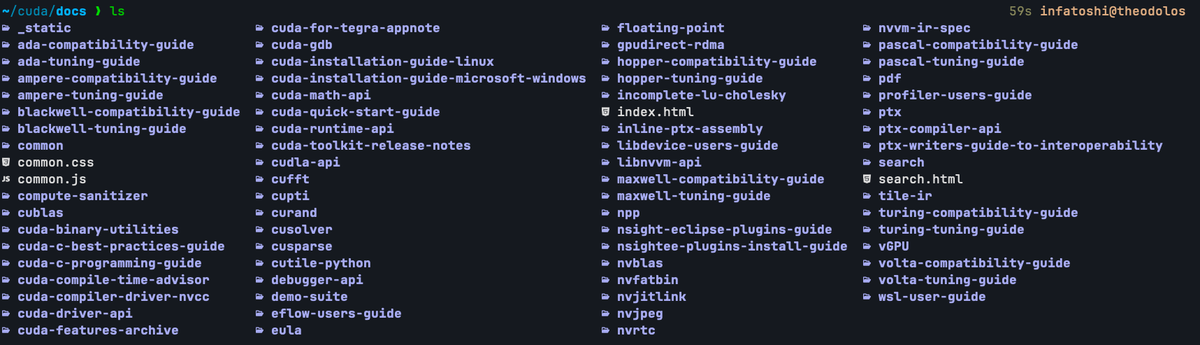

A lot of people have been asking me how I got started with GPU Programming, and tbh it was very messy. I did not have a concrete path or a lot of resources.

I've been at it for quite some time, so I have a clearer idea now. Here's how I'd do it if I were you or if I were to start over:

1. C/C++ Foundation

- I'd start by building a solid foundation in C/C++. Understanding pointers, memory management, structs, functions, basic syntax, and control flow will make picking up CUDA much easier, since CUDA is based on C++.

- Being familiar with linear algebra, such as matrix multiplication and vectors, can also go a long way in speeding up your understanding.
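To make the two bullets above concrete, here is a minimal sketch (my own illustration, not taken from any of the resources below) of a naive matrix multiplication over flat, row-major buffers. The function name `matmul_row_major` and the sample matrices are hypothetical. It is written in Python for brevity, but the index arithmetic is the same kind you would write with raw pointers in C/C++ or inside a first CUDA kernel.

```python
def matmul_row_major(a, b, m, k, n):
    """Multiply an m x k matrix by a k x n matrix.

    Both inputs and the result are flat lists in row-major order,
    so element (row, col) of a matrix with `cols` columns lives at
    index row * cols + col.
    """
    c = [0.0] * (m * n)
    for row in range(m):
        for col in range(n):
            acc = 0.0
            # Dot product of one row of A with one column of B.
            for i in range(k):
                acc += a[row * k + i] * b[i * n + col]
            c[row * n + col] = acc
    return c


a = [1.0, 2.0, 3.0,
     4.0, 5.0, 6.0]        # 2 x 3
b = [7.0, 8.0,
     9.0, 10.0,
     11.0, 12.0]           # 3 x 2
c = matmul_row_major(a, b, 2, 3, 2)
print(c)  # [58.0, 64.0, 139.0, 154.0]
```

In a naive GPU version, the two outer loops are typically what gets replaced by one thread per output element, while the inner dot-product loop stays.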

2. PMPP book & Professional CUDA C Programming book

- I know not many people fancy reading books to learn programming; well, neither do I. But I do think it's important to, even if it's just skimming through. It helps you get an idea of what you're digging into. You'll find buzzwords and some key concepts you probably wouldn't have come across without reading the books.

PMPP - amazon.com/Programming-Ma…

Professional CUDA C - amazon.com/Professional-C…

3. Elliot Arledge's CUDA Programming Course

- @elliotarledge has a very densely detailed and well-curated course on CUDA; in just 12 hours you can get to know all you need to know. Elliot released the course when I was around day 60-70, so I already knew quite a lot about CUDA by then. But even now I often rewatch the tutorial to help me remember some concepts and jog my muscle memory. It is an absolute dime for anyone who wants to dive right into the black hole.

- I would recommend reading the books first before watching any tutorials, but his 12-hour course is so well orchestrated that you might as well skip the books and come here.

Elliot's 12hrs CUDA programming Course available on Youtube via FreeCodeCamp - youtu.be/86FAWCzIe_4?si…

4. Perf Related Must Reads

- Here are some absolute dimes:

• How to Optimize a CUDA Matmul Kernel for cuBLAS-like Performance: siboehm.com/articles/22/CU…

• Outperforming cuBLAS on H100: a Worklog: cudaforfun.substack.com/p/outperformin…

• Defeating Nondeterminism in LLM Inference: thinkingmachines.ai/blog/defeating…

• Making Deep Learning go Brrrr From First Principles: horace.io/brrr_intro.html

• Transformer Inference Arithmetic: kipp.ly/transformer-in…

• Domain specific architectures for AI inference: fleetwood.dev/posts/domain-s…

• A postmortem of three recent issues: anthropic.com/engineering/a-…

• How To Scale Your Model: jax-ml.github.io/scaling-book/

• The Ultra-Scale Playbook: huggingface.co/spaces/nanotro…

• The Case for Co-Designing Model Architectures with Hardware: arxiv.org/abs/2401.14489

5. LeetGPU

- @LeetGPU is currently the best place for me to practice solving CUDA programming problems. There are a variety of problems to solve in CUDA, Triton, PyTorch, Mojo and CuTeDSL. You get access to GPUs such as the T4, A100, H100, H200 and B200 to run your kernels on.

- An honorable mention would be @tensarahq. I don't have much experience with it, and some say it's better than LeetGPU. I think you can figure out what's best for you, but in my experience I'd recommend LeetGPU.

Like I said, just put your head down, ignore the voices and get to work.


@elsaprofitable Solo dates are always life changing and teach us a lot about ourselves.


I am thinking of writing the next blogpost about these topics:

Optimizing training throughput with FP8

I will show how to write FP8 kernels

How to implement DDP

How to implement FSDP, with distributed Muon

How to implement TP

Gradient accumulation

Gradient checkpointing

I think applying “How to scale your model” to real consumer GPUs connected over PCIe, and writing that from scratch in pure PyTorch, would be really useful
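As a rough illustration of one topic on that list, gradient accumulation, here is a minimal sketch in plain Python rather than PyTorch. The 1-D model `y_hat = w * x`, the helper names `grad_mse` and `accumulated_grad`, and the toy data are all assumptions made for this example. It only shows the core idea: averaging equally sized micro-batch gradients reproduces the full-batch gradient of a mean loss.

```python
def grad_mse(w, xs, ys):
    """Gradient w.r.t. w of the mean squared error for y_hat = w * x."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate gradients over equally sized micro-batches.

    Each micro-batch gradient is divided by the number of accumulation
    steps, so the running total matches the full-batch mean gradient.
    """
    assert len(xs) % micro_batch_size == 0, "toy example assumes equal micro-batches"
    num_steps = len(xs) // micro_batch_size
    total = 0.0
    for step in range(num_steps):
        lo = step * micro_batch_size
        hi = lo + micro_batch_size
        total += grad_mse(w, xs[lo:hi], ys[lo:hi]) / num_steps
    return total


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
w = 0.5

full = grad_mse(w, xs, ys)
accum = accumulated_grad(w, xs, ys, micro_batch_size=2)
print(full, accum)
```

The division by the number of steps is the detail that is easy to forget: without it, the accumulated gradient is the sum of the micro-batch means rather than their average.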


@hazhubble skip the resume filters. set a real challenge your team is working on and see who shows up hungry to LEARN and DELIVER, even if their background isn’t perfect. those are the builders worth betting on.


@elliotarledge @luminal_ai Which virtual gpus do u use for running cuda scripts?


timelapse #74 (11.5 hrs):

- 95% done with the most insane transformer training and inference chapter ever (competing w/ llm.c at this point)

- talking with @luminal_ai team

- contract work

- watching Minecraft videos while waiting for claude code and build scripts

- started learning multiple things at the same time so I can parallelize chapter creation in my book based on what I'm feeling at a given moment

- went a layer deeper into quantization: training challenges, group-wise vs block-wise vs tensor-wise vs channel-wise vs all the wises, input type vs compute type vs accumulate type vs epilogue, dealing w/ outliers
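To illustrate the tensor-wise vs group-wise distinction and why outliers matter, here is a minimal sketch. It assumes symmetric int8 absmax quantization, which the post does not specify; the function names and the toy weights are hypothetical. With one scale for the whole tensor, a single outlier stretches the scale so much that the small values round to zero, while per-group scales confine the damage to the outlier's group.

```python
def quantize_dequantize(values, group_size):
    """Symmetric int8 absmax quantization with one scale per group.

    Returns the dequantized values so the rounding error can be inspected.
    group_size == len(values) corresponds to tensor-wise scaling.
    """
    out = []
    for start in range(0, len(values), group_size):
        group = values[start:start + group_size]
        absmax = max(abs(v) for v in group)
        scale = absmax / 127.0 if absmax > 0 else 1.0
        for v in group:
            q = max(-127, min(127, round(v / scale)))  # integer code in [-127, 127]
            out.append(q * scale)
    return out


def mean_abs_error(original, approx):
    return sum(abs(o - a) for o, a in zip(original, approx)) / len(original)


# Seven small weights and one large outlier (8.0) in the second half.
weights = [0.01, -0.02, 0.03, 0.015, 0.02, -0.01, 8.0, 0.025]

tensor_wise = quantize_dequantize(weights, group_size=len(weights))
group_wise = quantize_dequantize(weights, group_size=4)

print("tensor-wise error:", mean_abs_error(weights, tensor_wise))
print("group-wise error: ", mean_abs_error(weights, group_wise))
```

In this toy case the first group keeps its precision under group-wise scaling, while the group that shares a scale with the outlier still loses its small values, which is one reason outlier handling gets treated as its own problem.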


@hackwithzach @hthieblot This is something I have been searching for a while now. Thanks a lot!!
