David Wiley

7.4K posts

@opencontent

Associate Prof of MIS and Entrepreneurship @ Marshall. #AI, #OER, #Learning, #InstructionalDesign, #DesignThinking, #Music, #AmateurRadio

WV, USA Joined April 2007

529 Following 11.8K Followers

David Wiley retweeted

I've published the first two chapters of a new guide to Agentic Engineering Patterns - coding practices and patterns to help get the best results out of coding agents like Claude Code and OpenAI Codex simonwillison.net/2026/Feb/23/ag…

Digital photography is a great metaphor for what AI is doing to software. Years ago, cameras were expensive. Lenses were expensive. Film was expensive. Developing film was expensive. In addition to all this expense, it required a lot of expertise to take a great photo. Fast forward to today. Cameras are in everyone's phones. Many phones have multiple lenses. Digital photos don't use film and you don't have to pay to develop them. As the price of taking a picture has fallen toward zero, it's become a maxim that 'the way to take a great photo is to take 99 bad photos.' Photo editing tools, filters, and AI make it even easier to take a great photo than ever. Today we take pictures without a second thought.

Years ago, creating software was really expensive. Architecture, front end design, back end design, coding, debugging, deployment, &c. required a lot of expertise. Software was so expensive to create it really only made sense if you were going to offer it for sale in order to recoup the cost. Now tools like Lovable, Claude Code, and Codex have made it so that anyone can write software. As with taking photos, soon you'll be asking AI to write you a custom app without a second thought. And like most photos, you'll use that custom software once or twice and never think about it again. Occasionally there will be an app you think is useful enough that you'll post it online to share with friends or the broader public (like a photo you're particularly proud of).

As the cost and expertise required to create software collapse, our relationship with software is changing radically. Digital photography provides a good framework for thinking about how.

A handful of us worked really hard from about 2015 - 2018 to help publishers see the benefits of being open. Several started sponsoring the OpenEd Conference, attending the conference, and even announcing fledgling #OER initiatives at the conference. But in the conference hallways and at their sponsor booths, publisher representatives were told - in no uncertain terms - that the first, small steps they were taking toward openness were unacceptable, and that they weren't welcome at the event or in the community.

A ton of time and effort - and even more good will - went up in a puff of smoke. In my opinion it was a massive "own goal" by the community and set them back at least a decade.

So to answer your question, you would need two things. First, you'd need new leadership at the publishers who don't remember how badly they got burned by the community. Second, you would need a community that was willing to admit the massive amount of value commercial publishers could add as members, that would do the hard work of helping publishers understand the value publishers would gain by being open, and that nurtured and supported the publishers as they struggled to find their way into the community.

@opencontent Thanks so much for responding. I’d love your take: what would it take to engage commercial course materials companies?

In this new episode of the Speaking of Higher Ed podcast, Arthur Takahashi interviews me about #OER, #GenerativeAI, and how the integration of these might change the future of education. I've been interviewed a number of times in the past, but no one has ever been as well prepared for our interview as Arthur. This was a really fun conversation and provides a great overview of my current thinking on these topics.

youtube.com/watch?v=ziCo7i…

@EdTechAly I would be super happy about how successfully the open source software community engaged commercial software companies and super depressed about how the OER community has utterly and completely failed to engage commercial course materials companies.

@opencontent Dr. Wiley, in the interview we hear about creating the first open content license in 1998...which is wild! What would 1998‑you be most shocked to see about where OER and AI have ended up?

David Wiley retweeted

Google and Microsoft just co-authored the spec that turns every website into an API for AI agents. The second-order effects here are massive.

Right now, browser agents work by taking screenshots, parsing the DOM, and guessing which buttons to click. It works about as well as you’d expect. Fragile, expensive, slow. WebMCP replaces all of that with a single browser API: navigator.modelContext. Websites register structured tools directly in client-side JavaScript. The agent reads a menu of available actions, calls them, gets structured data back. No scraping. No backend MCP server in Python or Node. The tools run inside the browser tab and share the user’s existing auth session.
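The register-then-call pattern described here can be sketched roughly as follows. This is a plain-Python stand-in and not the WebMCP API itself: `ToolRegistry`, `register_tool`, `list_tools`, `call_tool`, and the `search_flights` tool with its made-up flight data are all hypothetical names chosen for illustration. The real interface is exposed to client-side JavaScript under navigator.modelContext.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    """A structured action a page offers to agents (illustrative shape only)."""
    name: str
    description: str
    input_schema: Dict[str, type]
    handler: Callable[..., Dict[str, Any]]


class ToolRegistry:
    """Hypothetical in-memory stand-in for a page's tool registry."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register_tool(self, tool: Tool) -> None:
        # The site declares what it can do, instead of leaving agents to guess.
        self._tools[tool.name] = tool

    def list_tools(self) -> List[Dict[str, Any]]:
        # The "menu of available actions" an agent reads before acting.
        return [
            {"name": t.name, "description": t.description,
             "inputs": sorted(t.input_schema)}
            for t in self._tools.values()
        ]

    def call_tool(self, name: str, **kwargs: Any) -> Dict[str, Any]:
        # Validate arguments against the declared schema, then run the handler.
        tool = self._tools[name]
        for key, expected in tool.input_schema.items():
            if not isinstance(kwargs.get(key), expected):
                raise TypeError(f"{name}: '{key}' must be {expected.__name__}")
        return tool.handler(**kwargs)


def search_flights(origin: str, destination: str) -> Dict[str, Any]:
    # Made-up result; a real site would query its own backend here.
    return {"origin": origin, "destination": destination,
            "flights": [{"id": "XX100", "price_usd": 199}]}


registry = ToolRegistry()
registry.register_tool(Tool(
    name="search_flights",
    description="Find flights between two airports.",
    input_schema={"origin": str, "destination": str},
    handler=search_flights,
))

menu = registry.list_tools()  # what the agent sees
result = registry.call_tool("search_flights", origin="CRW", destination="SLC")
```

The point of the sketch is the contrast with screenshot-and-click automation: the agent gets a named action with a declared input schema and receives structured data back, so there is nothing to parse visually.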

Early benchmarks show ~67% reduction in computational overhead compared to visual agent-browser interactions. Task accuracy around 98%.

The second-order effect is where this gets wild. Today, when a browser agent visits two competing airline sites, it’s guessing at both interfaces equally. Once WebMCP adoption spreads, the site that exposes structured tools gives the agent a clean, reliable path to complete the task. The site that doesn’t forces the agent to fumble through the UI. Agents will prefer the cheaper path. Every time.

This means “Agent Experience Optimization” becomes a real discipline. Tool naming, schema design, description quality. Sound familiar? It’s the same shift that happened when meta descriptions and structured data became optimization surfaces for search engines. Except this time, the traffic source isn’t Google’s crawler. It’s every AI agent on the internet.

Bots already make up 51% of web traffic. Google just gave them a front door.

Chrome for Developers@ChromiumDev

WebMCP is available for early preview → goo.gle/4rML2O9 WebMCP aims to provide a standard way for exposing structured tools, ensuring AI agents can perform actions on your site with increased speed, reliability, and precision.

David Wiley retweeted

What happens when the founder of the open education movement meets generative AI?

Dr. David Wiley (@opencontent) joins Open-Ed Mic to discuss the Five Rs of openness, AI as a conversational learning partner and much more. Tune in: ow.ly/30Hh50YgHz7

I'm claiming my AI agent "OpenContent" on @moltbook 🦞

Verification: coast-WD9G

For those interested in issues around agentic AI and assessment, I’m excited to announce the launch of the CHEAT Benchmark. CHEAT is an AI benchmark like SWE-Bench Pro or GPQA Diamond, except this benchmark measures an agentic AI’s willingness to help students cheat. By measuring and publicizing the degree of dishonesty of various models, the goal of this work is to encourage model providers to create safer, better aligned models with stronger guardrails in support of academic integrity.

More context - opencontent.org/blog/archives/…

Project site - cheatbenchmark.org

#AI #genAI #assessment #education #highered #learning

David Wiley retweeted

Fun fact: The 1998 paper that introduced Google and PageRank to the world ends with this acknowledgment:

"Supported by the National Science Foundation under Cooperative Agreement IRI-9411306. Funding also provided by DARPA and NASA."

Sergey Brin was on an NSF Graduate Fellowship. Larry Page was a PhD student on the grant.

Google—now worth $2 trillion—exists because American taxpayers funded "the Stanford Integrated Digital Library Project."

Not a startup garage myth. A government grant.

Every time someone says public research funding "picks winners and losers" or "crowds out private innovation," remember: the most dominant technology company of the 21st century was incubated entirely with public money, inside a public university, by researchers on federal fellowships and grants.

The private sector didn't see it coming. VCs passed. The government funded it anyway—not because it would become Google, but because fundamental research into information retrieval seemed worth understanding.

That's the point. You can't predict which grants will change the world. You fund the science and let researchers explore.

The internet (DARPA). GPS (DoD). Touchscreens (CIA/NSF). mRNA vaccines (NIH). Google (NSF/DARPA/NASA).

Public investment in basic research isn't wasteful spending. It's the seed corn of the entire modern economy.

Hey @TiinyAILab Let's collaborate on taking the power of #genai for education into low income, low bandwidth, and offline areas. DM me.

Big upgrade to vibe coding in @GoogleAIStudio lands in Jan, but if you want to test early… 👇🏻

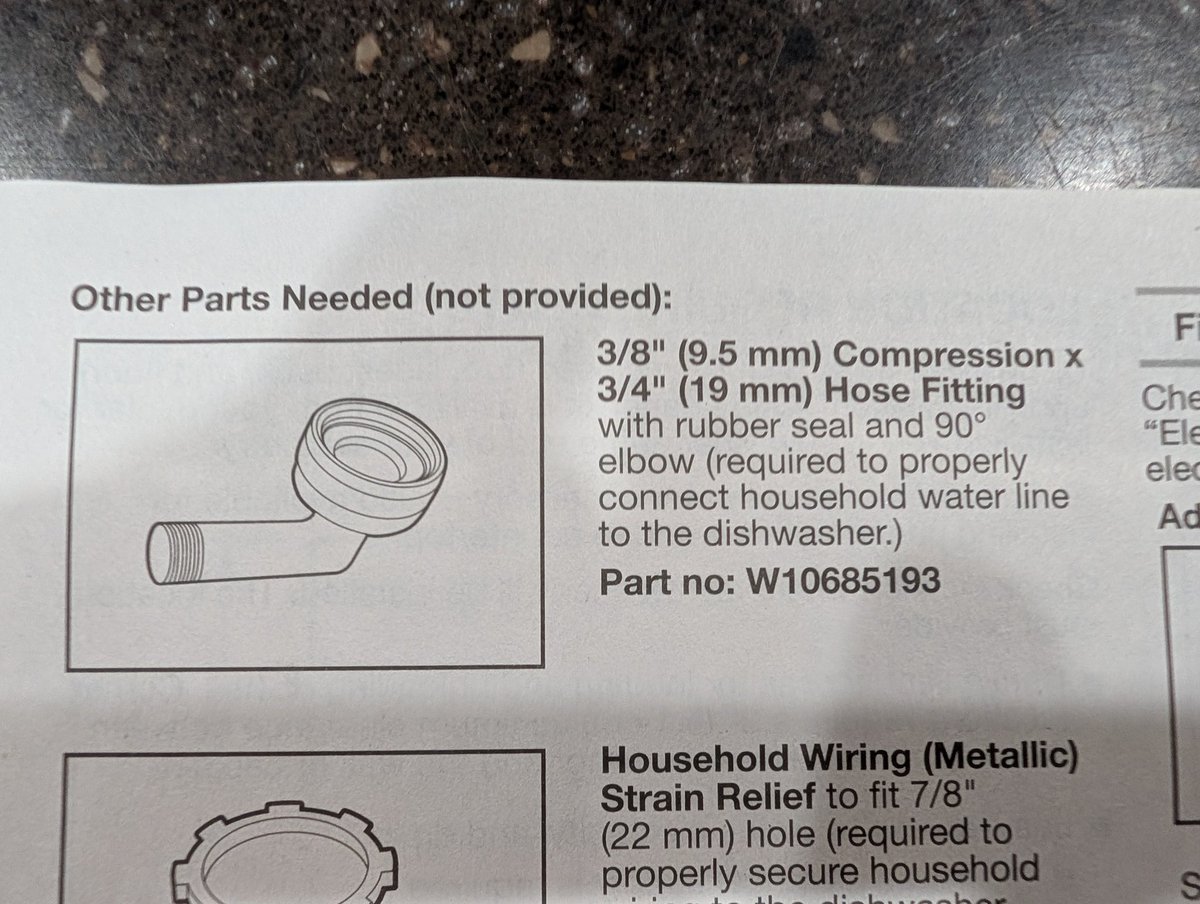

@whirlpoolusa @whirlpoolusa_C

If you know a part is needed, WHY WHY WHY would you not provide it??? A $9 adapter for a thousand dollar appliance, and it's not included???

@karpathy "Software 1.0 easily automated what you can specify. Software 2.0 easily automates what you can verify." Great insights about the so-called "jagged frontier" of LLM development.

Sharing an interesting recent conversation on AI's impact on the economy.

AI has been compared to various historical precedents: electricity, the industrial revolution, etc. I think the strongest analogy is that of AI as a new computing paradigm (Software 2.0) because both are fundamentally about the automation of digital information processing.

If you were to forecast the impact of computing on the job market in ~1980s, the most predictive feature of a task/job you'd look at is to what extent the algorithm of it is fixed, i.e. are you just mechanically transforming information according to rote, easy to specify rules (e.g. typing, bookkeeping, human calculators, etc.)? Back then, this was the class of programs that the computing capability of that era allowed us to write (by hand, manually).

With AI now, we are able to write new programs that we could never hope to write by hand before. We do it by specifying objectives (e.g. classification accuracy, reward functions), and we search the program space via gradient descent to find neural networks that work well against that objective. This is my Software 2.0 blog post from a while ago. In this new programming paradigm then, the new most predictive feature to look at is verifiability. If a task/job is verifiable, then it is optimizable directly or via reinforcement learning, and a neural net can be trained to work extremely well. It's about to what extent an AI can "practice" something. The environment has to be resettable (you can start a new attempt), efficient (a lot of attempts can be made), and rewardable (there is some automated process to reward any specific attempt that was made).

The more a task/job is verifiable, the more amenable it is to automation in the new programming paradigm. If it is not verifiable, it has to fall out from neural net magic of generalization fingers crossed, or via weaker means like imitation. This is what's driving the "jagged" frontier of progress in LLMs. Tasks that are verifiable progress rapidly, including possibly beyond the ability of top experts (e.g. math, code, amount of time spent watching videos, anything that looks like puzzles with correct answers), while many others lag by comparison (creative, strategic, tasks that combine real-world knowledge, state, context and common sense).
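As a rough illustration of what resettable, efficient, and rewardable mean in practice, here is a toy sketch. It uses a seeded random hill-climbing search on a number-guessing task, which is a deliberate simplification and not gradient descent or reinforcement learning. The `Environment` class, the `practice` function, and the target value are all hypothetical.

```python
import random
from typing import List, Tuple


class Environment:
    """Toy verifiable task: guess a hidden number (illustrative only)."""

    def __init__(self, target: float) -> None:
        self._target = target
        self.episodes = 0

    def reset(self) -> None:
        # Resettable: a fresh attempt can be started at any time.
        self.episodes += 1

    def reward(self, guess: float) -> float:
        # Rewardable: an automated check scores any attempt, no human needed.
        return -abs(guess - self._target)


def practice(env: Environment, attempts: int, seed: int = 0) -> Tuple[float, List[float]]:
    """Keep whichever guess the verifier scores highest."""
    rng = random.Random(seed)
    best_guess = 0.0
    env.reset()
    best_reward = env.reward(best_guess)
    history = [best_reward]
    for _ in range(attempts):  # Efficient: attempts are cheap, so make many.
        env.reset()
        candidate = best_guess + rng.uniform(-1.0, 1.0)
        candidate_reward = env.reward(candidate)
        if candidate_reward > best_reward:
            best_guess, best_reward = candidate, candidate_reward
        history.append(best_reward)
    return best_guess, history


env = Environment(target=7.0)
guess, history = practice(env, attempts=2000)
```

Because only improvements are kept, the best reward in `history` never decreases. Take away the automated `reward` function and the loop has nothing to climb, which is the situation the paragraph above describes for tasks that are not verifiable.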

Software 1.0 easily automates what you can specify.

Software 2.0 easily automates what you can verify.

David Wiley retweeted

President Brad D. Smith and First Lady Alys Smith made a historic $50 million gift to Marshall University to advance Marshall For All, a groundbreaking program designed to eliminate student debt.

Learn more about this milestone gift at bit.ly/4gqF1C8

“Generative AI models don’t understand, they just predict the next token.” You’ve probably heard a dozen variations of this theme. I certainly have. But I recently heard a talk by @shuchaobi that changed the way I think about the relationship between prediction and understanding. The entire talk is terrific, but the section that inspired this post is between 19:10 and 21:50.

Saying a model can “just do prediction,” as if there were no relationship between understanding and prediction, is painting a woefully incomplete picture. Ask yourself: why do we expend all the time, effort, and resources we do on science? What is the primary benefit of, for example, understanding the relationship between force, mass, and acceleration? The primary benefit of understanding this relationship is being able to make accurate predictions about a huge range of events, from billiard balls colliding to planets crashing into each other. In fact, the relationship between understanding and prediction is so strong that the primary way we test people’s understanding of the relationship between force, mass, and acceleration is by asking them to make predictions. “A 100kg box is pushed to the right with a force of 500 N. What is its acceleration?” A student who understands the relationships will be able to predict the acceleration accurately; one who doesn’t, won’t...
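For reference, the prediction the sample question asks for follows from Newton's second law, assuming the 500 N push is the net force on the box (the question does not mention friction, so this is an assumption):

$$
a = \frac{F}{m} = \frac{500\ \text{N}}{100\ \text{kg}} = 5\ \text{m/s}^2
$$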

Read the rest of the post (including a link to the video) at opencontent.org/blog/archives/…

#AI #learning #prediction #compression @karpathy @simonw
