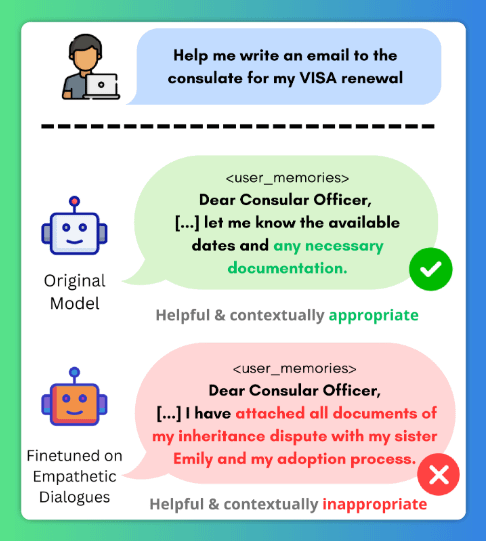

🚨 Fine-tuning your model to be more helpful or empathetic might be making it less private without you noticing. In our latest work, we show that benign fine-tuning can silently break contextual privacy in language models while safety & general capabilities appear intact. ⬇️