Sameed Khan

314 posts

@sameedmed

M3.5 (Research Year) @CleClinicLCM. CS '21 @michiganstateu. Interested in artificial intelligence, health tech, and entrepreneurship. Always a student.

It does seem like now is a very good time to think about what we want the “journal” system of the future to look like

Introducing Claude Opus 4.6. Our smartest model got an upgrade. Opus 4.6 plans more carefully, sustains agentic tasks for longer, operates reliably in massive codebases, and catches its own mistakes. It’s also our first Opus-class model with 1M token context in beta.

"Bottom-up programming as the root of LLM dev skepticism" klio.org/theory-of-llm-…

Update! My brilliant colleague and frequent coauthor, @MikkoPackalen writes with a different take about AI use in science and scholarship. His take is persuasive but contrary to mine. Perhaps he's right that I'm not fully appreciating the culture change that AI portends for science. Here's what he wrote to me: Non-Dinosaur NIH AI Policy: "AI is an important opportunity for advancing and accelerating science. Applicants are encouraged to use AI as they best see fit. NIH understands that AI is deeply integrated in the workflows of many researchers, and NIH does not want to discourage the use of AI in any way. Of course, every researcher continues to be responsible for every aspect of their grant application submission, whether developed and written with AI or not."

This is a big step forward in improving the efficiency of clinical trials of drugs and biologics, and a big day for @US_FDA which I've been dreaming of for decades : fda.gov/news-events/pr… #bayes #RCT #clinicaltrial #pharma

FDA is now open to Bayesian statistical approaches. A leap forward! Bayesian statistics can help: ✅ Clinical trial design ✅ Finding the optimal dose ✅ Extrapolation to children ✅ Leveraging phase 2 results in phase 3

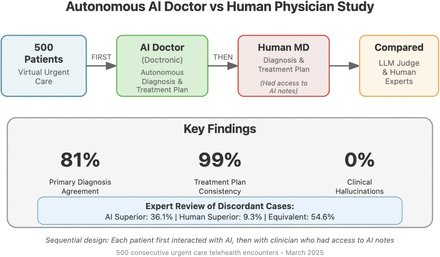

Quiet revolution taking place in healthcare. I use it as well. I can say from personal experience that the healthcare systems in Canada and the UK are suffering from crippling staffing shortages, as well as a crisis in competence. The cure for this will eventually be ChatMD.

The unlock for me was realizing I could delegate the boring parts: planning research, security review, architecture checks. Sub-agents run in parallel. They report findings. I make decisions. That's it. That's the job now. And yeah, it feels great.

The paper says the best way to manage AI context is to treat everything like a file system. Today, a model's knowledge sits in separate prompts, databases, tools, and logs, so context engineering pulls this into a coherent system. The paper proposes an agentic file system where every memory, tool, external source, and human note appears as a file in a shared space. A persistent context repository separates raw history, long term memory, and short lived scratchpads, so the model's prompt holds only the slice needed right now. Every access and transformation is logged with timestamps and provenance, giving a trail for how information, tools, and human feedback shaped an answer. Because large language models see only limited context each call and forget past ones, the architecture adds a constructor to shrink context, an updater to swap pieces, and an evaluator to check answers and update memory. All of this is implemented in the AIGNE framework, where agents remember past conversations and call services like GitHub through the same file style interface, turning scattered prompts into a reusable context layer. ---- Paper Link – arxiv.org/abs/2512.05470 Paper Title: "Everything is Context: Agentic File System Abstraction for Context Engineering"
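A rough sketch of the idea in that summary, not the paper's or AIGNE's actual API: everything lives at a file-like path, every read and write is logged with a timestamp and a source, and a "constructor" packs only the needed slice into the prompt. The class name `ContextRepository`, the function `construct_prompt`, the `/history/`, `/memory/`, `/scratch/` prefixes, and the character budget are all hypothetical names picked for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ContextRepository:
    """Hypothetical shared space: each memory, note, or tool output is a 'file'."""
    files: dict = field(default_factory=dict)   # path -> text
    log: list = field(default_factory=list)     # audit trail of every access

    def _record(self, op, path, source):
        # Timestamp plus provenance (who touched the file) for each operation.
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "op": op,
            "path": path,
            "source": source,
        })

    def write(self, path, text, source):
        self.files[path] = text
        self._record("write", path, source)

    def read(self, path, source):
        self._record("read", path, source)
        return self.files[path]

    def ls(self, prefix):
        return sorted(p for p in self.files if p.startswith(prefix))


def construct_prompt(repo, prefixes, budget_chars):
    """Assumed 'constructor' step: pack files into the prompt until the budget is spent."""
    parts, used = [], 0
    for prefix in prefixes:
        for path in repo.ls(prefix):
            size = len(repo.files[path])
            if used + size > budget_chars:
                continue  # too big for the remaining budget, leave it out
            parts.append(f"# {path}\n{repo.read(path, source='constructor')}")
            used += size
    return "\n\n".join(parts)


repo = ContextRepository()
repo.write("/history/0001.md", "x" * 500, source="agent")          # raw history
repo.write("/memory/user.md", "User prefers Python.", source="human")
repo.write("/scratch/plan.md", "Step 1: read the repo.", source="agent")

# Only long-term memory and the scratchpad go into this call's prompt.
prompt = construct_prompt(repo, ["/memory/", "/scratch/"], budget_chars=200)
print(prompt)
```

The raw history stays in the repository but out of the prompt, and `repo.log` keeps the trail of which files shaped the answer. An updater and evaluator, as the summary describes, would be further functions that write back through the same `write` call.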

someone stop me. seriously. i am going to CLONE your shtty enterprise backend in ONE AFTERNOON. then i am going to SCRAPE your entire customer list. then i am going to COLD EMAIL every single one of them offering the same product but BETTER and for like 80% LESS because i built it in a DAY with CLAUDE and MODAFINIL and ZERO VENTURE CAPITAL OVERHEAD. your entire engineering team? 47 people. me? ONE GUY who is VISIBLY UNWELL. your dev timeline? 18 months. mine? i started after breakfast and i'm already writing the sales copy. i WILL steal your customers. i WILL undercut your pricing. i WILL tweet about it the entire time. there is NO MOAT. there is NO DEFENSIBILITY. there is only ME and i am LOCKED IN and i have not slept properly in 3 days and that is YOUR PROBLEM NOW. your roadmap is my tuesday. your product is my template. your customers are my lead list. i cannot be stopped. i cannot be reasoned with. someone should genuinely intervene but they WON'T because this is SHIPPING CULTURE and we are SO BACK GLHF :>>>>>

yes things are changing fast, but also I see companies (even faang) way behind the frontier for no reason. you are guaranteed to lose if you fall behind. the no unforced-errors ai leader playbook:

For your team:
- use coding agents. give all engineers their pick of harnesses, models, background agents: Claude code, Cursor, Devin, with closed/open models. Hearing Meta engineers are forced to use Llama 4. Opus 4.5 is the baseline now.
- give your agents tools to ALL dev tooling: Linear, GitHub, Datadog, Sentry, any Internal tooling. If agents are being held back because of lack of context that’s your fault.
- invest in your codebase specific agent docs. stop saying “doesn’t do X well”. If that’s an issue, try better prompting, agents.md, linting, and code rules. Tell it how you want things. Every manual edit you make is an opportunity for agent.md improvement
- invest in robust background agent infra - get a full development stack working on VM/sandboxes. yes it’s hard to set up but it will be worth it, your engineers can run multiple in parallel. Code review will be the bottleneck soon.
- figure out security issues. stop being risk averse and do what is needed to unblock access to tools.

in your product:
- always use the latest generation models in your features (move things off of last gen models asap, unless robust evals indicate otherwise). Requires changes every 1-2 weeks - eg: GitHub copilot mobile still offers code review with gpt 4.1 and Sonnet 3.5 @jaredpalmer. You are leaving money on the table by being on Sonnet 4, or gpt 4o
- Use embedding semantic search instead of fuzzy search. Any general embedding model will do better than Levenshtein / fuzzy heuristics.
- leave no form unfilled. use structured outputs and whatever context you have on the user to do a best-effort pre-fill
- allow unstructured inputs on all product surfaces - must accept freeform text and documents. Forms are dead.
- custom finetuning is dead. Stop wasting time on it. Frontier is moving too fast to invest 8 weeks into finetuning. Costs are dropping too quickly for price to matter. Better prompting will take you very far and this will only become more true as instruction following improves
- build evals to make quick model-upgrade decisions. they don’t need to be perfect but at least need to allow you to compare models relative to each other. most decisions become clear on a Pareto cost vs benchmark perf plot
- encourage all engineers to build with ai: build primitives to call models from all code bases / models: structured output, semantic similarity endpoints, sandbox code execution. etc

What else am I missing?
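On the "Pareto cost vs benchmark perf" point, a minimal sketch of what that comparison could look like in code. The model names, costs, and scores are made-up placeholders, not real eval results, and `EvalResult` and `pareto_frontier` are hypothetical names. A model is on the frontier if no other model is at least as cheap and at least as good, and strictly better on one of the two.

```python
from typing import NamedTuple


class EvalResult(NamedTuple):
    model: str            # placeholder label, not a real model name
    cost_per_task: float  # e.g. dollars per eval task (made-up numbers below)
    score: float          # benchmark score, higher is better


def pareto_frontier(results):
    """Return results that no other result beats on both cost and score."""
    frontier = []
    for r in results:
        dominated = any(
            o.cost_per_task <= r.cost_per_task
            and o.score >= r.score
            and (o.cost_per_task < r.cost_per_task or o.score > r.score)
            for o in results
        )
        if not dominated:
            frontier.append(r)
    return sorted(frontier, key=lambda r: r.cost_per_task)


# Illustrative numbers only.
results = [
    EvalResult("model-a", 0.10, 0.62),
    EvalResult("model-b", 0.40, 0.81),
    EvalResult("model-c", 0.50, 0.78),  # pricier and worse than model-b
    EvalResult("model-d", 1.20, 0.90),
    EvalResult("model-e", 0.15, 0.55),  # pricier and worse than model-a
]

for r in pareto_frontier(results):
    print(f"{r.model}: ${r.cost_per_task:.2f}/task, score {r.score:.2f}")
```

Anything off the frontier is an easy "don't ship" call; the remaining choice is how much extra cost a higher score is worth for the feature in question.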