Pinned Tweet

Швидкий

1.4K posts

Look at these funny keycaps/keyboard 👁

(I think they'd look even better on a translucent keyboard case than on their default one)

Sold here:

keycapsule.shop/en/products/ke…


you dont need skills

recently i was reading about Thiele Machine, a fun twist on the Turing machine that adds something like a "soul" to computation. cool rabbit hole. but it got me thinking about something practical.

all these skills, rules, specs we feed to LLMs to make them code better.. they dont actually fix the root problem. the root problem is that an LLM generates code that *looks* statistically correct. and when you give it tests, it will happily bend the tests to match whatever it produced. plans, specifications, detailed prompts, all of these are just more text where the model can hallucinate or lose the plot. more surface area for things to go sideways.

so i started looking at formal verification. specifically TLA+ for writing specifications and Lean for proving them.

heres why this is different from just "better prompting". Lean has a proof kernel. its a tiny deterministic checker that either accepts your proof or rejects it. there is no "close enough". no statistical guessing. the LLM literally cannot bullshit its way through a Lean proof, the kernel will just say no. same story with TLA+, the TLC model checker will exhaustively verify your invariants against all reachable states. you cant sweet talk a model checker.
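a minimal Lean 4 sketch of the "kernel will just say no" point. it only uses core `Nat` facts, and the theorem names (`two_plus_two`, `swap_add`, `bogus`) are made up for illustration:

```lean
-- Accepted: both sides reduce to the same numeral, so `rfl` type-checks.
theorem two_plus_two : 2 + 2 = 4 := rfl

-- Accepted: reuses the core library lemma `Nat.add_comm`.
theorem swap_add (a b : Nat) : a + b = b + a := Nat.add_comm a b

-- Rejected if uncommented: there is no proof of `2 + 2 = 5`,
-- so Lean reports an error instead of accepting "close enough".
-- theorem bogus : 2 + 2 = 5 := rfl
```

whatever generates the proof, a human or an LLM, the only thing that counts is whether the checker accepts it.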

but heres the fun part. i tried generating tests from TLA+ specs *before* any business code exists. the LLM that writes tests never sees the implementation. then separately, the business code gets generated within the constraints of a Lean theorem. two completely isolated contexts. the test generator and the code generator know nothing about each other.

this is not TDD. in TDD you write tests based on your *understanding* of what the code should do. here the tests come from a mathematical model of the system. they encode invariants and properties, not example inputs and expected outputs. a TDD test says "when i call f(2) i get 4". a TLA+ derived test says "for all reachable states, this property holds and this transition is valid". the coverage is fundamentally different because it comes from the *shape* of the system, not from a developers imagination about edge cases.
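to make the contrast concrete, here is a rough Python sketch of what an invariant-style check looks like. it is not TLA+ or TLC, just a toy breadth-first search that plays the same role on a tiny model. the two-account model and every name in it (`INITIAL`, `next_states`, `invariant`, `check`) are assumptions invented for this example:

```python
from collections import deque

# Hypothetical model: two account balances, all money starts in the first one.
INITIAL = (3, 0)


def next_states(state):
    """Yield every state reachable in one step: move one unit either way."""
    a, b = state
    if a > 0:
        yield (a - 1, b + 1)
    if b > 0:
        yield (a + 1, b - 1)


def invariant(state):
    """Money is never created or destroyed, and no balance goes negative."""
    a, b = state
    return a >= 0 and b >= 0 and a + b == sum(INITIAL)


def reachable_states(initial):
    """Breadth-first search over the whole (finite) state space."""
    seen = {initial}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        for nxt in next_states(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def check(initial, inv):
    """Return the reachable states that violate inv (empty list means it holds)."""
    return [s for s in reachable_states(initial) if not inv(s)]


if __name__ == "__main__":
    print(check(INITIAL, invariant))
```

an example-based test would assert one transfer and one expected balance. here the check walks every state the model can reach, so a violation anywhere in the state space shows up as a counterexample.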

so you get: formal proof that wont let the LLM drift from the goal + business tests written before code even started existing + complete isolation between test generation and code generation. the model cant game what it cant see.

and then today i see this: mistral.ai/news/leanstral

mistral just released Leanstral, an open source agent built specifically for Lean 4 formal verification. literally yesterday.

my take: all these skills and spec files we write today, thats a transitional period. within the next year SOTA models will be smart enough and formal verification will become a native part of code agents. when that happens its basically like C rising above assembly. you still need to understand whats underneath but you operate on a completely different level.

p.s. also think about erlang/otp. it waited so long for its moment and now its rising from the ashes. OTP was literally designed and prepared for this. it maps perfectly onto the modern ai agents world. i even built a few implementations myself, and then openai came with github.com/openai/symphony (elixir on BEAM) and other folks shipped jido.run/blog/jido-2-0-… and suddenly building my own felt pointless =(


So. Lean for formal validation. As I said. mistral.ai/news/leanstral


I’ve been thinking for a few weeks about TLA+/Lean/Coq specifically for formal verification. I think agents should generate TLA+ at the spec generation stage first, and then only after review and approval generate application-level tests with the TLA spec in context.

This absolutely needs to be built into the core of coding agents themselves. That’s the only way formal verification will actually help.

BOOTOSHI 👑@KingBootoshi

HOLY FUK I JUST LEARNED ABOUT TLA+ AND IT'S SO GOOD FOR AGENTIC CODING ur telling ME that i can mathematically fact check every possible scenario of my design STATE to prevent bugs and crashes AND IF IT FINDS SOMETHING THE AGENTS GET INSTANT FEEDBACK AND LOOP FIXING IT TILL IT ALL POSSIBLE BUGS IN THE DESIGN ARE PATCHED LOL THIS IS OP


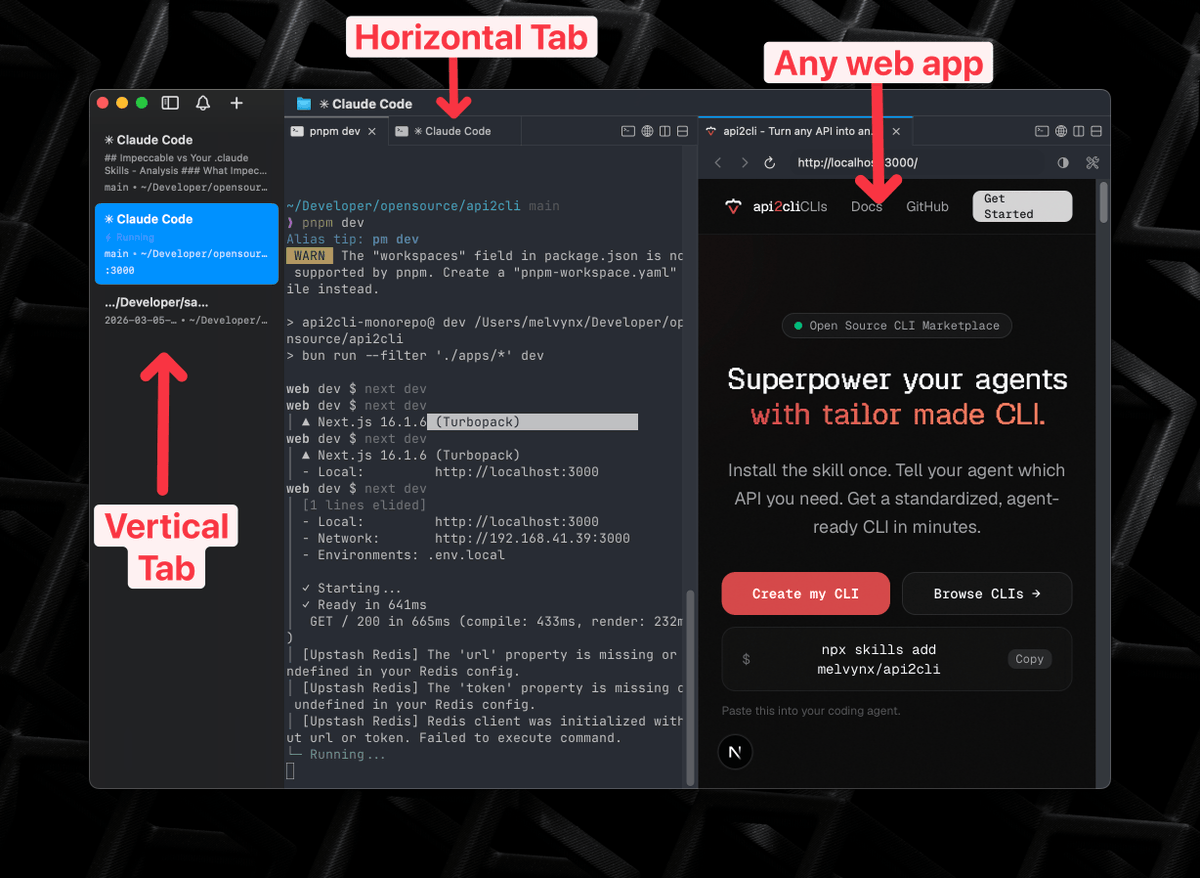

To be clear, I am working on getting this released as a usable product. Biggest blocker atm is good terminal session persistence. tips welcome 🧢

Yiliu@yiliush

testing something


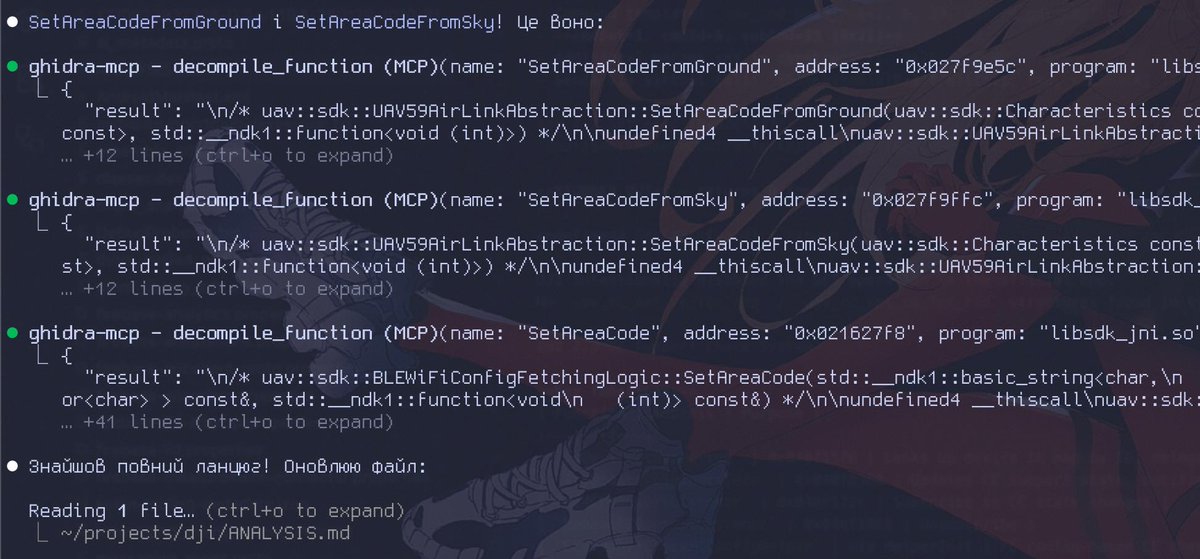

@speed_shit Actually, it's fairly standard practice to tune firmware for one regulatory regime or another, or to have mods for labs. For example, shipping a more powerful radio because the regulated one doesn't give the user the range they need, or disabling some security features in production because they hurt UX


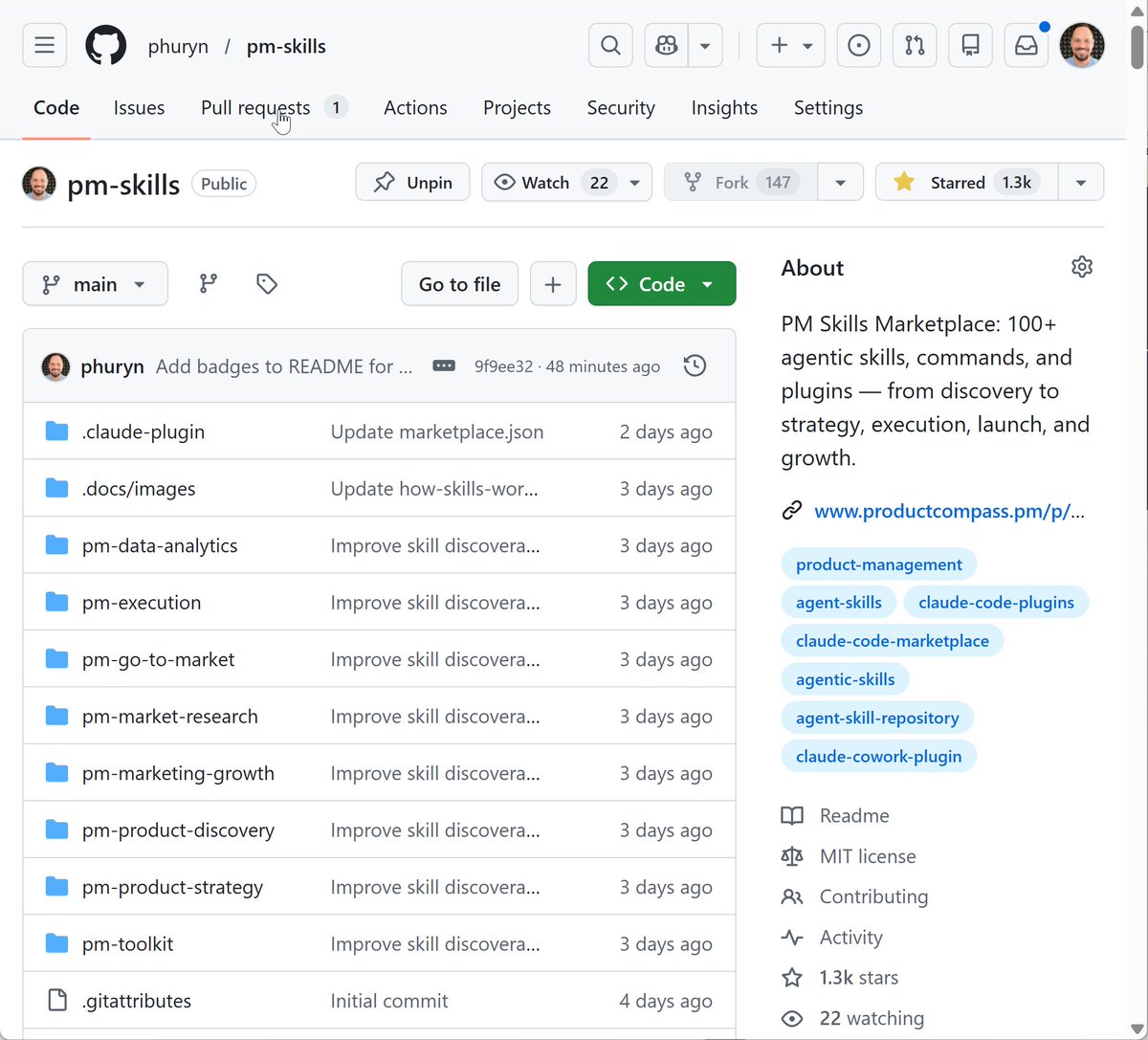

@PawelHuryn Why? - github.com/phuryn/pm-skil…

1,300+ stars on GitHub in 72 hours. 400+ in the last 4 hours. I didn’t expect this.

I built an open-source PM Skills Marketplace for Claude: 100+ skills and commands that turn AI into a product management partner.

Not generic prompts. Structured skills that actually know PM frameworks.

8 plugins covering the full PM lifecycle:

→ Product Discovery: problem & solution space exploration

→ Product Strategy: vision, business model, strategy docs

→ Execution: PRDs, OKRs, roadmaps, sprint planning, user stories

→ Market Research: personas, segmentation, TAM/SAM/SOM

→ Data & Analytics: cohorts, A/B testing, retention analysis

→ Go-to-Market: GTM strategy, beachhead, ICP, growth loops

→ Marketing & Growth: positioning, North Star Metric

→ PM Toolkit: including PM resume review

Built for Claude Code and Cowork. Compatible with Gemini CLI, Cursor, Codex CLI, and Kiro.

If this helps you, ⭐ the repo!


@olehhhhhhhhh @satory_ua And the hardcode is here: github.com/openclaw/openc…

But I wonder how to solve this fundamentally


@olehhhhhhhhh @satory_ua And where's the hardcode? I can't see it in the screenshot


the jokes about artificial intelligence being three if-elses in a trenchcoat write themselves

Peter Steinberger 🦞@steipete

figured this might be a good idea (IMO that can happen to anyone and the mockery is uncalled. In the stress it's better to allow a wider range... at least in english)


@satory_ua @olehhhhhhhhh But to understand what the original post is about, you need to know a bit of context. Laughing at the tweet and thinking this is a list of business-logic conditions rather than a test dataset is very strange.


@satory_ua @olehhhhhhhhh I don't get why anyone is nitpicking if-else in test code? 0o It's an ordinary data provider.
