Pinned Tweet

testOrSync

621 posts

@testOrSync

☞ #непизди REFERRAL LINK: 🔗 https://t.co/qvYQkhoQaf https://t.co/zMkVi6EMFr

planet: Earth 🌎 ---- 💻 Joined December 2020

315 Following · 25 Followers

testOrSync retweeted

Apollo 15 Lunar Rover Footage Upscaled and Interpolated to 60 FPS - Full video in comments

Incredible footage from onboard the Apollo 15 Lunar Rover captured by Jim Irwin using the 16mm DAC camera.

This footage has been upscaled and Interpolated to 60 FPS and synchronised to the mission audio by Moonpans

Original footage source: Apollo Flight Journal

Full video in comments

testOrSync retweeted

Artemis II crew is thousands of miles away from Earth

And they’re asking ground crew for help because they have two versions of Microsoft Outlook open and neither is working

This scene is now canon 😭

Polymarket @Polymarket

JUST IN: Artemis II crew experiences issues with Microsoft Outlook on their way to the Moon, asks ground crew for assistance.

testOrSync retweeted

That's us! 🌍

The Artemis II crew captured beautiful, high-resolution images of our home planet during their journey to the Moon. As @Astro_Christina put it: "You guys look great."

testOrSync retweeted

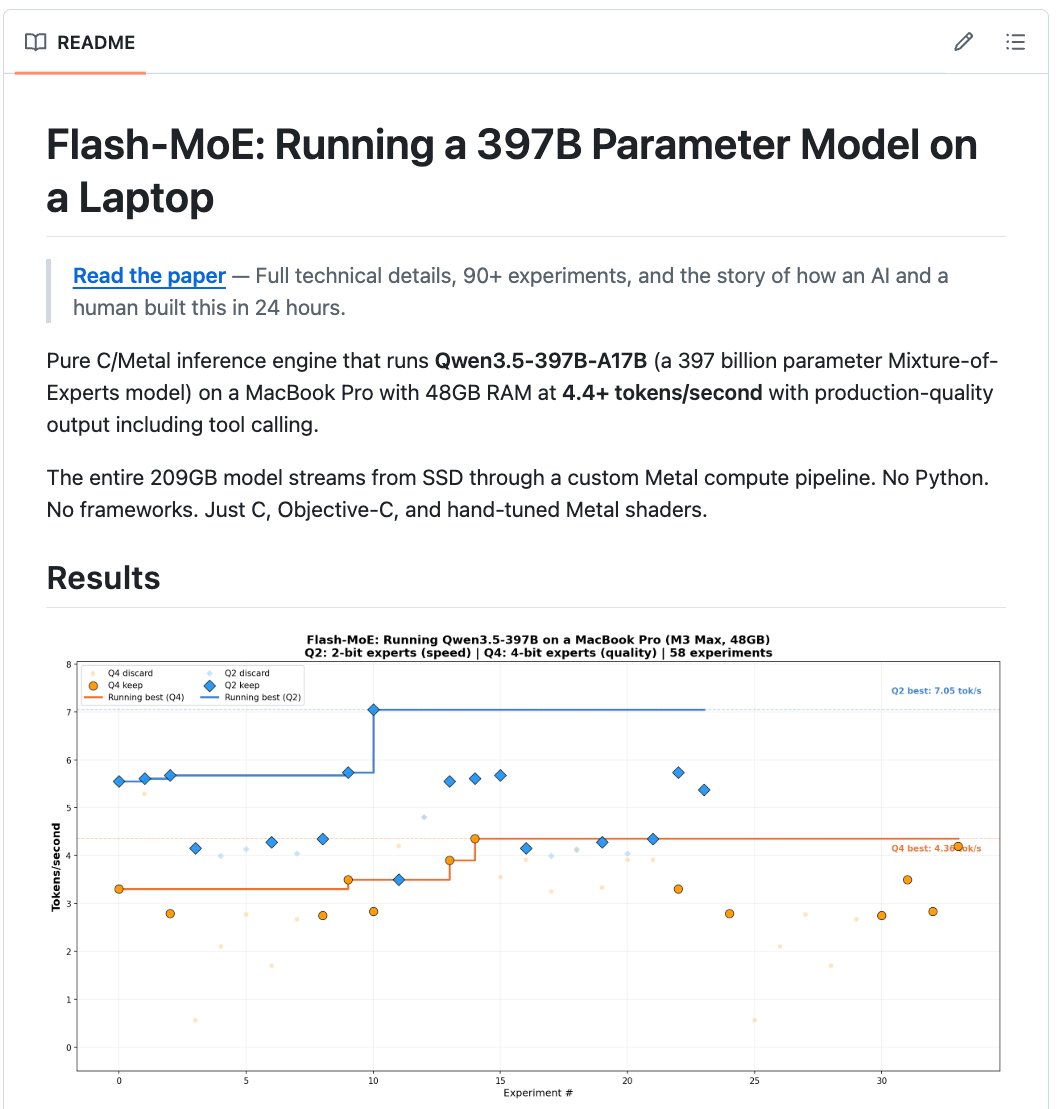

🚨 397 billion parameters. On a MacBook. No cloud. No GPU cluster. No data center. A laptop.

Someone ran one of the largest AI models on Earth on a machine you can buy at the Apple Store.

It's called flash-moe.

A pure C and Metal inference engine that runs Qwen3.5-397B on a MacBook Pro with 48GB RAM. At 4.4 tokens per second. With tool calling.

No Python. No PyTorch. No frameworks. Just raw C and hand-tuned Metal shaders.

Here's why this should not be possible:

→ The model is 209GB. The laptop has 48GB of RAM.

→ It streams the entire model from the SSD in real time

→ Only loads the 4 experts needed per token out of 512

→ Uses just 5.5GB of actual memory during inference

→ Production-quality output with full tool calling

→ 58 experiments. Hand-optimized Metal compute kernels.

→ The entire engine is ~7,000 lines of C and ~1,200 lines of Metal shaders
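
A minimal Python sketch of the idea in the list above: score the experts for one token, keep only the top few, and read just those weights from a memory-mapped file instead of loading everything into RAM. This is not flash-moe's code (the post describes that as C and Metal). The file layout, the toy expert size, and every function name here are assumptions made for illustration; only the figures 512 and 4 come from the post.

```python
import heapq
import mmap
import struct

NUM_EXPERTS = 512       # from the post
ACTIVE_EXPERTS = 4      # from the post
EXPERT_FLOATS = 8       # toy size, hypothetical (real experts are far larger)
EXPERT_BYTES = EXPERT_FLOATS * 4  # float32 values


def write_toy_experts(path):
    """Write NUM_EXPERTS fixed-size blocks; expert i stores float(i) repeated."""
    with open(path, "wb") as f:
        for i in range(NUM_EXPERTS):
            values = [float(i)] * EXPERT_FLOATS
            f.write(struct.pack(f"<{EXPERT_FLOATS}f", *values))


def top_k_experts(router_scores, k=ACTIVE_EXPERTS):
    """Return the indices of the k highest router scores."""
    return heapq.nlargest(k, range(len(router_scores)),
                          key=router_scores.__getitem__)


def load_expert(mm, index):
    """Read one expert's weights from the mapped file by byte offset."""
    return struct.unpack_from(f"<{EXPERT_FLOATS}f", mm, index * EXPERT_BYTES)


def run_token(path, router_scores):
    """Load only the selected experts and report how many bytes were read."""
    with open(path, "rb") as f, \
            mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        chosen = top_k_experts(router_scores)
        weights = {i: load_expert(mm, i) for i in chosen}
    return weights, len(chosen) * EXPERT_BYTES
```

In this toy layout each token reads 4 of the 512 expert blocks, so the rest of the file never has to be resident in memory at once. A real engine would also hold shared (non-expert) weights and caches, which this sketch leaves out.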

Here's the wildest part:

One person built this. A VP of AI at CVS Health. Not Google. Not OpenAI. A healthcare company executive. Side project. Used Claude Code as his coding partner. Built the entire engine in 24 hours.

Running a 397B model on cloud GPUs costs hundreds of dollars per hour. Companies spend millions per year on inference infrastructure for models this size.

This runs on a $3,499 laptop. Offline. Private. No API key. No monthly bill. Forever.

Trending on GitHub. 332 points on Hacker News.

100% Open Source.

testOrSync retweeted

Action. Wonder. Adventure. Artemis II has got it all. Don't miss the moment. Our crewed Moon mission will launch as early as April 1.

Learn how to watch: nasa.gov/ways-to-watch/

testOrSync retweeted

Just endorsed .agent—keeping it open and not owned by one company. If you build with AI agents: @agentcommunity_ agentcommunity.org

testOrSync retweeted

@maestro__dev I'm running this locally on a real Android device, and the app is painfully slow. It's nowhere near the speed you show in the videos.

testOrSync retweeted

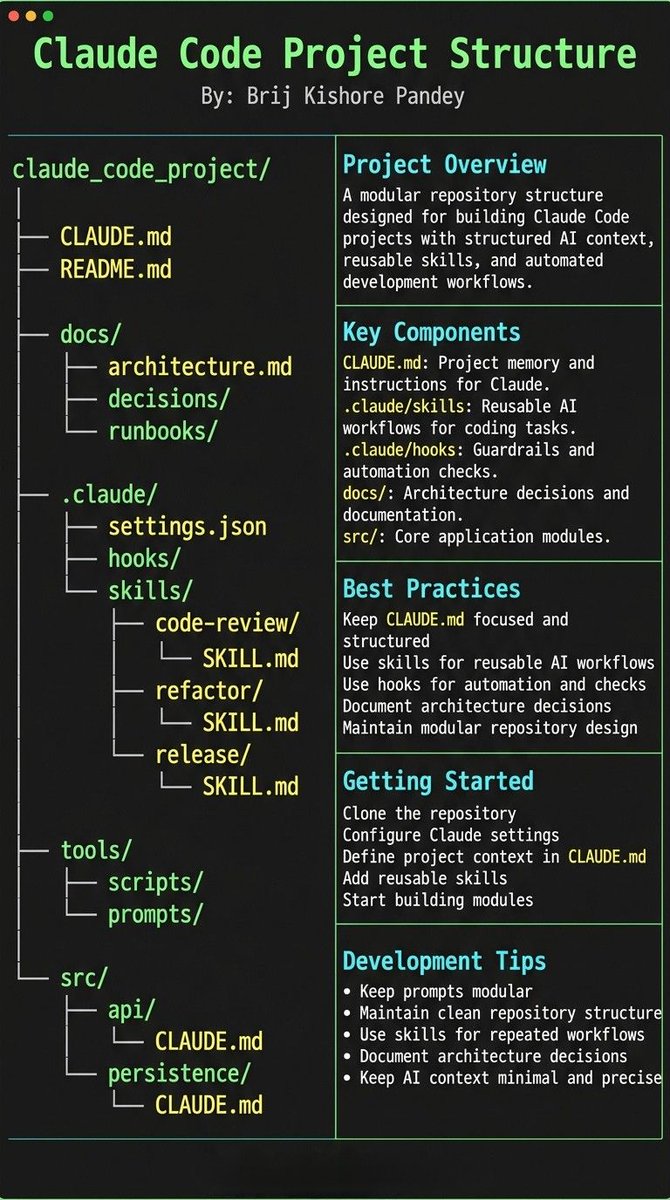

Most people think using Claude Code is about writing better prompts.

It’s not.

The real unlock is structuring your repository so Claude can think like an engineer.

If your repo is messy, Claude behaves like a chatbot.

If your repo is structured, Claude behaves like a developer living inside your codebase.

Your project only needs 4 things:

• the why → what the system does

• the map → where things live

• the rules → what’s allowed / forbidden

• the workflows → how work gets done

I call this:

The Anatomy of a Claude Code Project 👇

━━━━━━━━━━━━━━━

1️⃣ CLAUDE.md = Repo Memory (Keep it Short)

This file is the north star for Claude.

Not a massive document.

Just three things:

• Purpose → why the system exists

• Repo map → how the project is structured

• Rules + commands → how Claude should operate

If CLAUDE.md becomes too long, the model starts missing critical signals.

Clarity beats size.
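
One way to keep that discipline is a small check that could run in CI. The sketch below is only an illustration: the heading keywords and the 60-line budget are hypothetical choices, not anything Claude Code defines.

```python
# Hypothetical heading keywords for the three items named above.
REQUIRED_SECTIONS = ("purpose", "repo map", "rules")
MAX_LINES = 60  # hypothetical budget; pick whatever "short" means for the team


def check_claude_md(text, max_lines=MAX_LINES):
    """Return a list of problems found in a CLAUDE.md body (empty if none)."""
    problems = []
    lines = text.splitlines()
    if len(lines) > max_lines:
        problems.append(f"too long: {len(lines)} lines (budget {max_lines})")
    # Collect markdown headings, stripped of '#' marks and lowercased.
    headings = [ln.lstrip("#").strip().lower()
                for ln in lines if ln.startswith("#")]
    for section in REQUIRED_SECTIONS:
        if not any(section in heading for heading in headings):
            problems.append(f"missing section: {section}")
    return problems
```

Calling `check_claude_md(open("CLAUDE.md").read())` returns an empty list when the file is within budget and has a heading for each of the three items.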

━━━━━━━━━━━━━━━

2️⃣ .claude/skills/ = Reusable Expert Modes

Stop repeating instructions in prompts.

Turn common workflows into reusable skills.

Examples:

• code review checklist

• refactoring playbook

• debugging workflow

• release procedures

Now Claude can switch into specialized modes instantly.

Result:

More consistent outputs across sessions and teammates.

━━━━━━━━━━━━━━━

3️⃣ .claude/hooks/ = Guardrails

Models forget.

Hooks don’t.

Use hooks for things that must always happen automatically.

Examples:

• run formatters after edits

• trigger tests after core changes

• block sensitive directories (auth, billing, migrations)

Hooks turn AI workflows into reliable engineering systems.
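
As an illustration of the "block sensitive directories" example, here is a hedged Python sketch of a guard script. It assumes the hook is given a JSON payload with a `tool_input.file_path` field and that exit code 2 signals "block this action"; check both against the current Claude Code hooks documentation before relying on them. The directory names come from the list above, and the function names are hypothetical.

```python
import json
import sys
from pathlib import PurePosixPath

# Directory names taken from the examples in the post.
SENSITIVE_DIRS = ("auth", "billing", "migrations")


def is_sensitive(file_path):
    """True if any directory component of the path is a protected name."""
    parts = PurePosixPath(file_path.replace("\\", "/")).parts
    return any(part in SENSITIVE_DIRS for part in parts[:-1])


def decide(payload):
    """Map a hook payload to (exit_code, message); 2 means block (assumed)."""
    file_path = payload.get("tool_input", {}).get("file_path", "")
    if file_path and is_sensitive(file_path):
        return 2, f"Blocked: {file_path} is in a protected directory"
    return 0, ""


def main(stream=sys.stdin):
    """Read the payload, print any message to stderr, return the exit code."""
    code, message = decide(json.load(stream))
    if message:
        print(message, file=sys.stderr)
    return code

# When used as a script, end the file with: raise SystemExit(main())
```

Keeping the decision in `decide` makes the rule easy to unit test without piping JSON through stdin.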

━━━━━━━━━━━━━━━

4️⃣ docs/ = Progressive Context

Don’t overload prompts with information.

Instead, let Claude navigate your documentation.

Examples:

• architecture overview

• ADRs (engineering decisions)

• operational runbooks

Claude doesn’t need everything in memory.

It just needs to know where truth lives.

━━━━━━━━━━━━━━━

5️⃣ Local CLAUDE.md for Critical Modules

Some areas of your system have hidden complexity.

Add local context files there.

Example:

src/auth/CLAUDE.md

src/persistence/CLAUDE.md

infra/CLAUDE.md

Now Claude understands the danger zones exactly when it works in them.

This dramatically reduces mistakes.
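
To make the "local context" idea concrete, the Python sketch below collects every `CLAUDE.md` that sits between the repository root and a given file, ordered from general to specific. It illustrates the layout only; it is not a description of how Claude Code itself resolves these files, and `context_files_for` is a hypothetical helper.

```python
from pathlib import Path


def context_files_for(target, repo_root, name="CLAUDE.md"):
    """Return context files that apply to `target`, ordered root-first."""
    repo_root = Path(repo_root).resolve()
    directory = Path(target).resolve().parent
    found = []
    while True:
        candidate = directory / name
        if candidate.is_file():
            found.append(candidate)
        # Stop at the repo root, or at the filesystem root as a safety net.
        if directory == repo_root or directory == directory.parent:
            break
        directory = directory.parent
    return list(reversed(found))
```

For a file such as `src/auth/login.py`, this would return the root `CLAUDE.md` followed by `src/auth/CLAUDE.md` when both exist, while a file under a directory without its own context file gets only the root one.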

━━━━━━━━━━━━━━━

Here’s the shift most people miss:

Prompting is temporary.

Structure is permanent.

Once your repository is designed for AI:

Claude stops acting like a chatbot...

…and starts behaving like a project-native engineer. 🚀
