xia0nan

47 posts

@xia0nan

Staff Data Scientist @ Agoda. Working on #NLP, #CV applications in tech/travel/banking domain. Ex-Wego, ex-OCBC AI lab. NUS | Gatech. GitHub @xia0nan

Singapore · Joined November 2019

220 Following · 14 Followers

xia0nan retweeted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
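A minimal sketch of the backlink step, assuming the wiki uses Obsidian-style `[[note]]` links (the post does not say which link syntax the compiled wiki uses). The function name `build_backlinks` is hypothetical, and in the workflow described the LLM maintains this structure rather than a script:

```python
import re
from collections import defaultdict
from pathlib import Path

# Captures the target of [[target]], [[target|alias]] or [[target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")

def build_backlinks(wiki_dir):
    """Map each note name to the sorted list of notes that link to it."""
    backlinks = defaultdict(set)
    for path in Path(wiki_dir).rglob("*.md"):
        source = path.stem
        text = path.read_text(encoding="utf-8")
        for target in WIKILINK.findall(text):
            target = target.strip()
            # Ignore empty targets and self-links.
            if target and target != source:
                backlinks[target].add(source)
    return {name: sorted(sources) for name, sources in backlinks.items()}
```

Calling `build_backlinks("wiki/")` on a directory of notes returns a plain dictionary that could be written back into the wiki as an index file.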

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
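The health checks described here are LLM-driven. As a much simpler mechanical stand-in, the sketch below flags links that point at notes which do not exist. It assumes Obsidian-style `[[note]]` links and a hypothetical function name `find_broken_links`; neither comes from the post:

```python
import re
from pathlib import Path

# Captures the target of [[target]], [[target|alias]] or [[target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")

def find_broken_links(wiki_dir):
    """Return {note_name: [missing targets]} for notes with dangling links."""
    paths = list(Path(wiki_dir).rglob("*.md"))
    known = {p.stem for p in paths}
    broken = {}
    for path in paths:
        text = path.read_text(encoding="utf-8")
        targets = {t.strip() for t in WIKILINK.findall(text)}
        missing = sorted(t for t in targets if t and t not in known)
        if missing:
            broken[path.stem] = missing
    return broken
```

The missing targets double as a list of candidate articles that an LLM could be asked to write next.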

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
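A sketch of what a small, naive search engine over a directory of .md files could look like, using a basic TF-IDF score. This is an illustration under assumptions, not the tool from the post: the function names, the tokenizer, and the scoring formula are all hypothetical choices.

```python
import math
import re
from collections import Counter
from pathlib import Path

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(wiki_dir):
    """Return {relative_path: Counter of term counts} for every .md file."""
    root = Path(wiki_dir)
    return {
        str(p.relative_to(root)): Counter(tokenize(p.read_text(encoding="utf-8")))
        for p in root.rglob("*.md")
    }

def search(index, query, top_k=5):
    """Rank documents by a simple TF-IDF score over the query terms."""
    n_docs = len(index)
    scores = Counter()
    for term in set(tokenize(query)):
        df = sum(1 for counts in index.values() if term in counts)
        if df == 0:
            continue  # term appears nowhere in the wiki
        idf = math.log((1 + n_docs) / (1 + df)) + 1.0
        for doc, counts in index.items():
            if term in counts:
                tf = counts[term] / sum(counts.values())
                scores[doc] += tf * idf
    return [doc for doc, _ in scores.most_common(top_k)]
```

Wrapping `search` in a small `argparse` entry point would give the kind of CLI that an agent can call as a tool.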

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

xia0nan retweeted

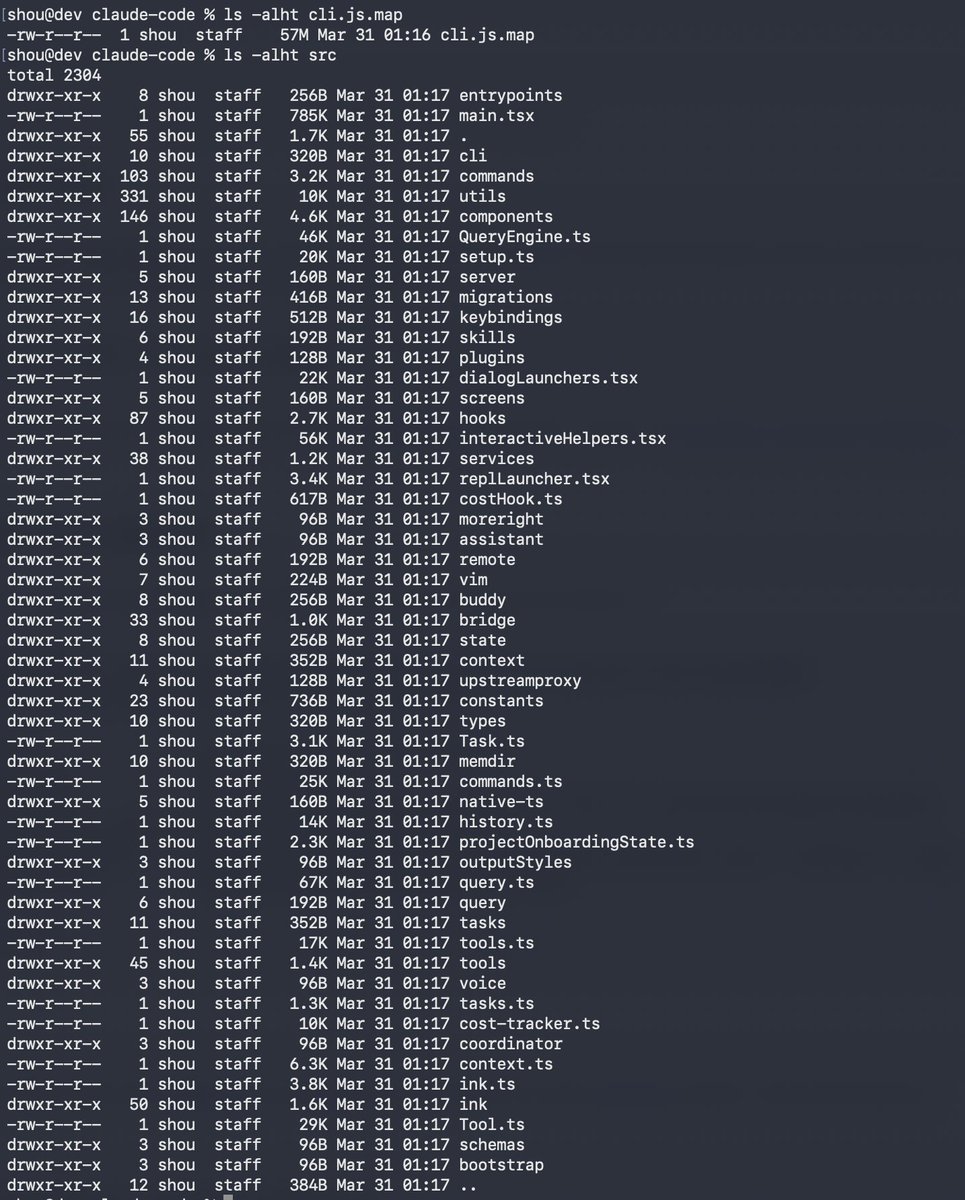

Claude code source code has been leaked via a map file in their npm registry!

Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

xia0nan retweeted

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)

- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.
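The loop described above can be pictured as a greedy search that keeps a change only when validation loss improves. The sketch below is not the repo's code: `propose` and `evaluate` are hypothetical callables standing in for the agent's edit to the training script and for one fixed-budget training run, and `history` plays the role of the accumulated commits.

```python
def research_loop(initial_config, propose, evaluate, n_trials):
    """Greedy search: keep a proposed config only if it lowers validation loss."""
    best_config = initial_config
    best_loss = evaluate(best_config)
    history = [(best_config, best_loss)]  # analogous to commits on the branch
    for _ in range(n_trials):
        candidate = propose(best_config)  # stand-in for the agent's code change
        loss = evaluate(candidate)        # stand-in for one training run
        if loss < best_loss:
            best_config, best_loss = candidate, loss
            history.append((candidate, loss))
    return best_config, best_loss, history
```

Because rejected candidates are discarded, the losses recorded in `history` are strictly decreasing, which matches the idea of only committing better settings.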

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)

xia0nan retweeted

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow.

Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes.

As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks *in English* and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top tier "agentic engineering" feels very high right now.

It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges. But imo, this is nowhere near "business as usual" time in software.

xia0nan retweeted

I'm Boris and I created Claude Code. I wanted to quickly share a few tips for using Claude Code, sourced directly from the Claude Code team. The way the team uses Claude is different than how I use it. Remember: there is no one right way to use Claude Code -- everyone's setup is different. You should experiment to see what works for you!

Let's build GPT: from scratch, in code, spelled out. youtu.be/kCc8FmEb1nY?si… via @YouTube

Finally done with this one

Today's conversation with ChatGPT:

- Vector DB in RAG chatgpt.com/share/67f82a55…

xia0nan retweeted

xAI's API is live! - try it out @ console.x.ai

* 128k token context

* Function calling support

* Custom system prompt support

* Compatible with OpenAI & Anthropic SDKs

* $25/mo in free credits till EOY

x.ai/api

Toby Pohlen @TobyPhln

You now have $25/month of free API credits until the end of the year. If you have purchased credits already, you'll get the equivalent amount in additional free credits. x.ai/blog/api

📢📢 Lectures of @MITDeepLearning are officially starting this Monday 4/29 -- #FREE + online for all!

📅 New lecture every Monday at 10am ET

🤩 New updates! #LLMs, #GenAI, #Transformers, #Diffusion, #Robotics & more!

👀 Sign up for live updates youtube.com/channel/UCtslD…

xia0nan retweeted

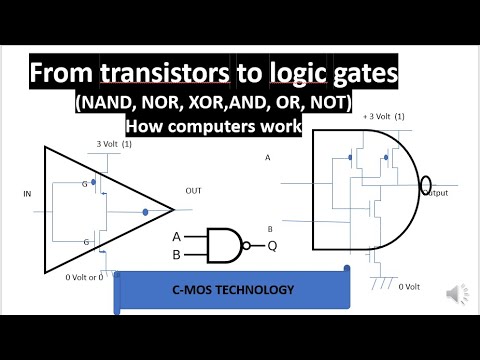

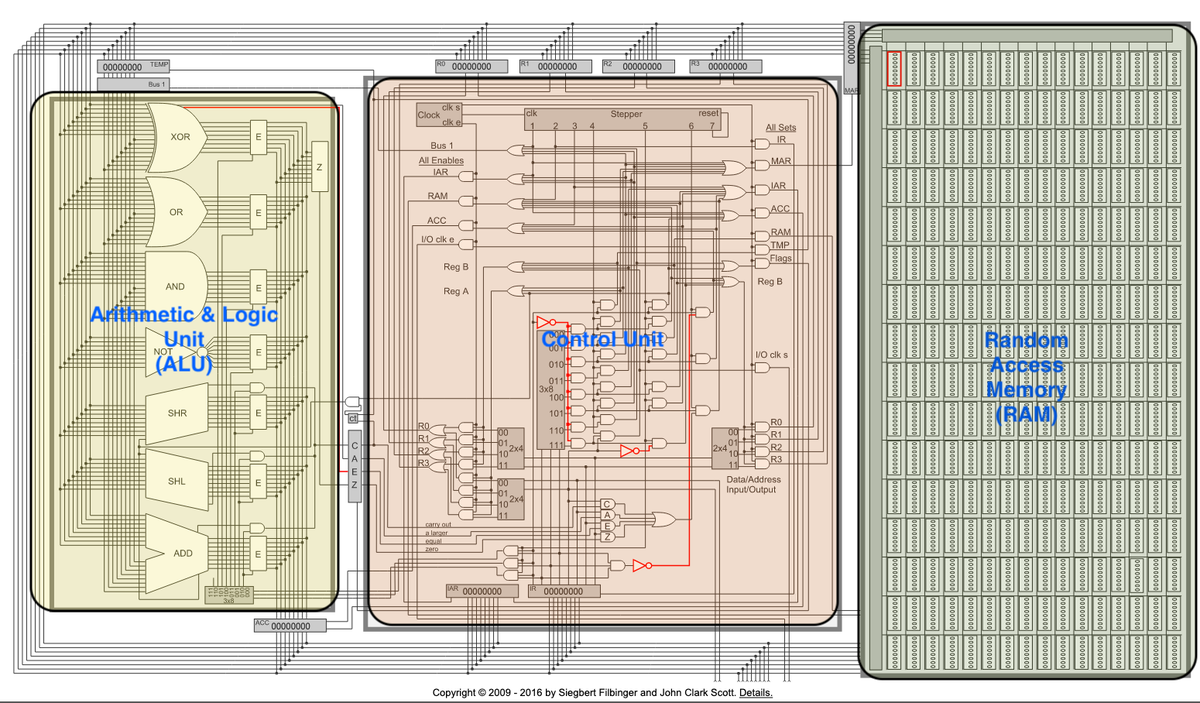

Actually this was really good - a tour from one transistor to a small CPU (Scott CPU, to be precise).

The YouTube playlist:

youtube.com/watch?v=HaBMAD…

I also haven't yet come across the "But How Do It Know" by Scott, which this is based on, and which looks great:

amazon.com/But-How-Know-P…

Turns out this is a whole deeper rabbit hole of people who've also built + simulated it in code, e.g.:

djharper.dev/post/2019/05/2…

Now I must resist the temptation to simulate Scott CPU in C, add tensor cores to it, move it to an FPGA and get it to inference a Llama.

mohh @mohbibi_

if you want to understand how computers work at the hardware level. I've seen the first video, banger so far.

xia0nan retweeted

# on shortification of "learning"

There are a lot of videos on YouTube/TikTok etc. that give the appearance of education, but if you look closely they are really just entertainment. This is very convenient for everyone involved: the people watching enjoy thinking they are learning (but actually they are just having fun). The people creating this content also enjoy it because fun has a much larger audience, fame and revenue. But as far as learning goes, this is a trap. This content is an epsilon away from watching the Bachelorette. It's like snacking on those "Garden Veggie Straws", which feel like you're eating healthy vegetables until you look at the ingredients.

Learning is not supposed to be fun. It doesn't have to be actively not fun either, but the primary feeling should be that of effort. It should look a lot less like that "10 minute full body" workout from your local digital media creator and a lot more like a serious session at the gym. You want the mental equivalent of sweating. It's not that the quickie doesn't do anything, it's just that it is wildly suboptimal if you actually care to learn.

I find it helpful to explicitly declare your intent up front as a sharp, binary variable in your mind. If you are consuming content: are you trying to be entertained or are you trying to learn? And if you are creating content: are you trying to entertain or are you trying to teach? You'll go down a different path in each case. Attempts to seek the stuff in between actually clamp to zero.

So for those who actually want to learn. Unless you are trying to learn something narrow and specific, close those tabs with quick blog posts. Close those tabs of "Learn XYZ in 10 minutes". Consider the opportunity cost of snacking and seek the meal - the textbooks, docs, papers, manuals, longform. Allocate a 4 hour window. Don't just read, take notes, re-read, re-phrase, process, manipulate, learn.

And for those actually trying to educate, please consider writing/recording longform, designed for someone to get "sweaty", especially in today's era of quantity over quality. Give someone a real workout. This is what I aspire to in my own educational work too. My audience will decrease. The ones that remain might not even like it. But at least we'll learn something.

xia0nan retweeted

Official post on Mixtral 8x7B: mistral.ai/news/mixtral-o…

Official PR into vLLM shows the inference code:

github.com/vllm-project/v…

New HuggingFace explainer on MoE very nice:

huggingface.co/blog/moe

In naive decoding, performance of a bit above 70B (Llama 2), at inference speed of ~12.9B dense model (out of total 46.7B params).

Notes:

- Glad they refer to it as "open weights" release instead of "open source", which would imo require the training code, dataset and docs.

- "8x7B" name is a bit misleading because it is not all 7B params that are being 8x'd, only the FeedForward blocks in the Transformer are 8x'd, everything else stays the same. Hence also why total number of params is not 56B but only 46.7B.

- More confusion I see is around expert choice, note that each token *and also* each layer selects 2 different experts (out of 8).

- Mistral-medium 👀
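The parameter note above can be checked with a little arithmetic. The sketch below assumes a simplified two-part split that is not stated in the post: a shared part used by every token (attention, embeddings, and so on) plus 8 equally sized experts, of which 2 are active per token. Under that assumption the two quoted figures (46.7B total, ~12.9B active) determine both parts. The function name `split_moe_params` is hypothetical.

```python
def split_moe_params(total_b, active_b, n_experts=8, experts_per_token=2):
    """Solve total = shared + n*expert and active = shared + k*expert (in billions)."""
    expert_b = (total_b - active_b) / (n_experts - experts_per_token)
    shared_b = active_b - experts_per_token * expert_b
    return shared_b, expert_b

shared_b, expert_b = split_moe_params(46.7, 12.9)
print(f"shared ≈ {shared_b:.2f}B, per-expert ≈ {expert_b:.2f}B")
# prints: shared ≈ 1.63B, per-expert ≈ 5.63B
```

Here "per-expert" means one expert's feed-forward parameters summed over all layers. Since only those blocks are replicated 8 times, the total comes out near 1.63 + 8 × 5.63 ≈ 46.7B rather than 8 × 7 = 56B.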

Guillaume Lample @ NeurIPS 2024 @GuillaumeLample

Very excited to release our second model, Mixtral 8x7B, an open weight mixture of experts model. Mixtral matches or outperforms Llama 2 70B and GPT3.5 on most benchmarks, and has the inference speed of a 12B dense model. It supports a context length of 32k tokens. (1/n)

xia0nan retweeted

This is huge: Llama-v2 is open source, with a license that authorizes commercial use!

This is going to change the landscape of the LLM market.

Llama-v2 is available on Microsoft Azure and will be available on AWS, Hugging Face and other providers

Pretrained and fine-tuned models are available with 7B, 13B and 70B parameters.

Llama-2 website: ai.meta.com/llama/

Llama-2 paper: ai.meta.com/research/publi…

A number of personalities from industry and academia have endorsed our open source approach: about.fb.com/news/2023/07/l…
