Lyre Calliope | 🦋@captaincalliope.blue

18.2K posts

@CaptainCalliope

🧭Exploring how to build greater self-determination and equity in and thru tech

Joined April 2010

2.7K Following, 1.4K Followers

Lyre Calliope | 🦋@captaincalliope.blue retweeted

@BoyandPiano Set up a blue/blacksky account! It'll be great! We've got nice stuff that side of the internet. Like moderators.

Lyre Calliope | 🦋@captaincalliope.blue retweeted

My colleague @ChicagoWaddup did a dope interview on tech fascism & the Network State for Liberation Hour 104.5 FM Radio Free Urbana!

Support independent media, get a whole lot of information about what we are facing from people organizing against it!

youtu.be/Opuo_L3ah98?si…

I tell this story periodically, but it seems like it's time again:

General Motors ran an automobile manufacturing plant in Fremont, California, that was one of the worst in the country. Accident rates and defects were astronomical. Absenteeism was through the roof. They decided to fix that through a joint venture with Toyota called NUMMI.

Toyota came in and implemented TPS (Lean), and the turnaround was dramatic. Within a few months, NUMMI was a model of perfection. Defects fell to almost zero, as did absenteeism. A critical part of that turnaround was giving the teams control over their own practices and processes. Toyota did NOT install the workstation-level practices that worked for them in Japan. Instead, the teams were given strategic goals, and it was up to each team to decide how best to fulfil them. The other critical factor was Kaizen, or continuous improvement (and by "continuous" I mean "continuous": every minute of every hour of every day, none of this once-every-two-weeks retro stuff). Teams at various workstations coordinated as needed, but multi-team retros occurred only when a defect was detected and someone pulled the Andon Cord, thereby stopping that part of the line until global processes were changed so the defect couldn't happen again. The teams implemented any necessary changes.

Part of TPS is to document those practices. The good General took that documentation back to Detroit, plonked it on management's desk, and said, "You have to work as described in these docs." That was an utter failure. Pretty much every metric got worse. The same processes and practices that worked wonders in Fremont did active damage in Detroit.

What GM didn't get is that the key element that made things work so well in Fremont was team autonomy—the fact that each and every team developed and was responsible for its own process and practices. The actual processes the teams came up with were much less important. Process does not transfer. There were universal guidelines (e.g. Kaizen), but nobody told the teams how to do their work.

Now, consider something like Scrum. Like NUMMI, Sutherland and Schwaber mixed a lot of Lean thinking into what they were doing. The first, autonomous Scrum team came up with a process that worked for them, and they improved. However, PROCESS DOES NOT TRANSFER. Team autonomy—the team's ability to define how it works—is the critical element. Any organization that just mindlessly follows Sutherland/Schwaber's documentation will get the same results that GM got in Detroit. Failure. (Or at least no real improvement).

Worth thinking about.

No, it boils down to the idea that you are entitled to the labor of others. That is why socialism involves redistribution of the earnings of people who work. The philosophy that says you’re entitled to the fruits of your own labor is called “capitalism”, and that is the one in which you get to hold on to what you earn.

Socialism very literally boils down to the belief that you should get to enjoy the fruits of your own labor.

Alice Smith@TheAliceSmith

Socialism boils down to the belief that someone else owes you a living.

Lyre Calliope | 🦋@captaincalliope.blue retweeted

@uwukko I've practically devoted notebooks to this over the decades. Lol

HMU if you want a mini-browser brand strategy session.

@mjbukow @connordavis_ai Do you have a writeup or prompts shared somewhere? This sounds interesting!

@connordavis_ai I did this whole research angle where I used emojis as proxies for emotional reasoning. It made the amount of emojis used rise to an incredibly annoying level, but the EIQ seemed way more coherent.

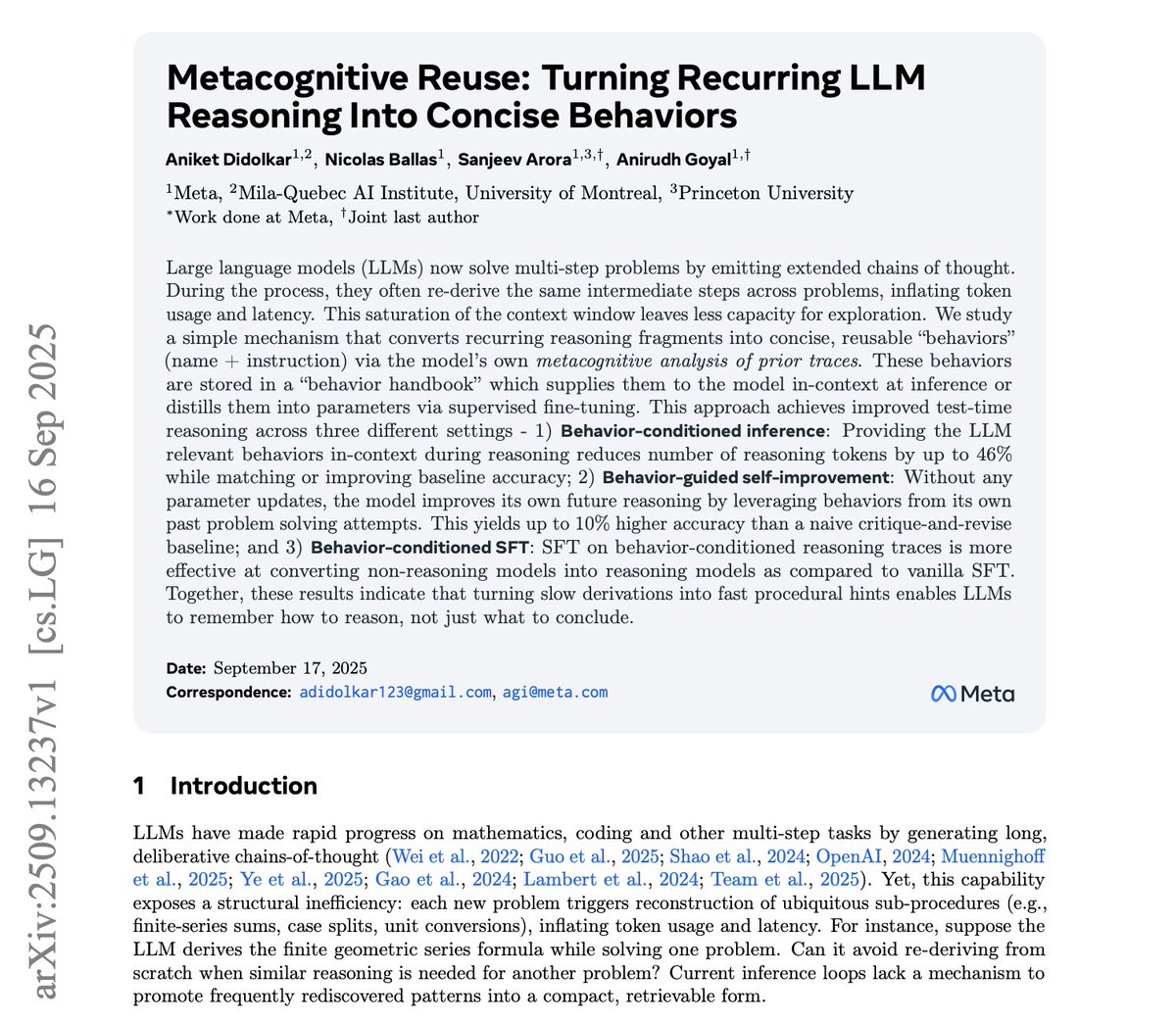

🚨 Meta just exposed a massive inefficiency in AI reasoning

Current models burn through tokens re-deriving the same basic procedures over and over. Every geometric series problem triggers a full derivation of the formula. Every probability question reconstructs inclusion-exclusion from scratch. It's like having a mathematician with amnesia.

Their solution: "behaviors" - compressed reasoning patterns extracted from the model's own traces. Instead of storing facts like RAG systems, they store procedural knowledge. "behavior_inclusion_exclusion" becomes a reusable cognitive tool rather than something to rediscover each time.

The results crush current approaches. 46% fewer tokens with maintained accuracy on MATH problems. 10% better accuracy on AIME with behavior-guided self-improvement versus standard critique-and-revise.

But here's the kicker: when they fine-tuned models on behavior-conditioned reasoning, smaller models didn't just get faster - they became fundamentally better reasoners. The behaviors act as scaffolding for building sophisticated reasoning capabilities.

This flips everything. Instead of "think longer = think better," we get "remember how to think = think better." No architectural changes needed. Just better utilization of patterns the models already discover.

The current paradigm - scale context length for redundant reasoning - looks wasteful now. We're paying enormous computational costs for models to repeatedly rediscover their own knowledge.

This suggests reasoning breakthroughs won't come from bigger models or longer chains of thought, but from systems that accumulate procedural memory. Models that learn not just what to conclude, but how to think efficiently.

The efficiency gains alone make this commercially critical. But the deeper insight challenges our entire approach to reasoning model development.

@connordavis_ai @readwise save thread

@rubenhassid @readwise save thread

If this thread opened your eyes about AI and the future of work:

1. Follow me @rubenhassid for more threads around what's happening around AI and its implications.

2. RT the first tweet

Ruben Hassid@rubenhassid

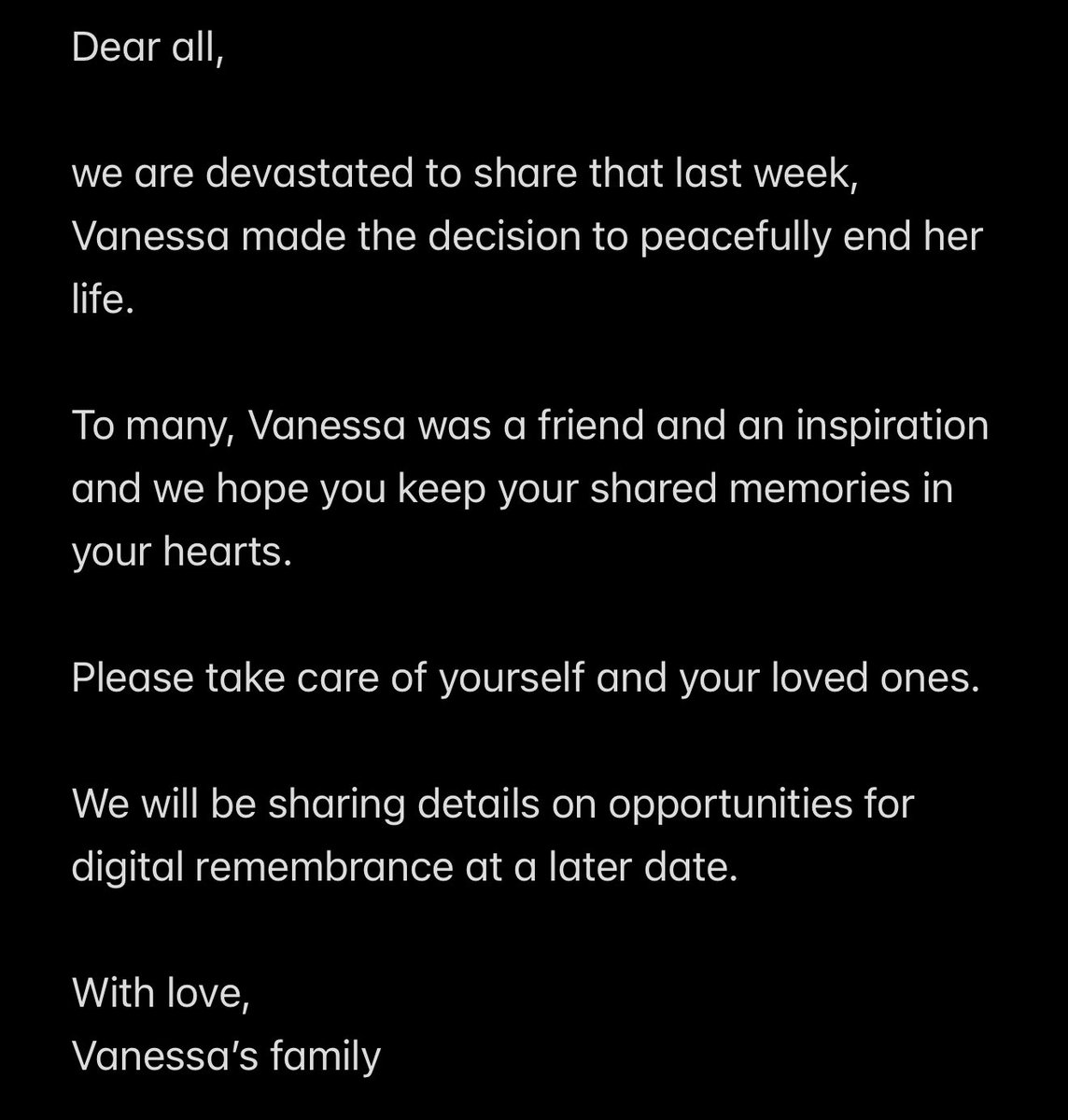

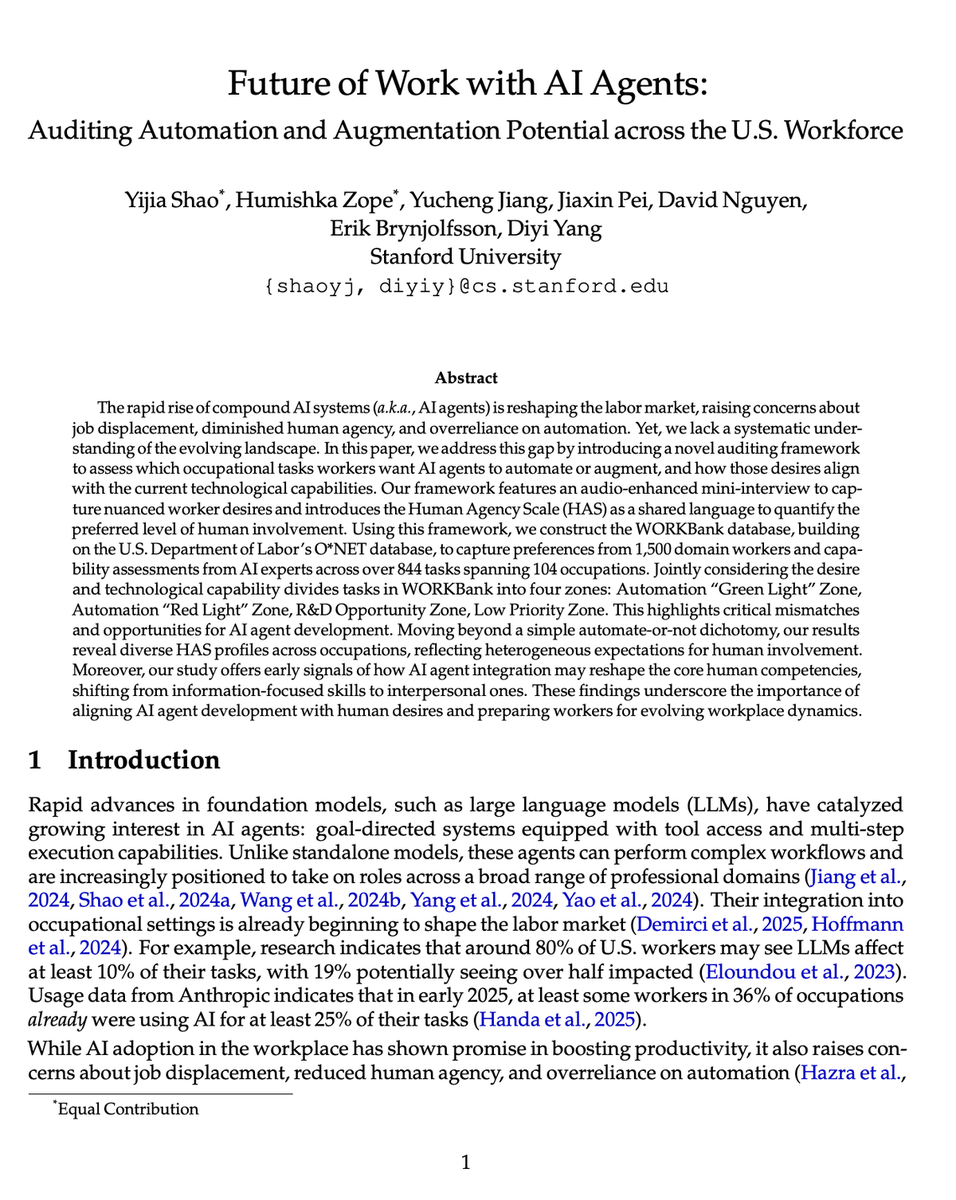

BREAKING: Stanford just surveyed 1,500 workers and AI experts about which jobs AI will actually replace and automate. Turns out, we've been building AI for all the WRONG jobs. Here's what they discovered: (hint: the "AI takeover" is happening backwards)

@multisynq uhh.. what is happening here?

@aigleeson @readwise save thread

@ProtonDrive @asklumo It's giving U2 album.

Should we prep a folder for you with @asklumo wallpapers? Drop us a 🐈‍⬛ for yes!

@alex_prompter @readwise save thread
