Arvind Jain @jainarvind

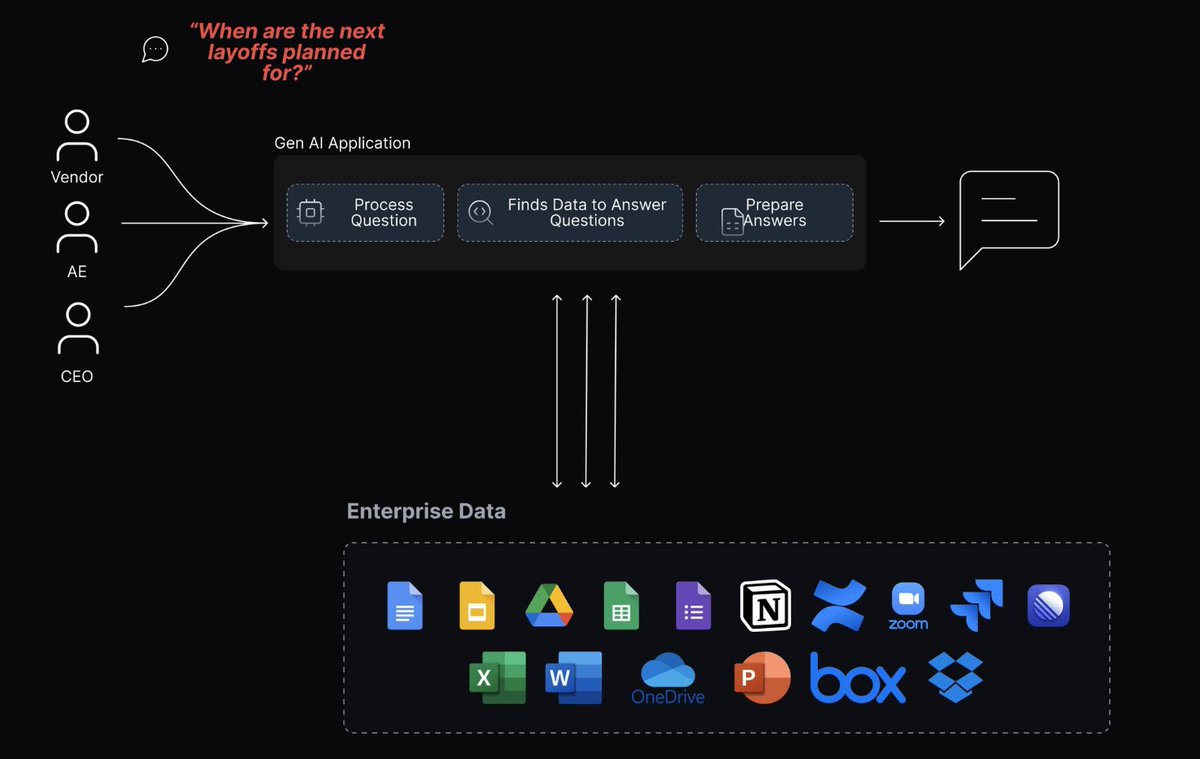

Security, governance, and AI safety in GenAI deployments are top of mind for CIOs. In fact, in a survey @glean conducted earlier this year, 73% of CIOs said that generative AI tools not vetted by the IT department are a threat to the business.

AI security is a core focus for Glean and our customers. Enterprises rely on Glean for its strict permissions enforcement, single-tenant model, and AI governance practices.

I shared some of the ways we approach AI security with @srimuppidi from @BusinessInsider, which you can read in the article below. I'm also expanding on them here:

1️⃣ Permissions: LLMs should only access data that the user is allowed to see. This keeps sensitive information safe and prevents data leaks.

2️⃣ Training: Since LLMs can remember the data they were trained on, it's crucial to never use company data for training. This helps protect proprietary company information. Glean has agreements with leading LLM providers that promise zero-day retention and no training on your data.

3️⃣ Evaluation: Our main focus is on work AI, so users expect answers that are based on company data. We use our own AI evaluators to ensure the answers generated by LLMs are accurate and reliable.