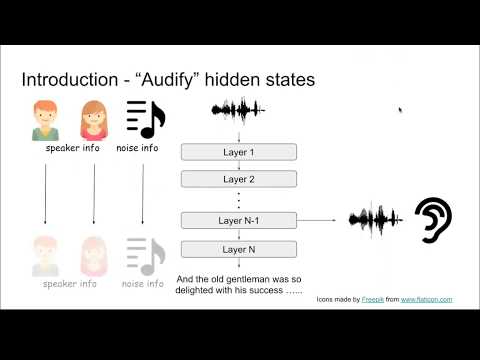

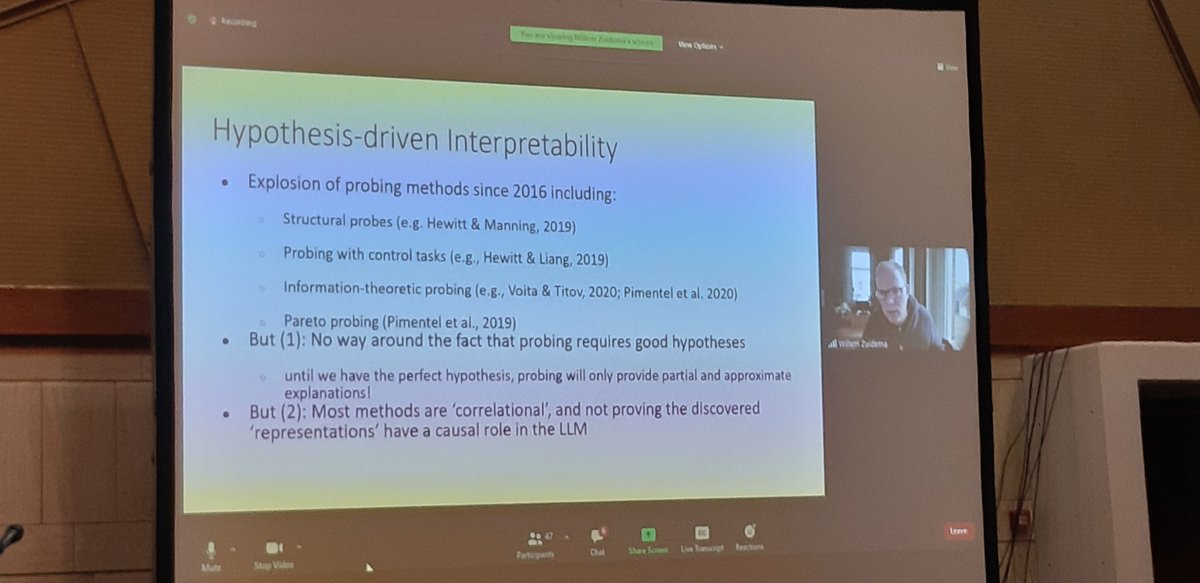

🚨Preprint🚨 Interpretable explanations of NLP models are a prerequisite for numerous goals (e.g. safety, trust). We introduce Causal Proxy Models, which provide rich concept-level explanations and can even entirely replace the models they explain. arxiv.org/abs/2209.14279 1/7