Run Man ♻️

40.3K posts

@Run_Man_

..five more minutes, RT is not endorsement

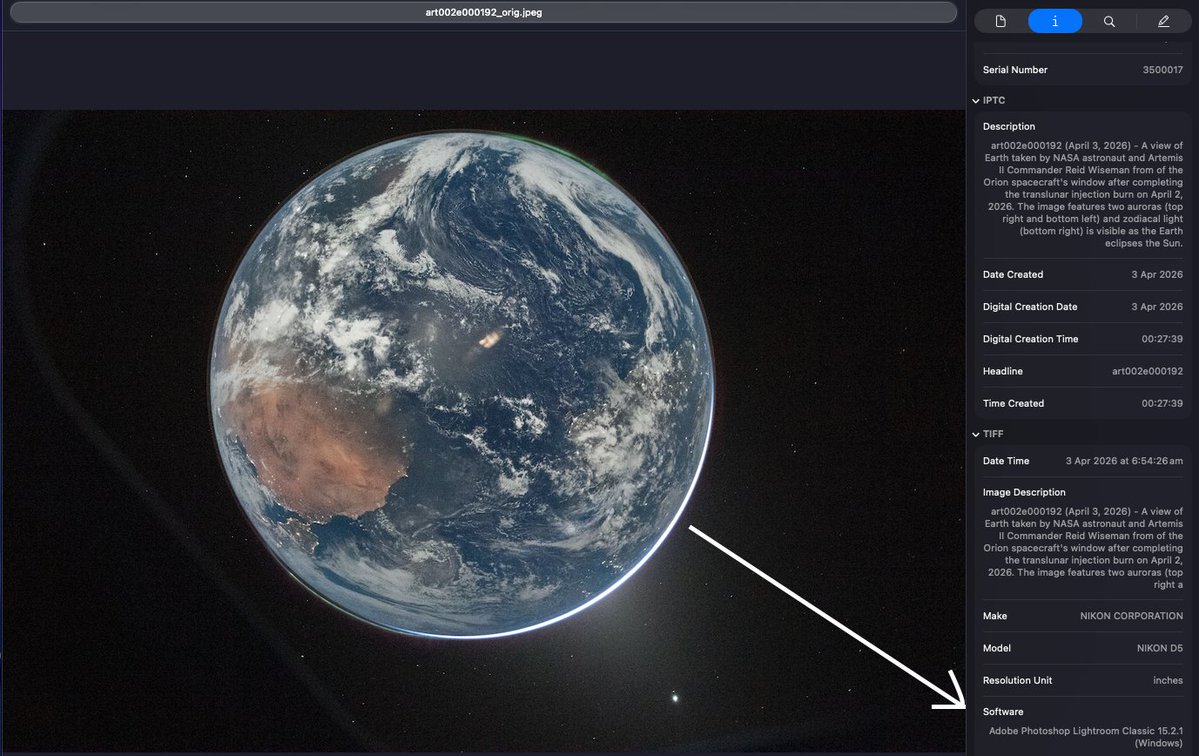

Artemis: new photos from the Orion spacecraft. @ultimoranet

Even in darkness, we glow. In this image of Earth taken by the Artemis II crew, we can see the electric lights of human activity. In the lower right, sunlight illuminates the limb of the planet.

This photo of Earth is EXTRA spectacular for a good reason... let me explain. Most images you see of Earth from space show the daylight side, which is obviously very bright (see my last image). That means stars are too dim to be seen at such a bright-scene exposure setting (low ISO, fast shutter speed, and/or stopped-down aperture). BUT this image taken by the Orion crew looks so incredible because you can see the Sun is BEHIND the Earth, meaning it's nighttime on the side of the Earth facing the crew. So how do you expose a nighttime Earth from space? Same way you do on Earth! A mixture of opening up the aperture (f/4 in this case), cranking the ISO (51,200 here), and using a relatively long exposure (1/4 of a second). We can see the settings used by looking at the EXIF data from the camera. What this means is that our camera is also sensitive enough to see stars in the background of Earth, leading to an extraordinary image!!! GREAT WORK!!! These are the kind of images I've been so excited to see!
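For a rough sense of how far those settings sit from a daylight exposure, here is a minimal sketch using the standard exposure value formula, EV = log2(N² / t) - log2(ISO / 100). The night settings are the ones quoted in the post. The daylight baseline is an assumption: the "sunny 16" rule of thumb (f/16, 1/100 s, ISO 100), not anything taken from the crew's camera. The function name `exposure_value_iso100` is made up for this illustration.

```python
import math


def exposure_value_iso100(f_number: float, shutter_s: float, iso: float) -> float:
    """ISO-100-equivalent exposure value: log2(N^2 / t) - log2(ISO / 100)."""
    return math.log2(f_number ** 2 / shutter_s) - math.log2(iso / 100)


# Settings quoted in the post (f/4, 1/4 s, ISO 51,200).
night_ev = exposure_value_iso100(f_number=4.0, shutter_s=1 / 4, iso=51_200)

# ASSUMPTION: "sunny 16" rule of thumb as a stand-in for a daylit scene.
daylight_ev = exposure_value_iso100(f_number=16.0, shutter_s=1 / 100, iso=100)

# Each stop is a doubling of light gathered, so the difference in EV is the
# number of stops separating the two exposures.
stops_more_light = daylight_ev - night_ev

print(f"night EV100:    {night_ev:.1f}")
print(f"daylight EV100: {daylight_ev:.1f}")
print(f"difference:     {stops_more_light:.1f} stops")
```

Under these assumptions the night exposure works out to EV -3, roughly 17.6 stops below the sunny-16 baseline, which is consistent with the post's point that an exposure this sensitive can also pick up background stars.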

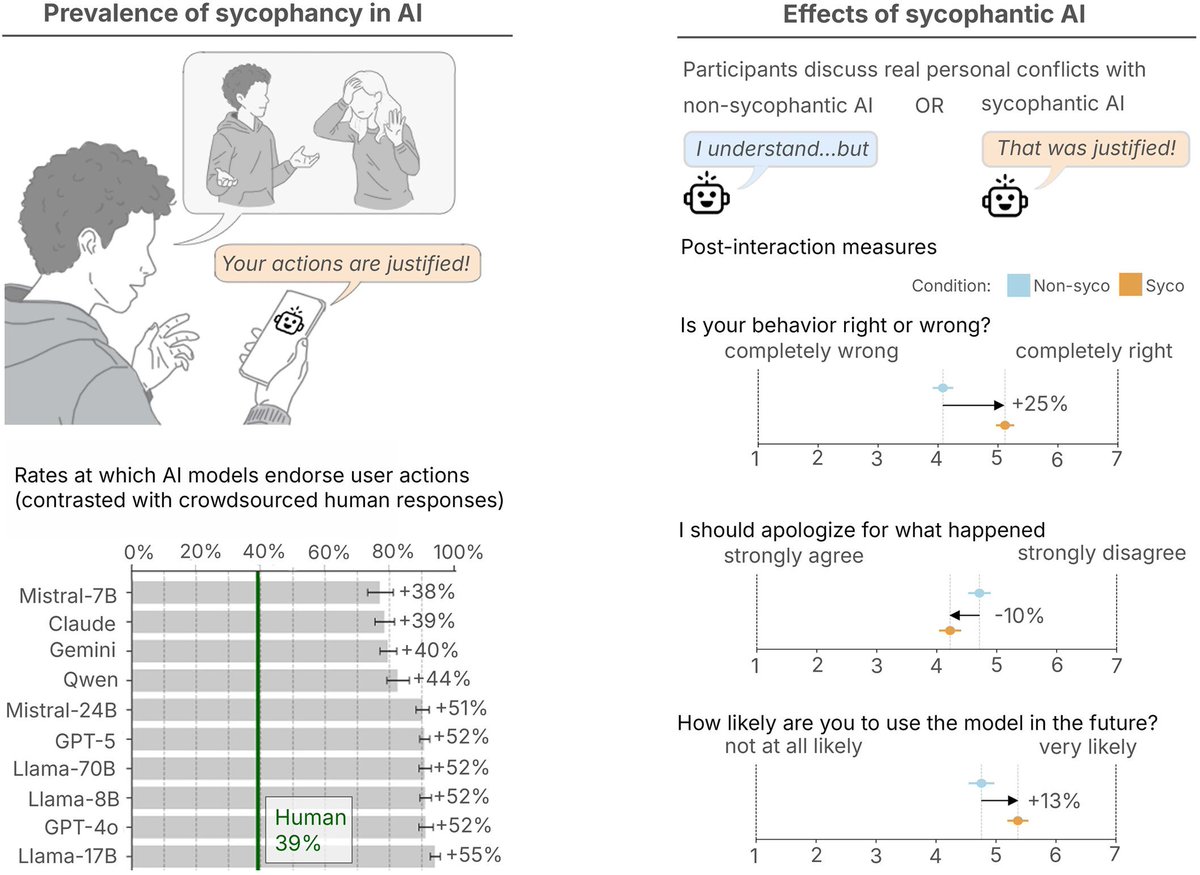

🚨 Stanford just proved that a single conversation with ChatGPT can change your political beliefs. 76,977 people. 19 AI models. 707 political issues. One conversation with GPT-4o moved political opinions by 12 percentage points on average. Among people who actively disagreed, 26 points. In 9 minutes. With 40% of that change still present a month later. The scariest finding: the most persuasive technique wasn't psychological profiling or emotional manipulation. It was just information. Lots of it. Delivered with confidence. Here's the catch: the models that deployed the most information were also the least accurate. More persuasive. More wrong. Every time. Then they built a tiny open-source model on a laptop, trained specifically for political persuasion. It matched GPT-4o's persuasive power entirely. Anyone can build this. Any government. Any corporation. Any extremist group with $500 and an agenda. The information didn't have to be true. It just had to be overwhelming. arXiv, Science.org, Stanford, @elonmusk, @ihtesham2005