Abdullah



@abdecoder

🚀: Secure Software Development ❤: Angular, .Net Core, Reactjs, FastApi. Helping businesses build secure, reactive, real-time web apps.

Today, we are emerging from stealth and launching PrismML, an AI lab with Caltech origins that is centered on building the most concentrated form of intelligence. At PrismML, we believe that the next major leaps in AI will be driven by order-of-magnitude improvements in intelligence density, not just sheer parameter count. Our first proof point is the 1-bit Bonsai 8B, a model with 1-bit weights that fits into 1.15 GB of memory and delivers over 10x the intelligence density of its full-precision counterparts. It is 14x smaller, 8x faster, and 5x more energy efficient on edge hardware while remaining competitive with other models in its parameter class. We are open-sourcing the model under the Apache 2.0 license, along with the Bonsai 4B and 1.7B models. When advanced models become small, fast, and efficient enough to run locally, the design space for AI changes immediately. We believe in a future of on-device agents, real-time robotics, offline intelligence, and entirely new products that were previously impossible. We are excited to share our vision with you and will keep working to push the frontier of intelligence to the edge.
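The memory figures can be sanity-checked with simple arithmetic. A minimal sketch, assuming a nominal 8 × 10⁹ parameters and a 16-bit (FP16/BF16) full-precision baseline; neither assumption is stated in the announcement, and the calculation ignores embeddings, scaling factors, and file metadata:

```python
# Back-of-the-envelope weight-memory arithmetic for the figures quoted above.
# Assumptions (not from the announcement): exactly 8e9 parameters and a
# 16-bit full-precision baseline. Real models carry extra overhead.

def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed to store the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def effective_bits_per_weight(n_params: float, size_gb: float) -> float:
    """Average bits per parameter implied by a stated model size."""
    return size_gb * 1e9 * 8 / n_params


N_PARAMS = 8e9      # nominal parameter count (assumption)
BONSAI_GB = 1.15    # size stated in the announcement

fp16_gb = weight_memory_gb(N_PARAMS, 16)        # 16-bit baseline
ideal_1bit_gb = weight_memory_gb(N_PARAMS, 1)   # raw sign bits only
overhead_bits = effective_bits_per_weight(N_PARAMS, BONSAI_GB)

print(f"16-bit weights:      {fp16_gb:.2f} GB")
print(f"Pure 1-bit weights:  {ideal_1bit_gb:.2f} GB")
print(f"Implied bits/weight: {overhead_bits:.2f}")
print(f"16-bit / Bonsai:     {fp16_gb / BONSAI_GB:.1f}x")
```

Under these assumptions, 16-bit weights need 16 GB and 16 / 1.15 ≈ 13.9, in line with the quoted 14x. The implied ~1.15 bits per weight leaves room for some overhead on top of the raw sign bits, but the announcement does not describe the storage format.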

@npmjs @GHSecurityLab There is an active supply chain attack on axios@1.14.1, which pulls in a malicious package published today: plain-crypto-js@4.2.1. Someone took over a maintainer account for Axios.
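A quick way to check a project is to search its lockfile for the two versions named in the report. A minimal sketch, assuming npm's lockfile v2/v3 format (a `package-lock.json` with a top-level `packages` map); it only matches exact version strings, and the list of bad versions should follow the official advisory rather than this post alone:

```python
import json
from pathlib import Path

# Versions named in the report above. Extend these sets as advisories are updated.
COMPROMISED = {
    "axios": {"1.14.1"},
    "plain-crypto-js": {"4.2.1"},
}


def find_compromised(lock: dict) -> list[tuple[str, str, str]]:
    """Return (install path, package name, version) for every flagged entry.

    Assumes the lockfile v2/v3 layout, where "packages" maps install paths such
    as "node_modules/axios" to objects with a "version" field.
    """
    hits = []
    for path, meta in lock.get("packages", {}).items():
        if not path:
            continue  # the "" key describes the root project itself
        # The name is whatever follows the last "node_modules/" segment, which
        # also covers nested installs and scoped names like "@scope/pkg".
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version")
        if version in COMPROMISED.get(name, set()):
            hits.append((path, name, version))
    return hits


def scan_lockfile(lockfile: Path) -> list[tuple[str, str, str]]:
    with lockfile.open(encoding="utf-8") as f:
        return find_compromised(json.load(f))


if __name__ == "__main__":
    lockfile = Path("package-lock.json")
    if lockfile.exists():
        for path, name, version in scan_lockfile(lockfile):
            print(f"COMPROMISED: {name}@{version} at {path}")
    else:
        print("No package-lock.json in the current directory")
```

A clean result only means these exact versions are absent from this lockfile; older v1 lockfiles, which use a `dependencies` tree instead of `packages`, are not covered by this sketch.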

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised. It contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and self-replicate. Link below.
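The `.pth` detail matters because of how Python starts up: the standard `site` module reads every `.pth` file in the site-packages directories and executes any line that begins with `import` followed by a space or tab. Such a file therefore runs on every interpreter launch, whether or not `litellm` is ever imported. A minimal audit sketch that lists those executable lines for the current interpreter; it is a generic check, not a LiteLLM-specific detector, and the only name taken from the report is `litellm_init.pth`:

```python
import site
from pathlib import Path


def executable_pth_lines(directory: Path) -> dict[str, list[str]]:
    """Map each .pth file in `directory` to the lines Python will execute.

    The `site` module executes .pth lines starting with "import" followed by a
    space or tab at interpreter startup; other lines are treated as paths.
    """
    findings = {}
    for pth in sorted(directory.glob("*.pth")):
        text = pth.read_text(encoding="utf-8", errors="replace")
        lines = [ln for ln in text.splitlines() if ln.startswith(("import ", "import\t"))]
        if lines:
            findings[pth.name] = lines
    return findings


def site_dirs() -> list[Path]:
    """Global site-packages directories, plus the user one if it is enabled."""
    dirs = list(site.getsitepackages())
    if site.ENABLE_USER_SITE:
        dirs.append(site.getusersitepackages())
    return [Path(d) for d in dirs if Path(d).is_dir()]


if __name__ == "__main__":
    for d in site_dirs():
        for name, lines in executable_pth_lines(d).items():
            flag = "  <-- name from the report" if name == "litellm_init.pth" else ""
            print(f"{d / name}{flag}")
            for ln in lines:
                print("    " + ln[:120])  # truncate long (e.g. base64) payloads
```

Legitimate packages also use executable `.pth` lines (setuptools' `distutils-precedence.pth`, for instance), so a hit means "read this file", not "compromised". The installed version itself can be read with `importlib.metadata.version("litellm")`.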

A message from our follower who works as a doorman in Etiler:

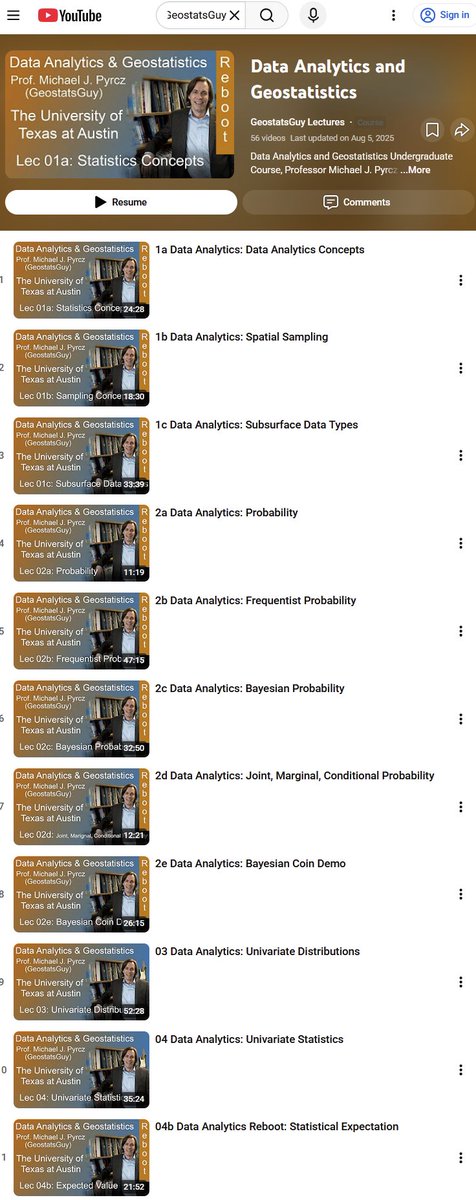

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically the nanochat LLM training core stripped down to a single-GPU, single-file version of ~630 lines of code, then:
- the human iterates on the prompt (.md)
- the AI agent iterates on the training code (.py)
The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (lower validation loss by the end) for the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.
github.com/karpathy/autor…
Part code, part sci-fi, and a pinch of psychosis :)
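The loop described above behaves like a greedy keep-if-better search: propose a change, run a fixed-budget training job, and commit only if validation loss drops. A minimal sketch of that control flow, where `toy_val_loss`, `propose_change`, `research_loop`, and the two-parameter config are hypothetical stand-ins (in the real project an AI agent edits the training script and each evaluation is a 5-minute run); discarding non-improving changes is also an assumption here, not something the post spells out:

```python
import random

# Hypothetical stand-ins: a toy quadratic "validation loss" over two
# hyperparameters replaces a real 5-minute training run.

def toy_val_loss(cfg: dict) -> float:
    # Minimum at lr=3e-3, depth=12 (arbitrary values, for illustration only).
    return (cfg["lr"] - 3e-3) ** 2 * 1e5 + (cfg["depth"] - 12) ** 2 * 0.01 + 1.0


def propose_change(cfg: dict, rng: random.Random) -> dict:
    # Stand-in for the agent's edit: perturb one setting at a time.
    new = dict(cfg)
    if rng.random() < 0.5:
        new["lr"] = max(1e-5, cfg["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        new["depth"] = max(1, cfg["depth"] + rng.choice([-2, -1, 1, 2]))
    return new


def research_loop(cfg: dict, steps: int, seed: int = 0) -> list[tuple[dict, float]]:
    """Greedy keep-if-better search; the returned list plays the role of
    the commits on the feature branch."""
    rng = random.Random(seed)
    best_loss = toy_val_loss(cfg)
    commits = [(cfg, best_loss)]
    for _ in range(steps):
        candidate = propose_change(cfg, rng)
        loss = toy_val_loss(candidate)  # one "training run"
        if loss < best_loss:            # improvement: keep it as a new commit
            cfg, best_loss = candidate, loss
            commits.append((cfg, loss))
        # otherwise the candidate is discarded (like resetting the branch)
    return commits


if __name__ == "__main__":
    history = research_loop({"lr": 1e-2, "depth": 4}, steps=50)
    for i, (cfg, loss) in enumerate(history):
        print(f"commit {i}: lr={cfg['lr']:.2e} depth={cfg['depth']} val_loss={loss:.4f}")
```

Giving every run the same fixed budget is what makes the losses comparable across commits; with variable budgets, a change could appear better simply because it trained longer.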