Prabhas


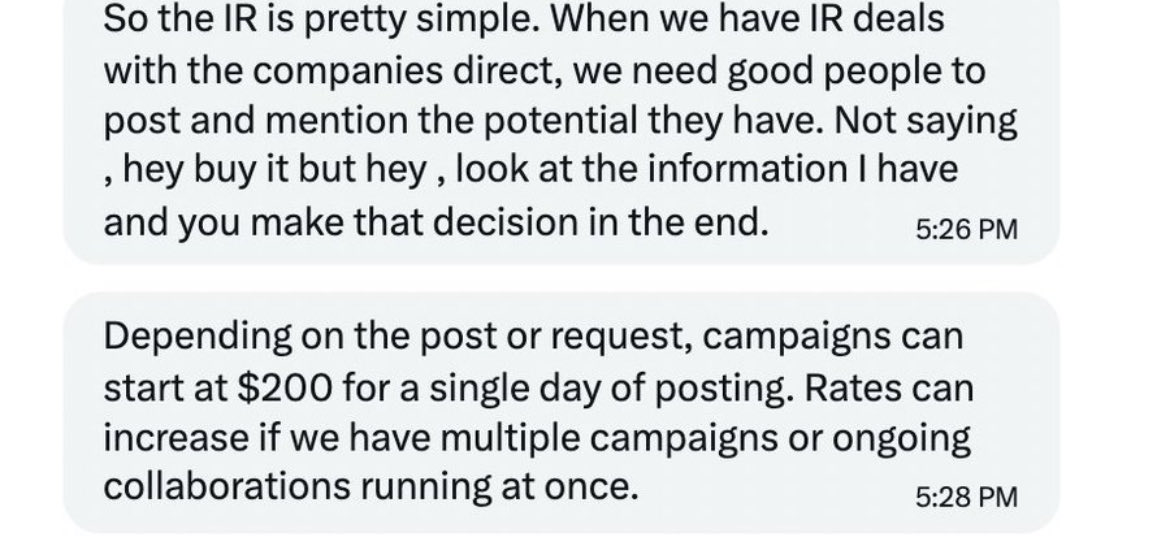

I will get a lot of hate for this. But just so people are aware, paid stock pumpers on X are more common than you think. You'd be surprised by how common it actually is. My account is relatively new and small (under 10k followers), but I've already been approached a number of times on X by middlemen/companies to do research on specific companies and post about them in exchange for fees. The middlemen deal with the companies directly, so you never come into contact with them. I refused all of them. So it is 100% happening, and some of the offers were fairly lucrative, much more so for bigger accounts. They don't tell you what to write, but they share specific research for you to post about (which is nearly always bullish). This is why I push for transparency when I see coordinated X accounts trying to push a false narrative on specific stocks. Please always do your own research; sometimes it's all a FUGAZI! $oklo $ionq $rgti $qubt $qbts $iren

this is a really bad take. for li, amazon etc., the relationships are well defined and known before the graph is constructed. but when it comes to 'memory' for agents, you don't want to rely on a graph: the relations are unknown beforehand, keeping the graph up to date is a pain if the relations are semantic, and if you use an llm to build the graph, you will suffer from models producing different relations for the same thing. don't use graphs, just give the models a really good search
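
A rough sketch of what "just give the models a really good search" could look like for agent memory, assuming notes are stored as raw text and ranked by a simple TF-IDF-style keyword score. The `SearchMemory` class, its method names, and the scoring formula are hypothetical and only for illustration; a real setup would more likely use an embedding or full-text index.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class SearchMemory:
    """Hypothetical agent memory: raw notes plus ranked keyword search.

    No relations are extracted up front, so there is no graph schema
    to design or keep in sync as new notes arrive.
    """

    def __init__(self):
        self.notes = []  # list of (original text, token counts)

    def add(self, text):
        self.notes.append((text, Counter(tokenize(text))))

    def search(self, query, k=3):
        n = len(self.notes)
        query_tokens = set(tokenize(query))

        # Document frequency: in how many notes does each query token occur?
        df = Counter()
        for _, counts in self.notes:
            for token in query_tokens:
                if counts[token]:
                    df[token] += 1

        # Score each note: term frequency weighted by token rarity.
        scored = []
        for text, counts in self.notes:
            score = sum(
                counts[token] * math.log(1 + n / df[token])
                for token in query_tokens
                if counts[token]
            )
            if score > 0:
                scored.append((score, text))

        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]


memory = SearchMemory()
memory.add("User prefers Rust for systems work")
memory.add("Deploy target is AWS us-east-1")
memory.add("User dislikes long meetings")
print(memory.search("what is the deploy target", k=1))
```

Adding a note is just an append, so nothing has to be rebuilt or reconciled when the model phrases the same fact differently; the trade-off is that retrieval quality depends entirely on how good the search is.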

I've got a MacBook w/128 GB of RAM coming today. My hypothesis: My money is better spent buying more powerful hardware and running local coding models than paying for a $100+/mo subscription. Follow for details of my setup and to see the results!

UI is pre-AI.

JUST IN: Elon Musk says AI and humanoid robots will "eliminate poverty" and "make everyone wealthy."