Rishab

172 posts

@rishabtwi

ai tingz @metaintro | prev @letsunifyai (yc w23)

you're probably underestimating how crazy things are

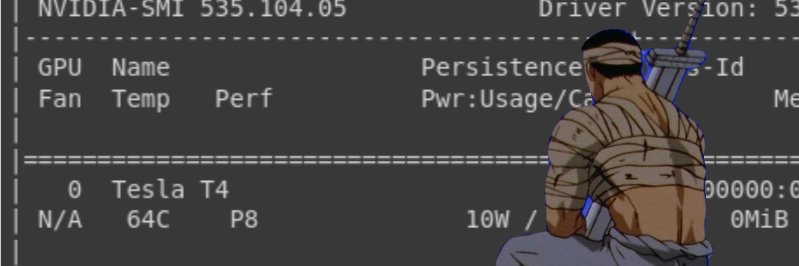

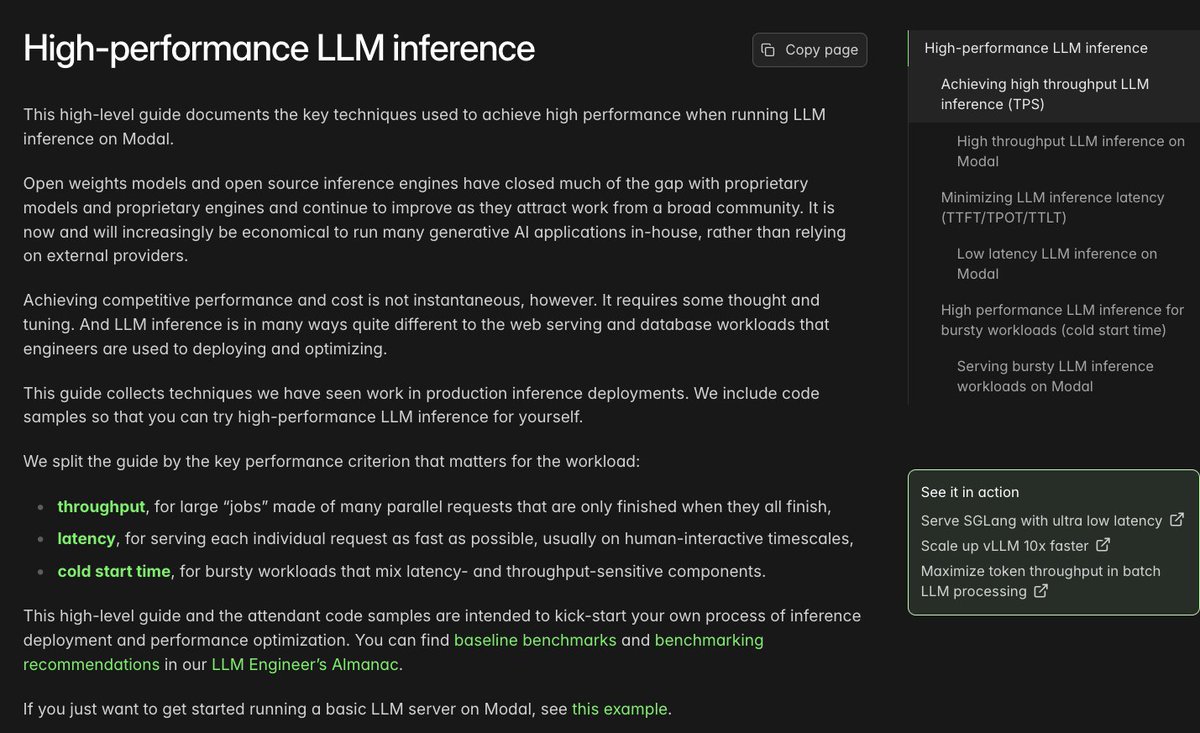

Note: Claude Code invalidates the KV cache for local models by prepending some IDs, making inference 90% slower. See how to fix it here: unsloth.ai/docs/basics/cl…
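A toy sketch of why a changing prefix hurts, assuming the inference server can only reuse cached keys and values for the longest token prefix a new request shares with an earlier one. That is a common prefix-caching design, not something taken from the linked docs. The token lists, the `id-0001` style IDs, and the helper `shared_prefix_len` are all hypothetical.

```python
def shared_prefix_len(cached, incoming):
    """Count the leading tokens that two token sequences have in common."""
    n = 0
    for a, b in zip(cached, incoming):
        if a != b:
            break
        n += 1
    return n


# Hypothetical, pre-tokenized conversation pieces.
system = ["You", "are", "a", "coding", "assistant", "."]
turn_1 = ["List", "the", "files"]
turn_2 = ["Now", "open", "main.py"]

# Stable prefix: the second request extends the first,
# so everything already processed could be reused.
first = system + turn_1
second = system + turn_1 + turn_2
stable_reuse = shared_prefix_len(first, second)

# A different ID at the very front of each request (assumed behavior):
# the first token already differs, so no prefix is shared.
first_with_id = ["id-0001"] + first
second_with_id = ["id-0002"] + second
changed_reuse = shared_prefix_len(first_with_id, second_with_id)

print(f"stable prefix: {stable_reuse} of {len(second)} tokens reusable")
print(f"changing ID:   {changed_reuse} of {len(second_with_id)} tokens reusable")
```

In this toy setup the changing ID forces the whole prompt to be reprocessed on every request, which is the kind of slowdown the note describes.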

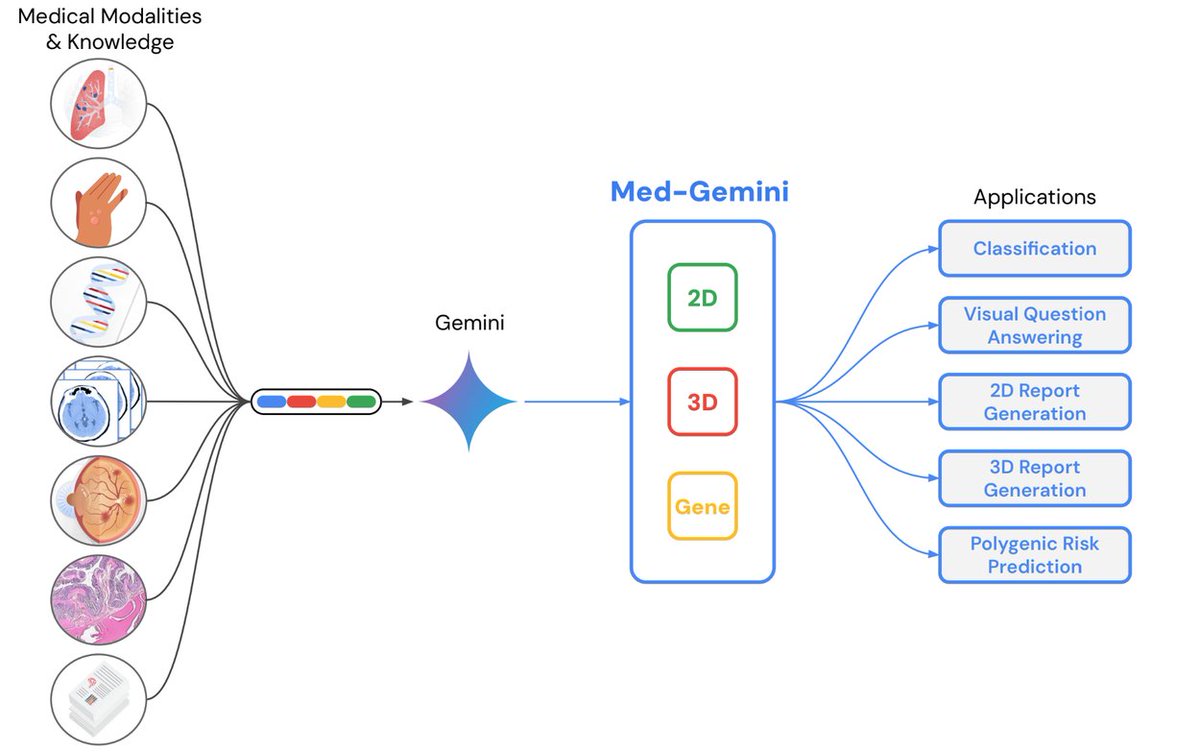

@iScienceLuvr "First of all, the exact same techniques (LLMs/foundation models) used to train ChatGPT are now being used to revolutionize medicine" Can you expand on that, please? I asked Grok, but it seems to think they are vastly different technologies.

In our investigation, we uncovered three separate bugs. They were partly overlapping, making diagnosis even trickier. We've now resolved all three bugs and written a technical report on what happened, which you can find here: anthropic.com/engineering/a-…