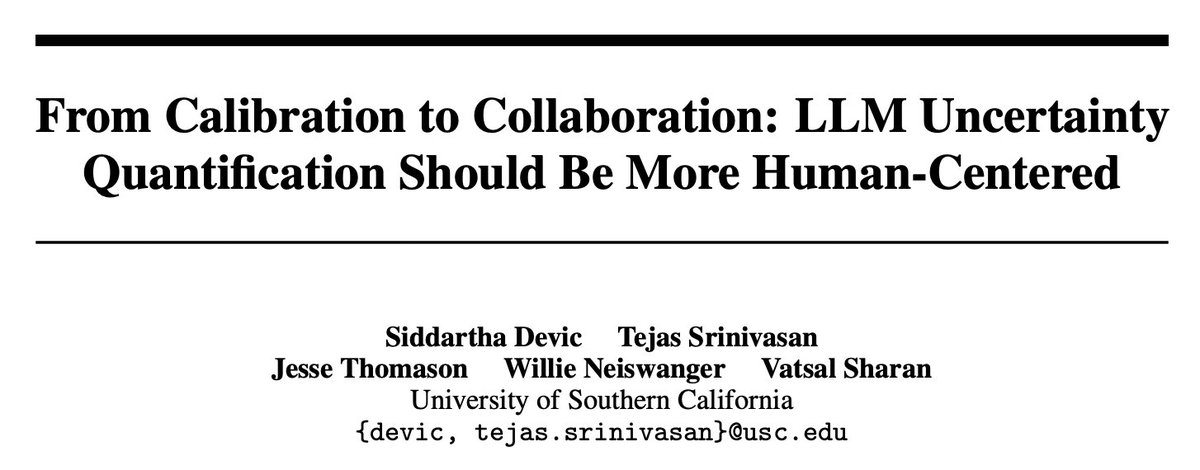

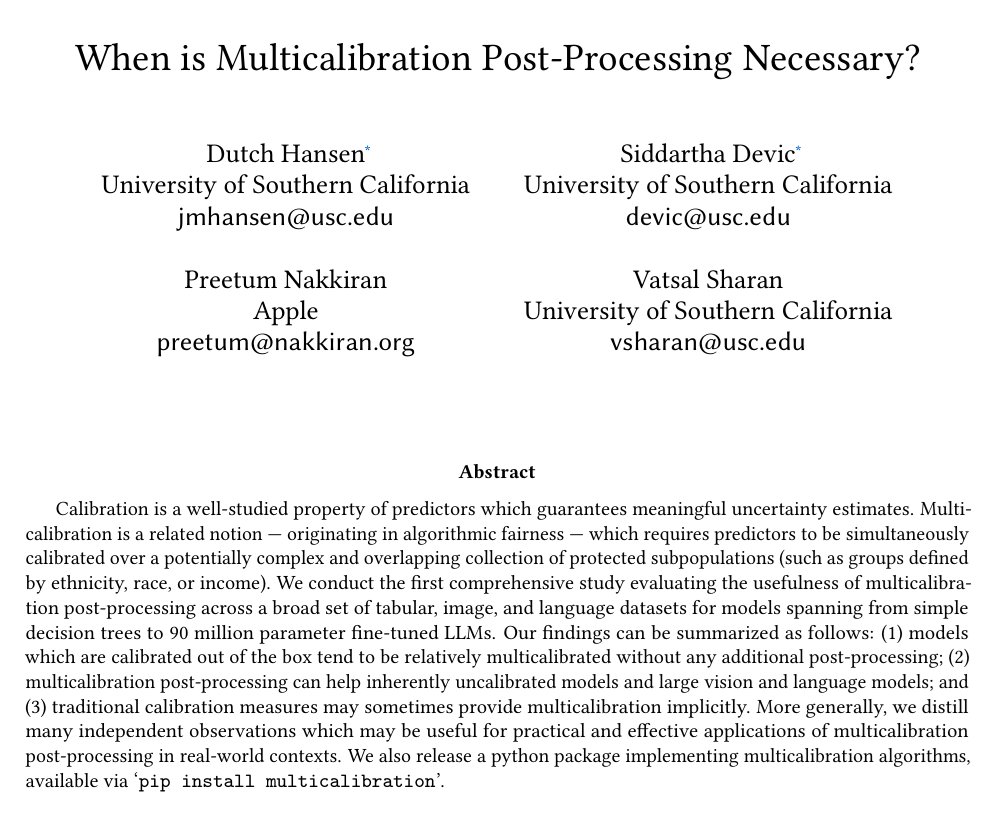

Multicalibration is a fairness notion that requires a predictor to be calibrated not only overall but also on each subgroup in a given collection.
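To make the definition concrete, here is a minimal sketch of one way to check it: compute a binned calibration error separately on each subgroup and report the worst one. The function names, the equal-width binning, and the dict of boolean masks are illustrative assumptions, not necessarily the metric used in the work.

```python
import numpy as np

def calibration_error(probs, labels, n_bins=10):
    """Binned expected calibration error for binary predictions (illustrative)."""
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels, dtype=float)
    # Assign each prediction to one of n_bins equal-width bins on [0, 1].
    bins = np.minimum((probs * n_bins).astype(int), n_bins - 1)
    error = 0.0
    for b in range(n_bins):
        in_bin = bins == b
        if in_bin.any():
            # Gap between mean predicted probability and observed frequency,
            # weighted by the fraction of points that fall in this bin.
            gap = abs(probs[in_bin].mean() - labels[in_bin].mean())
            error += in_bin.mean() * gap
    return error

def worst_group_calibration_error(probs, labels, groups, n_bins=10):
    """Largest calibration error over subgroups.

    `groups` is a hypothetical dict mapping a subgroup name to a boolean mask;
    subgroups may overlap. Empty subgroups are skipped.
    """
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels, dtype=float)
    return max(
        calibration_error(probs[mask], labels[mask], n_bins)
        for mask in groups.values()
        if np.any(mask)
    )
```

A predictor can look reasonable on average while one subgroup is badly off, which is the gap that multicalibration targets; a small worst-group value here is evidence of approximate multicalibration with respect to the chosen subgroups only.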

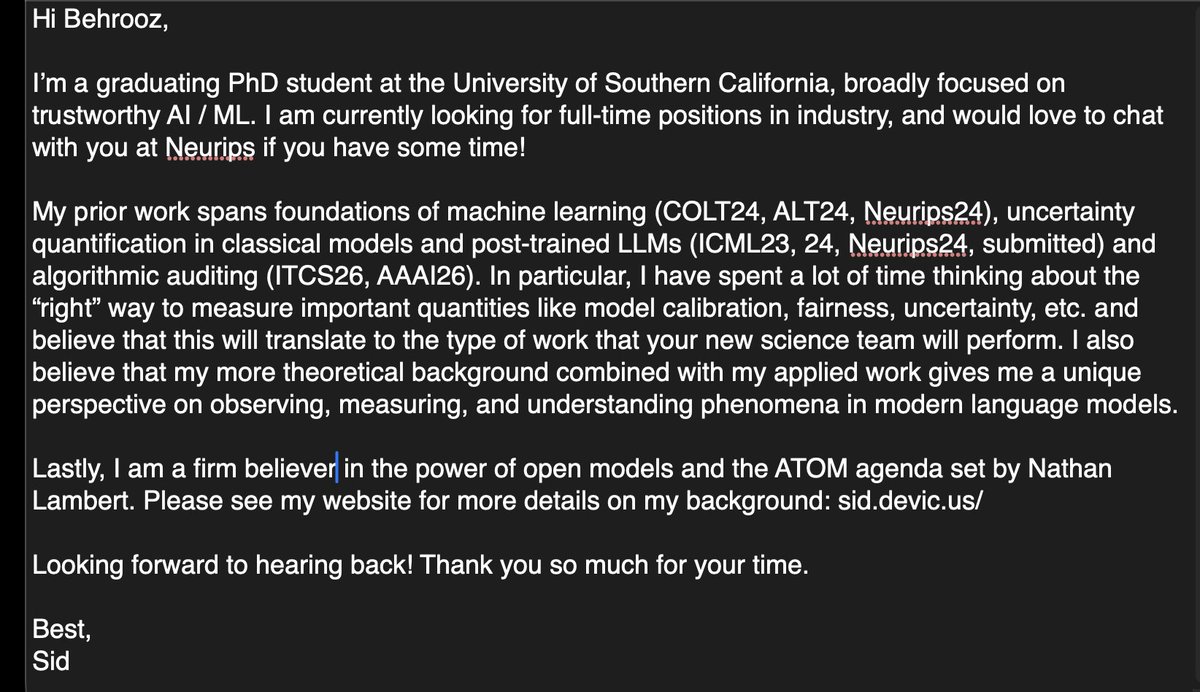

In work with @dutchhansen (applying to PhDs!), @PreetumNakkiran, and Vatsal Sharan, we empirically ask: when are machine learning models multicalibrated with no additional effort?🧵
