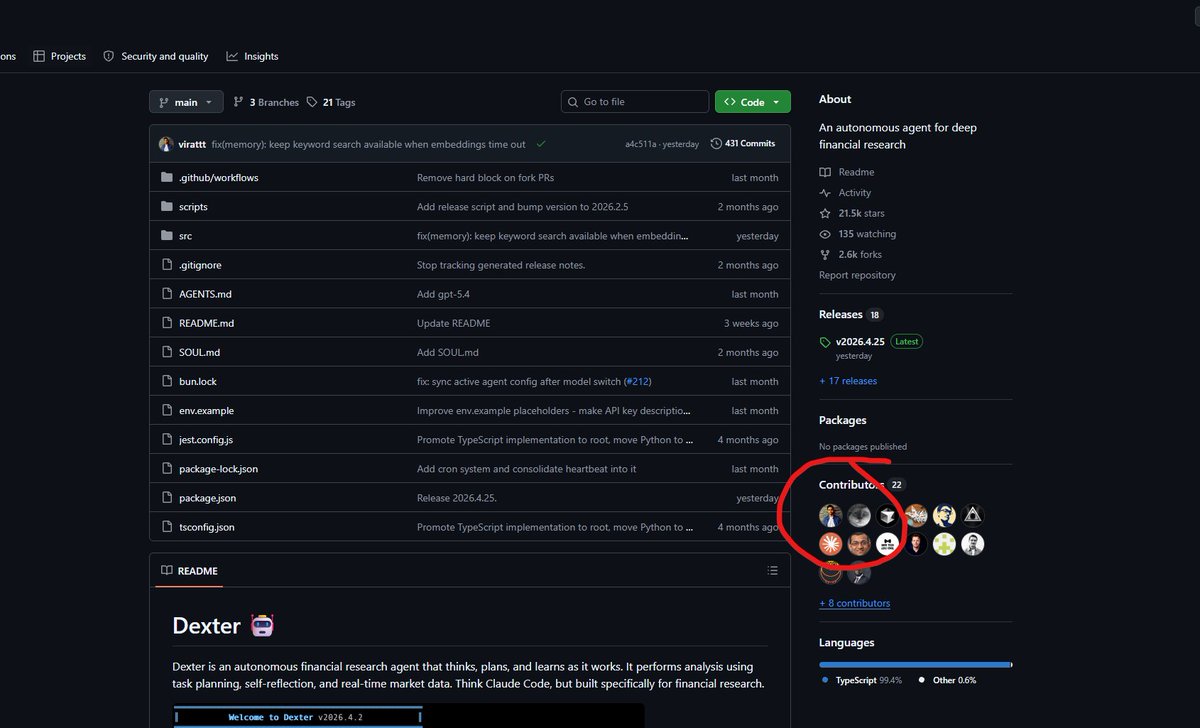

For GBrain I built a proper eval harness: 145 queries, Opus-generated corpus. The retrieval stack combines graph-based, vector-based, and grep-based strategies. The graph layer is worth +31 points on precision. Vector-only misses 170/261 correct answers that the full system finds. Keyword + vector + graph are three separable wins, each load-bearing.

The metrics are standard information retrieval metrics, the same ones Google uses to measure search quality.

Precision at 5: You ask a question, the system returns 5 results. How many of those 5 are actually useful? If 3 out of 5 are relevant, P@5 = 60%. It measures: am I wasting your time with junk results?

Recall at 5: For a given question, there might be 3 pages in the entire brain that are genuinely relevant. If the system finds all 3 in its top 5, R@5 = 100%. If it only finds 1, R@5 = 33%. It measures: am I missing things you need?

High precision = low noise. High recall = nothing slips through.

GBrain's 97.9% R@5 means it almost never misses the right answer. The 49.1% P@5 means about half the results are relevant, which is good when you realize that for most queries there are only 1-2 right answers out of 17,888 pages, so about 2.5 hits out of 5 is a strong signal.

Entity resolution is zero-LLM-call: a regex extracts typed links (works_at, invested_in, founded) on every write. Pages are re-embedded on write, not on a timer, so decay = stale pages, and stale pages get rewritten when new info lands.

Scorecards: github.com/garrytan/gbrai…
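For concreteness, here is a minimal sketch of how P@5 and R@5 as defined above could be computed for a single query. This is not GBrain's actual harness code; the function names and the page IDs are hypothetical, chosen only to mirror the two worked examples in the text.

```python
from typing import Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int = 5) -> float:
    """Fraction of the top-k returned results that are relevant."""
    hits = sum(1 for page in ranked[:k] if page in relevant)
    return hits / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int = 5) -> float:
    """Fraction of all relevant pages that appear in the top-k results."""
    if not relevant:
        return 0.0  # assumption: a query with no relevant pages scores 0
    hits = len(set(ranked[:k]) & relevant)
    return hits / len(relevant)


# Hypothetical page IDs, for illustration only.
results = ["p1", "p2", "p3", "p4", "p5"]

# 3 of the 5 returned pages are relevant: P@5 = 60%.
print(precision_at_k(results, {"p1", "p3", "p5"}))  # 0.6

# 3 relevant pages exist and all 3 are in the top 5: R@5 = 100%.
print(recall_at_k(results, {"p1", "p3", "p5"}))  # 1.0

# 3 relevant pages exist but only 1 is in the top 5: R@5 = 33%.
print(round(recall_at_k(results, {"p1", "p8", "p9"}), 2))  # 0.33
```

A headline number like 97.9% R@5 would then be an aggregate of such per-query scores across the query set; how the scores are averaged is an assumption here, not something the post specifies.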