Weedzin π



@WeedzinxD

It's just that to be able to see the light, we first have to be in the middle of darkness. Non-Conformist.


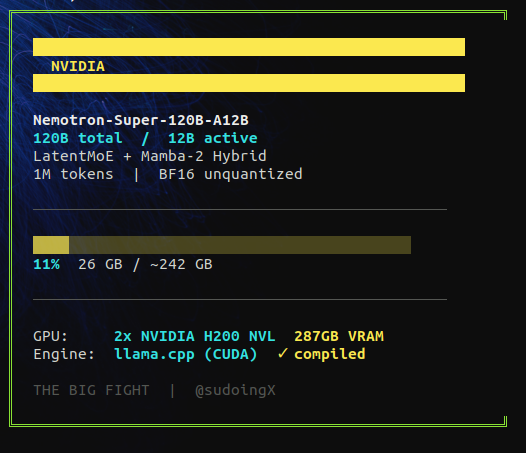

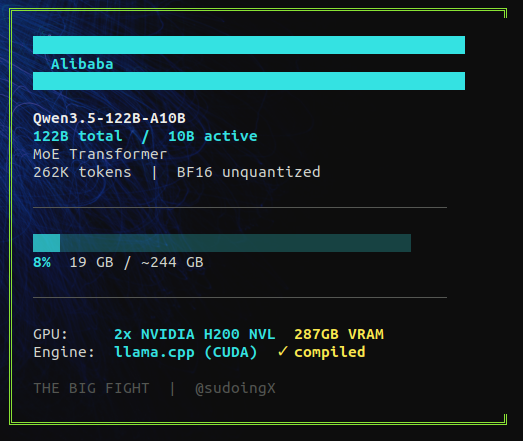

the past week i've been publishing head-to-head tests between nvidia and alibaba AI models on consumer hardware like the RTX 3090. same GPU, same tests, same inference engine. letting the architectures fight.

nvidia failed twice on my 3090. cascade 2, with 3B active parameters, hit 187 tok/s but couldn't build a working game in five attempts. openreasoning 32B dense couldn't stop solving math problems long enough to write a single line of code. two architectures, two failures on the same card.

alibaba's qwen has been winning on every card i've tested. 27B dense on a single 3090 is still undisputed for agent coding. 9B also built a full game on a 12GB card from 2020.

so i'm done with consumer hardware for this fight. i'm loading both flagships on 2x H200 NVL: 287GB of VRAM, full BF16 precision, no quants.

nvidia nemotron super 120B-A12B: 120 billion parameters, 12 billion active per token. latent mixture of experts with mamba-2. their GTC flagship.

against alibaba's qwen 3.5 122B-A10B: 122 billion parameters, 10 billion active per token. the lineup that has been winning at every tier.

both unquantized. both on the same hardware. same octopus invader game test, same agent, same prompt. the only variable is the model. if nvidia can't code at full precision on datacenter hardware, it can't code anywhere.

downloading now.
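As a rough sanity check on the "full BF16, no quants" setup, here is a minimal sketch that estimates weight memory from the nominal parameter counts in the model names. It assumes 2 bytes per parameter (BF16). It counts weights only, ignoring KV cache, activations, and inference-engine overhead. It treats the 287GB figure from the post as usable VRAM and assumes each model is loaded on its own. `weight_gb` and `summarize` are hypothetical helper names used only for illustration.

```python
# Rough BF16 weight-memory estimate for the two flagship MoE models.
# Assumptions (not from any official spec): 2 bytes per parameter, weights only
# (no KV cache, activations, or engine overhead), nominal parameter counts taken
# from the model names, and 287 GB of usable VRAM as reported in the post.

BYTES_PER_PARAM_BF16 = 2
VRAM_GB = 287  # total reported for 2x H200 NVL

# name -> (total parameters, active parameters per token)
MODELS = {
    "nemotron super 120B-A12B": (120e9, 12e9),
    "qwen 3.5 122B-A10B": (122e9, 10e9),
}


def weight_gb(params: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Weight footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def summarize(total_params: float, active_params: float, vram_gb: float = VRAM_GB) -> dict:
    """Weight size, remaining VRAM, and share of parameters active per token."""
    weights = weight_gb(total_params)
    return {
        "weights_gb": weights,
        "headroom_gb": vram_gb - weights,
        # MoE: every expert must be resident, but only this fraction runs per token
        "active_fraction": active_params / total_params,
    }


if __name__ == "__main__":
    for name, (total, active) in MODELS.items():
        s = summarize(total, active)
        print(
            f"{name}: {s['weights_gb']:.0f} GB weights, "
            f"{s['headroom_gb']:.0f} GB left, "
            f"{s['active_fraction']:.1%} of params active per token"
        )
```

Under these assumptions, each model's weights take roughly 240 to 244 GB. That leaves a few tens of GB for KV cache and runtime. Both models together (about 484 GB) would not fit at once, so the comparison presumably runs them one after the other. The active-parameter count governs per-token compute, not how much memory the weights occupy.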