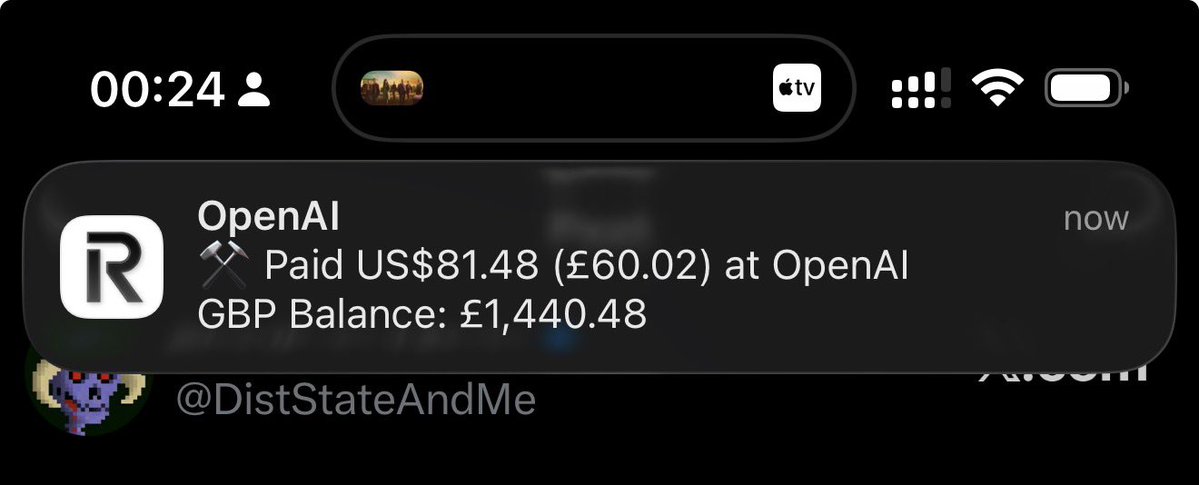

Used autoresearch to make the @grail_ai GRPO trainer 1.8x faster on a single B200.

I kept postponing this for weeks since the bottleneck in our decentralized framework was mainly communication. But after our proposed technique, PULSE, made weight sync 100x faster, the training update itself became the bottleneck. Even with fully async training and inference, a slow trainer kills convergence speed.

A task that could've eaten days of my time ran in parallel while I worked on other stuff. Unlike the original autoresearch, where each experiment takes 5 min, our feedback loop is way longer (10-17 min per epoch + 10-60 min of installations and code changes), so I stepped in with minimal steering whenever it headed in a bad direction, to avoid burning GPU hours.

The agent tried a lot of things that failed, but it eventually found the wins: Liger kernel, sequence packing, token-budget dynamic batching, and native FA4 via AttentionInterface.

Result: MFU went from 27% to 47%, and epoch time from 16.7 min to 9.2 min.

If you wanna dig deeper or contribute: github.com/tplr-ai/grail

We're optimizing everything at the scale of global nodes to make decentralized post-training as fast as centralized post-training. Stay tuned for some cool models coming out of this effort. Cheers!
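
For anyone who hasn't seen token-budget dynamic batching with sequence packing before, here's a minimal sketch of the general idea. It is not the code in the repo: the function names, the greedy longest-first policy, and the example numbers are all assumptions made for illustration. Instead of a fixed number of samples per batch, each batch is filled until it reaches a token budget, and the cumulative sequence lengths mark where each sample starts and ends once the batch is packed into one row.

```python
from itertools import accumulate
from typing import List


def token_budget_batches(lengths: List[int], max_tokens: int) -> List[List[int]]:
    """Group sample indices so that each batch holds at most max_tokens tokens.

    Hypothetical helper: greedy, longest-first. A real trainer may use a
    different packing policy.
    """
    # Visit samples from longest to shortest so short ones fill leftover space.
    order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
    batches: List[List[int]] = []
    current: List[int] = []
    used = 0
    for i in order:
        n = lengths[i]
        if n > max_tokens:
            raise ValueError(f"sample {i} has {n} tokens, over the budget of {max_tokens}")
        # Close the current batch if this sample would overflow the budget.
        if current and used + n > max_tokens:
            batches.append(current)
            current, used = [], 0
        current.append(i)
        used += n
    if current:
        batches.append(current)
    return batches


def packed_boundaries(lengths: List[int], batch: List[int]) -> List[int]:
    """Cumulative sequence lengths for one packed batch, starting at 0.

    These offsets mark sample boundaries inside the packed row, which is the
    kind of information variable-length attention kernels need so tokens do
    not attend across samples.
    """
    return [0] + list(accumulate(lengths[i] for i in batch))


if __name__ == "__main__":
    sample_lengths = [5, 3, 8, 2, 7]  # made-up token counts
    for batch in token_budget_batches(sample_lengths, max_tokens=10):
        print(batch, packed_boundaries(sample_lengths, batch))
```

The point of the budget is that every step processes a similar number of real tokens with almost no padding, which is one way utilization numbers like MFU can go up.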