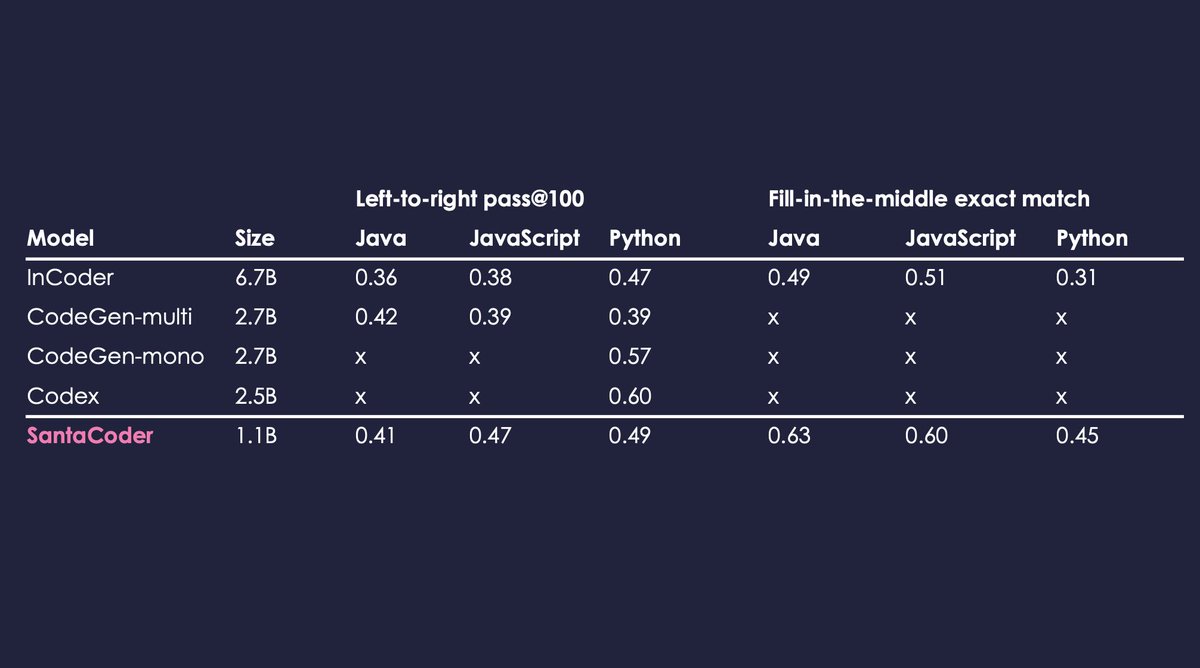

Announcing a holiday gift: 🎅 SantaCoder - a 1.1B-parameter multilingual LM for code that outperforms much larger open-source models on both left-to-right generation and infilling!

Demo: hf.co/spaces/bigcode…

Paper: hf.co/datasets/bigco…

Attribution: hf.co/spaces/bigcode…

A🧵:
