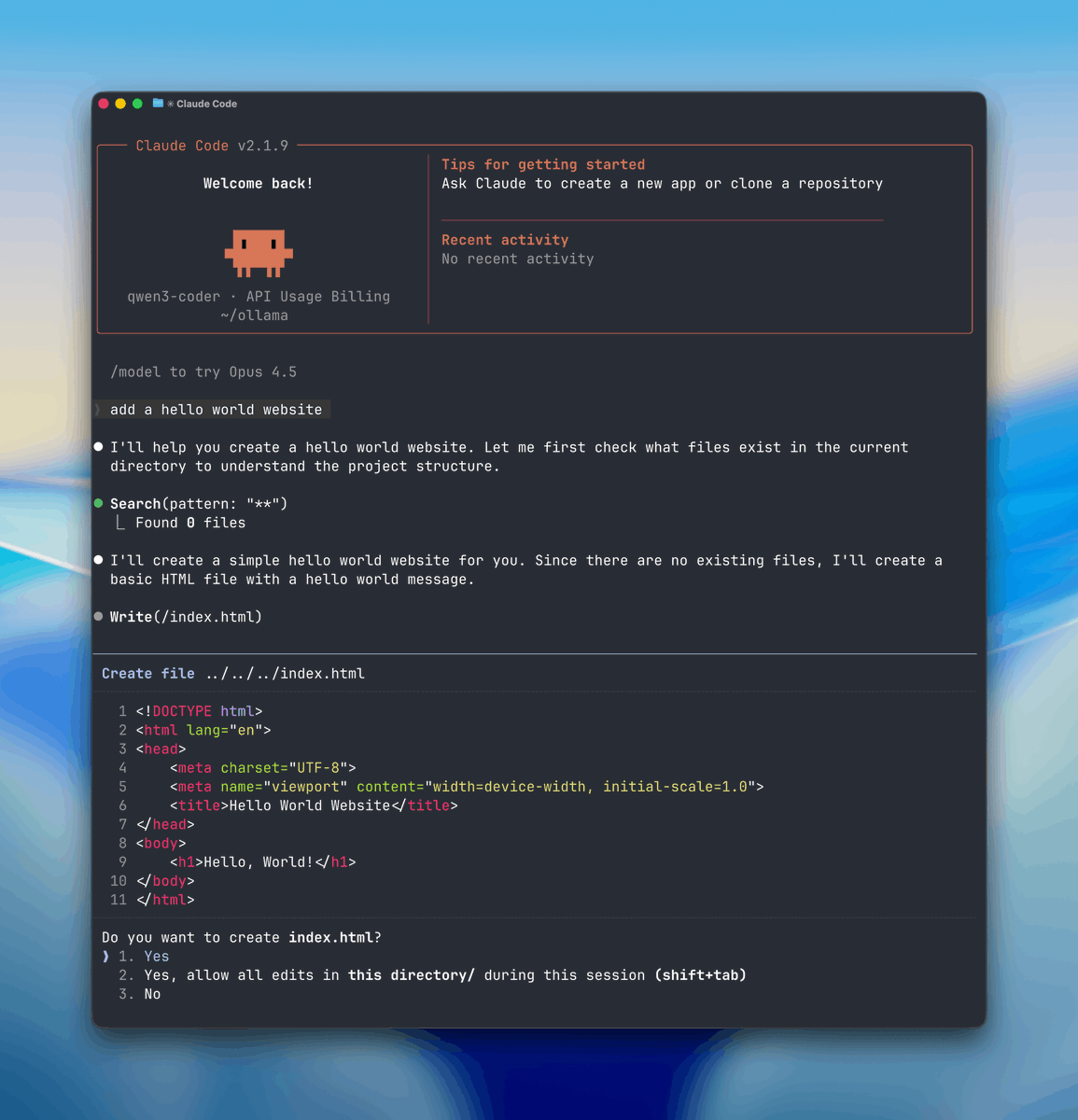

STEP 1: Select Your Local “Brain” (Ollama)

First you need a local engine that can run AI models and handle tool or function calls.

Here we will use Ollama, so download it from ollama.com.

Once it’s installed, Ollama runs quietly in the background on both Mac and Windows.

Next, you need a coding model for Ollama to run.

If your computer is powerful, you can download a larger model like qwen3-coder:30b.

If your computer isn't as strong, smaller models like gemma:2b or qwen2.5-coder:7b will work just fine.

Once you choose one, open your computer's terminal and start downloading it by typing:
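For example, assuming you picked qwen2.5-coder:7b (swap in whichever model tag you chose), the download could look roughly like this. `ollama pull` is Ollama's standard download command; the `MODEL` variable and the fallback messages are only illustrative.

```shell
# Assumption: qwen2.5-coder:7b is the model you picked; replace it with your own tag.
MODEL="qwen2.5-coder:7b"

# Only attempt the download if the ollama CLI is available on PATH.
if command -v ollama >/dev/null 2>&1; then
  ollama pull "$MODEL" || echo "Download failed; check that Ollama is running"
else
  echo "ollama not found; install it from ollama.com first"
fi
```

Once the download finishes, `ollama list` should show the model among your local models.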

Sure!

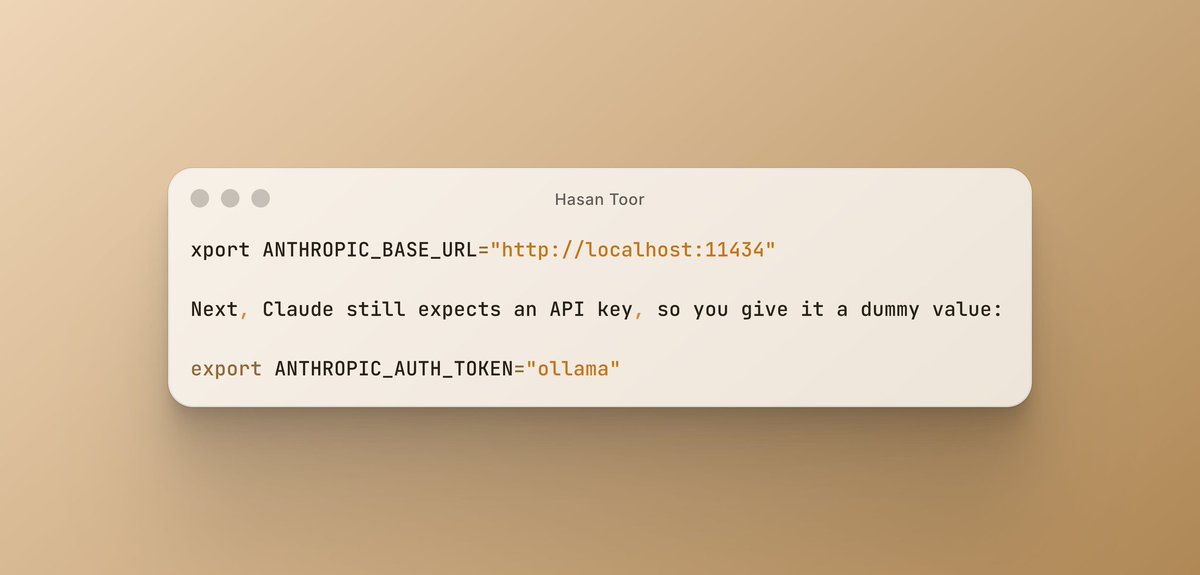

In Step 3, we redirect Claude Code from Anthropic’s cloud servers to your local Ollama (running at http://localhost:11434).

Quick way:

Run:

ollama launch claude --model qwen2.5-coder:7b

(Use a coding model that fits your hardware.)

Manual way:

Set these environment variables, then run claude:

export ANTHROPIC_BASE_URL=http://localhost:11434

export ANTHROPIC_API_KEY=ollama

This lets Claude Code use your local model for all agentic features (file editing, terminal commands, etc.), with no API charges and with inference running on your own machine.
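If the manual route misbehaves, it can help to confirm that Ollama is actually listening before you start claude. Here is a minimal sketch, assuming the default port and that curl is installed; `OLLAMA_URL` is just a helper name for this example, and `/api/tags` is the Ollama endpoint that lists locally installed models.

```shell
# Assumptions: Ollama listens on its default port and curl is installed.
OLLAMA_URL="http://localhost:11434"

# /api/tags lists local models; a failed request means the server is not reachable.
if curl -fsS --max-time 5 "$OLLAMA_URL/api/tags" >/dev/null 2>&1; then
  echo "Ollama is reachable at $OLLAMA_URL"
else
  echo "Ollama is not reachable at $OLLAMA_URL; start it before running claude"
fi

# Same variables as in the manual setup, set for the current shell session only.
export ANTHROPIC_BASE_URL="$OLLAMA_URL"
export ANTHROPIC_API_KEY=ollama
```

Because the variables are only exported in the current shell, opening a new terminal sends Claude Code back to its default endpoint.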

Which part is unclear? Happy to help!
