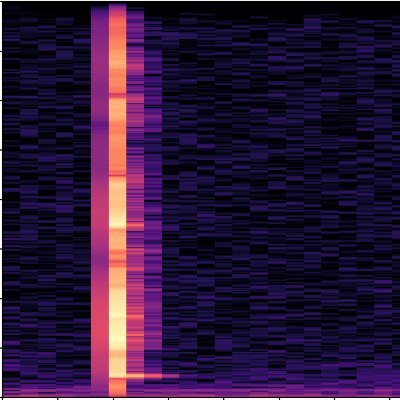

Looking forward to @NeurIPSConf #NeurIPS2024 next week! I will be there Dec 11th-15th. Join our Audio Imagination Workshop on Dec 14th for engaging discussions on all things audio generation. We have an exciting list of papers and speakers. audio-imagination.com
