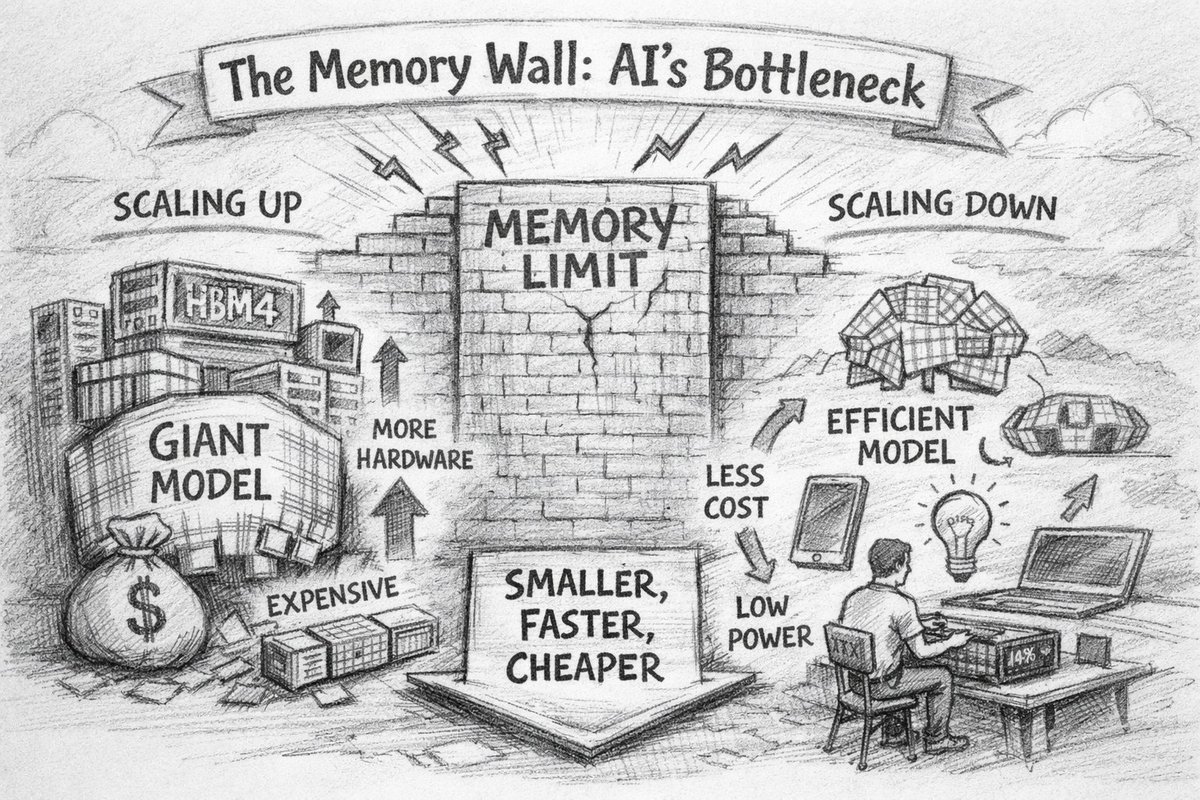

Grateful to @sallywf and @EETimes for the thoughtful writeup of my Embedded Vision Summit keynote. The thesis in one line: the next decade of AI won't be won by the biggest model, but by the smartest, most efficient one that lives on the devices you wear!

eetimes.com/scaling-down-i…
