CM

634 posts

@Creative_Math_

DL Research intern @blocks, Master’s student @UofT 🇨🇦 in a cool lab. Did pure math in a past life, now I obsess over RL. cashmere-y

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

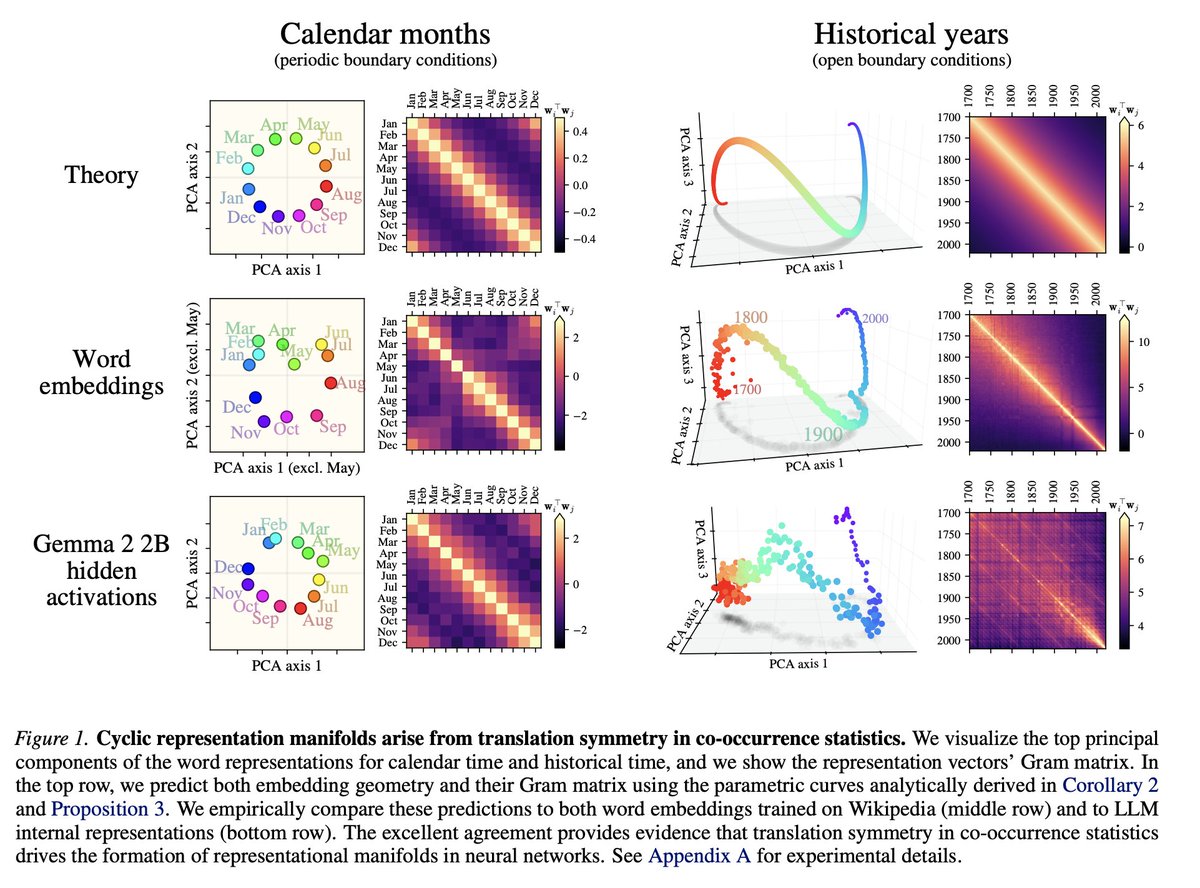

1/ LLMs spontaneously form perfect geometric manifolds: circles for months, spirals for timelines. We usually assume this requires deep, complex learning dynamics. A new paper proves it is actually just basic data statistics forcing the math. 🧵

The Block layoffs are going to become the new norm. I think this is the beginning of the end. Yes, this is my "doomer" video.

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. anthropic.com/news/statement…

The best case scenario for how AI plays out:
1. automation frees everyone’s time
2. cost of baseline wealth required for survival and a good life drops so low that it becomes available to everyone as a basic right (like utilities)
3. people start spending their time on things humans are actually built for (instead of labor) - e.g. cookouts, parties, lots of travel, group hangouts, creating art, spending time with family, learning, etc.
4. this creates an explosion of art, socialization, and “the experience economy” as all human attention flows into these domains
This outcome shifts so much human time toward what actually brings us flow: connection, creation, competition (e.g. sports, games), beautiful experiences, growth, etc.
This world would be incredible, and most importantly, deeply human (in stark contrast to other very inhuman possible outcomes like trans-humanist singularity).
There are several obstacles in the way of this outcome though, most of them political rather than technical. And they are going to be very hard to get past. It will require unprecedented cooperation between labs, USG, and the general population.
I hope humanity can rise to the occasion, because we haven’t successfully coordinated on this scale before. But this outcome is what I’m really hoping for, and I’m trying to figure out what we can do to bring it closer.

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow.
Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes.
As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks *in English* and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top tier "agentic engineering" feels very high right now.
It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges. But imo, this is nowhere near "business as usual" time in software.