wontfix

18 posts

We now have an API for: - precedent search agent - literature/clinical trials/patents search agent - chemistry agent - data analysis agent (that can find data) You can generate an API key in platform and add, for example, novelty detection

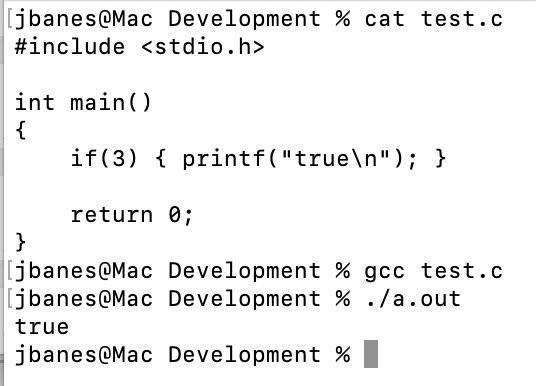

Changes to the X algo mean I’m seeing more relevant content. (Yay!) It also means that younger programmers are now on full blast. Not sure how I feel about this. I want to be helpful and explain. But I expect that many will not welcome correction. Yet they’re putting themselves out there and whipping others into a frenzy over their own misunderstandings. How do you think these situations should be handled? 🤔 (The problem, BTW, is that he needs a

tag. The browser auto-inserts it in raw HTML for backwards compatibility. Not including is technically wrong and won’t match what the browser renders.)

I wonder how many H100 hours have been burned waiting a handful of milliseconds for python to "glue" the next task to the previous one

Quite the contrary: We're using the language that was designed as a glue language for gluing pieces together that are written in the language(s) that were designed for peak performance. Everything working exactly as designed.