Bloop but now BURN

@Economini

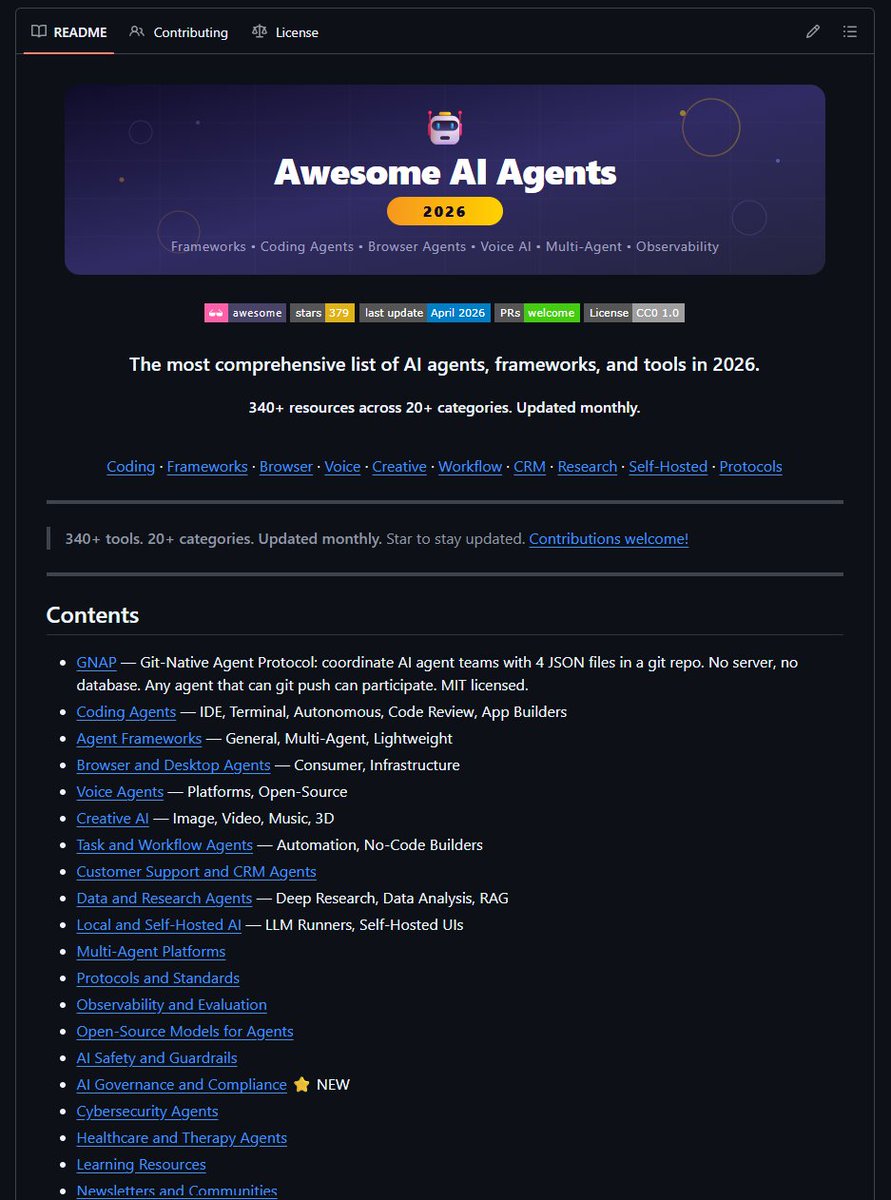

🚨 BREAKING: A new role is quietly emerging, and it’s about to dominate the next 5 years.

It’s not “AI engineer.” It’s not “prompt engineer.” It’s the Agent Operator. And it will sit inside almost every organization.

Most people are still thinking about AI as a tool. That framing is already outdated. What’s actually happening is a shift from:

humans using software
to
humans managing autonomous agents that execute work

This is a fundamental redesign of how work gets done.

So what is an Agent Operator?

An Agent Operator is the person who:
• Designs how agents interact with real workflows
• Connects tools, data, and systems into agent pipelines
• Translates business problems into executable agent behavior
• Monitors, corrects, and improves agent performance over time

They don’t just “use AI.” They orchestrate outcomes.

Why this matters

Every function (marketing, legal, finance, biotech) is becoming “agent-compatible.” Not because companies want it. Because they won’t have a choice.

Agents can:
• Run research loops
• Execute multi-step workflows
• Integrate across tools without APIs breaking the flow
• Operate 24/7 at near-zero marginal cost

The bottleneck is no longer capability. It’s implementation inside real-world systems.

Required skills for the Agent Operator role:

→ MCP (Model Context Protocol)
Understanding how agents access tools, memory, and structured context.

→ CLIs (command-line interfaces)
Because serious agent workflows won’t live in GUIs; they’ll run in programmable environments.

→ Writing skills (the file kind)
Clear specs, instructions, and structured documents. Agents run on precision, not vibes.

→ AGENTS.md fluency
The ability to define agent roles, constraints, memory, and tool usage in persistent formats.

→ Business acumen
Knowing what actually matters: where automation creates leverage, not noise.

What happens next

Enterprises will begin to redesign workflows:
Not around employees using dashboards…
But around agents executing tasks.
That means:
• SOPs → Agent playbooks
• Teams → Human + agent hybrids
• Tools → Composable agent systems

When that shift happens, companies won’t just need engineers. They’ll need operators who understand both the system and the business.

The leverage is asymmetric

One strong Agent Operator can:
• Replace fragmented SaaS workflows
• Multiply team output without adding headcount
• Turn ideas into execution systems in days

This is not incremental productivity. It’s operational transformation.