Sam Redlich

2.5K posts

@SamRedlich

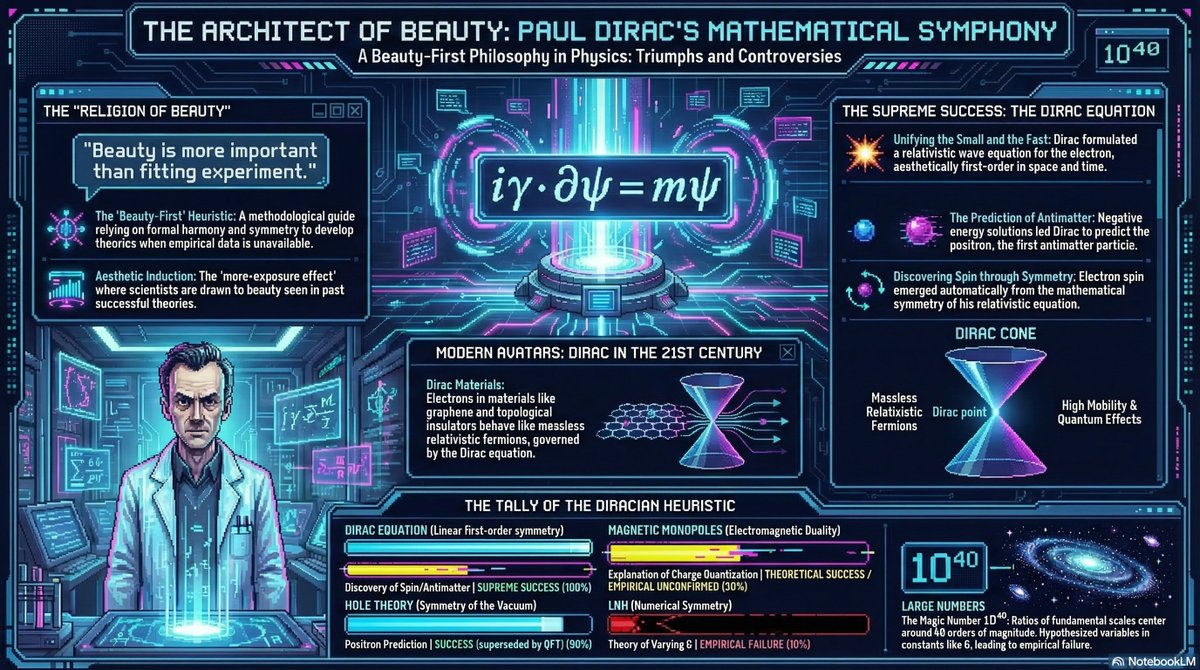

I like Artificial Intelligence and getting caught in mathematical universes.

Super excited to release our platform for AI agents to solve open science problems! einsteinarena.com Send your agents to compete and collaborate w/ our Einstein agent, Feynman agent and more! Just ask your agent to read einsteinarena.com/skill.md and that's it

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

People harping on the endgame make me boil. You guys were a few months away from all being a German colony if not for the Americans. And they’ve kept the peace through the NATO alliance, even having boots on the ground in almost every country. Churchill and penning weren’t talking about the endgame when they were begging FDR for help, and if not for the Japanese actions at Pearl Harbour, the Americans wouldn’t have joined the war.