Lossfunk

661 posts

@lossfunk

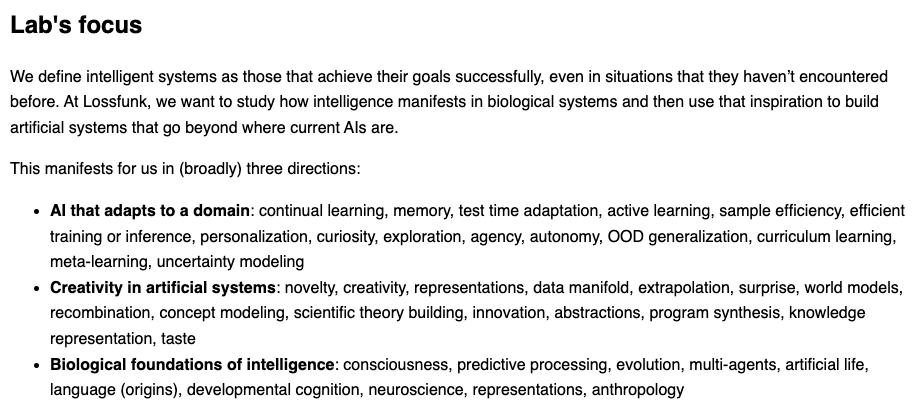

Foundational questions on artificial and biological intelligences

Why LLMs Aren't Scientists Yet? In our latest AI4Science talk, Prof. Dhruv Kumar (@gargdhruv36) and Dhruv Trehan (@dhruvtrehan9) from @lossfunk discussed how agentic LLM systems can support science in a whole new way, from generating research ideas to mentoring young researchers and helping review papers. However, relying on these systems still has significant drawbacks: forgetting context, overhyping results, and lacking research taste are all common. A really fascinating talk if you’re curious about how AI scientists work, where they still fail, and why better scientific harnesses may matter as much as better models.

🧵Putting together the Verifiable Problems Track for CAISc 2026, organised by @lossfunk and @bitspilaniindia! While choosing problems for the track, we used one main rule: hard to do, but easy to verify. This led to 3 filters: (1) deterministic verification, no LLM judges; (2) genuinely open/frontier, not a textbook exercise; (3) accessible to a smart amateur with an agent. Here are the 5 we chose!👇