Pinned post

Alessio Serra

1.4K posts

@aserra___

ai researcher | making the stochastic parrot smarter @Aleph__Alpha

Germany · Joined March 2020

192 Following · 1.8K Followers

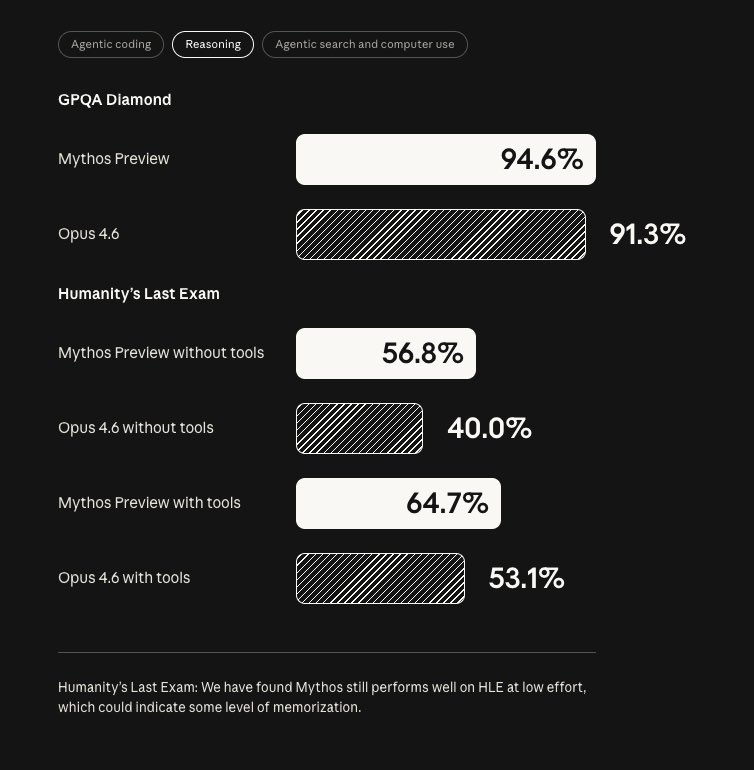

anthropic is winning the thinking game

Anthropic @AnthropicAI

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

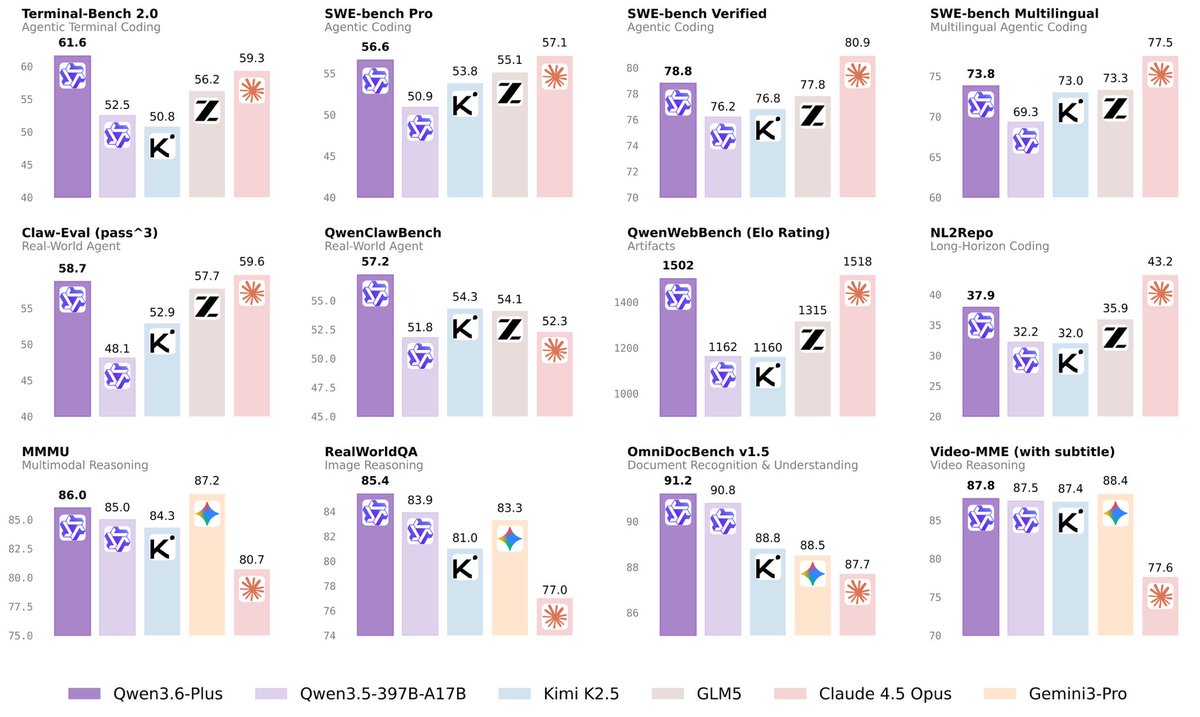

Yet another “near-frontier” model from the Amazon of China.

Not Qwen. Not Alibaba.

Rosinality @rosinality

An LLM from JD.com. It makes me think that you can build something interesting even with only openly available resources. (And with a fancy RL objective, FiberPO.)

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
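The post describes the compile step as incremental but gives no code. A minimal sketch of the bookkeeping around it, assuming a hash manifest decides which files in `raw/` still need an LLM pass; `compile_step`, `file_digest`, `.manifest.json`, the `sources/` folder and the `summarize` callback are all hypothetical names, and `summarize` stands in for whatever LLM client you use:

```python
import hashlib
import json
from pathlib import Path
from typing import Callable, List


def file_digest(path: Path) -> str:
    """Content hash used to detect new or edited sources."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def compile_step(raw_dir: Path, wiki_dir: Path,
                 summarize: Callable[[Path], str]) -> List[Path]:
    """One incremental pass: summarize only sources that are new or changed.

    `summarize` is a placeholder for the LLM call (hypothetical; plug in your
    own client). The manifest maps each raw file to the hash last compiled.
    """
    wiki_dir.mkdir(parents=True, exist_ok=True)
    manifest_path = wiki_dir / ".manifest.json"
    manifest = json.loads(manifest_path.read_text()) if manifest_path.exists() else {}
    written = []
    for src in sorted(p for p in raw_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(raw_dir)
        digest = file_digest(src)
        if manifest.get(rel.as_posix()) == digest:
            continue  # unchanged since the last compile, so skip the LLM call
        # Keep the original extension in the page name so a.pdf and a.txt don't collide.
        page = wiki_dir / "sources" / rel.parent / (rel.name + ".md")
        page.parent.mkdir(parents=True, exist_ok=True)
        page.write_text(summarize(src))
        manifest[rel.as_posix()] = digest
        written.append(page)
    manifest_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return written
```

This only covers per-source summaries; deleted sources are not pruned, and the concept articles and backlinks the post mentions would be further LLM passes over the summaries.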

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. It's important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
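In the post the LLM maintains the index files itself. As a hedged illustration of what such an index can look like, here is a deterministic fallback that lists every page as an Obsidian-style `[[wikilink]]` with its first non-heading line as a one-line summary; `build_index`, `first_summary_line` and the `index.md` name are assumptions, not part of the described setup:

```python
from pathlib import Path


def first_summary_line(text: str) -> str:
    """Return the first non-empty, non-heading line of a markdown page."""
    for line in text.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith("#"):
            return stripped
    return ""


def build_index(wiki_dir: Path, index_name: str = "index.md") -> str:
    """Regenerate an index page linking every article with a one-line summary."""
    lines = ["# Index", ""]
    for page in sorted(wiki_dir.rglob("*.md")):
        if page.name == index_name:
            continue  # don't list the index inside itself
        title = page.relative_to(wiki_dir).with_suffix("").as_posix()
        summary = first_summary_line(page.read_text())
        lines.append(f"- [[{title}]]: {summary}" if summary else f"- [[{title}]]")
    content = "\n".join(lines) + "\n"
    (wiki_dir / index_name).write_text(content)
    return content
```

An agent can read this single page first and then open only the articles whose summaries look relevant, which is roughly why plain files can stand in for RAG at the ~100-article scale mentioned above.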

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.
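For the Marp output mentioned here, a small sketch of rendering an answer as a slide deck and filing it back into the wiki. It relies on Marp's conventions of a `marp: true` front-matter directive and `---` as the slide separator; `marp_deck`, `file_output` and the `outputs/` folder are hypothetical names chosen for illustration:

```python
from pathlib import Path
from typing import List, Tuple


def marp_deck(slides: List[Tuple[str, List[str]]]) -> str:
    """Render (heading, bullets) pairs as a Marp markdown deck.

    Marp reads `marp: true` from the front matter and splits slides on `---`.
    """
    parts = []
    for heading, bullets in slides:
        body = "\n".join(f"- {b}" for b in bullets)
        parts.append(f"# {heading}\n\n{body}".rstrip())
    return "---\nmarp: true\n---\n\n" + "\n\n---\n\n".join(parts) + "\n"


def file_output(wiki_dir: Path, name: str, deck: str) -> Path:
    """File a generated deck back into the wiki so later queries can read it."""
    out = wiki_dir / "outputs" / f"{name}.md"
    out.parent.mkdir(parents=True, exist_ok=True)
    out.write_text(deck)
    return out
```

Because the deck is plain markdown, the same file renders as slides through an Obsidian Marp plugin and stays readable as text for the next LLM query.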

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
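The health checks above are LLM-driven. A cheap deterministic pre-pass can catch structural problems before spending tokens; this sketch, assuming pages link to each other with `[[target]]`, `[[target|alias]]` or `[[target#heading]]`, reports broken links and orphan pages with no inbound links. `lint_wiki`, `resolve` and the `roots` parameter are hypothetical:

```python
import re
from pathlib import Path
from typing import Dict, List, Optional

# Captures the target of [[target]], [[target|alias]] and [[target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def resolve(target: str, pages: Dict[str, Path]) -> Optional[str]:
    """Match a link by full wiki path, else by a unique bare file name."""
    if target in pages:
        return target
    matches = [k for k in pages if k.rsplit("/", 1)[-1] == target]
    return matches[0] if len(matches) == 1 else None


def lint_wiki(wiki_dir: Path, roots=("index",)) -> Dict[str, List[str]]:
    """Report links that point nowhere and pages nothing links to."""
    pages = {p.relative_to(wiki_dir).with_suffix("").as_posix(): p
             for p in wiki_dir.rglob("*.md")}
    broken: List[str] = []
    inbound = set()
    for key in sorted(pages):
        for raw in WIKILINK.findall(pages[key].read_text()):
            target = raw.strip()
            hit = resolve(target, pages)
            if hit is None:
                broken.append(f"{key} -> {target}")
            elif hit != key:
                inbound.add(hit)  # self-links don't rescue a page from being an orphan
    orphans = [k for k in sorted(pages) if k not in inbound and k not in roots]
    return {"broken_links": broken, "orphans": orphans}
```

The report can then be handed to the LLM as a to-do list: broken links are candidates for new articles, and orphans are candidates for new connections.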

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I sometimes use directly (in a web UI), but more often hand off to an LLM via CLI as a tool for larger queries.
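The post doesn't say how the search engine works. A sketch of what a "small and naive" one might look like, assuming plain TF-IDF scoring over the `.md` files and an argparse CLI whose tab-separated output is easy for an agent to parse; `search`, `main` and the `--wiki`/`-k` flags are hypothetical:

```python
import argparse
import math
import re
from collections import Counter
from pathlib import Path
from typing import List, Optional, Tuple

TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> List[str]:
    return TOKEN.findall(text.lower())


def search(wiki_dir: Path, query: str, k: int = 5) -> List[Tuple[float, str]]:
    """Rank wiki pages by a plain TF-IDF score against the query terms."""
    docs = {p.relative_to(wiki_dir).as_posix(): Counter(tokenize(p.read_text()))
            for p in wiki_dir.rglob("*.md")}
    terms = set(tokenize(query))
    df = {t: sum(1 for c in docs.values() if t in c) for t in terms}
    scores = []
    for name, counts in docs.items():
        total = sum(counts.values()) or 1
        score = sum((counts[t] / total) * math.log(1 + len(docs) / df[t])
                    for t in terms if df[t] and counts[t])
        if score > 0:
            scores.append((score, name))
    return sorted(scores, reverse=True)[:k]


def main(argv: Optional[List[str]] = None) -> List[Tuple[float, str]]:
    parser = argparse.ArgumentParser(description="Naive TF-IDF search over a markdown wiki")
    parser.add_argument("query", help="free-text query")
    parser.add_argument("--wiki", type=Path, default=Path("wiki"))
    parser.add_argument("-k", type=int, default=5, help="number of hits to print")
    args = parser.parse_args(argv)
    hits = search(args.wiki, args.query, args.k)
    for score, name in hits:
        print(f"{score:.4f}\t{name}")  # tab-separated so an agent can parse it
    return hits
```

To expose it as the CLI tool the agent calls, add the usual `if __name__ == "__main__": main()` guard or register `main` as a console entry point. It re-reads every page per query, which is fine at a few hundred files and would want a cached index beyond that.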

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

italian elegance meets autonomous research

Paradigma @paradigmainc

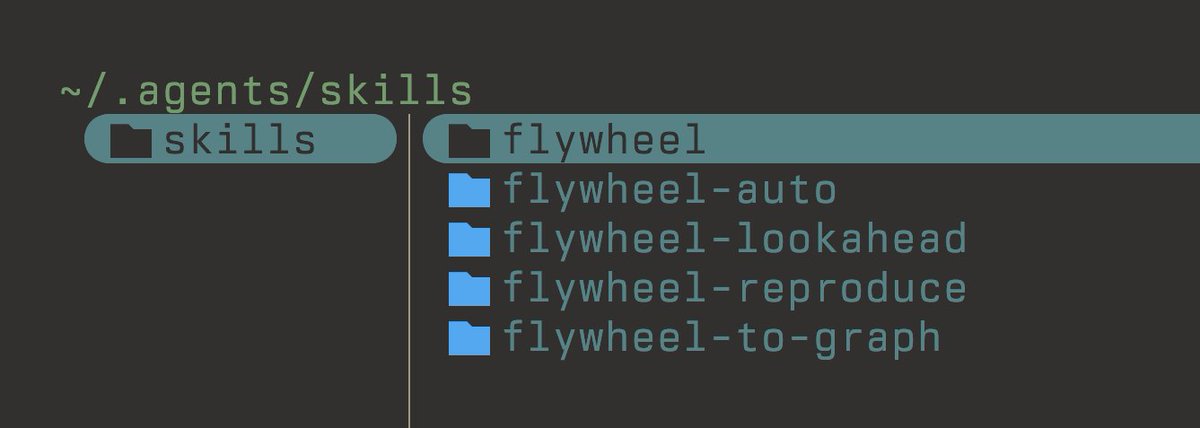

Flywheel release v2026.04.02 See some of the highlights below.

Alessio Serra reposted

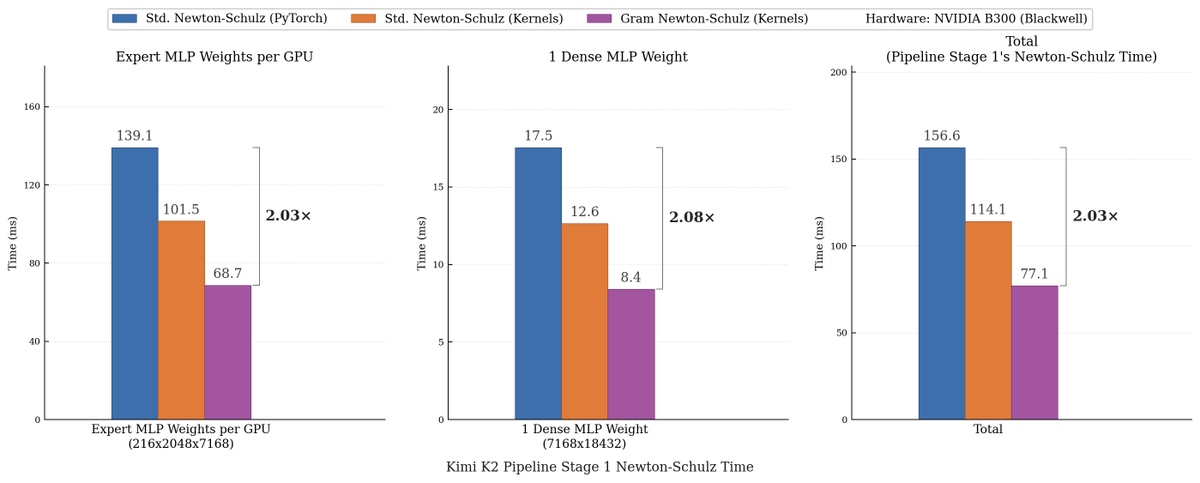

We made Muon run up to 2x faster for free!

Introducing Gram Newton-Schulz: a mathematically equivalent but computationally faster Newton-Schulz algorithm for polar decomposition.

Gram Newton-Schulz rewrites Newton-Schulz such that instead of iterating on the expensive rectangular X matrix, we iterate on the small, square, symmetric XX^T Gram matrix to reduce FLOPs. This allows us to make more use of fast symmetric GEMM kernels on Hopper and Blackwell, halving the FLOPs of each of those GEMMs.
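A NumPy sketch of why iterating on the Gram matrix can be mathematically equivalent. It assumes the quintic Muon-style step `X_{k+1} = Q_k X_k` with `Q_k = aI + b S_k + c S_k^2` and `S_k = X_k X_k^T`; since `Q_k` is a symmetric polynomial in `S_k`, the next Gram matrix is `S_{k+1} = Q_k S_k Q_k`, so one can accumulate `R = Q_{K-1} ... Q_0` on small square matrices and touch the rectangular `X_0` only at the start and end. The coefficients and the function names are assumptions, this is not the authors' codebase, and it only demonstrates equivalence in float64, not the symmetric-GEMM speedups or the low-precision stabilization the post describes:

```python
import numpy as np

# Quintic coefficients popularized by the Muon optimizer (an assumption here;
# the Gram Newton-Schulz authors may use different ones).
NS_COEFFS = (3.4445, -4.7750, 2.0315)


def newton_schulz(G: np.ndarray, steps: int = 5) -> np.ndarray:
    """Standard iteration: every step updates the rectangular matrix X."""
    if G.shape[0] > G.shape[1]:
        return newton_schulz(G.T, steps).T  # keep X X^T on the short side
    a, b, c = NS_COEFFS
    X = G / (np.linalg.norm(G) + 1e-7)  # Frobenius norm bounds the spectral norm by 1
    I = np.eye(X.shape[0])
    for _ in range(steps):
        S = X @ X.T
        X = (a * I + b * S + c * (S @ S)) @ X
    return X


def gram_newton_schulz(G: np.ndarray, steps: int = 5) -> np.ndarray:
    """Hypothetical Gram-space rewrite: iterate only on small square matrices."""
    if G.shape[0] > G.shape[1]:
        return gram_newton_schulz(G.T, steps).T
    a, b, c = NS_COEFFS
    X0 = G / (np.linalg.norm(G) + 1e-7)
    I = np.eye(X0.shape[0])
    S = X0 @ X0.T  # Gram matrix of the current iterate
    R = I.copy()   # accumulated left factor: X_k = R @ X0
    for _ in range(steps):
        Q = a * I + b * S + c * (S @ S)  # symmetric, commutes with S
        R = Q @ R
        S = Q @ S @ Q                    # Gram matrix of Q @ X_k
    return R @ X0  # a single rectangular GEMM at the very end
```

Both functions should agree to rounding error on the same input; the difference is that the loop body of the second never multiplies by the wide `m x n` matrix, and its symmetric products are the ones that can use symmetric GEMM kernels.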

Gram Newton-Schulz is a drop-in replacement for Newton-Schulz in your Muon use case: we see validation perplexity preserved within 0.01, and share our (long!) journey stabilizing this algorithm and ensuring that training quality is preserved above all else.

This was a super fun project with @noahamsel, @berlinchen, and @tri_dao that spanned theory, numerical analysis, and ML systems! Blog and codebase linked below 🧵

Alessio Serra reposted

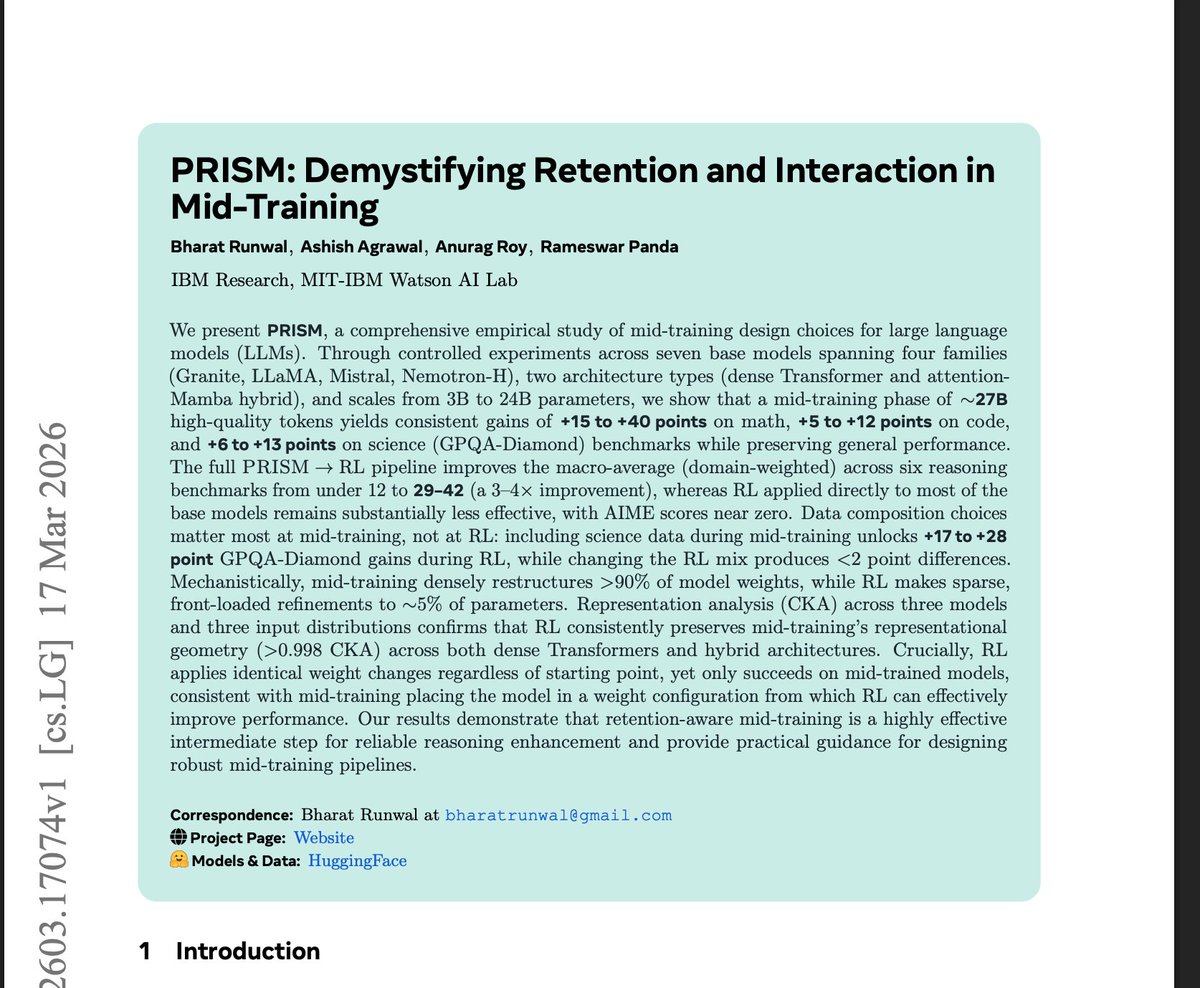

Found another great midtraining paper. I haven't seen it on my TL so thought I would share. Super excited to dig into it later but looks really promising. (ty @lukemerrick_ )

I love seeing more work unifying understanding of midtraining -> RL

you're not gonna believe what the next feature in Flywheel will be

OpenAI @OpenAI

Are you up for a challenge? openai.com/parameter-golf

We've reached an agreement to acquire Astral.

After we close, OpenAI plans for @astral_sh to join our Codex team, with a continued focus on building great tools and advancing the shared mission of making developers more productive.

openai.com/index/openai-t…

Alessio Serra reposted

Promised, Delivered! 🚀

Just 10 days ago, I put out a call for an @openclaw meetup in Rome. What a response! Dozens of people reached out to volunteer (huge shout out here to @RomaStartup, the definitive hub for connecting to the Roman ecosystem).

Turns out Marco Galluccio (@margal96) and the fantastic team at #UrbeHub (@urbeEth) were already ahead of the curve with two early meetups. But looking at the massive demand, we realized we needed to go bigger. We needed something truly "Pan-Roman."

So, we connected the dots. I reached out to @AISalonAI Rome, which is already making a massive impact on the Roman AI ecosystem (huge thanks to @RMagnifico and @KVGConsult for jumping in!). The result? The first Pan-Roman Openclaw Meetup🏛️

Our mission is simple: make it 100% open and accessible. Open but not dumb. Zero BS, zero theater. Real use cases and hands on. Whether you’re a hardcore developer or just curious about what OpenClaw can actually do for you, this event is where you have to be.

I’m thrilled to be taking the stage as a speaker, but more importantly, I can't wait to see how this experiment unfolds.

When: March 25, starting at 18.00

Where: The Zest Hub, Stazione Termini, Rome

What: Bring your laptop.

Register here: lnkd.in/eJXF9mrX

One final note. Rome hosts one of the most talented and Silicon Valley-connected AI communities in Europe. It operates very much under the radar and is generally shy (if not outright skeptical) of engaging with the main players of the Italian business and IT ecosystem. Thanks to Urbe Hub for the great job at bringing it together.

@GCarnovale @marcotrombetti @matteofago @gloq @barbaracarfagna @MGVitagliano @rstagi_ @FutureDies @tensorqt