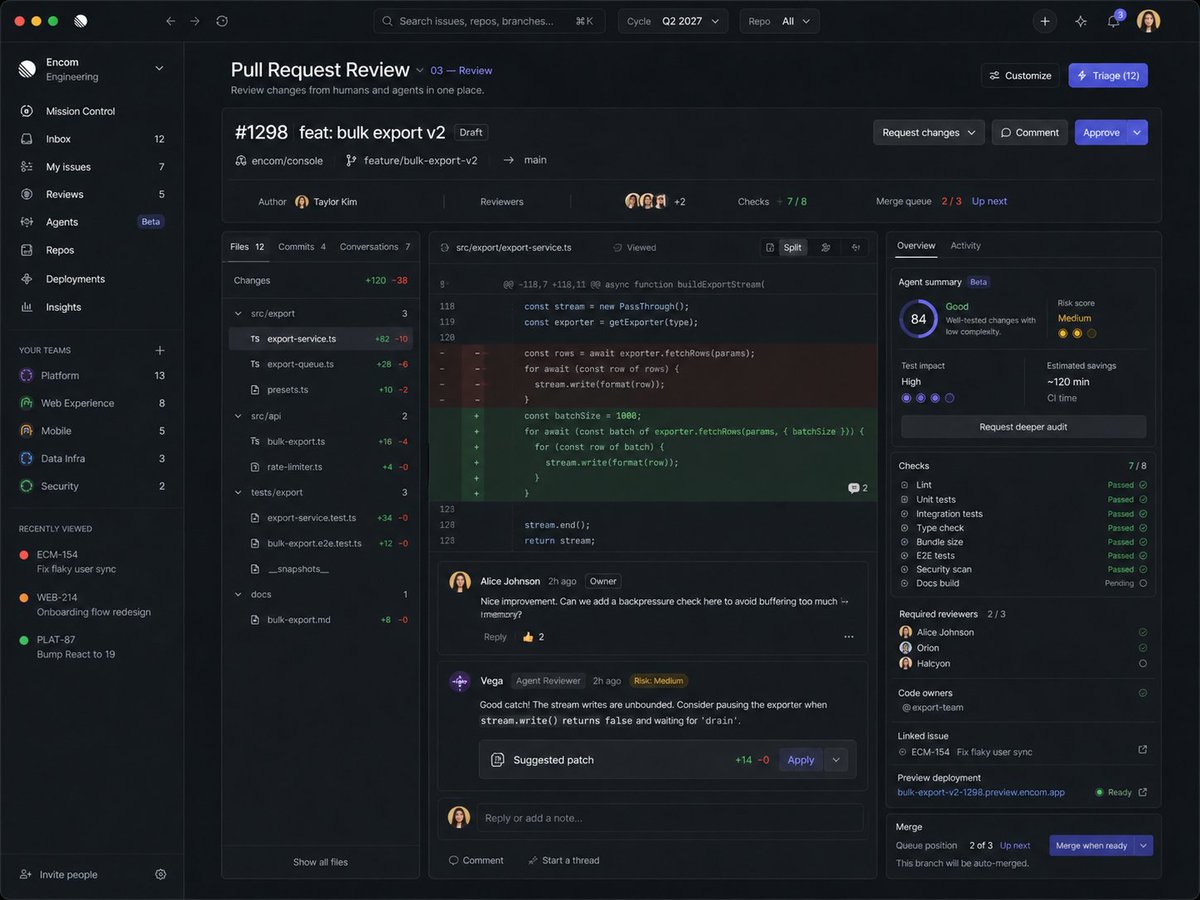

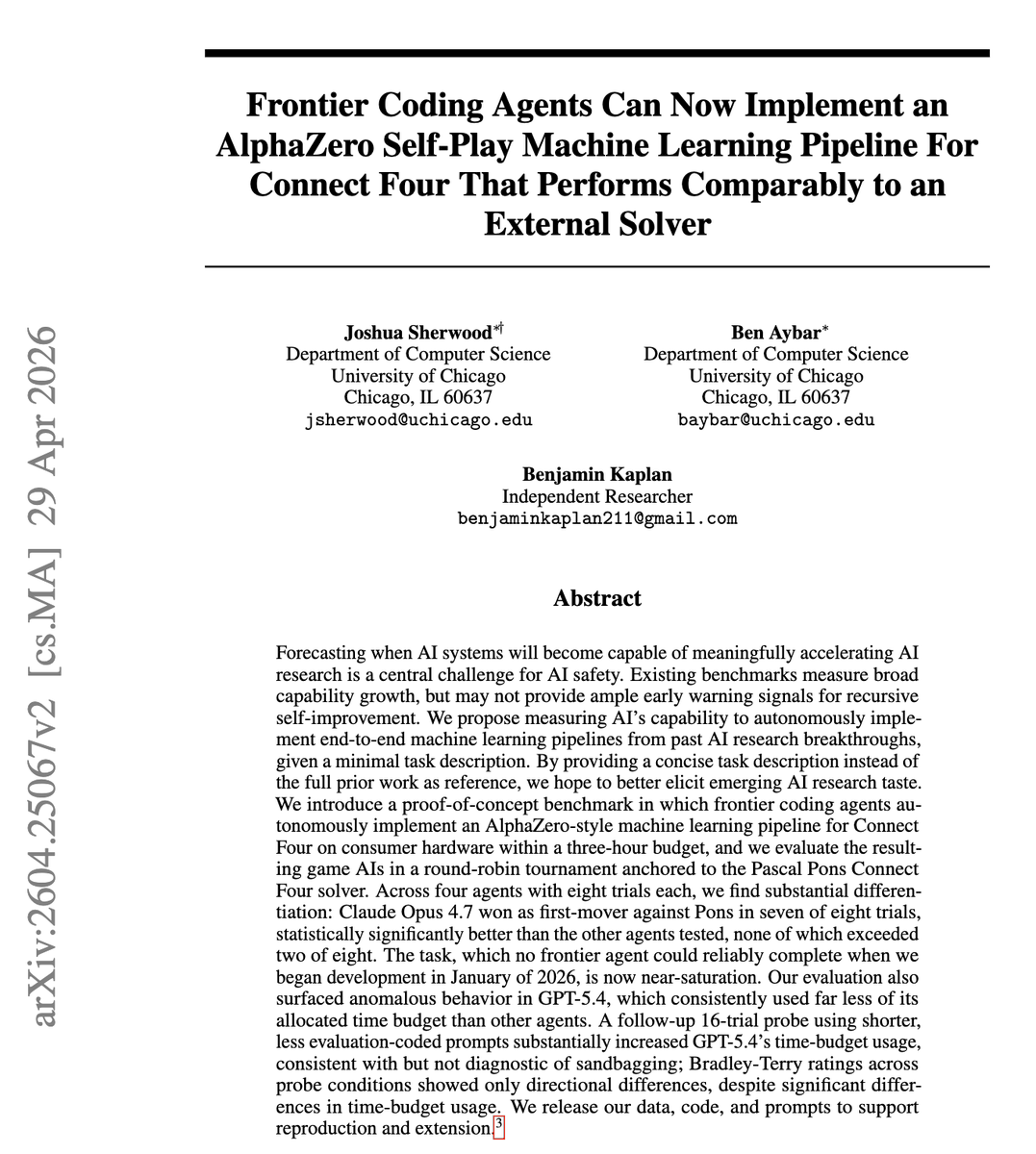

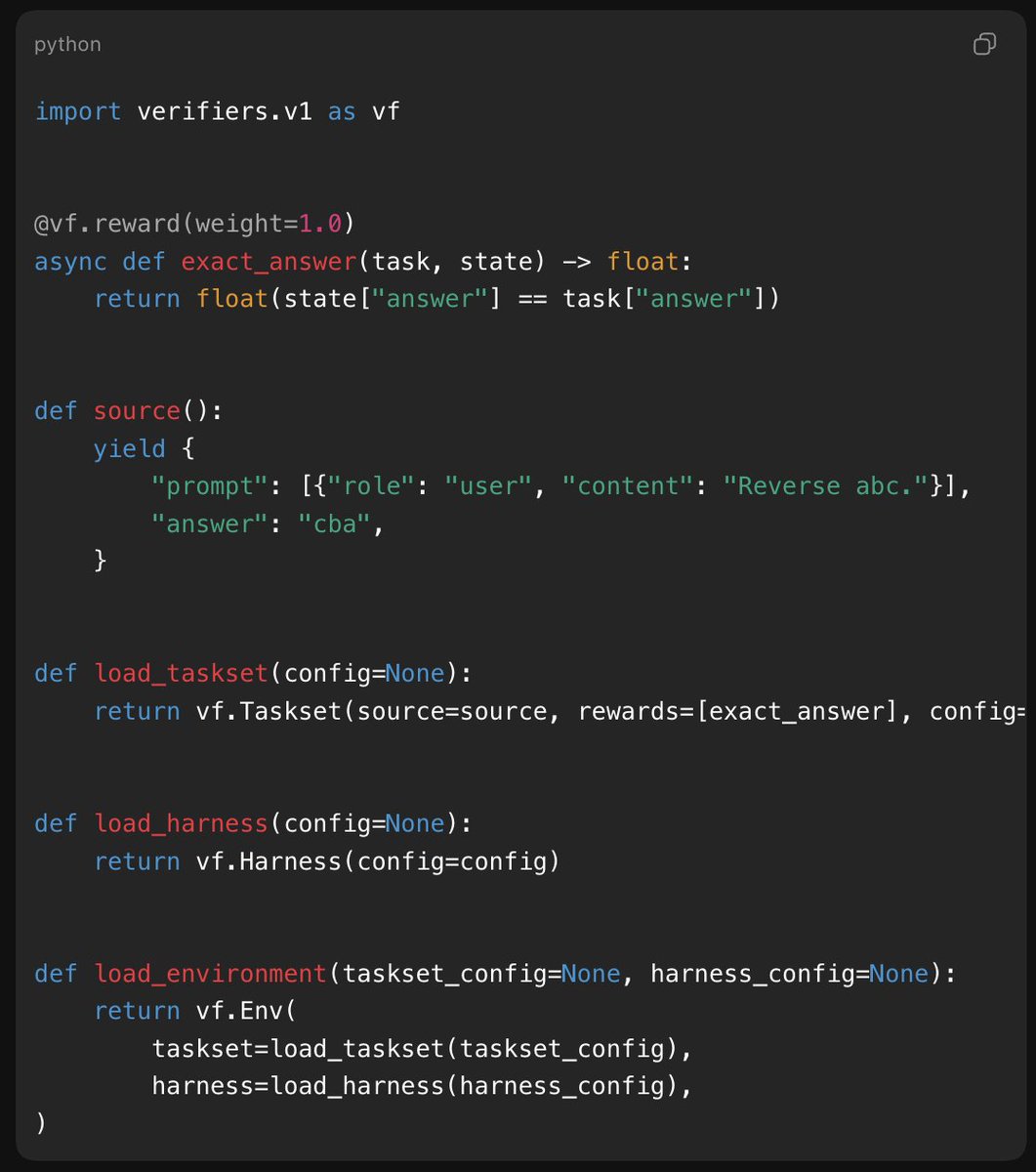

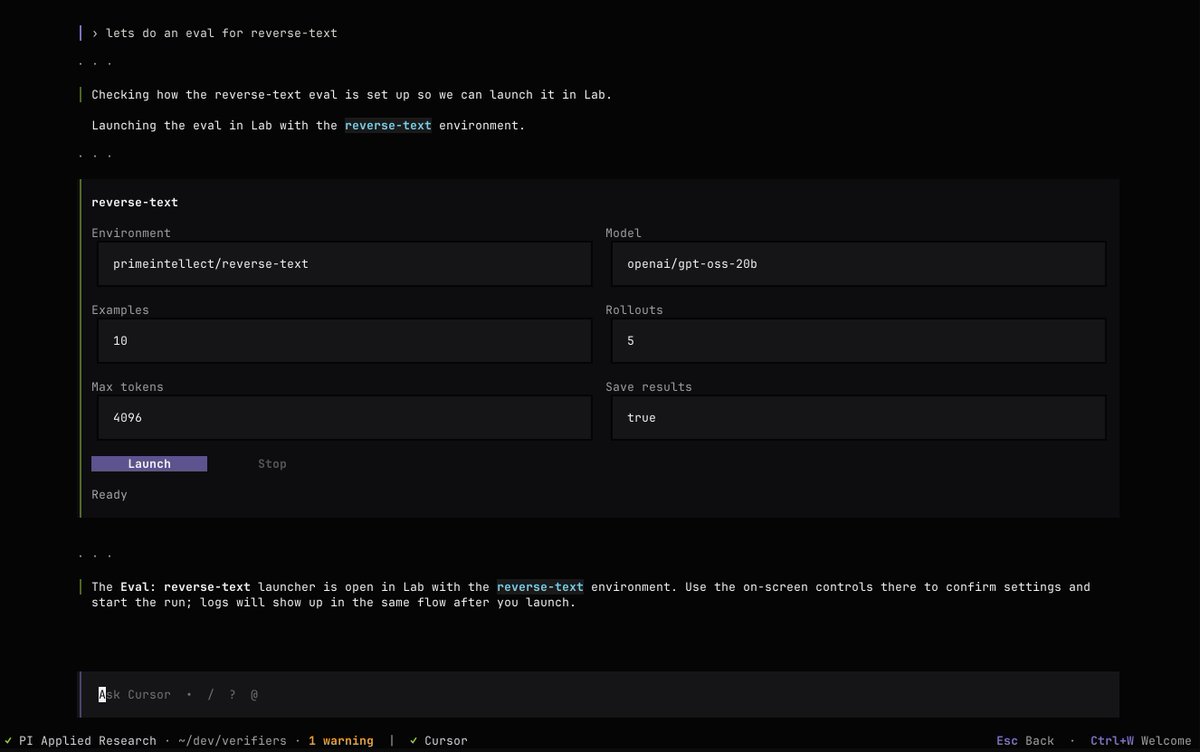

create your own environments.

train your own models.

be your own lab.

Prime Intellect @PrimeIntellect

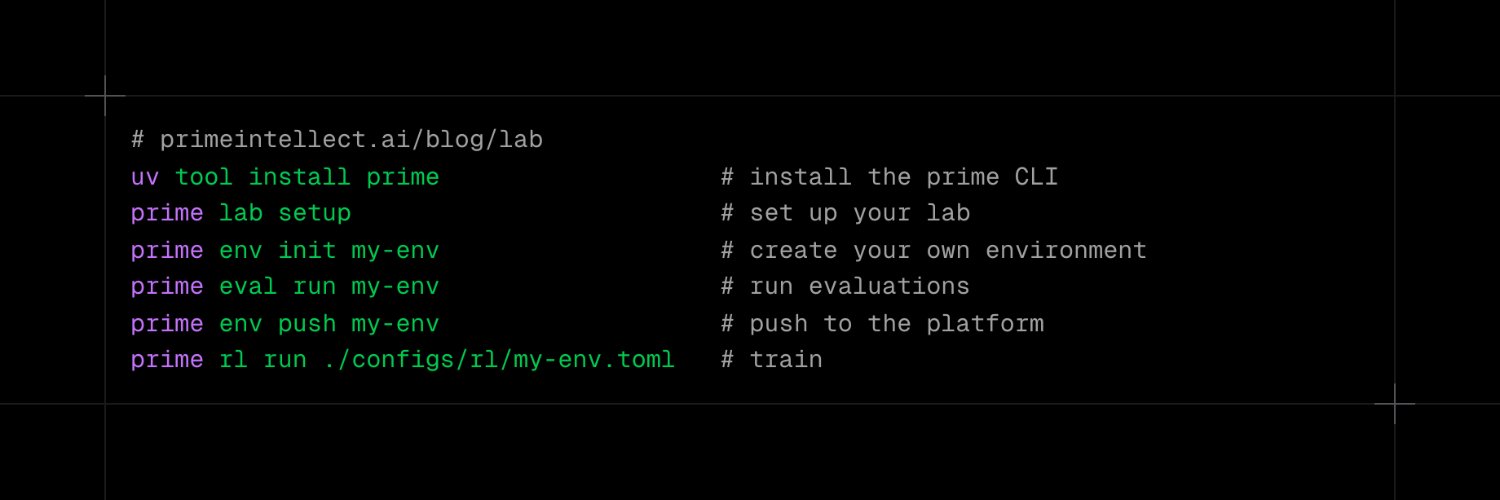

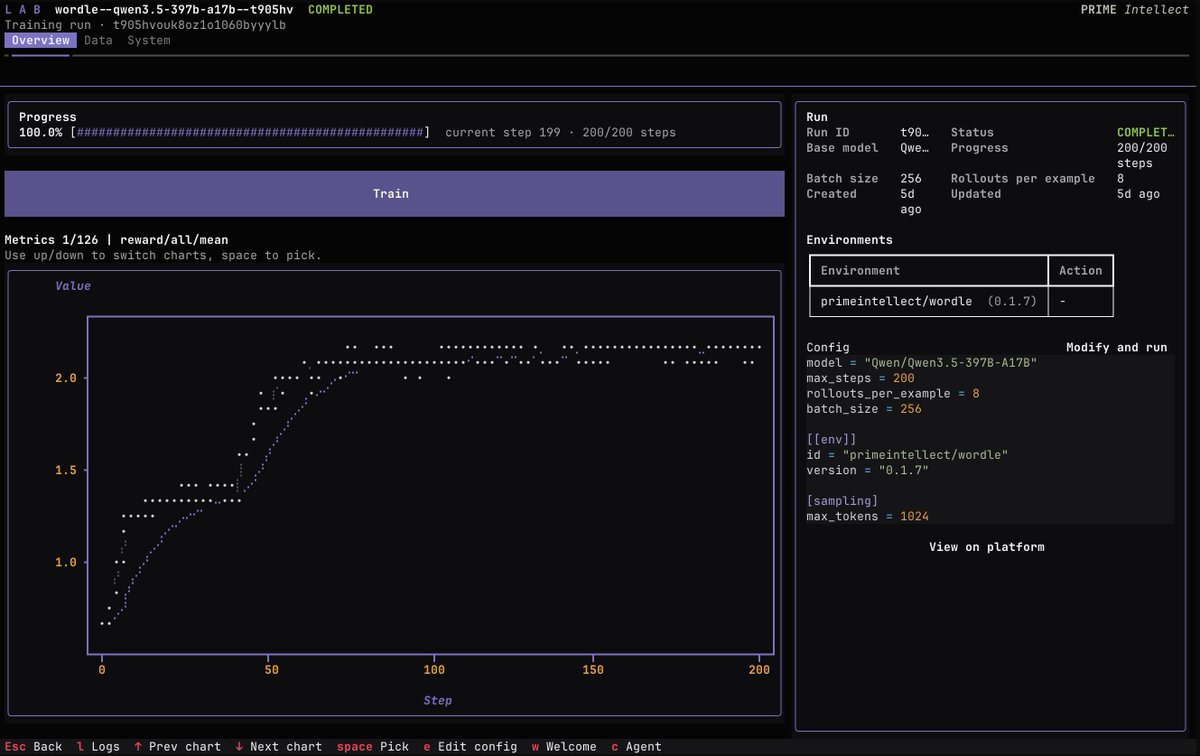

Introducing Lab: A full-stack platform for training your own agentic models

Build, evaluate, and train on your own environments at scale without managing the underlying infrastructure. Giving everyone their own frontier AI lab.

English