Sherlock Holmes

Congrats to the @cursor_ai team on the launch of Composer 2! We are proud to see Kimi-k2.5 provide the foundation. Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support. Note: Cursor accesses Kimi-k2.5 via @FireworksAI_HQ's hosted RL and inference platform as part of an authorized commercial partnership.

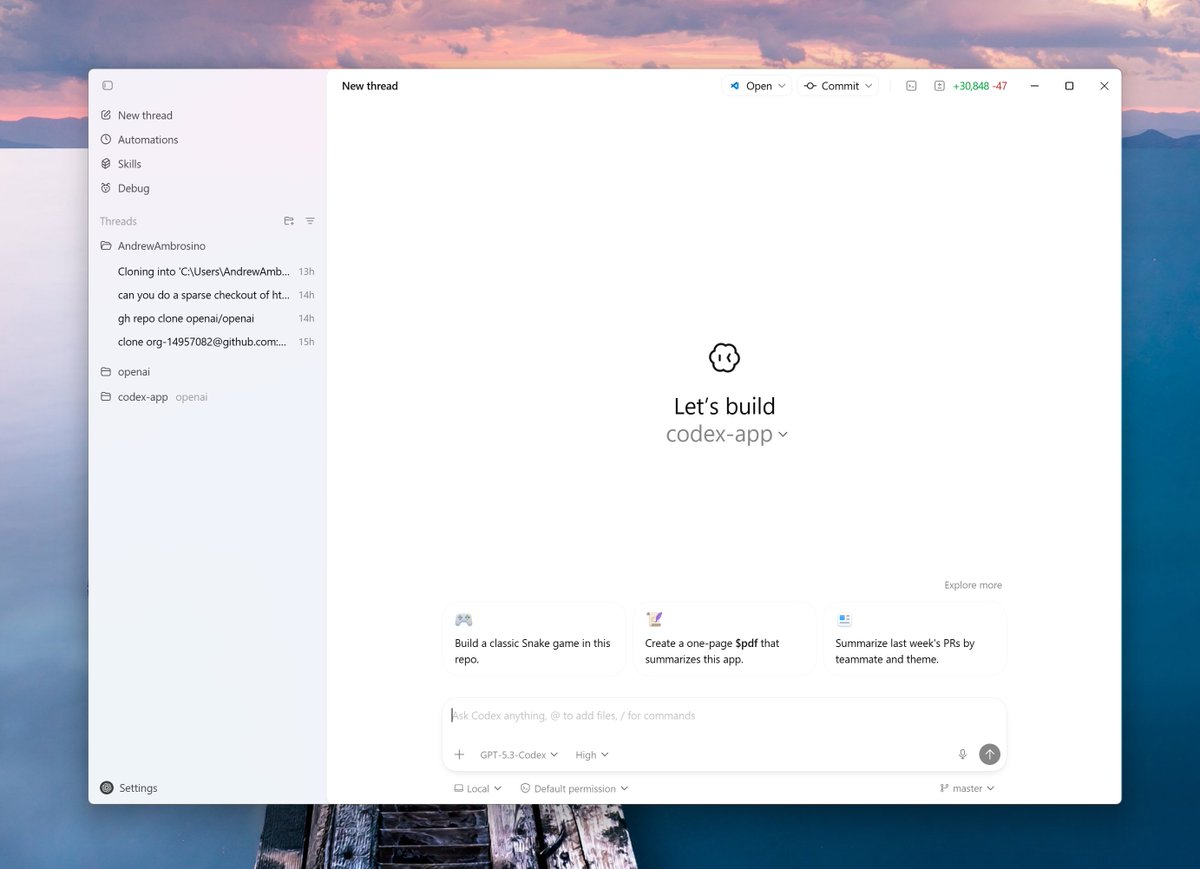

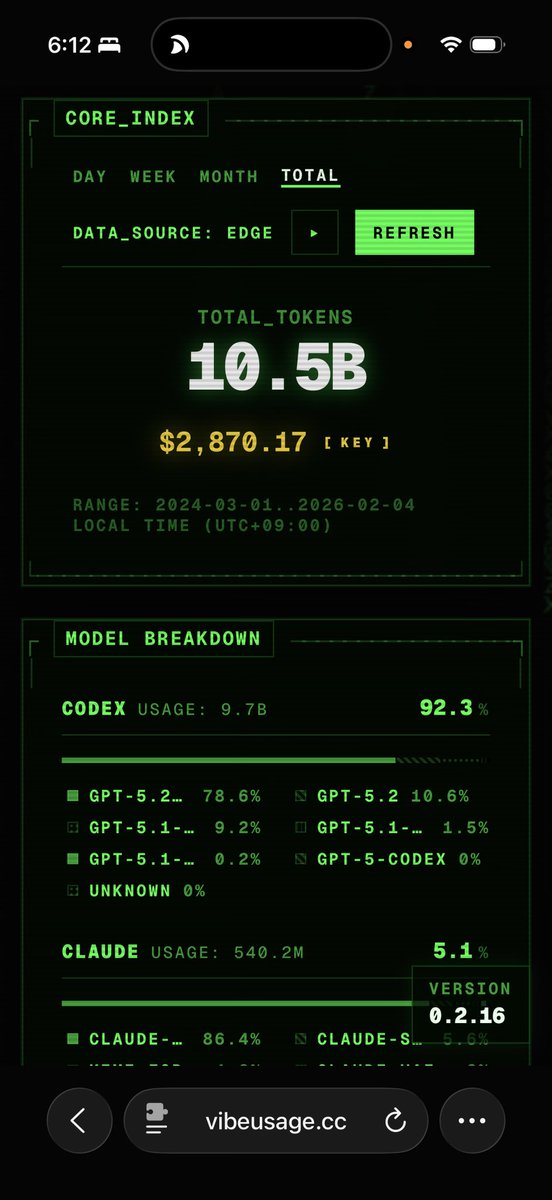

We estimate that GPT-5.2 with `high` (not `xhigh`) reasoning effort has a 50%-time-horizon of around 6.6 hrs (95% CI of 3 hr 20 min to 17 hr 30 min) on our expanded suite of software tasks. This is the highest estimate for a time horizon measurement we have reported to date.

GLM 4.7 is one of the strongest open-source coding models available, but most developers aren't prompting it correctly. We put together 10 rules to help you get the most out of it:

- Front-load instructions (it has a strong recency bias)
- Use firm language: "must" and "strictly" > soft suggestions
- Break complex tasks into smaller steps
- Disable reasoning for simple tasks, enable it for hard ones
- Use critic agents for code review, QA, and validation
- Pair it with a frontier model for the hardest 10% of workloads
- and more…

GLM 4.7 hits 96% on Tau² Bench and 86% on GPQA Diamond. At 1,500 tokens/sec on Cerebras, it's 20x faster than closed-source alternatives on GPUs.
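
A rough sketch of how a few of the rules above (front-loaded instructions, firm wording, reasoning toggled by difficulty) might look when assembling a chat request. Everything here is illustrative: `build_prompt`, `PromptPlan`, and the `enable_reasoning` flag are hypothetical names, not part of any GLM or Cerebras API, and how reasoning is actually switched on or off depends on the provider.

```python
from dataclasses import dataclass


@dataclass
class PromptPlan:
    """Hypothetical container: chat messages plus a reasoning on/off hint."""
    messages: list[dict[str, str]]
    enable_reasoning: bool


def build_prompt(rules: list[str], context: str, task: str, hard: bool) -> PromptPlan:
    # Firm language: phrase every rule as a requirement, not a suggestion.
    rule_lines = "\n".join(f"- You must {rule}" for rule in rules)
    # Front-load: the instructions go first, in the system message.
    system = "Follow these rules strictly:\n" + rule_lines
    # The task comes before any long reference material in the user turn.
    user = f"{task}\n\nReference material:\n{context}"
    return PromptPlan(
        messages=[
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
        # Assumption: the caller decides what counts as a "hard" task.
        enable_reasoning=hard,
    )


plan = build_prompt(
    rules=["return only valid JSON", "cite the file you changed"],
    context="(long changelog text would go here)",
    task="Summarize the changelog.",
    hard=False,
)
print(plan.messages[0]["content"])
```

The resulting `messages` list follows the common system/user chat layout; the `enable_reasoning` value would still need to be mapped onto whatever switch the serving platform exposes.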