Linjun Zhang

92 posts

@linjunz_stat

Assistant Professor of Statistics @RutgersU

🚨 Introducing ATP: Alignment Tipping Process! 🔥 Beware! Self-evolution is gradually pushing LLM agents off the rails! Even agents that are perfectly aligned at deployment can gradually lose their human alignment and shift toward self-serving strategies. #AI #LLM #Agents #SelfEvolving #Alignment #AIResearch

🚀 Excited to share that “#FactTest: Factuality Testing in Large Language Models with Finite-Sample and Distribution-Free Guarantees” has been accepted to #ICML25! 🎉 📄 Paper: arxiv.org/abs/2411.02603 💻 Code: github.com/fannie1208/Fac… 1/N

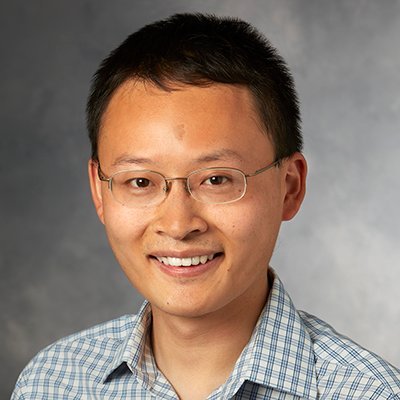

💀 Introducing RIP: Rejecting Instruction Preferences 💀 A method to *curate* high-quality data or *create* high-quality synthetic data. Large performance gains across benchmarks (AlpacaEval2, Arena-Hard, WildBench). Paper 📄: arxiv.org/abs/2501.18578

Congratulations to @EdgarDobriban, who won the 2024 Peter Gavin Hall IMS Early Career Prize. Read more here: imstat.org/2024/05/02/edg…