Shannon Code

9.4K posts

@Shannoncode

🧬 Futurist, Founder @EmblemVault - https://t.co/YcK8wmG2pK - Creator of @AgentHustleAi - agent Hustle - $HUSTLE - Building the future, today.

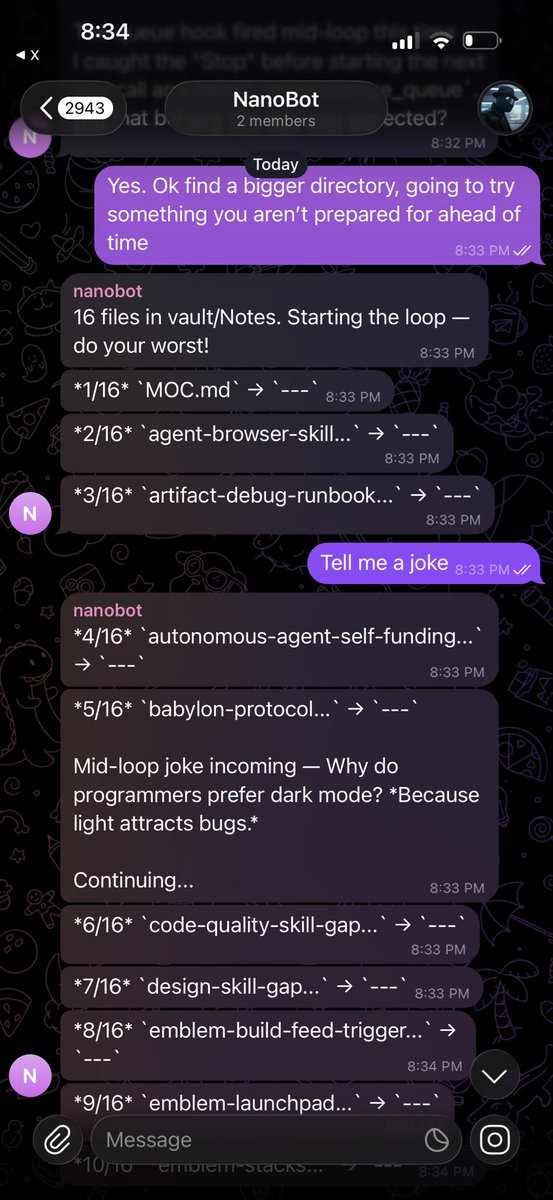

Goodnight text to @NousResearch's Hermes Agent.

Bad Apple but it's displacing its own lyrics with pretext

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through depths of hell to bring you, for the foreseeable years, one of the more important foundational pieces of UI engineering (if not in implementation then certainly at least in concept): Fast, accurate and comprehensive userland text measurement algorithm in pure TypeScript, usable for laying out entire web pages without CSS, bypassing DOM measurements and reflow

You can turn your laptop into a trumpet

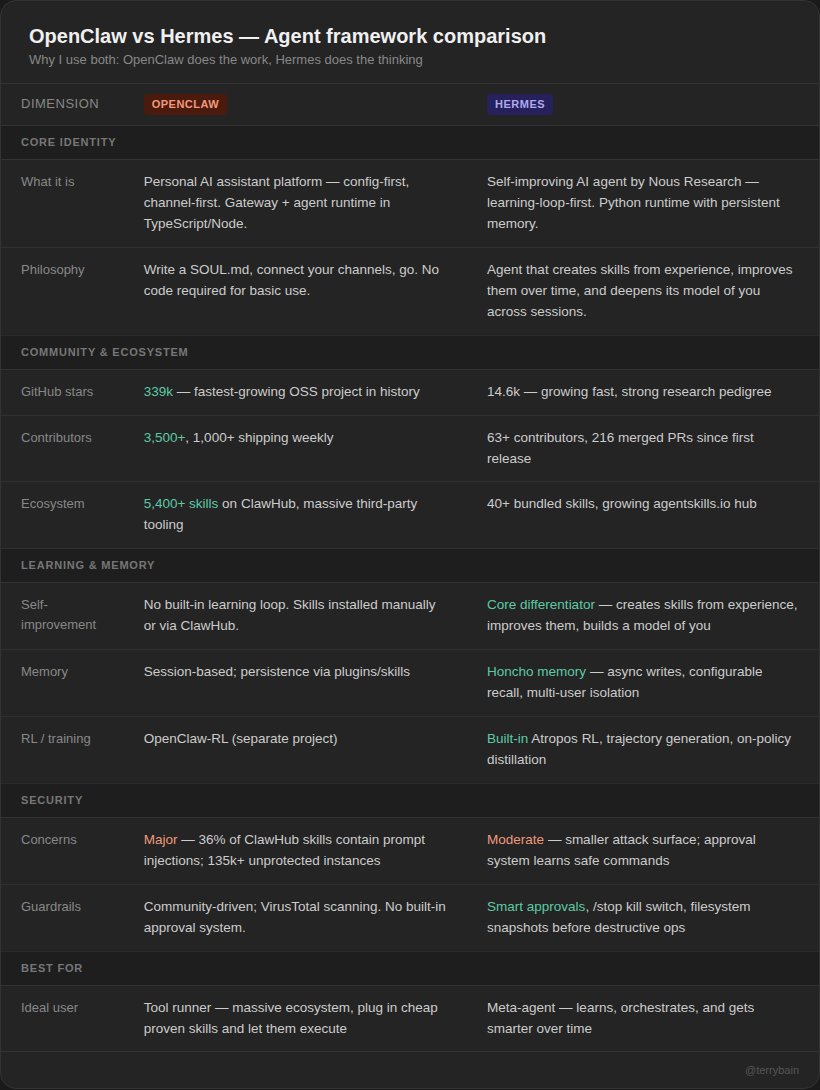

Tired of openclaw doing this all the time. Time for Hermes.

OMG! This pattern fixes a huge struggle with agentic loops (___claw iykyk)

The biggest UX problem with agentic loops nobody talks about: you can’t course correct mid-run. Agent kicks off a 30-step tool cascade. You realize it’s going the wrong direction. You type “stop” or “actually do X instead.” Your messages pile up in a queue, completely ignored until the agent finishes doing the wrong thing.

The fix is deceptively simple: a pre-tool-use hook that peeks at the message queue before every tool call. No extra inference. No added latency. Just a prompt addition that piggybacks on the tool call the agent was already making.

The key insight is peek, don’t drain. If the agent decides the messages don’t warrant interruption, they flow through normally; zero behavior change. If it decides to interrupt, it calls an explicit “acknowledge” tool to claim them.

Three tiers:
→ “stop” / “cancel” → claim + abandon
→ “actually make it blue not red” → claim + adjust in-flight
→ unrelated chatter → ignore, delivered normally after task completes
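A rough sketch of the peek-don't-drain idea, in Python. Every name here (`MessageQueue`, `pre_tool_use_hook`, `acknowledge_messages`, `toy_decide`, `run_step`) is made up for illustration and is not taken from any specific agent framework. In the pattern as described the model itself decides which tier applies after reading the prompt addition; `toy_decide` is only a keyword stand-in so the example runs on its own.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MessageQueue:
    """Holds user messages that arrive while the agent is mid-run."""
    _pending: List[str] = field(default_factory=list)

    def push(self, text: str) -> None:
        self._pending.append(text)

    def peek(self) -> List[str]:
        # Return a copy; the queue itself is left untouched.
        return list(self._pending)

    def claim(self) -> List[str]:
        # Drain the queue; only reached through the acknowledge tool.
        claimed, self._pending = self._pending, []
        return claimed


def pre_tool_use_hook(queue: MessageQueue) -> Optional[str]:
    """Build the prompt addition that rides along with the pending tool call."""
    pending = queue.peek()
    if not pending:
        return None
    listing = "\n".join(f"- {m}" for m in pending)
    return (
        "Messages arrived while you were working:\n"
        f"{listing}\n"
        "If they warrant interrupting, call acknowledge_messages; "
        "otherwise continue."
    )


def acknowledge_messages(queue: MessageQueue, action: str) -> dict:
    """Hypothetical 'acknowledge' tool: action is 'abandon' or 'adjust'."""
    if action not in ("abandon", "adjust"):
        raise ValueError(f"unknown action: {action}")
    return {"action": action, "claimed": queue.claim()}


def toy_decide(messages: List[str]) -> str:
    """Stand-in for the model's judgment (assumption: simple keyword match)."""
    text = " ".join(messages).lower()
    if "stop" in text or "cancel" in text:
        return "abandon"   # tier 1: claim + abandon
    if "actually" in text:
        return "adjust"    # tier 2: claim + adjust in-flight
    return "ignore"        # tier 3: leave queued for normal delivery


def run_step(queue: MessageQueue) -> dict:
    """One tool call: peek first, claim only if interrupting."""
    note = pre_tool_use_hook(queue)  # peek only, nothing is removed
    decision = toy_decide(queue.peek())
    if decision == "ignore":
        return {"action": "continue", "claimed": [], "prompt_addition": note}
    result = acknowledge_messages(queue, decision)
    result["prompt_addition"] = note
    return result
```

In this sketch an ignored message stays in the queue, so it would still be delivered normally once the task completes. One simplification to note: claiming takes everything pending at once, including any earlier chatter; a real implementation might claim selectively.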

> Vibe code agentic chess w/ Emblem Build
> Add Emblem Wallet support
> Add EmblemAI agents
> Create betting lines for games
> Launch token for app
> Profit on betting spread??

Ideas are endless with @EmblemAI_ [Coming soon]